Rankings vs Citations in AI Search define two different visibility systems that determine how content appears in search engines and Large Language Models (LLMs). Search engine rankings order full documents on a SERP by keyword relevance, backlinks, and authority signals. AI citations select specific fragments for inclusion inside AI-generated answers based on entity alignment, fan-out query coverage, and contextual confidence. The core distinction is document ranking versus answer citation, evaluated through visibility, traffic, authority, and attribution.

Traditional SEO rankings drive scalable traffic because a higher SERP position increases click-through rates and session volume. AI citations drive embedded authority because inclusion inside AI Overviews or LLM responses increases brand presence even without a click. 76% of AI Overview citations originate from the top 10 organic results, which shows a strong correlation. However, 68% of cited pages do not rank in the top 10 for their head term, which indicates a partial decoupling between SERP rankings and LLM citations.

Search rankings and AI Citations differ in selection logic and performance mechanics. SEO prioritizes keyword-specific authority and backlink profiles. AEO prioritizes topical authority, freshness, internal linking, and fan-out query depth. Pages ranking for related fan-out queries experience a 161% increase in citation odds, and the Spearman correlation between fan-out coverage and citation inclusion equals 0.77. AI trigger rates average 76%, which increases the importance of citation optimization for research-heavy queries.
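A Spearman correlation measures how closely two rankings agree, not how large an effect is. The minimal Python sketch below shows the computation, assuming hypothetical per-page data: the number of fan-out queries each page ranks for, and how often each page was cited. The numbers are invented for illustration and are not the study's data.

```python
def average_ranks(values):
    """Assign 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the ranks of x and y."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    spread_x = sum((a - mean_x) ** 2 for a in rx) ** 0.5
    spread_y = sum((b - mean_y) ** 2 for b in ry) ** 0.5
    return cov / (spread_x * spread_y)


# Hypothetical pages: fan-out queries each page ranks for, and times it was cited.
fan_out_coverage = [2, 5, 1, 8, 4, 7]
citations = [1, 3, 0, 6, 4, 5]
print(round(spearman(fan_out_coverage, citations), 2))  # prints 0.94
```

Spearman's rho is the Pearson correlation of the ranks. Averaging tied ranks keeps the result well defined when several pages share the same count.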

“Better” depends on the objective. Choose SEO rankings for transactional intent, brand navigation, and predictable traffic growth. Choose AI citations for research topics, competitive displacement, and shortlist visibility inside AI-generated answers. Combine both to maximize SERP real estate and LLM presence. Rankings remain the primary traffic engine. Citations increasingly define authority inside AI-driven discovery.

What Does Ranking In Traditional Search Mean?

Ranking in traditional search is a computational information retrieval process that orders web pages by estimated relevance and authority in response to a user query. Ranking in traditional search creates a visible hierarchy of results. This hierarchy appears on Search Engine Results Pages (SERPs) as blue links, featured snippets, and structured result blocks.

What does ranking represent in search engine systems? Ranking represents the ordering of documents by relevance signals and authority signals within a competitive index. Ranking evaluates indexed pages against a query. Ranking assigns position values that determine visibility and click probability. Position 1 receives higher click-through rates because a higher placement increases exposure.

What is Search Engine Ranking in technical terms? Search Engine Ranking is a scoring and sorting mechanism inside an information retrieval system. Search Engine Ranking scores documents using algorithmic models. Search Engine Ranking sorts documents into descending order of relevance. This ordering distinguishes Search Engine Ranking from generative AI because ranking presents multiple candidate pages instead of one synthesized answer.

Where did ranking in traditional search originate? Ranking in traditional search originated with the PageRank algorithm developed in 1996 by Larry Page and Sergey Brin. PageRank introduced link analysis as an authority scoring model. Link analysis treated backlinks as votes that define web hierarchy. Google adopted these ranking signals in 1998 and established position-based competition across SERPs.
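The idea of backlinks as "votes" can be sketched with the textbook PageRank power iteration. This is a minimal illustration under stated assumptions. The four-page graph is hypothetical. The damping factor of 0.85 is the value commonly cited from the original PageRank paper. Google's production ranking is far more complex and is not public.

```python
def pagerank(links, damping=0.85, iterations=200, tol=1e-12):
    """Textbook PageRank computed by power iteration.

    links maps each page to the pages it links to. Pages without outlinks
    (dangling pages) spread their score evenly across all pages.
    """
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        dangling = sum(rank[p] for p in pages if not links.get(p))
        # Every page starts with the random-jump share plus its cut of dangling score.
        new = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for page, targets in links.items():
            if targets:
                # Each outlink passes an equal share of the page's score (a "vote").
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new[target] += share
        delta = sum(abs(new[p] - rank[p]) for p in pages)
        rank = new
        if delta < tol:
            break
    return rank


# Hypothetical four-page web: most pages link to "hub".
web = {"a": ["hub"], "b": ["hub", "a"], "c": ["hub"], "hub": ["a"]}
scores = pagerank(web)
for page, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(page, round(score, 3))
```

Pages "b" and "c" have no inbound links, so each keeps only the random-jump share (1 - 0.85) / 4 = 0.0375. The page "hub" collects the most votes and ends up with the highest score.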

What are the major components of traditional ranking systems? There are 4 major components of traditional ranking systems. The components are listed below.

- Link analysis (1996). Link analysis evaluates backlink quantity and backlink quality as authority endorsements. PageRank calculates link equity to define document importance.

- Keyword relevance models (1970s to present). Keyword relevance models (TF-IDF, BM25) measure term frequency against corpus frequency. These models align query terms with page content.

- Semantic understanding engines (2015 to present). Semantic understanding engines (BERT, RankBrain) interpret context and synonym relationships. These engines decode search intent beyond exact keyword matching.

- User behavior metrics (2000s to present). User behavior metrics (CTR, dwell time) measure engagement signals. Engagement signals refine ranking positions through interaction data.
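The keyword relevance models in the list above can be illustrated with Okapi BM25. The minimal sketch below rests on several assumptions: whitespace tokenization, the typical parameter values k1 = 1.5 and b = 0.75 (k1 is usually set between 1.2 and 2.0), and the non-negative IDF variant used by Lucene. Production search engines combine scores like these with many other signals.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each tokenized document against a tokenized query with Okapi BM25.

    Uses the non-negative IDF variant log(1 + (N - df + 0.5) / (df + 0.5)).
    """
    n = len(docs)
    avgdl = sum(len(d) for d in docs) / n
    # Document frequency: number of documents containing each term.
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for doc in docs:
        tf = Counter(doc)
        score = 0.0
        for term in query:
            if tf[term] == 0:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            # Saturating term frequency, normalized by document length.
            norm = tf[term] + k1 * (1 - b + b * len(doc) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


docs = [
    "ai citations select passages for answers".split(),
    "search rankings order full documents".split(),
    "backlinks and rankings in search engines".split(),
]
query = "search rankings".split()
scores = bm25_scores(query, docs)
print([round(s, 3) for s in scores])
```

The second document outscores the third because it is shorter and BM25 normalizes term frequency by document length. The first document scores zero because it contains neither query term.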

What are the key characteristics of traditional ranking? There are 3 key characteristics of traditional ranking. The characteristics are listed below.

- Position-based performance. Position-based performance uses Information Retrieval metrics (NDCG, MAP) to evaluate ranking quality. A 62-query study recorded scores of 5.83 for traditional ranking versus 5.19 for AI on informational queries.

- Specialized retrieval accuracy. Specialized retrieval accuracy remains higher in indexed systems. A medical study recorded 40% relevant results for Bing Chat versus 0.5% for ChatGPT search.

- Layout and pixel volatility. Layout volatility affects click distribution across SERPs. Pixel distance influences CTR more than numeric rank when ads and AI Overviews shift results below the fold.
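NDCG, one of the metrics named above, rewards placing highly relevant results near the top. The minimal sketch below assumes hypothetical graded relevance labels and the linear-gain form of DCG. Some evaluations use an exponential gain (2^rel - 1) instead.

```python
import math


def dcg(relevances, k):
    """Discounted cumulative gain with linear gain over the top-k labels."""
    # Position 0 is discounted by log2(2) = 1, position 1 by log2(3), and so on.
    return sum(rel / math.log2(pos + 2) for pos, rel in enumerate(relevances[:k]))


def ndcg(relevances, k):
    """DCG of the observed order divided by DCG of the ideal (descending) order."""
    ideal = dcg(sorted(relevances, reverse=True), k)
    return dcg(relevances, k) / ideal if ideal > 0 else 0.0


# Hypothetical graded judgments for one SERP, in ranked order (3 = highly relevant).
serp = [3, 2, 3, 0, 1, 2]
print(round(ndcg(serp, k=5), 3))
```

A score of 1.0 means the results already appear in the ideal order. Pushing relevant pages down lowers the score.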

What relationships does ranking in traditional search form in the digital ecosystem? Ranking in traditional search forms dependencies, enablement structures, and competitive interfaces within the digital ecosystem. Ranking depends on a comprehensive web index and large-scale crawling systems. Google maintains approximately 90% global market share in traditional search. Ranking drives the SEO industry because traffic declines follow ranking losses.

How does ranking in traditional search compete with AI systems? Ranking in traditional search competes with Retrieval-Augmented Generation (RAG) and zero-click result formats. RAG performs implicit ranking to select sources for synthesis. Featured Snippets and Knowledge Cards reduce click necessity through on-page answers. Traditional ranking remains dominant because 90% of global search users rely on ranked interfaces. AI systems are projected to process 25% to 30% of simple queries by 2026, yet traditional ranking remains primary for complex informational and specialized research tasks.

How Do Traditional Search Rankings Work?

Traditional search rankings work by matching a query to indexed documents, scoring those documents, ordering them by relevance and authority, and continuously re-evaluating positions. Traditional search rankings operate inside a large search index that stores hundreds of billions of pages. The ranking system converts a user query into machine-readable signals and compares those signals against indexed content.

How does query matching function in traditional search rankings? Query matching aligns user queries with indexed documents through keyword models and semantic models. Query matching evaluates literal terms using weighting systems (TF-IDF, BM25). Semantic models (BERT, RankBrain, Neural Matching) interpret context and intent. This alignment connects query language to document meaning rather than exact string repetition.

How does scoring and ordering determine final positions? Scoring and ordering assign numerical relevance scores and sort documents in descending order. Scoring evaluates page-level signals and site-level signals inside core ranking systems (Panda, Penguin, Helpful Content System). Link analysis through PageRank calculates authority weight across the link graph. The ranking engine orders results based on combined relevance and authority scores.
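The combined ordering described above can be sketched as a weighted sum of a relevance score and an authority score, followed by a descending sort. The `Candidate` class, the 0.7/0.3 weights, and the example URLs are hypothetical. The real weighting inside Google's core systems is not public.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    relevance: float  # hypothetical normalized query-match score in [0, 1]
    authority: float  # hypothetical normalized link-based score in [0, 1]


def rank(candidates, w_relevance=0.7, w_authority=0.3):
    """Return candidates sorted by a weighted relevance + authority score, best first."""
    def combined(c):
        return w_relevance * c.relevance + w_authority * c.authority
    return sorted(candidates, key=combined, reverse=True)


results = rank([
    Candidate("https://example.com/guide", relevance=0.9, authority=0.4),
    Candidate("https://example.org/news", relevance=0.6, authority=0.9),
    Candidate("https://example.net/forum", relevance=0.7, authority=0.2),
])
for position, c in enumerate(results, start=1):
    print(position, c.url)

# Weighting authority more heavily changes the order.
reweighted = rank(results, w_relevance=0.3, w_authority=0.7)
print(reweighted[0].url)
```

Raising `w_authority` lets the more authoritative but less relevant page overtake the guide. This mirrors how a page loses position when competing pages gain stronger authority signals.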

How does competitive positioning influence rankings? Competitive positioning determines visibility because each document competes against all other indexed documents for the same query. Competitive positioning compares signal strength across domains in real time. A document moves upward when its relevance score exceeds competing pages. A document moves downward when competing pages gain stronger authority or freshness signals.

How does continuous re-evaluation maintain ranking accuracy? Continuous re-evaluation recalculates scores as new content enters the index and signals change. Continuous re-evaluation updates rankings after crawling, indexing, and signal recalibration. Freshness systems prioritize recent documents for time-sensitive queries. Deduplication systems and SpamBrain filters adjust visibility to remove repetition and policy violations.
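Deduplication can be illustrated with a common textbook technique that compares word shingles using Jaccard similarity. The sketch assumes 3-word shingles, a 0.5 similarity threshold, and hypothetical example texts. It does not describe Google's actual deduplication system, which is not public.

```python
def shingles(text, k=3):
    """Set of overlapping k-word sequences from lowercased, whitespace-split text."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a, b):
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def deduplicate(docs, threshold=0.5):
    """Keep each document unless it is a near-duplicate of one already kept."""
    kept, signatures = [], []
    for doc in docs:
        sig = shingles(doc)
        if all(jaccard(sig, other) < threshold for other in signatures):
            kept.append(doc)
            signatures.append(sig)
    return kept


docs = [
    "AI citations select passages for synthesized answers",
    "AI citations select passages for synthesized answers today",
    "Search rankings order full documents on a results page",
]
kept = deduplicate(docs)
print(kept)
```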

How do integrated ranking systems coordinate these processes? Integrated ranking systems unify query analysis, scoring, and filtering inside a core architecture. Panda, integrated in 2015, evaluates content quality. Penguin was integrated in 2016 to evaluate link integrity. Helpful Content System, integrated in March 2024, evaluates originality and expertise. This unified structure ensures that query matching, scoring, competitive positioning, and re-evaluation operate within one ranking framework.

What Does Citation In AI Search Mean?

Citation in AI search means source selection inside synthesized answers generated by AI systems. Citation in AI search attributes specific web sources within AI-generated responses. Citation in AI search appears in AI Overviews, answer engines, and Large Language Model (LLM) responses.

What is Citation in AI Search in technical terms? Citation in AI Search is a probabilistic attribution mechanism based on semantic embeddings and statistical weighting. Citation in AI Search selects documents during Retrieval-Augmented Generation (RAG). Citation in AI Search blends pre-trained model knowledge with indexed web data to ground responses in verifiable sources.
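The retrieval step of RAG can be sketched as cosine similarity between a query embedding and passage embeddings. The top matches that clear a confidence threshold are kept as citable sources. The 3-dimensional vectors, URLs, `k`, and `min_score` values below are hypothetical stand-ins. Real systems obtain high-dimensional vectors from an embedding model and apply their own selection rules.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def retrieve(query_vec, passages, k=2, min_score=0.75):
    """Return up to k (source, similarity) pairs that clear min_score, best first."""
    scored = [(source, cosine(query_vec, vec)) for source, vec in passages]
    eligible = [pair for pair in scored if pair[1] >= min_score]
    return sorted(eligible, key=lambda pair: pair[1], reverse=True)[:k]


# Toy 3-dimensional "embeddings" standing in for real embedding-model output.
passages = [
    ("https://example.com/rag-explainer", [0.9, 0.1, 0.2]),
    ("https://example.org/seo-history", [0.1, 0.9, 0.3]),
    ("https://example.net/citation-guide", [0.7, 0.4, 0.1]),
]
query = [1.0, 0.2, 0.1]
for source, score in retrieve(query, passages):
    print(f"cite {source} (similarity {score:.2f})")
```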

Where do citations in AI search appear? Citations in AI search appear in AI Overviews, answer engines, and conversational LLM interfaces. AI Overviews display citations as expandable source links above traditional blue links. Answer engines (Perplexity, Microsoft Copilot) present citations in source panels. LLM responses (ChatGPT Search, Gemini) embed citations as clickable references or inline source markers.

How did citations in AI search emerge? Citation in AI search emerged with the integration of Retrieval-Augmented Generation and AI-native search platforms. Perplexity AI launched in 2022 with citation-based answers. Microsoft Copilot, first released as Bing Chat, launched in 2023 with web-grounded synthesis. Google tested generative answers as the Search Generative Experience in 2023 and launched AI Overviews in 2024. AI Overviews expanded from 6.49% query presence in January 2025 to over 50% by October 2025.

How does citation in AI search differ from traditional search links? Citation in AI search differs from traditional search links because citation selects fragments for answer construction instead of ranking full documents. Only 12% of AI-cited links overlap with Google’s top 10 organic results. Citation in AI search prioritizes semantic relevance and topical authority over backlink profiles and keyword density.

What are the major forms of citation in AI search? There are 4 major forms of citation in AI search. The forms are listed below.

- Source references (2022). Source references display URLs in a works cited list or expandable citation panel. Wikipedia recorded 1.1 million AI mentions while page views declined by 8%.

- Name-drop mentions (2023). Name-drop mentions insert brand or product names inside narrative responses. Name-drop mentions increase brand recall in zero-click environments.

- Quoted passages (2023). Quoted passages extract verbatim text from a source domain. Quoted passages prioritize structured content with clear headings and bullet formatting.

- Synthesized mentions (2024). Synthesized mentions reference source insights without explicit hyperlinks. Synthesized mentions increase Share of Voice without direct click attribution.

What are the key characteristics of citation in AI search? There are 3 key characteristics of citation in AI search. The characteristics are listed below.

- Ranking independence. Approximately 80% of cited pages do not rank in traditional top-tier results. Citation selection depends on semantic depth and entity relevance.

- Technical optimization. Citation visibility depends on machine-readable signals (Schema markup – FAQ, HowTo, Article). AI systems prioritize E-E-A-T signals and original research.

- Temporal sensitivity. Citation systems prioritize recency to reduce hallucination risk. Citation frequency fluctuates based on real-time weighting and model recalibration.

What relationships does citation in AI search form in digital ecosystems? Citation in AI search forms dependencies, enablement effects, and competitive dynamics within AI-driven discovery. Citation in AI search depends on structured data (Schema.org), high-quality indexing, and semantic alignment. Citation in AI search enables zero-click brand visibility inside AI interfaces. Citation in AI search competes with traditional search results and paid search placements for attention and attribution.

Why does citation in AI search matter for visibility? Citation in AI search matters because AI Overviews reached over 50% query penetration by October 2025. Citation in AI search shifts performance measurement from traffic volume to Citation Rate and Share of Voice. Citation in AI search redefines visibility from ranked position to answer inclusion within AI-generated summaries.
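Citation Rate and Share of Voice have no single standard definition. The minimal sketch below assumes that citation rate is the share of sampled AI answers citing a domain at least once. It also assumes that share of voice is that domain's share of all citations in the sample. The sampled answers are hypothetical.

```python
from collections import Counter


def citation_metrics(answers, brand_domain):
    """Compute (citation rate, share of voice) for one domain.

    answers: list of lists, each holding the domains cited in one AI answer.
    """
    cited_in = sum(1 for cited in answers if brand_domain in cited)
    counts = Counter(domain for cited in answers for domain in cited)
    total = sum(counts.values())
    rate = cited_in / len(answers) if answers else 0.0
    share = counts[brand_domain] / total if total else 0.0
    return rate, share


# Hypothetical sample: domains cited in four AI answers to tracked prompts.
sampled = [
    ["example.com", "wikipedia.org"],
    ["reddit.com", "wikipedia.org"],
    ["example.com", "reddit.com", "youtube.com"],
    ["wikipedia.org"],
]
rate, share = citation_metrics(sampled, "example.com")
print(f"citation rate {rate:.0%}, share of voice {share:.0%}")
```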

How Do AI Systems Choose Sources To Cite?

AI systems choose sources to cite through a selection process that evaluates entity relevance, contextual alignment, authority validation, and redundancy avoidance, and that grants eligibility without ordering sources. AI systems execute retrieval before generation. AI systems select eligible fragments rather than ranking full documents.

How does entity relevance influence citation selection? Entity relevance determines whether a source matches the named entities and attributes inside a query. Entity relevance compares query entities with document entities using semantic embeddings. Strong entity mapping increases retrieval probability because ambiguous entities lower confidence scores below retrieval thresholds.

How does contextual alignment affect source eligibility? Contextual alignment measures how precisely a fragment matches query intent and sub-intents. Contextual alignment operates at the passage level rather than the full-page level. AI systems generate sub-queries through Query Fan Out and retrieve fragments that align with each sub-intent.
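Passage-level matching against fan-out sub-queries can be sketched as follows. The sub-queries are written by hand here, whereas real systems generate them with a model. Passages are paragraphs split on blank lines. Simple term overlap stands in for the semantic embeddings such systems would actually use. Page URLs and text are hypothetical.

```python
def tokens(text):
    """Lowercased word set with simple punctuation stripped."""
    return set(text.lower().replace(",", " ").replace(".", " ").split())


def best_passages(sub_queries, pages):
    """Map each sub-query to the (url, passage) with the most shared terms.

    Passages are paragraphs split on blank lines. Sub-queries that share
    no terms with any passage are left out (no eligible fragment).
    """
    passages = [
        (url, para.strip())
        for url, text in pages.items()
        for para in text.split("\n\n")
        if para.strip()
    ]
    picks = {}
    for sub_query in sub_queries:
        q = tokens(sub_query)
        url, para = max(passages, key=lambda p: len(q & tokens(p[1])))
        if q & tokens(para):
            picks[sub_query] = (url, para)
    return picks


# Hypothetical pages and hand-written fan-out sub-queries for one user question.
pages = {
    "https://example.com/pricing": "Plans start at 20 dollars per month.\n\nAnnual billing includes a discount.",
    "https://example.org/review": "Setup takes ten minutes.\n\nSupport responds within one day.",
}
sub_queries = ["monthly price per month", "how long does setup take", "support response time", "refund policy"]
picks = best_passages(sub_queries, pages)
for sub_query in sub_queries:
    print(sub_query, "->", picks.get(sub_query, "no eligible passage"))
```

Different pages can satisfy different sub-intents. A sub-query with no matching passage contributes no citation.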

How does authority validation impact AI citation behavior? Authority validation confirms that a source demonstrates distributed trust signals across multiple environments. Authority validation evaluates domain credibility, brand consistency, and structured data signals (Schema markup – FAQ, Article, Organization). Strong external consistency increases citation likelihood because models reduce factual risk.

How does redundancy avoidance shape citation output? Redundancy avoidance filters semantically duplicated fragments during retrieval. Redundancy avoidance clusters similar passages and selects distinct evidence blocks. This filtering ensures citation diversity within a synthesized answer.
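Redundancy avoidance can be illustrated with Maximal Marginal Relevance (MMR), a well-known technique that trades relevance against similarity to passages already selected. The source does not state whether any particular AI system uses MMR. The vectors, passage ids, and `lam` weight below are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def mmr_select(query, candidates, k=2, lam=0.5):
    """Greedy Maximal Marginal Relevance selection of k passage ids.

    Each step picks the passage maximizing
    lam * sim(query, p) - (1 - lam) * max sim(p, already selected).
    """
    selected, remaining = [], list(candidates)
    while remaining and len(selected) < k:
        def score(pid):
            redundancy = max(
                (cosine(candidates[pid], candidates[s]) for s in selected),
                default=0.0,
            )
            return lam * cosine(query, candidates[pid]) - (1 - lam) * redundancy

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


# Hypothetical passage vectors: "a_dup" nearly repeats "a"; "b" is distinct.
query_vec = [1.0, 0.0, 0.0]
candidates = {"a": [0.9, 0.4, 0.0], "a_dup": [0.9, 0.42, 0.0], "b": [0.8, 0.0, 0.6]}
print(mmr_select(query_vec, candidates))            # redundancy-aware
print(mmr_select(query_vec, candidates, lam=1.0))   # relevance only
```

With `lam=1.0` the near-duplicate `a_dup` is picked second. Lowering `lam` penalizes redundancy, so the distinct passage `b` is chosen instead.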

Why are AI citations non-ordered rather than ranked? AI citations are non-ordered because selection determines eligibility, not competitive position. AI systems construct answers first and attach supporting references. Citation lists do not reflect hierarchical ranking like SERP positions.

How do platform-specific sourcing patterns differ across AI systems? Platform-specific sourcing patterns differ because each AI system prioritizes distinct data ecosystems. The sourcing distribution is shown below.

| Platform | Primary Source Focus | Top Source Share | Secondary Sources |
|---|---|---|---|
| ChatGPT | Authoritative knowledge | Wikipedia 47.9% of the top 10 | G2 1.1%, TechRadar 0.9% |
| Perplexity | Community discourse | Reddit 46.7% of the top 10 | YouTube 2.0%, Yelp 0.8% |
| Google AI Overviews | Balanced distribution | Reddit 2.2% total citations | YouTube 1.9%, LinkedIn 1.3% |

How do Top-Level Domains influence AI citation distribution? Top-Level Domains strongly influence citation concentration patterns. The domain distribution is shown below.

| Top-Level Domain | Citation Share |
|---|---|
| .com | 80.41% |
| .org | 11.29% |
| Geographic TLDs | 3.5% |
| .uk | 2.16% |

How does risk mitigation affect the inclusion of niche brands? Risk mitigation prioritizes established entities over niche brands during citation selection. AI systems select sources that provide clear factual grounding. Weak entity recognition reduces inclusion probability because confidence scores decline under ambiguity.

Are Search Rankings and AI Citations Connected?

Yes, search rankings and AI citations are connected through correlation, but rankings are not a prerequisite for citations. AI systems derive 76% of citations from Google’s top 10 organic results. Perplexity aligns with Google’s top 10 results 91% of the time. Google AI Overviews show an 85% overlap rate with top-ranked pages.
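An overlap figure can be computed as the share of cited URLs that also appear in the organic top 10. The minimal sketch below assumes exact URL string matching and uses hypothetical URLs. Results depend on the denominator and matching rules chosen, so figures from different studies are not directly comparable.

```python
def overlap_rate(cited_urls, top10_urls):
    """Fraction of distinct cited URLs that also appear in the organic top 10."""
    cited = set(cited_urls)
    if not cited:
        return 0.0
    return len(cited & set(top10_urls)) / len(cited)


# Hypothetical data: exact string matching, no URL normalization.
top10 = [f"https://site{i}.com/page" for i in range(1, 11)]
cited = [
    "https://site1.com/page",
    "https://site4.com/page",
    "https://site9.com/page",
    "https://forum.example/thread",
]
print(f"{overlap_rate(cited, top10):.0%} of cited URLs rank in the top 10")
```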

Why are high-ranking pages more likely to be cited? High-ranking pages are more likely to be cited because AI retrieval systems use traditional search indexes as primary discovery layers. High-ranking pages gain visibility in Google indexes. High visibility increases retrieval probability during Query Fan Out processing. Strong backlink authority and topical relevance strengthen both ranking scores and citation eligibility.

Why can citations occur without top rankings? Citations occur without top rankings because AI systems evaluate entity relationships and passage relevance instead of page position. ChatGPT cites Google’s top 10 results only 44% of the time due to its reliance on Microsoft Bing. A 32% gap exists between Google AI Overviews and Google AI Mode citation patterns. Content can achieve an AI discoverability score of 9 out of 10 while scoring only 5 out of 10 on keyword optimization.

Why are rankings not a prerequisite for AI citation? Rankings are not a prerequisite for AI citation because citation operates on fragment retrieval, not competitive ordering. AI systems retrieve semantically aligned passages even from pages outside the top 10. Citation inclusion depends on entity clarity, contextual alignment, and authority validation. Correlation between rankings and citations does not equal dependency because citation selection evaluates eligibility rather than position hierarchy.

What are the Key Differences Between Search Rankings and AI Citations?

The key differences between search rankings and AI citations are the retrieval objective, the selection process, the visibility model, and the coupling to rankings. Search rankings evaluate and order full documents inside a SERP. AI citations retrieve and attach fragments inside synthesized answers. These structural differences redefine visibility, traffic, authority, and attribution. The key differences are listed below.

- Retrieval Objective: Document Discovery vs. Answer Construction

- Selection Process: Full Documents vs. Passages and Propositions

- Visibility Model: Ranked Listings vs. Embedded Attribution

- Coupling to Rankings: Competitive Ordering vs. the Decoupling Effect

1. Retrieval Objective: Document Discovery vs. Answer Construction

Retrieval objective is a key difference because search rankings prioritize document discovery, while AI citations prioritize answer construction. Search rankings retrieve and order full URLs inside a SERP. AI citations retrieve eligible fragments that enter a context window for synthesis. This shift changes visibility from page ordering to fragment eligibility.

How do retrieval objectives differ at the system level? Search rankings sort selected URLs by relevance, while AI citations determine whether a fragment qualifies for answer inclusion. Search rankings assign position values based on scoring models. AI citations evaluate whether a passage meets semantic confidence thresholds. A page ranking in the top 3 with a 31.2% CTR remains invisible to AI systems if fragment signals fail the retrieval criteria.

What is the structural difference between document discovery and answer construction? Document discovery evaluates entire URLs, while answer construction evaluates semantic fragments. Search ranking systems process rendered HTML after executing JavaScript and CSS. AI retrieval systems parse raw HTML at fetch time and extract high signal-to-noise segments. Keywords guide search rankings. Entities and vector relationships guide AI citations.
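The raw-HTML behavior described above can be sketched with Python's standard `html.parser`. The extractor reads only the fetched markup, ignores `<script>` and `<style>` content, and groups text under the nearest preceding heading so that the heading acts as the fragment's contextual anchor. The sketch assumes a fetcher that does not execute JavaScript. The HTML sample is hypothetical, and text before the first heading is dropped for simplicity.

```python
from html.parser import HTMLParser


class FragmentExtractor(HTMLParser):
    """Split raw HTML into [heading, text] fragments, ignoring script and style."""

    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.fragments = []       # list of [heading, text] pairs
        self._skip = 0            # nesting depth inside <script>/<style>
        self._in_heading = False
        self._heading = ""

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1
        elif tag in self.HEADINGS:
            self._in_heading = True
            self._heading = ""

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._skip = max(self._skip - 1, 0)
        elif tag in self.HEADINGS:
            self._in_heading = False
            # Each heading opens a new fragment that collects the text below it.
            self.fragments.append([self._heading.strip(), ""])

    def handle_data(self, data):
        if self._skip:
            return  # script/style content is not visible text
        if self._in_heading:
            self._heading += data
        elif self.fragments and data.strip():
            previous = self.fragments[-1][1]
            self.fragments[-1][1] = (previous + " " + data.strip()).strip()


raw = """<html><body>
<h2>What is a citation?</h2>
<p>A citation attributes a source inside an AI answer.</p>
<div id="app"></div>
<script>document.getElementById('app').textContent = 'Loaded by JavaScript';</script>
<h2>Why it matters</h2>
<p>Cited fragments appear without a click.</p>
</body></html>"""

parser = FragmentExtractor()
parser.feed(raw)
parser.close()
for heading, text in parser.fragments:
    print(f"[{heading}] {text}")
```

The sentence that JavaScript would inject into `#app` never appears in the fragments, because the parser sees only the initial payload.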

The feature differences are listed below.

| Feature | Search Rankings | AI Citations |
|---|---|---|
| Primary Objective | Ordering: sorts selected URLs by relevance. | Eligibility: determines whether a fragment enters the context window. |
| Selection Unit | Entire URL: evaluates full pages and domains. | Semantic fragments: selects isolated chunks or propositions. |
| Technical Payload | Rendered HTML: processes JavaScript and CSS. | Raw HTML: parses only the initial fetch-time payload. |
| Relevance Signal | Keywords: uses terms as intent proxies. | Entities: uses relationships and vector alignment. |
| Structural Signal | Broad markup: navigates heavy code. | Signal-to-noise: truncates buried content. |
| Header Role | Hierarchy: organizes page flow for users. | Contextual anchor: defines meaning for fragments. |
| Conflict Handling | Reconciliation: consolidates signals over time. | Dilution: conflicting signals lower confidence scores. |
| Stability | Volatile: positions fluctuate under competition. | Durable: citations persist if signals remain consistent. |

When do search rankings take priority under this retrieval objective model? Search Rankings take priority when the objective is high-volume traffic acquisition through document discovery. Google processes over 8.5 billion searches per day. Position 1 generates approximately 10x more visibility than position 10. Ad-supported models depend on click-through traffic generated by ranked listings.

When do AI Citations take priority under this retrieval objective model? AI Citations take priority when the objective is answer-level authority inside LLM-generated outputs. Fragment retrieval requires high embedding strength and entity clarity. A fragment excluded during retrieval cannot appear in the synthesized answer. B2B documentation and technical specifications benefit from fragment-level inclusion.

How do performance metrics differ under these retrieval objectives? Search Rankings measure success by position, while AI Citations measure success by retrieval probability. Moving from rank 10 to rank 1 increases exposure incrementally. Falling below a vector confidence threshold of 0.7 or 0.8 removes fragment visibility entirely. Search visibility operates on a gradient scale. AI citation visibility operates on an inclusion threshold model.
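The contrast between gradient and threshold visibility can be made concrete with two toy functions, both hypothetical. The linear exposure curve is chosen only so that position 1 gets 10x the exposure of position 10, in line with the figure quoted earlier. The 0.75 threshold is an arbitrary value between the 0.7 and 0.8 thresholds mentioned above.

```python
def ranked_exposure(position, max_position=10):
    """Hypothetical gradient: exposure falls linearly from position 1 to max_position."""
    if not 1 <= position <= max_position:
        return 0.0
    return (max_position - position + 1) / max_position


def citation_exposure(similarity, threshold=0.75):
    """Hypothetical inclusion threshold: a fragment is either in (1.0) or out (0.0)."""
    return 1.0 if similarity >= threshold else 0.0


for position in (1, 5, 10):
    print("rank", position, "exposure", ranked_exposure(position))
for similarity in (0.90, 0.76, 0.74):
    print("similarity", similarity, "exposure", citation_exposure(similarity))
```

Dropping from rank 1 to rank 5 reduces exposure only partially. A drop in similarity from 0.76 to 0.74 removes the fragment entirely.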

How do cost structures differ under document discovery versus answer construction? Document discovery requires ongoing backlink and content investment, while answer construction requires structural optimization and entity precision. Competitive niches often demand $5,000 to $20,000 monthly for ranking maintenance. AI citation optimization demands raw HTML clarity and reduced signal noise. Entity consistency increases durability over a 3 to 5 year horizon.

What decision rule applies under this retrieval objective distinction? Choose Search Rankings for competitive ordering and click acquisition, and choose AI Citations for fragment eligibility and answer authority. Use Search Rankings for JavaScript-heavy experiences and broad topical domains. Use AI Citations for entity-rich, structurally clean, fragment-driven content.

2. Selection Process: Full Documents vs. Passages and Propositions

The selection process is a key difference because traditional search rankings evaluate full documents for ordering, while AI citations evaluate passages and propositions for synthesis eligibility. Traditional search rankings apply ranking logic at the URL level. AI citations apply retrieval logic at the fragment level. Content excluded from the AI candidate set remains invisible even with a high organic rank.

How does the primary logic differ between ranking and citation systems? Traditional search rankings use ranking logic, while AI citations use retrieval logic. Ranking logic determines the linear order of results on a SERP. Retrieval logic determines whether a fragment qualifies for synthesis. Ranking assigns position values. Retrieval assigns eligibility status.

How does the process flow differ between ranking and citation systems? Traditional search rankings follow a Rank – Click – Evaluate flow, while AI citations follow a Retrieve – Evaluate – Generate – Cite flow. Ranking systems display ordered links before user interaction. Citation systems retrieve fragments before answer generation. Selection timing defines visibility boundaries in both systems.

How does the matching method differ between ranking and citation systems? Traditional search rankings rely on keyword overlap and backlink authority, while AI citations rely on semantic alignment and intent coverage. Ranking systems score keyword signals and link signals across entire pages. Citation systems measure vector similarity and entity coherence within passages. Page-level authority drives ranking. Fragment-level alignment drives citation.

The feature differences are listed below.

| Feature | Traditional Search Rankings | AI Citations |
|---|---|---|
| Primary Logic | Ranking determines linear order. | Retrieval determines synthesis eligibility. |
| Process Flow | Rank – Click – Evaluate. | Retrieve – Evaluate – Generate – Cite. |
| Selection Timing | Final output phase. | Selection layer before generation. |
| Matching Method | Keyword overlap and backlink authority. | Semantic alignment and intent coverage. |
| Data Structure | Page-level signals and URL authority. | Entity-based identity and internal consistency. |
| Content Sensitivity | General HTML and metadata optimization. | High sensitivity to scannable headings and FAQs. |
| E-E-A-T Stringency | Standard organic signals. | Higher stringency for YMYL topics. |
| Output Format | Ordered blue links. | Synthesized answer with in-line citations. |

When do Traditional Search Rankings take priority under this selection model? Traditional Search Rankings take priority for navigational and transactional queries that require full-page evaluation. Navigational queries demand direct URL access. Transactional queries benefit from side-by-side vendor comparison. Long-form guides retain context in ranking systems because ranking preserves full document integrity.

When do AI Citations take priority under this selection model? AI Citations take priority for synthesis-heavy queries that require fragment extraction across multiple sources. Research-driven queries require cohesive summaries. Structured content (FAQs, tables, scannable headings) increases extractability. YMYL topics demand strong entity validation and high E-E-A-T stringency for citation inclusion.

How do performance metrics differ under this selection process distinction? Traditional Search Rankings measure performance through positional stability and traffic volume, while AI Citations measure performance through selection frequency and citation presence. Ranking positions change gradually under competition. Citation patterns fluctuate with model recalibration. Ranking visibility scales incrementally. Citation visibility depends on fragment-level eligibility.

How do cost structures differ under this selection process distinction? Traditional Search Rankings require ongoing backlink investment, while AI Citations require structural clarity and entity reinforcement. Competitive SEO budgets range from $5,000 to $20,000 per month in high-intent niches. AI-ready formatting increases editorial workload by approximately 20% during initial optimization. Entity consistency reduces long-term recalibration requirements across platforms (Google AI Overviews, ChatGPT, Perplexity).

What decision rule applies under this selection process distinction? Prioritize Traditional Search Rankings for page-level dominance and traffic acquisition, and prioritize AI Citations for fragment-level eligibility and synthesis visibility. Combine both approaches when content maintains strong architecture and structured data alignment (Schema.org).

3. Visibility Model: Ranked Listings vs. Embedded Attribution

Visibility model is a key difference because search rankings generate visibility through ranked listings, while AI citations generate visibility through embedded attribution inside synthesized answers. Search rankings expose content through ordered blue links. AI citations expose content through inline references and source blocks. This shift replaces position-driven clicks with inclusion-driven mentions.

How do authority signals differ across these visibility models? Search rankings rely on hyperlink graphs, while AI citations rely on recurring brand patterns and entity mentions. Backlink profiles drive ranking authority. Brand mentions correlate 3x more strongly with AI citation visibility than backlinks. Authority in search depends on link equity. Authority in AI depends on entity recurrence and consistency.

How do visibility drivers differ between ranked listings and embedded attribution? Ranked listings depend on backlink strength, while embedded attribution depends on brand mention frequency and extractable structure. Backlinks remain central to Google ranking systems, so search rankings reward link acquisition. AI citations reward entity reinforcement through frequent, consistent brand mentions in extractable content.

The visibility differences are listed below.

| Feature | Search Rankings | AI Citations |
|---|---|---|
| Primary Signal | Hyperlink graphs and backlink profiles. | Recurring patterns and unlinked brand mentions. |
| Visibility Driver | Backlinks – 3x less influential in AI systems. | Brand mentions – 3x stronger correlation. |
| Source Preference | High domain rating authority sites. | Smaller, well-structured pages with direct answers. |
| Content Format | Long-form keyword-dense content. | Concise sections with logic layers. |
| Platform Overlap | High consistency across Google and Bing. | 11% domain overlap between ChatGPT and Perplexity. |
| Discovery Source | General web index (Google, Bing). | Niche databases (Wikipedia 47.9%, Reddit 46.7%). |
| Persistence | Stable over weeks or months. | Volatile, 70% turnover across prompts. |
| Functional Role | Traffic driver via clicks. | Evidence layer for AI-generated shortlists. |

When do Search Rankings take priority under this visibility model? Search Rankings take priority when the objective is direct traffic acquisition through ranked exposure. Google processes over 8.5 billion searches per day. Position stability enables predictable forecasting. Long-form educational content retains context in ranked environments.

When do AI Citations take priority under this visibility model? AI Citations take priority when the objective is shortlist inclusion and brand recommendation inside AI-generated responses. Bridging the 80% Mention-Source Divide increases repeat visibility by 40%. Reddit accounts for 46.7% of Perplexity sources. Citation presence influences buyer evaluation before click behavior.

How do performance metrics differ under this visibility distinction? Search Rankings measure visibility through keyword positions and organic traffic, while AI Citations measure visibility through generative mentions and source block inclusion. Ranking visibility fluctuates under competition. AI citation visibility shows 70% brand turnover across identical prompts.
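Turnover across repeated prompts can be measured as the share of brands in one run that do not reappear in the next. The source does not define how its 70% figure was computed. The sketch below uses one plausible definition with hypothetical brand lists.

```python
def turnover(run_a, run_b):
    """Share of distinct brands in run_a that do not reappear in run_b."""
    a, b = set(run_a), set(run_b)
    return len(a - b) / len(a) if a else 0.0


# Hypothetical brand lists from two runs of the same prompt.
first_run = [f"brand_{i}" for i in range(10)]       # brand_0 .. brand_9
second_run = [f"brand_{i}" for i in range(7, 17)]   # brand_7 .. brand_16
print(f"turnover: {turnover(first_run, second_run):.0%}")
```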

How do cost structures differ under this visibility distinction? Search Rankings require link-building and long-form production investment, while AI Citations require structural formatting and entity reinforcement. AI citation tracking requires emerging KPI systems. Multi-platform optimization increases operational complexity because domain overlap between ChatGPT and Perplexity is only 11%.

What decision rule applies under this visibility model difference? Prioritize Search Rankings for stable click-based traffic and prioritize AI Citations for embedded brand authority inside generative answers. Combine both models when content achieves dual-signal status as both a ranked and cited authority.

4. Coupling to Rankings: Competitive Ordering vs. the Decoupling Effect

Coupling to rankings is a key difference because search rankings depend on competitive ordering of full pages, while AI citations operate under a decoupling effect where inclusion does not require top position. Search rankings tie visibility directly to page-level authority and backlink strength. AI citations detach visibility from numeric rank and evaluate fragment eligibility instead.

How does competitive ordering define coupling in search rankings? Competitive ordering defines coupling because higher page-level scores directly increase rank position and traffic. Search rankings evaluate full pages on backlinks, keyword density, and site speed. Position determines CTR and organic traffic. A page must outperform competitors to move upward.

How does the decoupling effect redefine visibility in AI citations? The decoupling effect allows a page to receive a citation without ranking in the top 10 organic results. AI citations evaluate extractability, specificity, and entity clarity. Only 12% overlap exists between AI citations and top search results. A page ranking #7 can receive a citation if its fragment aligns with the query better than the top result's fragment does.
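The decoupling effect can be illustrated with a toy selector that scores individual passages against the query and ignores page rank entirely. This is a minimal sketch under stated assumptions: the word-overlap score stands in for the embedding similarity real systems use, and the pages, URLs, and function names (`fragment_score`, `pick_citation`) are hypothetical.

```python
import re

def tokens(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def fragment_score(query: str, passage: str) -> float:
    """Share of query terms that the passage covers (0.0 to 1.0)."""
    q = tokens(query)
    return len(q & tokens(passage)) / len(q) if q else 0.0

def pick_citation(query: str, pages: list[dict]) -> dict:
    """Return the best-scoring passage across all pages; page rank plays no part."""
    candidates = [
        {"url": p["url"], "rank": p["rank"], "passage": s, "score": fragment_score(query, s)}
        for p in pages
        for s in p["passages"]
    ]
    return max(candidates, key=lambda c: c["score"])  # first candidate wins ties

# Hypothetical pages: the #1 result is broad, the #7 result answers the query directly.
pages = [
    {"url": "https://example.com/guide", "rank": 1,
     "passages": ["A broad overview of search engine optimization history."]},
    {"url": "https://example.org/faq", "rank": 7,
     "passages": ["AI citations select fragments, not whole ranked pages."]},
]
choice = pick_citation("do AI citations select fragments", pages)
print(choice["rank"], choice["url"])
```

The #7 page wins because its passage covers four of the five query terms, while the #1 page covers none. Rank would only matter here if it fed into the score, which is the coupling that search rankings keep and AI citations loosen.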

The coupling differences are listed below.

| Feature | Search Rankings | AI Citations |
|---|---|---|
| Optimization Unit | Full page, authority, and backlinks. | Fragment passages, tables, lists. |
| Selection Signal | Page-level relevance and site speed. | Extractability, specificity, clarity. |
| Implementation Timeline | 3 to 6 months for measurable SEO impact. | 6 to 8 weeks for GEO impact. |
| Overlap Frequency | 100% base metric. | 12% overlap with top search results. |
| Technical Requirement | Keyword density and backlink profiles. | Semantic HTML and Schema markup. |
| Content Structure | Narrative flow, often 1,200+ words. | Inverted-pyramid answers of 40 to 80 words. |
| Success Metric | Position, CTR, organic traffic. | Brand share of answer, mentions. |
| Lead Generation | Dependent on ranking-driven traffic. | 32% of new sales-qualified leads (SQLs) within six weeks. |

When do Search Rankings take priority under this coupling model? Search Rankings take priority for long-term domain authority and high-volume, surface-intent queries. Ranking systems reward sustained backlink acquisition over 3 to 6 months. Surface-intent queries drive high impression volume. High-resolution imagery and PDF assets index more reliably in traditional search.

When do AI Citations take priority under this coupling model? AI Citations take priority for rapid lead generation and complex intent queries. Citation impact appears within 6 to 8 weeks. Data shows 32% of new sales-qualified leads originate from AI interfaces after citation optimization. Structured fragments outperform higher-ranked pages when sub-query precision increases.

How do performance metrics reflect this coupling distinction? Search Rankings measure performance through position and traffic, while AI Citations measure performance through influence on the generated answer. Ranking visibility increases incrementally from position 10 to position 1. Citation visibility follows an inclusion model where absence equals zero influence.

What implementation rule applies under this coupling difference? Optimize full-page authority for competitive ordering and optimize fragment clarity for decoupled citation visibility. Structure content with Semantic HTML and Schema markup to satisfy both ranking crawlers and AI retrieval systems.

Where do Search Rankings and AI Citations Overlap?

Rankings and AI citations overlap in 4 core areas: authority signals, content quality and structure, topical consistency, and factual accuracy and freshness. Although search rankings and AI citations operate under different visibility models, both systems evaluate trust, structure, relevance, and recency before granting visibility. The shared evaluation layers create measurable correlation across SERPs and AI Overviews. The core areas are listed below.

- Authority Signals

- Content Quality and Structure

- Topical Consistency

- Factual Accuracy and Freshness

Search rankings and AI citations overlap through shared evaluation layers. Authority, structure, consistency, and accuracy define eligibility in both ranked and generative environments. Aligning content with these four areas strengthens visibility across traditional search and AI-driven discovery.

1. Authority Signals

Authority signals are an overlap between search rankings and AI citations because both systems evaluate trust, entity identity, and cross-platform validation before granting visibility. Search rankings use backlink authority and domain trust to determine position. AI citations use entity consistency and external mentions to determine inclusion. Both systems filter content through credibility thresholds.

How does entity-based understanding create overlap between search rankings and AI citations? Entity-based understanding creates overlap because both systems evaluate connected brands, authors, and organizations rather than isolated URLs. Search engines map entities through link graphs and knowledge graphs. Large Language Models map entities through semantic embeddings. Entity clarity strengthens both ranking authority and citation eligibility.

Why do semantic and topical relevance reinforce authority in both systems? Semantic and topical relevance reinforce authority because both systems evaluate depth of expertise across related content clusters. Search rankings reward consistent topical coverage through internal linking structures. AI citations reward stable entity-topic relationships across multiple sources. Concentrated subject focus increases trust scoring in both environments.

What role does structured data play in overlapping authority signals? Structured data overlaps authority signals because both systems require machine-readable context for classification and validation. Schema markup (FAQ, Article, Organization) clarifies entity identity and factual relationships. Clean headings and logical formatting increase parse efficiency. Structured clarity strengthens ranking confidence and citation confidence simultaneously.

How does external validation strengthen authority across both systems? External validation strengthens authority because both systems evaluate third-party references outside the owned domain. Mentions on platforms (LinkedIn, G2, YouTube) reinforce brand legitimacy. YouTube comment volume correlates with AI mention frequency. Cross-platform name consistency reduces entity ambiguity.
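Schema markup and cross-platform name consistency can be combined in one JSON-LD block. The sketch below builds a schema.org `Organization` object whose `sameAs` property lists the external profiles that validate the entity; it is an illustration, not a required template. The brand name, URLs, and the helper `organization_jsonld` are hypothetical placeholders.

```python
import json

def organization_jsonld(name: str, url: str, logo: str, profiles: list[str]) -> str:
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,        # use the exact same brand name on every platform
        "url": url,
        "logo": logo,
        "sameAs": profiles,  # third-party profiles that corroborate the entity
    }
    return json.dumps(data, indent=2)

# Hypothetical brand and profile URLs.
snippet = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    profiles=[
        "https://www.linkedin.com/company/example-co",
        "https://www.youtube.com/@exampleco",
        "https://www.g2.com/products/example-co",
    ],
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

Keeping `name` identical to the name used on LinkedIn, G2, and YouTube is the machine-readable counterpart of the cross-platform consistency described above.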

Why does high domain trust create shared authority outcomes? High domain trust creates shared authority outcomes because AI systems retrieve from domains that already perform strongly in traditional search. Google controls over 90% of global search usage. High-ranking domains enter AI retrieval pipelines more frequently. Traditional authority increases AI citation probability, which reinforces brand recognition across both visibility layers.

2. Content Quality and Structure

Content quality and structure overlap because both systems prioritize well-structured, authoritative pages that align with user intent. 94% of AI Overviews include at least one URL from the top 20 organic results. 90% of AI citations overlap with the top 10 web results. Structured quality signals drive visibility across both ranking and citation systems.

How does the correlation between organic rankings and AI inclusion demonstrate this overlap? Correlation demonstrates overlap because high-ranking organic content frequently appears inside AI-generated summaries. 94% of AI Overviews cite at least one top 20 result. 90% of AI citations overlap with the top 10 results. Organic quality standards directly influence AI retrieval pipelines.

Why is rank position a measurable driver of AI citation likelihood? Rank position influences citation likelihood because higher organic authority increases retrieval probability. Position 1 URLs receive citations 43% of the time. Position 20 URLs receive citations 7% of the time. 32% of all AI citations originate from the top 3 organic positions. Authority concentration at the top ranks increases aggregation frequency.

What role do structural models play in reinforcing this overlap? Structural models reinforce overlap because semantic matching systems reward extractable and logically organized content. The RankEmbed model applies deep learning to match content formats with query expectations. The FastSearch algorithm prioritizes semantic matching speed over backlink signals. Clear headings, concise sections, and structured layouts increase eligibility in both systems.

How does vertical-specific convergence strengthen the overlap in high-authority sectors? Vertical-specific convergence strengthens overlap because regulated industries require stricter quality thresholds. Healthcare overlap increased to 75.3% from 63.3%. Education overlap increased to 72.6% with a +53.2 percentage point change. Insurance overlap increased to 68.6% with a +47.7 percentage point change. High-stakes sectors narrow source pools to well-structured authoritative domains.

Why does single-source AI selection highlight dependence on organic authority? Single-source AI selection highlights dependence because exclusive citations strongly align with top-ranking results. 89% overlap occurs when Google selects one AI Overview source. Even when 44% of citations originate outside the top 20, AI systems retrieve pages that mirror top-ranking structural patterns. Content quality and structure function as shared eligibility criteria across search rankings and AI citations.

3. Topical Consistency

Topical consistency is an overlap between search rankings and AI citations because both systems prioritize stable subject focus and concentrated expertise across a domain. Position 1 URLs receive AI citations 43% of the time, and 94% of AI Overviews include at least one URL from the top 20 search results. Consistent publishing reduces semantic confusion and strengthens authority boundaries.

How does organic search positioning influence AI citation frequency? Organic search positioning influences AI citation frequency because higher-ranking URLs pass primary trust filters used in AI retrieval. Position 2 URLs receive citations 37% of the time. Position 3 URLs receive citations 31% of the time. Analysis of 5.1 million citations shows 56% of AI Overview citations originate from the top 20 bracket. Ranking signals function as an initial credibility layer.
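The per-position rates above can be turned into a rough portfolio estimate. This sketch sums the citation probability of each tracked keyword (expected values add regardless of correlation between keywords), using only the positions for which this section quotes a rate (1, 2, 3, and 20). It assumes those study averages transfer to your own keywords, which may not hold because different studies report different rates; the keyword portfolio and the helper `expected_citations` are hypothetical.

```python
# Citation rates by organic position, as quoted in this section.
# Positions without a quoted rate are deliberately left out rather than guessed.
CITATION_RATE = {1: 0.43, 2: 0.37, 3: 0.31, 20: 0.07}

def expected_citations(positions: dict[str, int]) -> tuple[float, list[str]]:
    """Sum per-keyword citation probabilities; list keywords with no known rate."""
    total = 0.0
    unknown = []
    for keyword, position in positions.items():
        rate = CITATION_RATE.get(position)
        if rate is None:
            unknown.append(keyword)   # no quoted rate for this position
        else:
            total += rate
    return total, unknown

# Hypothetical keyword portfolio mapped to current organic positions.
portfolio = {"ai citations": 1, "geo tools": 3, "llm visibility": 20, "aeo audit": 9}
estimate, skipped = expected_citations(portfolio)
print(f"Expected citations: {estimate:.2f}; no rate for: {skipped}")
```

Returning the skipped keywords separately keeps the estimate honest: a position 9 keyword is reported as unknown instead of being filled in by interpolation.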

Why does the elimination of semantic confusion strengthen authority? Elimination of semantic confusion strengthens authority because focused topic coverage creates clear classification boundaries. A site publishing 1,000 pages on one topic builds strong thematic coherence. A site publishing 100 pages across unrelated categories weakens topical authority. Mixed signals reduce model confidence and lower citation stability.

What makes internal semantic flow critical for ranking and citation stability? Internal semantic flow is critical because structured internal links create evaluation chains across related content. Internal links reinforce entity relationships within topic clusters. Consistent anchor patterns clarify subject hierarchy. Broken thematic flow introduces signal noise that disrupts both ranking consistency and citation eligibility.
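One way to check for broken thematic flow is to treat a topic cluster as a directed graph of internal links and find pages that cannot be reached from the hub page. This is a minimal sketch: a breadth-first search only measures link reachability, not semantic quality, and the cluster URLs and the helper `unreachable_pages` are hypothetical.

```python
from collections import deque

def unreachable_pages(links: dict[str, list[str]], hub: str) -> set[str]:
    """Return cluster pages that cannot be reached from the hub via internal links."""
    seen = {hub}
    queue = deque([hub])
    while queue:                      # breadth-first traversal from the hub
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return all_pages - seen

# Hypothetical cluster: each key links out to the pages in its list.
cluster = {
    "/ai-search": ["/ai-citations", "/rankings"],
    "/ai-citations": ["/citation-tracking"],
    "/rankings": ["/ai-search"],
    "/schema-guide": ["/ai-citations"],   # links out, but nothing links in
}
print(unreachable_pages(cluster, "/ai-search"))
```

Here `/schema-guide` is flagged because no page in the cluster links to it, so crawlers following the hub's internal links never reach it.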

How do shifting citation patterns increase the importance of topical consistency? Shifting citation patterns increase the importance of topical consistency because overlap between top 10 rankings and AI citations declined from 19% to 6% in 2025. The average number of cited URLs from the top 20 decreased from 5 to 3 between May 2025 and October 2025. 44% of AI citations now originate outside the top 20. High-stakes sectors such as Healthcare prioritize established topical authority over isolated ranking strength.

Topical consistency aligns organic ranking strength with AI citation eligibility. Concentrated expertise, stable subject focus, and internal semantic clarity strengthen visibility across both systems.

4. Factual Accuracy and Freshness

Factual accuracy and freshness are an overlap between search rankings and AI citations because both systems filter content through expertise thresholds and recency validation before granting visibility. Google reduces billions of documents to 117 final candidates through multi-stage filtering. AI systems retrieve from the same indexed environment through Retrieval-Augmented Generation (RAG). Domain overlap between the top 10 organic results and AI citations ranges from 88% to 91%.

How does the multi-stage filtering process create factual overlap? Multi-stage filtering creates factual overlap because documents must survive progressive reranking stages based on expertise and depth. Ranking systems evaluate authoritativeness before exposure. Only high-confidence documents pass elimination layers. AI synthesis operates on this pre-filtered pool, which aligns ranking quality standards with citation eligibility.

Why does Retrieval-Augmented Generation (RAG) reinforce shared accuracy requirements? RAG reinforces shared accuracy requirements because AI systems pull live data directly from the search index. RAG connects language models to indexed documents instead of relying on static training memory. Content must satisfy E-E-A-T signals to enter retrieval pipelines. Structured technical documentation maintains both ranking strength and citation eligibility through consistent updates.
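The retrieve-then-ground pattern behind RAG can be sketched in a few lines: select documents from an index, then build a prompt that restricts the model to those numbered sources. This is a toy illustration, assuming word-overlap retrieval in place of the dense retrievers and rerankers production systems use; the index contents, URLs, and helpers `retrieve` and `grounded_prompt` are hypothetical, and no model is actually called.

```python
import re

def retrieve(query: str, index: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Rank indexed documents by shared query terms and keep the top k (toy retriever)."""
    terms = set(re.findall(r"\w+", query.lower()))
    scored = []
    for url, text in index.items():
        overlap = len(terms & set(re.findall(r"\w+", text.lower())))
        if overlap:                                   # documents sharing no terms are dropped
            scored.append((overlap, url, text))
    scored.sort(key=lambda item: (-item[0], item[1]))  # best first, ties broken by URL
    return [(url, text) for _, url, text in scored[:k]]

def grounded_prompt(query: str, sources: list[tuple[str, str]]) -> str:
    """Assemble a prompt that asks the model to answer only from numbered sources."""
    lines = [f"[{i}] {url}: {text}" for i, (url, text) in enumerate(sources, start=1)]
    return ("Answer using only the sources below and cite them by number.\n"
            + "\n".join(lines) + f"\n\nQuestion: {query}")

# Hypothetical mini index.
index = {
    "https://example.com/rag": "RAG retrieves indexed documents before generation.",
    "https://example.com/seo": "Backlinks remain a ranking signal.",
    "https://example.com/pets": "Cats sleep most of the day.",
}
query = "how does RAG use indexed documents"
sources = retrieve(query, index)
prompt = grounded_prompt(query, sources)
print(prompt)
```

Only documents that survive retrieval can be cited, which is why eligibility for the index and the retrieval step comes before any question of synthesis.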

Why is freshness a measurable requirement across both systems? Freshness is a measurable requirement because both systems prioritize recently verified information to reduce misinformation risk. Google infrastructure updates indexed content in under one minute. Sparse model routing activates 1% to 5% of parameters for time-sensitive queries. Older articles face reduced visibility when newer verified documents exist.
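A common way to model recency is exponential decay, where a document's freshness weight halves every fixed number of days. The sketch below multiplies a relevance score by that weight to show how an older but more relevant article can lose to a newer one. Neither Google nor any LLM vendor publishes such a formula; the 180-day half-life, the relevance values, and the helper names are illustrative assumptions.

```python
from datetime import date

def freshness_weight(published: date, today: date, half_life_days: float = 180.0) -> float:
    """Exponential decay: the weight halves every half_life_days (assumed value)."""
    age_days = max((today - published).days, 0)
    return 0.5 ** (age_days / half_life_days)

def rank_by_relevance_and_freshness(docs: list[dict], today: date) -> list[dict]:
    """Sort documents by relevance multiplied by freshness weight, highest first."""
    return sorted(docs,
                  key=lambda d: d["relevance"] * freshness_weight(d["published"], today),
                  reverse=True)

# Hypothetical documents with assumed relevance scores.
today = date(2025, 10, 1)
docs = [
    {"url": "/guide-2023", "relevance": 0.9, "published": date(2023, 10, 1)},
    {"url": "/guide-2025", "relevance": 0.8, "published": date(2025, 9, 1)},
]
for d in rank_by_relevance_and_freshness(docs, today):
    print(d["url"])
```

With these assumptions the 2023 guide keeps only about 6% of its weight after roughly two years, so the slightly less relevant 2025 guide ranks first.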

How do performance gains from search integration confirm the role of factual accuracy? Performance gains confirm the role of factual accuracy because each model listed is more accurate with search enabled than when it relies on internal knowledge alone. The comparison is shown below.

| AI Model | Internal Knowledge Accuracy | Search-Enabled Accuracy |
|---|---|---|
| Gemini 3 Pro | 76.4% | 83.8% |
| GPT-5 | 55.8% | 77.7% |
| Claude 4.5 Opus | 30.6% | 73.2% |

Search-enabled accuracy ranges from 73.2% to 83.8% across these three models, a gain of 7.4 to 42.6 percentage points depending on the model. Grounding accuracy ranges from 62% to 74% under live retrieval conditions. Factual accuracy and freshness operate as shared eligibility gates across both search rankings and AI citations.
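The size of the search-enabled improvement depends on whether it is read as an absolute or a relative gain. This short calculation uses only the three accuracy pairs from the comparison table; the helper name `accuracy_gains` is illustrative.

```python
# Accuracy pairs (internal knowledge, search-enabled), in percent, from the comparison table.
ACCURACY = {
    "Gemini 3 Pro": (76.4, 83.8),
    "GPT-5": (55.8, 77.7),
    "Claude 4.5 Opus": (30.6, 73.2),
}

def accuracy_gains(table: dict[str, tuple[float, float]]) -> dict[str, tuple[float, int]]:
    """Return (absolute gain in percentage points, relative gain in %) per model."""
    return {
        model: (round(search - internal, 1), round((search - internal) / internal * 100))
        for model, (internal, search) in table.items()
    }

gains = accuracy_gains(ACCURACY)
for model, (pp, rel) in gains.items():
    print(f"{model}: +{pp} pp ({rel}% relative)")
```

The model with the weakest internal knowledge gains the most: Claude 4.5 Opus improves by 42.6 points, a 139% relative increase, while Gemini 3 Pro improves by 7.4 points, about 10%.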

Are AI Citations Better Than Traditional Search Rankings?

No, AI citations are not categorically better than traditional search rankings because each visibility model serves different performance objectives. AI citations influence answer-level visibility inside generative systems. Traditional search rankings drive click-based traffic through competitive ordering. “Better” depends on whether the objective is brand inclusion or traffic acquisition.

What factual data supports the strategic importance of AI citations? AI citations are strategically important because AI answer engines are projected to capture approximately 25% of search discovery within the next few years. Analysis of 6.8 million AI citations shows 86% originate from brand-managed sources (owned websites, local pages). Early citation presence creates persistent recall inside AI systems. Loss of citation share increases recovery costs over multiple months.

Why do traditional search rankings remain commercially dominant? Traditional search rankings remain commercially dominant because they generate significantly higher traffic volume. Traditional search drives 345x more traffic than AI engines. Local ranking systems still weigh backlinks and traditional citations more heavily than AI mentions. Ranking position directly correlates with CTR and session-based conversion.

Why does AI citation visibility not guarantee traffic growth? AI citation visibility does not guarantee traffic growth because citation inclusion does not require user clicks. Testing in ChatGPT and Bing showed minor increases in branded query visibility without significant traffic growth. AI visibility fluctuates based on ZIP code and phrasing. Citation presence influences awareness more than immediate sessions.

What strategic conclusion follows from this comparison? AI citations and traditional search rankings function as complementary visibility systems rather than replacements. AI citations increase the brand share of answers inside LLM interfaces. Traditional search rankings secure predictable traffic and conversion pathways. Strategic allocation aligns with measurable objectives: traffic volume, lead generation, or brand authority within AI-generated responses.

When Do Rankings Matter More Than AI Citations?

Search rankings matter more than AI citations when the primary objective is high-volume traffic acquisition and predictable session growth. Traditional search drives 345x more traffic than AI engines. Many practitioners maintain a 70/30 allocation favoring traditional links over branded mentions. 76% of AI Overview citations originate from pages ranking in the top 10 organic results, which makes rankings a foundational discovery layer.

How do traditional search rankings influence AI visibility? Traditional search rankings influence AI visibility through Query Fan-Out (QFO) retrieval dependency. Large Language Models rely on traditional search infrastructure for document discovery. High-ranking pages enter AI retrieval pipelines more frequently. A page ranking #1 holds only a 25% probability of an AI Overview citation, which confirms influence without guarantee.

What data discrepancies define the gap between rankings and citations? Data discrepancies define an invisibility gap because 59.6% of AI citations originate from pages outside the top 20 results. Ranking metrics do not fully predict citation outcomes. Citation selection evaluates entity alignment beyond page position. Traditional ranking remains necessary for baseline authority even when citation patterns diverge.

How do discovery mechanics differ between traditional search and AI engines? Discovery mechanics differ because traditional search prioritizes PageRank, and AI engines prioritize entity relationships. The comparison is shown below.

| Factor | Traditional Search | AI Engines |
|---|---|---|
| Primary Metric | PageRank and link authority. | Entity relationships and authority. |
| Geographic Scope | National averages dominate. | Local citations influence ZIP-level visibility. |
| Conversion Value | High traffic volume. | 23x higher conversion rate than organic search. |
| Ranking Stability | Fluctuates after SEO updates. | Citation occurs without a ranking change. |

When entity authority increases without ranking movement, AI citation frequency increases. B2B content has received multiple AI citations with no change in organic position. Ranking strength influences discovery. Entity authority influences inclusion.

When Do AI Citations Matter More Than Rankings?

AI citations matter more than search rankings when the objective is category authority, shortlist inclusion, and long-term AI interface visibility. Gartner projects a 25% shift of search discovery toward AI answer engines. AI systems build persistent memory structures that reinforce cited sources. Exclusion from AI answer sets reduces brand presence in decision-stage queries.

Why do AI citations represent a board-level risk? AI citations represent a board-level risk because delayed visibility increases recovery cost and competitive displacement. 86% of AI citations originate from brand-managed sources. Early citation acquisition establishes recall dominance. Brands without AI presence face higher investment requirements to re-enter citation pools.

How do AI citations influence unbranded queries and local discovery? AI citations influence unbranded queries and local discovery because entity-based retrieval prioritizes contextual authority over page rank. ZIP-level phrasing alters citation visibility. Local citations affect geographic inclusion. AI visibility demonstrates higher volatility than traditional rankings.

What are the primary sources of AI citations for brand visibility? Primary sources of AI citations include brand-managed assets and third-party validation environments. The distribution is shown below.

| Source Category | Citation Share | Content Types | Visibility Impact |
|---|---|---|---|
| Brand-Managed Content | 86% | Owned websites, local pages, listings, and reviews. | Direct narrative control. |
| Third-Party Authority | Variable | Journalism, Reddit, Quora, LinkedIn. | External validation. |
| Social and Video | Variable | YouTube transcripts, YouTube comments. | Strong AI mentions correlation. |

How does a brand establish canonical authority through AI citations? A brand establishes canonical authority by producing citation-ready content that AI systems retrieve and reuse. Publish structured hubs, guides, and FAQs that define category terms. Reinforce entity clarity across platforms. Algorithmic education increases the probability of sustained citation inclusion across AI Overviews, ChatGPT, and Perplexity.

Can One Strategy Support Both Traditional Search Rankings and AI Citations?

Yes, one strategy supports both traditional search rankings and AI citations because both systems prioritize topical completeness, logical structure, and entity clarity. Google search sessions increased from 10.5 to 12.6 per week after users adopted ChatGPT. AI search visitors generate 4.4x higher conversion value than average organic visitors. ChatGPT shopping queries increased from 7.8% to 9.8% between January and June 2025.

Why does structural alignment enable dual visibility? Structural alignment enables dual visibility because ranking systems and AI systems both evaluate organized, entity-rich content. Traditional search evaluates keyword alignment and backlink authority. AI systems evaluate semantic clarity and extractable fragments. Structured content (Schema markup, logical headings, entity definitions) strengthens eligibility across both ranking and citation layers.

Why does AI success not automatically produce ranking success? AI success does not automatically produce ranking success because traditional search requires technical SEO signals beyond semantic clarity. A December 2025 case study recorded a 9 out of 10 AI Discoverability score but only 6.5 out of 10 for traditional SEO. Missing schema markup and meta tags reduced technical compliance to 5 out of 10. Traditional search still drives 43% of traffic and 23.6% of sales, which requires direct optimization.

What is the strategic conclusion for dual optimization? Dual optimization requires aligning entity clarity with technical SEO implementation. Maintain clean Semantic HTML for AI retrieval. Maintain keyword targeting and backlink acquisition for ranking stability. Balanced execution increases visibility across SERPs and AI-generated answers simultaneously.

How Is AI Citation Visibility Measured?

AI citation visibility is measured through mentions, citations, impressions, and user actions across AI interfaces. Mentions record brand references without links. Citations record clickable source attributions. Impressions record zero-click brand exposure inside AI responses. Traditional tools (Google Search Console) do not capture these metrics.

What are the core technical metrics for tracking AI visibility? There are 4 core technical metrics for tracking AI visibility: mentions, citations, impressions, and user actions. Mentions track non-linked brand references. Citations track source links. Impressions measure interface-level exposure. User actions record clicks, expansions, and copy events.

How is AI referral traffic attributed? AI referral traffic is attributed through referrer string analysis and landing page query identification. Platforms such as Perplexity, Copilot, Gemini, and paid ChatGPT tiers pass visible referrer data, which GA4 reports as a source/medium pair such as perplexity.ai / referral. Free ChatGPT traffic appears as Direct. AI referral traffic represents 0.5% to 3% of total website traffic as of 2025.
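Referrer string analysis can be prototyped by mapping the referrer hostname to a platform. This is a minimal sketch: the hostname list is an assumption about what these platforms currently send and should be checked against your own GA4 referral reports, and the mapping and helper `classify_referrer` are hypothetical. Traffic that arrives with no referrer, such as the free ChatGPT visits described above, can only be labeled Direct.

```python
from urllib.parse import urlparse

# Assumed hostname-to-platform mapping; verify against your own analytics data.
AI_REFERRERS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}

def classify_referrer(referrer: str) -> str:
    """Map a raw referrer URL to an AI platform, 'Direct', or 'Other'."""
    if not referrer:
        return "Direct"   # no referrer string at all
    host = (urlparse(referrer).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        # Match the domain itself or any subdomain (e.g. www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return platform
    return "Other"

for ref in ["https://www.perplexity.ai/search?q=x", "", "https://www.google.com/"]:
    print(repr(ref), "->", classify_referrer(ref))
```

Matching on the parsed hostname rather than a substring avoids false positives such as a URL that merely contains "perplexity.ai" in its query string.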

What methodologies track cross-platform brand visibility? Cross-platform visibility tracking relies on multi-platform monitoring, sentiment analysis, and automated query workflows. Multi-platform monitoring evaluates presence across Gemini, ChatGPT, and Perplexity. Sentiment analysis measures positive, neutral, and negative portrayals. Automated workflows (n8n, Zapier APIs) collect prompt-level citation data at scale.

What indicators define Machine-Validated Authority (MVA)? Machine-Validated Authority (MVA) measures citation stability and retrieval frequency across assistants over time. Stable citation recurrence indicates high model confidence. Provenance signals and machine-readable structure increase retrieval probability. Direct answer formatting increases fragment selection likelihood.

How does the AI market scale influence measurement priorities? AI market scale influences measurement priorities because AI assistants process high query volumes with rapid growth trajectories. Google processes 3.5 billion searches per day. Perplexity processed 780 million queries in May 2025. AI assistants are projected to reach 1 billion daily active users by 2026.

What factors influence AI citation behavior and brand visibility? AI citation behavior depends on licensing agreements, structural formatting, and competitive benchmarking. Licensing partnerships (OpenAI with AP and Axel Springer) affect citation distribution. Manual logging records the date, assistant, prompt, and citation status of each check. Competitive gap analysis compares entity structure and authorship signals.
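A manual citation log becomes more useful once it is summarized. The sketch below stores rows with the date, assistant, prompt, and citation status fields named in the text above, then computes the share of runs in which the brand was cited per assistant. Treating that recurrence rate as a proxy for the citation stability MVA describes is an assumption, and the log rows and the helper `citation_rate_by_assistant` are hypothetical.

```python
from collections import defaultdict
from datetime import date

# Hypothetical log: the same prompt checked repeatedly in two assistants.
log = [
    {"date": date(2025, 6, 2), "assistant": "ChatGPT", "prompt": "best crm for startups", "cited": True},
    {"date": date(2025, 6, 9), "assistant": "ChatGPT", "prompt": "best crm for startups", "cited": False},
    {"date": date(2025, 6, 16), "assistant": "ChatGPT", "prompt": "best crm for startups", "cited": True},
    {"date": date(2025, 6, 2), "assistant": "Perplexity", "prompt": "best crm for startups", "cited": True},
    {"date": date(2025, 6, 9), "assistant": "Perplexity", "prompt": "best crm for startups", "cited": True},
]

def citation_rate_by_assistant(rows: list[dict]) -> dict[str, float]:
    """Share of logged runs in which the brand was cited, per assistant."""
    hits: dict[str, int] = defaultdict(int)
    runs: dict[str, int] = defaultdict(int)
    for row in rows:
        runs[row["assistant"]] += 1
        hits[row["assistant"]] += int(row["cited"])
    return {assistant: hits[assistant] / runs[assistant] for assistant in runs}

print(citation_rate_by_assistant(log))
```

A rate near 1.0 across repeated runs of the same prompt suggests stable inclusion; a rate that swings from week to week reflects the volatility AI citations show compared with rankings.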

The measurement categories are listed below.

| Metric Category | Key Data Points | Strategic Focus |
|---|---|---|
| Traffic Volume | 0.5% to 3% of total traffic. | Identify the direct traffic gap. |
| Query Scale | 10 billion annual queries (Perplexity). | Prepare for a projected 1 billion daily AI assistant users by 2026. |
| Engagement | Clicks, expansions, copies. | Shift evaluation from ranking to retrieval. |
| Authority | MVA stability and frequency. | Reinforce machine-readable structure and provenance. |

Which Tools Track Traditional Search Rankings and AI Citation Visibility?

The primary tools that track traditional search rankings and AI citation visibility are Search Atlas, SE Ranking, Ahrefs Brand Radar, Writesonic GEO, SEMrush, and Morningscore. These platforms combine SERP position tracking with AI citation monitoring across Google AI Overviews and Large Language Models. Pricing ranges from $69 to $399 per month, depending on feature depth and enterprise requirements.

The tools are listed below.

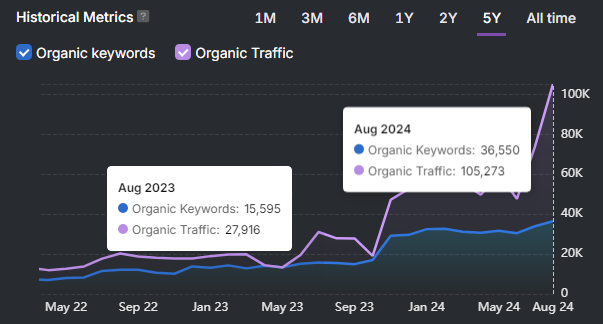

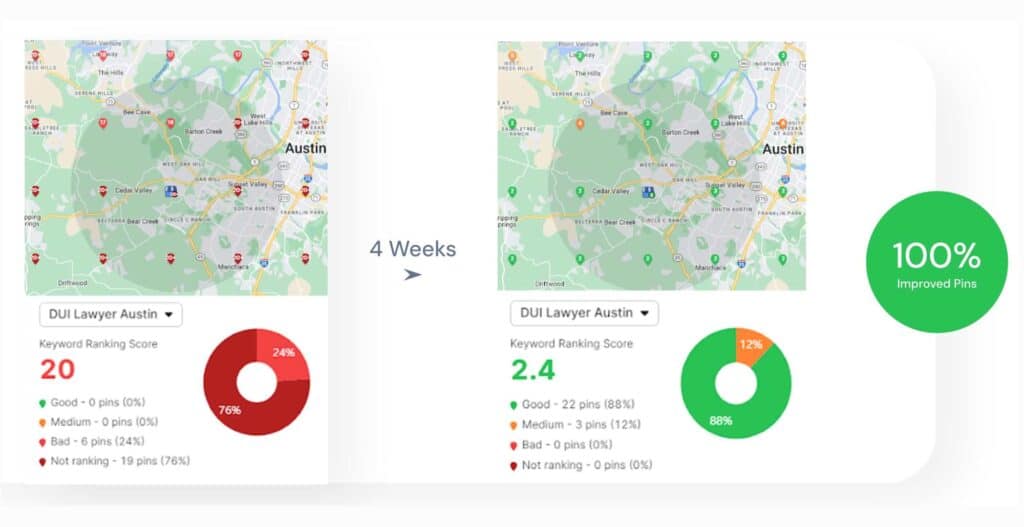

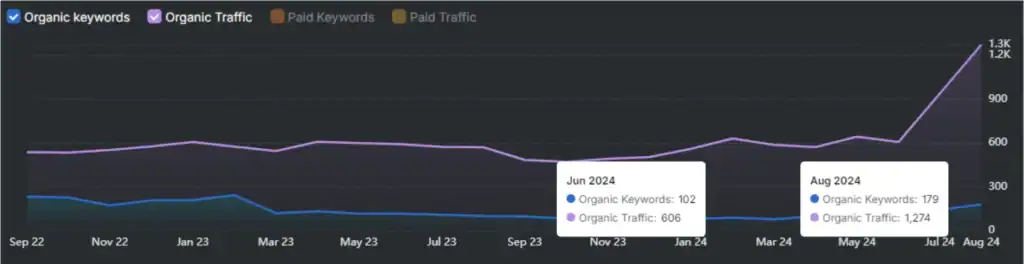

- Search Atlas. Search Atlas leads as an AI-powered SEO platform that centralizes keyword research, LLM visibility tracking, content optimization, technical audits, and backlink analysis in one unified system. Search Atlas tracks rankings across ChatGPT and Google AI Overviews while managing traditional keyword positions across 5.2 billion keywords. Search Atlas integrates directly with Google Search Console, Google Analytics 4, and Google Business Profile for real-time reporting accuracy. OTTO, the proprietary AI engine, automates technical, on-page, and off-page workflows to eliminate fragmented tool stacks. Pricing starts at $99 per month for 2,000 tracked keywords and 2 seats, with Growth at $199 and Pro at $399. A 7-day free trial provides full feature access without credit card requirements.

- SE Ranking (SE Visible). SE Ranking tracks ChatGPT, Perplexity, and Gemini citation visibility. SE Ranking combines historical ranking datasets with AI citation monitoring. The starting price is $189 per month.

- Ahrefs Brand Radar. Ahrefs Brand Radar monitors six prompt indexes and AI citation frequency. Ahrefs Brand Radar tracks Reddit mentions and YouTube mentions alongside backlink analysis. The starting price is $129 per month, with a possible $199 add-on.

- Writesonic (GEO). Writesonic GEO provides an AI Visitors dashboard that detects AI crawler traffic. Writesonic GEO integrates citation visibility with traditional SEO issue monitoring. The starting price is approximately $249 per month.

- SEMrush. SEMrush monitors Google AI Overviews and ChatGPT citation patterns. SEMrush integrates AI monitoring into organic SEO and PPC reporting stacks. Pricing varies by subscription tier.

- Morningscore. Morningscore tracks Google AI Overview triggers for small and mid-sized businesses. Morningscore integrates AI citation tracking into standard SEO performance dashboards. The starting price is $69 per month.

The platform comparison is shown below.

| Platform | Key AI Features | Traditional SEO Integration | Starting Price |
|---|---|---|---|
| Search Atlas | Tracks ChatGPT and AI Overviews, entity visibility scoring, and OTTO AI automation. | Keyword tracking (5.2B keywords), technical audits, backlink analysis, GSC, and GA4 integration. | $99/mo Starter, $199/mo Growth, $399/mo Pro. |
| SE Ranking | Tracks ChatGPT, Perplexity, and Gemini citations. | 13+ years of experience integrating historical ranking data. | $189/mo. |
| Ahrefs Brand Radar | Monitors six prompt indexes and citation frequency. | Backlink tracking, Reddit mentions, and YouTube mentions. | $129/mo (+$199 add-on possible). |
| Writesonic (GEO) | AI crawler detection dashboard. | SEO issue monitoring in Action Center. | ~$249/mo. |
| SEMrush | Tracks Google AI Overviews and ChatGPT citations. | Integrates SEO and PPC analytics. | Not specified. |
| Morningscore | Tracks Google AI Overview triggers. | AI metrics inside SEO dashboards. | $69/mo. |

These tools establish a unified measurement framework across traditional search rankings and AI citation visibility. Search Atlas leads for agencies and enterprises that require centralized automation, real-time Google data integration, and combined SERP plus LLM visibility tracking.

How To Optimize Content To Be Cited By AI?

The core methods to optimize content to be cited by AI are to write trophy content, place the answer upfront, align with vector chunks, implement Schema markup, reinforce entity authority, and remove extraction barriers. AI search optimization aligns structure and metadata with LLM ingestion patterns. AI referrals reached 1.13 billion monthly visits in 2025 and increased 357% year-over-year.

The methods are listed below.

- Write Trophy Content. Trophy Content is high-precision, entity-rich content that defines a topic better than competing pages. Trophy Content includes measurable facts, clear definitions, and structured explanations. Trophy Content increases retrieval probability because AI systems select the most authoritative fragment per query.

- Place the Answer Upfront. Place a direct factual answer within the first 50 to 70 words. The upfront answer increases first-pass retrieval eligibility. The upfront answer increases selection confidence during Query Fan Out processing.

- Align with Vector Chunk Size. Structure content into 150- to 300-word primary chunks. Chunk alignment improves embedding precision inside vector databases. Smaller logical sections increase fragment-level eligibility.

- Implement JSON-LD Schema Markup. Add FAQ, HowTo, Product, and Organization schema in JSON-LD format. Schema markup increases machine-readable clarity. Schema markup strengthens entity mapping and intent matching.

- Reinforce Entity Authority Signals. Define who, what, and where using explicit entity references. Include expert credentials and measurable data points (for example, a 42 dB dishwasher or a 25% traffic increase). Update statistics regularly to maintain freshness scoring.

- Remove Extraction Barriers. Replace walls of text with scannable headings and concise paragraphs. Remove unanchored claims that lack evidence. Ensure critical data appears in HTML instead of PDFs or images.
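The chunk-size and answer-placement guidance above can be checked mechanically before publishing. The Python sketch below is a minimal illustration, assuming paragraphs are separated by blank lines and that a "word" is a whitespace-separated token for chunking (and a `\w+` token for the answer check). The helpers `chunk_by_words` and `answer_in_first_words` are hypothetical, not part of any SEO tool, and real retrieval systems may tokenize and chunk content differently.

```python
import re


def chunk_by_words(text: str, max_words: int = 300) -> list[str]:
    """Greedily pack blank-line-separated paragraphs into chunks of at most max_words words.

    Paragraphs longer than max_words are split on word boundaries. Chunks are
    returned as space-joined word strings, so paragraph breaks inside a chunk
    are not preserved.
    """
    chunks: list[str] = []
    current: list[str] = []
    for para in re.split(r"\n\s*\n", text):
        words = para.split()
        for start in range(0, len(words), max_words):
            piece = words[start:start + max_words]
            # Close the current chunk if adding this piece would exceed the limit.
            if current and len(current) + len(piece) > max_words:
                chunks.append(" ".join(current))
                current = []
            current.extend(piece)
    if current:
        chunks.append(" ".join(current))
    return chunks


def answer_in_first_words(text: str, answer: str, limit: int = 70) -> bool:
    """Return True if the answer phrase appears within the first `limit` words.

    Both strings are lowercased and reduced to word tokens, so punctuation
    differences ("Yes," vs "yes") do not affect the match.
    """
    head = re.findall(r"\w+", text.lower())[:limit]
    phrase = re.findall(r"\w+", answer.lower())
    # Pad with spaces so the phrase only matches on whole-word boundaries.
    return bool(phrase) and f" {' '.join(phrase)} " in f" {' '.join(head)} "


if __name__ == "__main__":
    doc = "Yes, a page can be cited without ranking in the top 10.\n\n" + "word " * 400
    print([len(chunk.split()) for chunk in chunk_by_words(doc)])  # [12, 300, 100]
    print(answer_in_first_words(doc, "can be cited without ranking"))  # True
```

Because the packing is greedy and never exceeds `max_words`, a short paragraph followed by a long one can end up as its own undersized chunk, as the 12-word chunk in the usage example shows. Merging chunks toward the 150-word floor is omitted to keep the sketch short.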

What measurable performance signals confirm optimization impact? Optimization impact appears in referral growth, citation frequency, and retrieval visibility metrics. The performance categories are shown below.

| Optimization Category | Key Technical Requirement | Measurable Impact Metric |
|---|---|---|
| Content Structure | 150- to 300-word primary vector chunks. | 357% year-over-year referral growth. |
| Technical SEO | JSON-LD Schema (FAQ, HowTo). | 1.13 billion monthly AI visits. |
| Data Precision | Measurable facts (42 dB, 25%). | Higher E-E-A-T confidence scoring. |
| Distribution | Reddit, LinkedIn seeding. | Increased LLM crawling frequency. |
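The Technical SEO row refers to JSON-LD schema markup. As a hedged sketch, the hypothetical helper `faq_jsonld` below serializes question and answer pairs into a schema.org `FAQPage` object (using the `Question`, `acceptedAnswer`, and `Answer` types) and wraps it in a `<script type="application/ld+json">` tag. Markup only makes the content machine-readable; this sketch does not show whether any particular search engine or LLM uses it for citation.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD script tag from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # "<\/" is a valid JSON escape for "</" and stops answer text such as
    # "</script>" from closing the surrounding script tag early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(faq_jsonld([
        ("Can a page get cited even if it doesn't rank high?",
         "Yes. Citation and ranking operate as separate evaluation systems."),
    ]))
```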

Optimization for AI citation requires structural clarity, entity precision, and technical compliance. Structured execution increases the probability of inclusion across ChatGPT, Gemini, Microsoft Copilot, and Google AI Overviews.

Can a Page Get Cited Even If It Doesn’t Rank High?

Yes, a page can be cited even if it does not rank high because citation and ranking operate as separate evaluation systems. AI citation selection evaluates fragment relevance and entity alignment instead of page position. 59.6% of AI citations originate from pages outside the top 20 search results. Citation eligibility depends on semantic alignment, not numeric rank.

Why does ranking position not guarantee citation inclusion? Ranking position does not guarantee citation inclusion because AI systems retrieve passages rather than ordering full documents. A page ranking #1 has only a 25% probability of being cited in the AI Overview. AI systems select fragments based on contextual alignment and authority validation. High rank increases discovery probability but does not control inclusion in the synthesized answer.

What technical condition must exist for citation eligibility? Citation eligibility requires indexation and crawl accessibility. Pages must appear in the search index to enter AI retrieval pipelines. XML sitemap inclusion and a clean HTML structure maintain accessibility. Fragment clarity increases retrieval probability even when domain authority remains lower than that of competitors.
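Crawl accessibility can be spot-checked against a site's robots.txt. The sketch below uses Python's standard `urllib.robotparser` module; the robots.txt content, the URLs, the helper name `crawl_access`, and the user-agent tokens (`Googlebot` and OpenAI's `GPTBot`) are illustrative assumptions. Passing this check only means the rules permit crawling; it does not confirm that a page is indexed.

```python
from urllib.robotparser import RobotFileParser


def crawl_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Report, per user agent, whether the robots.txt rules permit fetching url."""
    parser = RobotFileParser()
    # parse() accepts an iterable of lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Illustrative robots.txt: blocks GPTBot everywhere and blocks all other agents from /private/.
EXAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

if __name__ == "__main__":
    print(crawl_access(EXAMPLE_ROBOTS_TXT, "https://example.com/blog/post", ["Googlebot", "GPTBot"]))
    # {'Googlebot': True, 'GPTBot': False}
```

A page blocked for a given crawler in this way cannot be fetched by that crawler, regardless of how well its fragments are written.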

Citation and ranking function as correlated but independent mechanisms. High rank improves exposure. Fragment precision determines citation inclusion.

Are Google AI Overviews the Same As Featured Snippets?

No, Google AI Overviews are not the same as Featured Snippets because AI Overviews synthesize multiple sources while Featured Snippets extract content from a single source. Featured Snippets display direct excerpts in a defined box. AI Overviews generate multi-source summaries using Gemini 2.0.

What factual performance differences separate AI Overviews and Featured Snippets? Featured Snippets maintain higher click-through rates than AI Overviews. Featured Snippets average a 42.9% CTR. AI Overviews generate approximately 1.08% organic CTR. Featured Snippets appear for 12.29% of queries. AI Overviews appear in over 50% of queries as of 2025.
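The CTR gap is easier to see as expected clicks, computed as impressions × CTR. The Python sketch below applies the average CTRs quoted above to a hypothetical 10,000 impressions; `expected_clicks` is an illustrative helper, and real CTRs vary by query, position, and device.

```python
def expected_clicks(impressions: int, ctr_percent: float) -> int:
    """Expected clicks = impressions x CTR, rounded to the nearest whole click."""
    return round(impressions * ctr_percent / 100)


if __name__ == "__main__":
    impressions = 10_000  # hypothetical impressions for a single query
    snippet = expected_clicks(impressions, 42.9)   # Featured Snippet average CTR
    overview = expected_clicks(impressions, 1.08)  # AI Overview organic CTR
    print(snippet, overview)  # 4290 108
```

At these averages, the Featured Snippet yields roughly 40 times as many clicks (4,290 / 108 ≈ 39.7).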

How do source mechanics differ between the two formats? Featured Snippets extract verbatim text from one page, while AI Overviews cite multiple sources inside a synthesized response. AI Overviews display an average of 4 links when expanded. Featured Snippets rely on a single authoritative document.

What strategic implication follows from this difference? Featured Snippets prioritize traffic acquisition, while AI Overviews prioritize embedded attribution and brand presence. Featured Snippets remain critical for industries with a high volume of definition-based queries. AI Overviews dominate in research-heavy sectors such as healthcare. The two formats differ in structure, visibility, and performance impact.

Do AI Citations Drive Traffic?

No, AI citations do not directly drive significant traffic volume because citation frequency shows almost no correlation with a domain's website visits. Statistical analysis reports an r-value of 0.02 between a domain's traffic and its AI citation frequency. A domain with 16 visits received 12,552 citations. A domain with 15 billion visits received fewer citations than that low-traffic source.

Why does traffic volume not predict AI citation visibility? Traffic volume does not predict AI citation visibility because AI systems evaluate entity authority and fragment quality instead of session counts. Correlation between traffic and unique referencing domains equals r = 0.14. Citation selection depends on semantic relevance and primary source strength. AI systems prioritize original research and proprietary data over high-traffic aggregation pages.

What factor correlates strongly with AI citation frequency? Unique referring domains correlate strongly with AI citation frequency (r = 0.71). Source diversity increases entity authority recognition. Structured content and factual precision increase retrieval probability. Citation inclusion reflects authority alignment rather than popularity metrics.
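The r-values above are correlation coefficients. For readers who want to run the same kind of check on their own domain data, a minimal Python sketch of Pearson's r follows. The source reports its r-values without describing its method or dataset, so this illustrates the statistic rather than reproducing that analysis. The helper `pearson_r` and all sample figures are hypothetical. A high r indicates a linear association, not that referring domains cause citations.

```python
from math import sqrt


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance and variance sums (the 1/n factors cancel in the ratio).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / sqrt(var_x * var_y)


if __name__ == "__main__":
    # Hypothetical per-domain figures, for illustration only.
    monthly_visits = [40, 2_000, 50_000, 1_200_000, 9_000_000]
    referring_domains = [850, 40, 300, 150, 600]
    ai_citations = [9_000, 310, 2_100, 1_050, 4_800]
    print(f"visits vs citations: r = {pearson_r(monthly_visits, ai_citations):.2f}")
    print(f"referring domains vs citations: r = {pearson_r(referring_domains, ai_citations):.2f}")
```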

AI citations influence brand exposure inside AI answers. AI citations do not guarantee proportional referral traffic growth.

Will AI Citations Replace Traditional Search Rankings?

No, AI citations will not fully replace traditional search rankings because both systems depend on shared web infrastructure and authority signals. 44% of users identify AI-powered search as their primary source of insight. 31% still rely on traditional search. 9% rely on brand websites directly.

Why does AI search represent structural change without full replacement? AI search represents a structural change because AI summaries are projected to appear in over 75% of Google searches by 2028. AI search influences purchasing decisions for 40% to 55% of consumers in electronics and wellness sectors. AI interfaces alter discovery pathways and reduce browse-and-click behavior.

Why does traditional search remain foundational? Traditional search remains foundational because AI models retrieve information from existing indexed web content. 76% of AI Overview citations originate from the top 10 organic results. High-quality backlinks remain credibility signals in ranking systems. Traditional search drives 43% of traffic and 23.6% of sales.

What risk exists for brands ignoring AI citations? Brands ignoring AI citations face potential traffic declines of 20% to 50% as decision-making shifts toward AI interfaces. Only 16% of brands track AI search performance systematically. AI citation inclusion determines visibility inside generative environments. Traditional ranking remains essential for traffic stability.

AI citations reshape visibility. Traditional search rankings remain the core traffic engine. Both systems operate in parallel rather than in replacement.