AI search engines are systems that apply artificial intelligence to understand intent, synthesize information, and deliver direct answers instead of ranked lists of links. An AI search engine uses large language models (LLMs), semantic search, and real-time retrieval to process natural language, long prompts, and multimodal inputs. AI search is primarily used to reduce friction in discovery, handle complex research, and guide decisions.

The importance of AI-powered search engines comes from how they reshape information delivery, user behavior, and visibility. Artificial intelligence in search engines consolidates answers, enables conversational follow-ups, and personalizes results based on context. As a result, AI searching increasingly acts as a primary source of insight for consumers, professionals, and enterprises.

Modern AI search combines distinct features with clear advantages and limitations. Key capabilities of AI search include retrieval-augmented generation, vector representations, real-time updates, and multimodal understanding. These features improve speed and comprehension, but limitations persist around hallucinations, citation reliability, data freshness, and reduced traffic to original sources.

Different types of AI search engines serve different purposes and signal where AI search is heading. General, enterprise, academic, privacy-first, and developer-focused AI systems differ from traditional search by prioritizing synthesis over navigation. Looking ahead, AI search engines are moving toward agentic, personalized, and multimodal experiences that will progressively redefine how search works.

What Is AI Search?

AI search is a discovery and retrieval model that uses artificial intelligence to interpret user intent, understand context, and generate direct, plain-language answers instead of returning ranked lists of URLs. AI search integrates Natural Language Processing (NLP), Machine Learning (ML), and Large Language Models (LLMs) to analyze semantics, nuance, and conversational meaning. AI search matters because modern users expect answers, summaries, and explanations within the interface rather than manual navigation across links. AI search functions by interpreting full queries as language problems and resolving them through synthesis rather than keyword matching.

How does AI search differ from keyword-based retrieval? AI search differs from traditional keyword-based retrieval because AI search evaluates conceptual meaning and context instead of matching literal text strings. AI search engines process entire sentences simultaneously using transformer models such as GPT and BERT, which allows AI search to understand word relationships across a query. AI search relies on vector embeddings that convert text into high-dimensional numerical representations, which enables retrieval based on semantic similarity. This mechanism allows AI search to return accurate answers even when exact keywords do not appear in source content.
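As a rough illustration of retrieval by semantic similarity, the sketch below ranks documents by cosine similarity between vectors. The three-dimensional vectors, the document titles, and the `semantic_search` helper are hypothetical stand-ins; a production system would obtain embeddings with hundreds or thousands of dimensions from a trained model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, invented for illustration only.
documents = {
    "How to fix a flat bicycle tire": [0.9, 0.1, 0.0],
    "Best pasta recipes for beginners": [0.0, 0.2, 0.9],
    "Repairing a punctured bike wheel": [0.8, 0.3, 0.1],
}

# Made-up embedding for the query "mend a cycle puncture",
# which shares no exact keyword with any document title.
query_vector = [0.85, 0.2, 0.05]

def semantic_search(query_vec, docs, top_k=2):
    """Return the top_k document titles ordered by similarity to the query."""
    scored = [(cosine_similarity(query_vec, vec), title) for title, vec in docs.items()]
    scored.sort(reverse=True)
    return [title for _, title in scored[:top_k]]

results = semantic_search(query_vector, documents)
print(results)
```

Both bicycle-repair titles rank above the recipe title even though the query wording does not literally appear in them, which is the behavior the paragraph above describes.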

What technical mechanisms define AI search behavior? AI search operates through architectures that combine retrieval and generation to produce grounded answers. AI search commonly uses Retrieval-Augmented Generation (RAG) to connect LLM generation with external knowledge bases, which improves factual accuracy and citation eligibility. AI search applies nearest neighbor algorithms to identify relevant information within vector spaces and distributed indexing to manage structured and unstructured data together. These mechanisms matter because they allow AI search to scale, remain context-aware, and enable real-time data integration through APIs for volatile information such as weather and financial markets.
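The retrieval half of a RAG pipeline can be sketched as follows, assuming a tiny in-memory knowledge base. The `retrieve` and `build_grounded_prompt` helpers, the source names, and the passages are all hypothetical, and the word-overlap scoring is a simple stand-in for vector search. The call to a language model is left out on purpose; the sketch only shows how retrieved passages become numbered, citable context.

```python
def retrieve(query, knowledge_base, top_k=2):
    """Stand-in retriever: rank passages by words shared with the query."""
    query_words = set(query.lower().split())
    scored = []
    for source, passage in knowledge_base.items():
        overlap = len(query_words & set(passage.lower().split()))
        if overlap:
            scored.append((overlap, source, passage))
    # Highest overlap first; ties keep their original order.
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(source, passage) for _, source, passage in scored[:top_k]]

def build_grounded_prompt(query, passages):
    """Number each retrieved passage so the model can cite it."""
    numbered = "\n".join(
        f"[{i}] ({source}) {text}"
        for i, (source, text) in enumerate(passages, start=1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"Sources:\n{numbered}\n\nQuestion: {query}\nAnswer:"
    )

# Hypothetical knowledge base with invented content.
knowledge_base = {
    "weather-api": "Current weather in Oslo is 4 degrees with light rain",
    "finance-feed": "The stock index closed 1.2 percent higher today",
    "travel-blog": "Oslo has many museums and a popular opera house",
}

query = "current weather in Oslo"
passages = retrieve(query, knowledge_base)
prompt = build_grounded_prompt(query, passages)
print(prompt)
```

Numbering the sources in the prompt is one common way to make citations possible, because the generated answer can then refer back to `[1]` or `[2]`.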

What experience does AI search provide to users? AI search provides an answer-first, conversational user experience that reduces manual exploration and enables iterative questioning. AI search engines generate synthesized answers at the top of the results interface, maintain conversational context across follow-up queries, and enable multimodal inputs such as text, voice, and images. AI search emphasizes citations and verification by linking sources that validate generated summaries, which allows users to assess accuracy and reliability.

What Are AI Search Engines?

AI search engines are advanced retrieval platforms that combine Natural Language Processing (NLP), Machine Learning (ML), and Large Language Models (LLMs) to interpret user intent and generate direct, conversational answers instead of ranked link lists. AI search engines analyze context, semantics, and nuance to resolve questions in plain language. AI search engines matter because they shift discovery from navigation to answer consumption, which aligns with how users research, compare, and decide inside AI-mediated interfaces.

What foundational technologies define AI search engines? AI search engines operate on transformer-based language models and semantic retrieval systems that process full sentences to understand word relationships. Transformer models such as GPT and BERT enable parallel sentence processing, which allows AI search engines to capture contextual meaning across an entire query. Vector embeddings convert content into high-dimensional numerical arrays, which allows AI search engines to retrieve information based on conceptual similarity rather than literal keyword matches.

How do AI search engines retrieve and generate accurate answers? AI search engines use hybrid architectures that combine retrieval with generation to synthesize grounded responses. Retrieval-Augmented Generation (RAG) connects LLM output to external knowledge bases so AI search engines produce cited, up-to-date summaries. Nearest neighbor algorithms identify relevant information by measuring proximity inside vector spaces, while distributed indexing merges inverted indexes with vector search to manage structured and unstructured data at scale. These mechanisms matter because they increase precision, reduce hallucination risk, and enable real-time data integration through APIs.
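One way to picture how an inverted index and vector search can be merged is the sketch below. It builds a keyword ranking and a vector ranking separately, then combines them with reciprocal rank fusion. The fusion method is an assumption chosen for illustration, not something the text above specifies, and the documents, vectors, and function names are hypothetical.

```python
from collections import defaultdict

def build_inverted_index(docs):
    """Map each lowercase word to the set of document ids containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for word in text.lower().split():
            index[word].add(doc_id)
    return index

def keyword_ranking(query, index):
    """Rank documents by how many query words they contain."""
    counts = defaultdict(int)
    for word in query.lower().split():
        for doc_id in index.get(word, ()):
            counts[doc_id] += 1
    return sorted(counts, key=lambda d: (-counts[d], d))

def vector_ranking(query_vec, vectors):
    """Rank documents by dot product with the query vector."""
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))
    return sorted(vectors, key=lambda d: (-dot(query_vec, vectors[d]), d))

def reciprocal_rank_fusion(rankings, k=60):
    """Sum 1 / (k + rank) across rankings; higher totals rank first."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical documents and made-up two-dimensional embeddings.
docs = {
    "d1": "refund policy for damaged laptops",
    "d2": "how to return a broken notebook computer",
    "d3": "laptop battery care tips",
}
vectors = {"d1": [0.9, 0.1], "d2": [0.8, 0.2], "d3": [0.1, 0.9]}

by_keyword = keyword_ranking("laptop refund", build_inverted_index(docs))
by_vector = vector_ranking([1.0, 0.0], vectors)
fused = reciprocal_rank_fusion([by_keyword, by_vector])
print(fused)
```

In this toy data the keyword ranking never sees `d2`, because it shares no literal word with the query, while the vector ranking places it second. The fused list keeps both signals.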

What Are AI Search Engines Used For?

AI search engines are used to synthesize information, reduce research time, and deliver direct, cited answers to complex questions across professional and consumer contexts. AI search engines condense information from multiple sources into concise summaries, which reduces research time from over 30 minutes to 10–15 minutes per query. AI search engines matter because they replace manual link scanning with answer-first discovery that resolves intent inside a single interface.

How do AI search engines support deep research and information synthesis? AI search engines support deep research by generating long-form, multi-source reports that summarize and compare large information sets. AI search engines use Deep Research features to produce reports spanning dozens of pages and citing over 50 sources, which typically complete within 10 minutes. AI search engines answer multi-part questions that require structured outputs such as tables, comparisons, and time-based trend analysis, which improves decision speed and comprehension.

How are AI search engines used in academic and scientific investigation? AI search engines are used in academic research to identify scientific consensus, summarize studies, and rank evidence efficiently. Specialized AI search engines such as Consensus analyze databases exceeding 200 million research papers to extract one-sentence study summaries and display agreement levels using metadata such as citation count, citation velocity, and study design. AI search engines matter in research because vector-based semantic retrieval identifies relationships between studies beyond literal text matching.

How do developers and engineers use AI search engines? AI search engines are used in software development to accelerate coding, debugging, and system analysis. Developers use AI models such as Claude 3.5 to generate large code blocks, analyze hundreds of thousands of lines of code, and identify syntax or variable inconsistencies. AI search engines improve productivity by completing tasks that traditionally take 1–2 weeks within hours, although reliability varies, and human validation remains necessary.

How do AI search engines influence consumer behavior and e-commerce? AI search engines are used to guide purchasing decisions through comparison, recommendation, and zero-click discovery. Over 50% of consumers use AI search engines for product research and final buying decisions, with AI-influenced spending projected to exceed $750 billion by 2028. AI search engines perform complex product comparisons and shape brand perception through answer mentions, even when users do not visit external websites.

How are AI search engines applied in content creation and multimodal media? AI search engines are used for generating and refining text, images, audio, and video content. Platforms such as ChatGPT and Perplexity enable creative writing, image generation, and sentiment-aware editing at scale. AI search engines matter for creators because they understand full-document context and enable multimodal input, which expands creative workflows beyond text-only production.

How do professionals use AI search engines for real-time data and productivity? AI search engines are used to retrieve live information and integrate research directly into business workflows. AI search engines access real-time data such as sports schedules, news, and stock prices through APIs and integrate with productivity suites like Microsoft 365 and Google Workspace. Through automation platforms such as Zapier, AI search engines enable continuous research that routes insights into downstream business tools.

How are AI search engines used in healthcare and specialized fields? AI search engines are used for diagnosis, research acceleration, and assistive technologies in specialized domains. Healthcare applications include radiology image analysis, pharmaceutical compound testing, robotic surgery assistance, and accessibility tools for visually impaired users through vision models and text-to-speech systems. AI search engines matter in these fields because they accelerate pattern recognition and decision-making.

What risks affect how AI search engines are used? AI search engines are used cautiously when accuracy, bias, or hallucination risk carries high consequences. Documented errors, bias amplification, and outdated information generation limit use in scientific and medical decision-making without verification. AI search engines are therefore often paired with traditional search and human review, especially as they redirect an estimated 20–50% of web traffic away from traditional search engines.

Why Are AI Search Engines Important?

AI search engines are important because they reshape how information is discovered, how decisions are made, and how visibility is distributed across digital ecosystems. AI-driven search changes user behavior, reallocates traffic and revenue, and compresses research and evaluation into answer-first experiences. The 6 factors that explain why AI search engines matter are listed below.

- Mass adoption and behavioral shift. AI-powered summaries already appear in approximately 50 percent of Google searches, with projections reaching 75 percent by 2028. Half of all consumers intentionally seek AI-powered search experiences, and usage as a primary search method is expected to grow from 13 million to 90 million United States adults between 2023 and 2027. Platforms such as ChatGPT process tens of millions of search-like prompts daily, confirming mainstream adoption across age groups.

- Influence on consumer decisions and commerce. AI-driven search now plays a central role in how users research and finalize purchases. More than 70 percent of users rely on AI search during early research, while 40 to 55 percent use it to complete buying decisions in industries such as electronics, travel, and financial services. An estimated 750 billion United States dollars in revenue is expected to flow through AI-powered search platforms by 2028, driven by consolidated recommendations and zero-click discovery.

- Productivity and efficiency gains. AI search significantly reduces time spent on research, programming, and analysis. Research tasks that previously required over 30 minutes can now be completed in approximately 10 minutes through synthesis and summarization. In technical workflows, development cycles that once took 1 to 2 weeks are compressed into hours, while deep research features generate multi-source reports with over 50 citations in minutes.

- Disruption of traditional search visibility. AI-driven search redirects an estimated 20 to 50 percent of traffic away from traditional search engines, which introduces measurable risk for brands that rely on organic rankings alone. Real-world cases such as Chegg demonstrate material traffic loss following AI search adoption. Visibility now depends on being cited across diverse sources, where owned websites represent only a small fraction of referenced material.

- Advanced retrieval and synthesis capabilities. AI-powered search systems understand intent and context through semantic and vector-based retrieval rather than keyword matching. These systems break complex questions into sub-queries, scan massive documents or datasets, and synthesize findings into cohesive answers. This shift transforms search from a reactive lookup mechanism into a proactive decision-support layer.

- Strategic advantage and organizational readiness. Only a small percentage of brands currently track or optimize their presence in AI-generated answers, despite widespread belief that AI visibility will determine business success within the next 5 years. AI systems prioritize trust, consistency, and recognizable brand signals when selecting sources. Early adopters gain a disproportionate advantage because most websites stop updating their optimization signals soon after launch.

These factors explain why AI search engines represent a structural shift rather than a feature upgrade. AI-driven search now functions as a control layer for visibility, authority, and demand in answer-first digital environments.

What Are the Core Features of AI Search Engines?

AI search engines rely on a defined set of technical and experiential features that enable intent understanding, real-time retrieval, and answer-first information delivery. These features replace keyword-based navigation with semantic interpretation, synthesis, and conversational interaction. The 5 core features listed below explain how AI search engines retrieve, process, and present information at scale.

- Vector representations and semantic search. AI search engines represent queries and documents as high-dimensional vectors that capture conceptual meaning. Semantic and vector-based retrieval allow AI search engines to match intent and context rather than exact phrasing. This capability enables accurate responses to multi-part questions and enables query fan-out across related subtopics.

- Transformer models and Large Language Models (LLMs). Transformer models such as GPT and BERT process entire sentences in parallel to identify relationships between words. Large Language Models generate coherent, plain-language responses that synthesize information across many sources. This feature enables conversational summaries, multi-turn dialogue, and complex prompts that are significantly longer than traditional keyword queries.

- Retrieval-Augmented Generation (RAG). Retrieval-Augmented Generation connects language model output to external documents, indexes, and live data sources. RAG grounds responses in verifiable material, which reduces hallucinations and enables footnoted citations. This feature allows AI search engines to analyze large documents, books, and repositories when answering specific questions.

- Real-time updates and live web access. AI search engines access live information through APIs, crawling systems, and data feeds. Real-time retrieval enables accurate answers for volatile topics such as news, financial markets, and live events. This capability distinguishes AI search engines from static chatbots that rely on training snapshots.

- Answer-first synthesis and zero-click delivery. AI search engines prioritize synthesized answers over ranked link lists. Conversational summaries condense information from multiple sources into a single response, which results in zero-click outcomes for a large share of searches. Source citations remain available for verification, even though most users do not click through to original pages.

These features explain why AI search engines function as synthesis-driven discovery systems rather than traditional retrieval tools. AI search engines combine semantic understanding, generative models, live retrieval, and direct answers to reshape how information is accessed and used.
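Query fan-out, mentioned in the first feature above, can be sketched as splitting a compound question into sub-queries, searching each one, and merging the results without duplicates. The decomposition here simply splits on the word "and", which is a deliberate simplification; real systems would more plausibly use a language model for this step. The corpus and all function names are hypothetical.

```python
def fan_out(question):
    """Stand-in decomposition: split a compound question on ' and '."""
    parts = [part.strip(" ?") for part in question.split(" and ")]
    return [part for part in parts if part]

def search(sub_query, corpus):
    """Return ids of documents sharing at least one word with the sub-query."""
    words = set(sub_query.lower().split())
    return [
        doc_id
        for doc_id, text in corpus.items()
        if words & set(text.lower().split())
    ]

def fan_out_search(question, corpus):
    """Run every sub-query and merge results, keeping first-seen order."""
    merged = []
    for sub_query in fan_out(question):
        for doc_id in search(sub_query, corpus):
            if doc_id not in merged:
                merged.append(doc_id)
    return merged

# Hypothetical product corpus with invented content.
corpus = {
    "pricing": "plans start with a free tier",
    "battery": "battery lasts ten hours",
    "camera": "camera has a wide lens",
}

sub_queries = fan_out("battery life and camera quality?")
merged_results = fan_out_search("battery life and camera quality?", corpus)
print(sub_queries, merged_results)
```

Each sub-query retrieves its own evidence, so a single answer can draw on documents that no one sub-query would have surfaced alone.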

What Are the Main Advantages of Using AI Search Engines?

AI search engines offer measurable advantages in speed, accuracy, personalization, and economic impact compared to traditional keyword-based search systems. These advantages emerge from AI-driven synthesis, intent understanding, and real-time retrieval that compress effort while expanding output quality. The key advantages of using AI search engines are listed below.

- Time efficiency and productivity recovery. AI-powered search completes complex research tasks in half the time required by traditional engines while achieving higher accuracy. Information retrieval that previously took 10 minutes or more is reduced to seconds or minutes through automated synthesis. Employees spend an average of 1.8 hours per day searching for information, and AI search consolidates this effort into a single interface that recovers lost productivity.

- Higher user satisfaction and adoption rates. User studies show that 82 percent of users find AI-generated search results more useful than keyword-based results. Preference data indicates that 44 percent of users identify AI search as their primary source of insight, exceeding traditional search, brand websites, and review platforms. Adoption continues to accelerate, with primary AI search usage projected to grow from 13 million users in 2023 to 90 million by 2027.

- Improved decision-making and purchase influence. AI search engines cover the full decision journey by aggregating comparisons, reviews, and specifications into unified answers. Over 70 percent of users rely on AI search for early-stage research, while 40 to 55 percent use AI-generated results to finalize purchases in sectors such as electronics, travel, grocery, and wellness. This consolidation reduces friction and increases confidence during high-impact decisions.

- Economic and financial impact. AI-driven search contributes directly to revenue growth and cost reduction. Projections estimate that AI-powered search will drive approximately 750 billion United States dollars in revenue by 2028 and contribute 15.7 trillion dollars to the global economy by 2030. Inefficient information retrieval costs a 1,000-employee organization up to 2.5 million dollars per year, and AI search mitigates these losses through faster access to answers.

- Accuracy through advanced retrieval methods. AI search engines use vector embeddings and semantic retrieval to match queries based on conceptual meaning rather than literal keywords. Retrieval-Augmented Generation connects language models with real-time data sources to provide coherent, data-backed answers. These systems handle vague, multi-faceted, or incomplete queries more effectively than traditional search mechanisms.

- Personalization and user experience improvements. AI search tailors results based on immediate conversational context, location, device type, and specific constraints such as budget or goals. Multimodal interaction allows users to search using text, voice, images, and video. Concise, ad-free summaries with citations improve clarity while preserving verification options.

- Strategic advantages across industries. AI search delivers sector-specific benefits by adapting retrieval and synthesis to specialized workflows. Financial services use AI search for fraud detection and risk analysis, healthcare organizations accelerate diagnosis and treatment planning, e-commerce platforms increase conversion through personalized recommendations, and cybersecurity teams identify threats through large-scale pattern monitoring.

These advantages explain why AI search engines outperform traditional search across efficiency, satisfaction, and economic value. AI-driven search systems compress time, expand insight, and influence outcomes across consumer and enterprise environments.

What Are the Key Challenges and Limitations of Using AI Search Engines?

AI search engines introduce accuracy, bias, privacy, technical, environmental, legal, and ethical challenges that limit reliability and safe adoption at scale. These limitations affect trust, governance, and long-term value realization. The key challenges and limitations listed below describe where AI search engines fall short and why human oversight remains necessary.

- Accuracy and hallucination risk. AI systems generate responses that appear confident even when facts are incorrect or fabricated. Breaking news scenarios amplify this risk because unverified content surfaces rapidly and propagates misinformation. Expert review of primary sources remains necessary because AI lacks judgment to identify false premises or missing evidence.

- Algorithmic bias and representation gaps. Training data reflects structural imbalances across language, geography, and demographics. Only a small fraction of the world’s languages appear in model training, and Western content dominates higher education materials. Ranking systems often favor large publishers, while historical bias in data reinforces unfair outcomes in areas such as healthcare diagnostics.

- Privacy and data security exposure. AI search engines collect granular behavioral data to enable personalization and monetization. Documented incidents include unauthorized indexing of private conversations and widespread insecurity across generative AI deployments. Human-like interfaces increase disclosure risk because users share more personal information than intended.

- Opacity and technical limitations. Deep learning systems operate as black boxes, which obscures the reasoning behind outputs even for developers. Narrow task specialization limits contextual awareness and adaptability in dynamic environments. Adversarial attacks manipulate inputs to mislead results and compromise system integrity.

- Cognitive dependence and skill erosion. Heavy reliance on AI-generated answers correlates with reduced critical thinking and decision-making capacity. Constant exposure to synthesized outputs degrades independent analysis and encourages passive consumption of information.

- Environmental and resource costs. Model training and inference consume significant energy and water resources. Large language model training emits substantial carbon dioxide, while routine query handling requires continuous cooling and infrastructure scaling. These costs challenge sustainability at global adoption levels.

- Legal, regulatory, and financial barriers. Regulation lags technological deployment, creating uncertainty around data protection and copyright ownership. High upfront costs restrict access for small and medium-sized enterprises and widen the global digital divide. Many organizations fail to realize value due to limited expertise and unclear strategy.

- Social and ethical concerns. Automation threatens job stability in clerical and customer service roles. Child safety, consent, and misuse risks persist across consumer-facing systems. Industry leaders continue to debate existential risk as system capabilities scale faster than governance frameworks.

These challenges show that AI search engines require governance, transparency, and human validation to operate safely and effectively. Responsible adoption depends on striking a balance between efficiency gains and considerations of accuracy, equity, security, and long-term societal impact.

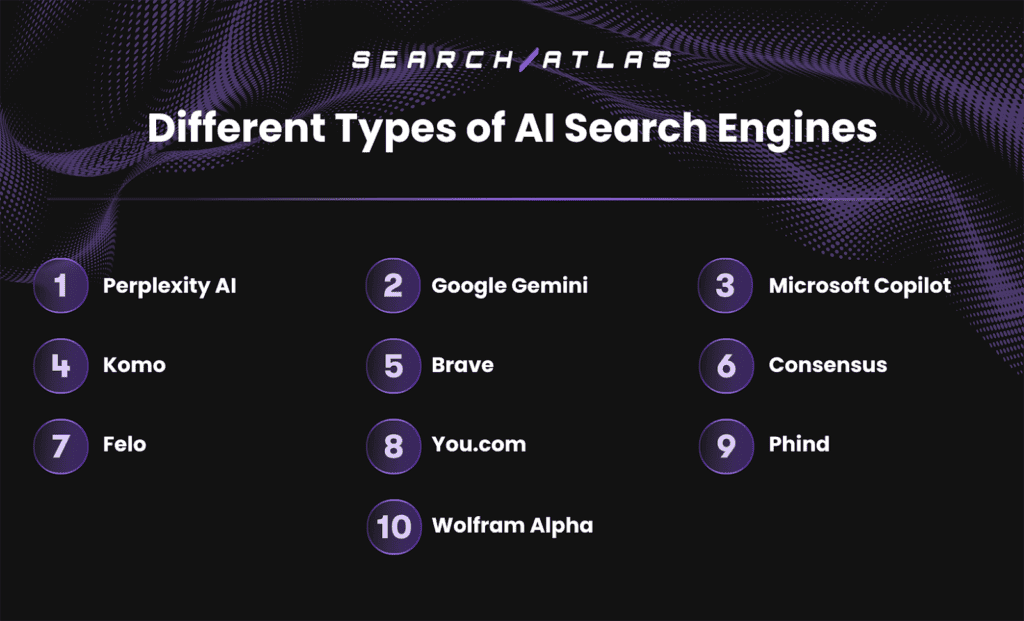

What Are the Different Types of AI Search Engines?

AI search engines are categorized by how they retrieve information, the data sources they prioritize, and the primary use cases they optimize for. These types range from general-purpose conversational engines to highly specialized research, privacy-first, developer-focused, or computational systems.

The 10 main types of AI search engines are listed below.

1. Perplexity AI

Perplexity AI is a generative AI-powered answer engine that delivers single, synthesized answers grounded in live web data. Perplexity AI positions itself as an answer engine rather than a traditional search engine and prioritizes accuracy, citations, and conversational clarity. Perplexity AI matters because it replaces link navigation with cited research outputs for users who increasingly expect direct, trustworthy answers instead of ranked link lists.

What problem does Perplexity AI aim to solve? Perplexity AI aims to eliminate fragmented research caused by keyword-based search and manual source scanning. Perplexity AI functions as a digital research assistant that interprets intent and contextual nuance. Perplexity AI reduces time spent opening multiple pages and synthesizing information across sources.

How does Perplexity AI work at a technical level? Perplexity AI works through sub-document retrieval and large-context grounding to generate accurate answers. Perplexity AI indexes granular snippets of approximately 5–7 tokens and retrieves roughly 130,000 tokens per query to populate the language model context window. Perplexity AI uses this retrieval-first approach to reduce hallucinations and ensure responses reflect current web content.
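The idea of filling a context window from many small retrieved snippets can be sketched as a greedy packing step under a token budget. This is an illustrative assumption about how such packing might work, not a description of Perplexity AI's actual implementation. The `pack_context` helper, the relevance scores, and the whitespace-based token count are hypothetical, and the budget is kept tiny for readability rather than the roughly 130,000 tokens cited above.

```python
def pack_context(snippets, token_budget):
    """Greedily add the highest-scoring snippets that still fit the budget.

    snippets: list of (relevance_score, text) pairs.
    Returns the chosen texts and the number of tokens used.
    """
    packed, used = [], 0
    for _, text in sorted(snippets, key=lambda s: s[0], reverse=True):
        cost = len(text.split())  # crude whitespace token count (assumption)
        if used + cost > token_budget:
            continue  # skip snippets that would overflow the window
        packed.append(text)
        used += cost
    return packed, used

# Hypothetical retrieved snippets with made-up relevance scores.
snippets = [
    (0.91, "tire pressure guide"),
    (0.40, "a long passage about something else entirely"),
    (0.75, "patch kits seal small punctures"),
]

packed, used = pack_context(snippets, token_budget=8)
print(packed, used)
```

Ranking before packing means the most relevant snippets claim the window first, and lower-scoring material is dropped once the budget is spent.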

What key features differentiate Perplexity AI from other AI search engines? Perplexity AI differentiates through automated research depth, focus-based retrieval, and vertical-specific tools, which are listed below.

- Deep Research, which executes dozens of searches and synthesizes hundreds of sources into reports within 2–4 minutes.

- Focus Modes, which constrain retrieval to Academic, Writing, Coding, or General contexts.

- Internal document search, which allows indexing and querying of up to 500 uploaded files.

- Vertical tools, including finance data access, shopping comparisons, and the Comet Chromium-based browser for integrated workflows.

How does Perplexity AI handle data sources, citations, and trust? Perplexity AI emphasizes transparency through numbered citations and a dedicated Sources panel. Perplexity AI averages 5.01 citations per standard query and exceeds 10 sources in Pro Search and Deep Research modes. Perplexity AI prioritizes authoritative domains with an average source age of 14 years and frequently references YouTube transcripts as structured evidence.

What are the main strengths and limitations of Perplexity AI? Perplexity AI delivers high-speed, citation-rich answers but faces legal and consistency challenges. Strengths include real-time retrieval, conversational continuity, and dense sourcing. Limitations include publisher lawsuits, conflicting disclosures about model usage, valuation discrepancies, and debate over Perplexity AI's classification as an answer engine rather than a search engine.

How is Perplexity AI priced and accessed? Perplexity AI uses a tiered access model across free and paid plans. The different tiers of Perplexity AI are listed below.

- Free tier, which provides standard answers with citations.

- Pro plan, which unlocks advanced models and Deep Research.

- Enterprise Pro, which enables internal knowledge indexing and organizational search.

Perplexity AI is accessible through web apps, mobile apps, and the Comet browser.

Who uses Perplexity AI and for what use cases? Perplexity AI serves researchers, professionals, and power users who require fast, cited synthesis. Common use cases include academic research, competitive analysis, technical investigation, enterprise knowledge discovery, and shopping comparisons. Perplexity AI fits users who prioritize verification, follow-up exploration, and answer-first workflows.

2. Google Gemini

Google Gemini is Google’s multimodal AI system integrated directly into Google Search to generate synthesized answers at a massive global scale. Google Gemini powers AI Overviews, which reach over 2 billion users monthly. Google Gemini matters because it embeds generative AI into the most dominant search infrastructure rather than operating as a standalone tool.

What is the main goal and target problem of Google Gemini? Google Gemini exists to shift Google Search from keyword-based retrieval to intent-based answer delivery while defending Google’s core search business. Google Gemini addresses the risk posed by generative competitors by reducing latency, improving answer quality, and keeping users inside Google-owned environments instead of external AI platforms.

What core mechanism powers Google Gemini search behavior? Google Gemini works as an answer engine layered on top of Google’s existing web index and retrieval systems. Google Gemini supports Retrieval-Augmented Generation pipelines and uses huge context windows, with Gemini 1.5 Pro supporting up to 2 million tokens, enabling synthesis across massive document sets and multimodal inputs.

What functions differentiate Google Gemini from other AI search engines? Google Gemini differentiates through scale, multimodality, and native ecosystem integration, as listed below.

- Multimodal reasoning, which interprets text, images, charts, and video in a single query flow.

- Deep research workflows, which analyze large document sets and complex topics.

- Personalized responses, which incorporate location, device context, and interaction history.

- Ecosystem reach, which spans Search, Chrome, Ads, and Pixel devices under one assistant layer.

How does Google Gemini handle sources, reliability, and accuracy? Google Gemini relies on Google’s indexing infrastructure while introducing AI-specific publisher controls and ranking signals. Google Gemini uses semantic intent decoding, transformer models, and reinforcement learning to optimize answer quality. Google introduced Google-Extended, a robots.txt control token rather than a separate crawler, to allow publishers to manage whether their content is used for Gemini, although public messaging remains inconsistent on real-time data access.
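Publishers express the Google-Extended control as a user-agent group in robots.txt. The sketch below parses a hypothetical robots.txt with Python's standard `urllib.robotparser` module to show how such a rule is matched. The file contents and the example URL are invented, and the parser only demonstrates generic robots.txt matching; how Google itself applies the token is governed by Google's own documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: disallow the Google-Extended token,
# allow every other user agent.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

page = "https://example.com/articles/ai-search"  # placeholder URL

# The Google-Extended group blocks the whole site for that token.
extended_allowed = parser.can_fetch("Google-Extended", page)

# Other agents, such as Googlebot, fall through to the wildcard group.
googlebot_allowed = parser.can_fetch("Googlebot", page)

print(extended_allowed, googlebot_allowed)
```

Keeping the two groups separate lets a publisher opt out under the Google-Extended token while leaving ordinary search crawling untouched.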

Where is Google Gemini practically applied today? Google Gemini supports everyday search, research, visual lookup, and decision-making tasks within Google products. Users apply Google Gemini for product comparisons, explanations, travel planning, visual interpretation, and large-document analysis without leaving Search or Chrome. Enterprises apply Google Gemini within Ads and productivity workflows.

What are the strengths and limitations of Google Gemini? Google Gemini delivers benchmark-leading reasoning performance but faces transparency and definition challenges. Strengths include unmatched scale, multimodal capabilities, and deep integration with Google’s index. Limitations include conflicting statements about real-time access, publisher concerns, and internal disagreement over whether Google Gemini represents search or a collaborative AI assistant.

How do users access Google Gemini and at what cost? Google Gemini is primarily accessed through existing Google products rather than standalone pricing tiers. Google Gemini appears inside Google Search, Chrome, Ads, and as the default assistant on Pixel 9 and Pixel 9 Pro devices. Some advanced features are gated behind Google Labs or paid Google Workspace plans.

Which users benefit most from Google Gemini? Google Gemini is designed for mainstream consumers and professionals already embedded in the Google ecosystem. Google Gemini best serves users who want AI-powered answers, visual understanding, and research capabilities without changing platforms or workflows.

3. Microsoft Copilot

Microsoft Copilot is an AI-powered search and productivity system that combines real-time web search with organizational data across Microsoft products. Microsoft Copilot operates across Bing, Windows, Edge, and Microsoft 365. Microsoft Copilot matters because it transforms search into an enterprise-wide answer layer that connects public information with private work data.

What is the main goal and target problem of Microsoft Copilot? Microsoft Copilot aims to unify fragmented information retrieval across workplace tools and the web. Microsoft Copilot targets inefficiencies caused by switching between emails, files, meetings, and search engines. Microsoft Copilot focuses on delivering grounded answers using both internet data and internal organizational context.

What core mechanism powers Microsoft Copilot’s search behavior? Microsoft Copilot operates through the Prometheus framework, which merges real-time Bing search with OpenAI GPT models. The Prometheus orchestrator generates iterative queries to connect the Bing index with models such as GPT-4, GPT-4 Turbo, and GPT-4o. Microsoft Copilot grounds responses using semantic indexing and Microsoft Graph data, including files, meetings, and user activity.

What functions differentiate Microsoft Copilot from other AI search engines? Microsoft Copilot differentiates through universal search, multimodal discovery, and deep enterprise integration. Microsoft Copilot handles queries across Microsoft 365 apps and third-party platforms through over 100 connectors. Microsoft Copilot enables live synthesis from Teams transcripts, multi-turn discovery, and visual understanding through Copilot Vision. Some core differentiators between Microsoft Copilot and other AI search engines are listed below.

- Universal search across Microsoft 365 applications and external platforms.

- Real-time synthesis of meetings, documents, and conversations.

- Multimodal understanding through text, images, and live visual input.

How does Microsoft Copilot handle sources, reliability, and accuracy? Microsoft Copilot emphasizes transparency through inline citations and sentence-level source links. Microsoft Copilot extracts information from documents, PDFs, and images while recognizing semantic similarity across files. Microsoft Copilot includes explicit disclaimers acknowledging potential errors in generative responses.

Where is Microsoft Copilot practically applied today? Microsoft Copilot handles enterprise search, meeting analysis, and document intelligence. Users apply Microsoft Copilot to retrieve emails, summarize reports, answer questions during live meetings, and search across internal systems. Microsoft Copilot provides consumer-facing AI answers through Bing search.

What are the strengths and limitations of Microsoft Copilot? Microsoft Copilot delivers strong enterprise grounding but introduces complexity and latency trade-offs. Strengths include deep access to organizational data, robust citations, and broad platform reach. Limitations include slower response times than traditional search, early hallucination concerns, and user confusion over search versus chat identity.

How do users access Microsoft Copilot and at what cost? Microsoft Copilot is available through Bing, Windows, Edge, and Microsoft 365 with tiered licensing. Consumer access is bundled with Bing and Windows, while enterprise capabilities require Microsoft 365 Copilot subscriptions. Availability excludes Russia and China, and some government cloud features remain delayed.

Which users benefit most from Microsoft Copilot? Microsoft Copilot is designed for professionals and organizations embedded in the Microsoft ecosystem. Microsoft Copilot best serves teams that need AI-powered search across emails, documents, meetings, and the web within governed environments.

4. Komo

Komo is a privacy-first generative AI search engine that delivers synthesized answers instead of traditional link-based results, which matters because it prioritizes relevance and trust over advertising influence. Komo operates as an AI answer engine using Retrieval-Augmented Generation to interpret intent and produce concise overviews. Komo matters because it offers an ad-free, non-tracking alternative to commercial AI search models.

What is the main goal and target problem of Komo? Komo aims to reduce research friction caused by keyword-based search and manual information scanning. Komo targets users overwhelmed by fragmented sources and long-form content. Komo focuses on turning complex questions into a structured understanding with minimal effort.

How does Komo work at a technical level? Komo operates using Retrieval-Augmented Generation that combines Large Language Model reasoning with real-time web retrieval. Komo applies Natural Language Processing and optimized vector search to interpret semantic intent rather than literal keywords. Komo analyzes live web data to avoid reliance on static knowledge snapshots.

What key features differentiate Komo from other AI search engines? Komo differentiates through multi-mode search, visual reasoning tools, and interactive research workflows. Core feature groups of Komo are listed below.

- Search mode, which delivers concise, synthesized answers.

- Chat mode, which enables conversational follow-up and refinement.

- Research mode, which produces structured reports from multiple sources.

- Explore mode, which surfaces social and community-driven perspectives.

Komo provides Mind Maps for visual topic mapping and Perspective Pulse for summarizing consensus.

How does Komo handle data sources, reliability, and accuracy? Komo grounds responses through source attribution and domain-specific ranking to reduce hallucinations. Komo provides citations in specialized modes to enable verification. Komo reports strong early performance, with accuracy rated at 4.7/5 and functionality at 4.8/5.

Where is Komo practically used today? Komo is used for research synthesis, exploratory learning, and trend analysis. Users apply Komo to summarize long articles, explore complex topics, and analyze community discussions from platforms such as Reddit. Komo enables non-linear discovery through iterative follow-up exploration.

What are the strengths and limitations of Komo? Komo delivers strong privacy protection and advanced exploration tools, but shows inconsistencies in public documentation. Strengths include an ad-free model, no user tracking, flexible modes, and visual analytics. Limitations include conflicting pricing reports, naming discrepancies, and future-dated or projected source data.

How is Komo priced and accessed? Komo offers free access with paid tiers for advanced features and model selection. Pricing sources vary, with entry plans reported between $15 and $20 per month and premium tiers up to $30. Paid users switch between models such as Claude, ChatGPT, DeepSeek, and Llama 3.3.

Who is Komo designed for? Komo is designed for privacy-conscious researchers, analysts, and knowledge workers who value synthesis over traditional search volume. Komo best serves users who want ad-free discovery, conversational research, and visual understanding without tracking or profiling.

5. Brave

Brave is a privacy-first AI search engine that delivers generative answers directly inside the search results page, which matters because it combines AI synthesis with an independent, non-tracking search infrastructure. Brave integrates AI summaries into SERPs through Ask Brave. Brave matters because it reduces reliance on third-party indexes while enforcing strict user privacy.

What is the main goal and target problem of Brave? Brave aims to provide AI-powered answers without compromising user privacy or data ownership. Brave targets concerns around tracking, profiling, and ad-driven ranking models. Brave focuses on delivering concise, grounded answers without storing queries or building user profiles.

How does Brave work at a technical level? Brave operates using Retrieval-Augmented Generation grounded in its independent search index. Brave synthesizes content from tens of billions of indexed pages to generate answers at the top of results. Brave offers Deep Research for complex queries and enables AI summaries through query triggers such as “???” or URL summarization.

What key features differentiate Brave from other AI search engines? Brave differentiates through native SERP integration, independent indexing, and browser-level AI capabilities. Brave integrates contextual enrichments such as news, videos, products, and business listings into report-style answers. Brave Leo extends AI functionality into the browser, supporting webpage, PDF, and document analysis, agentic browsing, and keyboard-driven AI skills.

How does Brave handle data sources, reliability, and accuracy? Brave grounds AI responses in verifiable web sources and emphasizes transparency through citations. Brave uses its own index of roughly 20–30 billion pages and includes AI Grounding to reduce hallucinations. Brave acknowledges mixed user feedback, with strengths in factual grounding and weaknesses in extended conversational context retention.

Where is Brave practically used today? Brave is used for privacy-safe search, AI summarization, and browser-based research workflows. Users apply Brave to obtain quick answers, summarize webpages, and conduct deeper research without leaving the SERP. Developers use the Brave Search API to build RAG pipelines and train models.

What are the strengths and limitations of Brave? Brave offers strong privacy guarantees and infrastructure independence, but faces performance perception trade-offs. Strengths include zero data retention, encrypted chats, independent indexing, and browser-native AI. Limitations include inconsistent query volume reporting, mixed accuracy perceptions, and less robust multi-turn reasoning than some competitors.

How is Brave priced and accessed? Brave Search and Ask Brave are available for free, with additional AI features offered through Brave Leo subscriptions. Brave Leo offers bring-your-own-model flexibility and enterprise access via APIs available in the AWS Marketplace. Brave services are accessible globally through the Brave browser and web search.

Who is Brave designed for? Brave is designed for privacy-conscious users, developers, and organizations that require AI search without data tracking. Brave best serves users who want generative answers, independent indexing, and browser-integrated AI within a privacy-first environment.

6. Consensus

Consensus is an AI-powered scientific search engine that synthesizes answers exclusively from peer-reviewed research, which matters because it replaces generic web search with evidence-based discovery. Consensus indexes roughly 200–220 million scientific documents across all research domains. Consensus matters because it functions as an AI-native alternative to Google Scholar while prioritizing accuracy, transparency, and scientific grounding.

What is the main goal and target problem of Consensus? Consensus aims to help users quickly understand what scientific research actually agrees on. Consensus targets the problem of information overload in academic literature. Consensus focuses on reducing the time required to locate, evaluate, and synthesize high-quality evidence from large research corpora.

How does Consensus work at a technical level? Consensus operates through a multi-step hybrid retrieval and ranking architecture optimized for scientific precision. The system combines semantic embeddings with BM25 keyword search to retrieve relevant papers. Consensus then re-ranks results using research quality signals such as citation count, recency, and journal reputation before applying a final AI-driven precision ranking.
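The retrieve-then-re-rank flow described above can be sketched in a few lines. This is not Consensus's implementation: the corpus, the hand-written embedding vectors, the fusion weights (0.5/0.5 and 0.7/0.3), and the fixed reference year are all assumptions chosen for illustration, and journal reputation is left out for brevity.

```python
import math
from collections import Counter

REFERENCE_YEAR = 2025  # fixed so the example is deterministic (assumption)

# Toy corpus: texts, vectors, citation counts, and years are all invented.
PAPERS = [
    {"id": "p1", "text": "creatine improves muscle strength in adults",
     "vec": [0.8, 0.2, 0.1], "citations": 120, "year": 2021},
    {"id": "p2", "text": "creatine supplementation and cognition a review",
     "vec": [0.6, 0.5, 0.1], "citations": 40, "year": 2023},
    {"id": "p3", "text": "sleep duration and memory consolidation",
     "vec": [0.0, 0.1, 0.9], "citations": 300, "year": 2015},
]

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Okapi BM25 score of every document for the query."""
    toks = [d.lower().split() for d in docs]
    n = len(toks)
    avgdl = sum(len(t) for t in toks) / n
    df = Counter(term for t in toks for term in set(t))
    scores = []
    for t in toks:
        tf = Counter(t)
        score = 0.0
        for term in query.lower().split():
            if tf[term] == 0:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(t) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def rank(query, query_vec, papers):
    """Fuse lexical and semantic scores, then blend in quality signals."""
    lexical = bm25_scores(query, [p["text"] for p in papers])
    top_lexical = max(lexical) or 1.0  # avoid dividing by zero when nothing matches
    max_cites = max(p["citations"] for p in papers)
    results = []
    for paper, lex in zip(papers, lexical):
        hybrid = 0.5 * (lex / top_lexical) + 0.5 * cosine(query_vec, paper["vec"])
        citation_signal = math.log1p(paper["citations"]) / math.log1p(max_cites)
        recency_signal = 1 / (1 + REFERENCE_YEAR - paper["year"])
        quality = 0.5 * citation_signal + 0.5 * recency_signal
        results.append((0.7 * hybrid + 0.3 * quality, paper["id"]))
    return [pid for _, pid in sorted(results, reverse=True)]

# The query vector stands in for the output of a real embedding model.
ranking = rank("creatine muscle strength", [0.9, 0.1, 0.0], PAPERS)
print(ranking)
```

Relevance carries most of the weight in this sketch, so a heavily cited but off-topic paper (p3) still ranks last; a real system would tune these weights against labeled relevance data.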

What key features differentiate Consensus from other AI search engines? Consensus differentiates through evidence-only synthesis and specialized scientific reasoning tools. Consensus uses Retrieval-Augmented Generation only after retrieving verified papers. Consensus provides tools such as the Consensus Meter for visualizing agreement on yes-or-no questions, Pro Analysis for structured synthesis, Deep Search for iterative literature reviews, and Study Snapshots that extract methods, populations, and outcomes.

How does Consensus handle data sources, reliability, and accuracy? Consensus enforces strict grounding by guaranteeing that all cited sources exist within its scholarly database. Consensus eliminates fabricated citations through checker models that validate relevance before summarization. Consensus provides direct hyperlinks to peer-reviewed sources and does not train models on user search data, preserving privacy and trust.

Where is Consensus practically used today? Consensus is used for academic research, evidence-based decision-making, and scientific validation. Researchers apply Consensus to review literature efficiently. Clinicians, policy analysts, and students use Consensus to assess scientific agreement, extract study-level details, and access open-access research through DOI links.

What are the strengths and limitations of Consensus? Consensus delivers high scientific accuracy but is limited to scholarly content. Strengths include a massive curated corpus, hallucination-resistant design, advanced filtering by study type and quality, and measurable performance gains in accuracy and latency. Limitations include corpus size discrepancies across sources and constrained usefulness outside academic or scientific contexts.

How is Consensus priced and accessed? Consensus offers free access with paid tiers for advanced research capabilities. Pro and Deep Search features unlock expanded analysis depth, including synthesis across up to 50 papers. Consensus is accessible via a web-based platform with integrations for citation managers such as Zotero and EndNote.

Who is Consensus designed for? Consensus is designed for researchers, students, clinicians, and professionals who require evidence-based answers. Consensus best serves users who prioritize peer-reviewed science, transparent citations, and methodological rigor over general web search convenience.

7. Felo

Felo is a chatbot-style AI search engine that aggregates and synthesizes information from multiple global sources, which matters because it enables cross-language, real-time research beyond traditional link-based search. Felo was developed by Sparticle Inc. and launched in September 2024. Felo matters because it positions AI search as a multilingual research assistant rather than a regional or language-bound engine.

What is the main goal and target problem of Felo? Felo aims to remove language, regional, and content-quality barriers from information discovery. Felo targets the inefficiency of keyword search, ad-heavy results, and language silos. Felo focuses on delivering clean, reliable answers by filtering low-quality content and synthesizing data across platforms.

How does Felo work at a technical level? Felo operates through agent-based AI systems that perform real-time web retrieval and synthesis. Felo uses multiple large language models, including OpenAI o3, GPT-4, Claude 3.7 Sonnet, and DeepSeek R1, to interpret intent and integrate live data. Felo retrieves information dynamically rather than relying on static training datasets.

What key features differentiate Felo from other AI search engines? Felo differentiates through cross-language retrieval, knowledge organization, and automated outputs. Felo enables Cross-Language Information Retrieval, allowing users to query in one language and receive synthesized results from global sources. Felo offers Topic Collections, mind maps, and one-click generation of structured PPT slides for professional use.

How does Felo handle data sources, reliability, and accuracy? Felo emphasizes source transparency by providing citations for every response. Felo filters advertisements and content farms to prioritize reliable information. Felo acknowledges that translation inaccuracies are a limitation of multilingual synthesis and can reduce precision in some cross-language queries.

Where is Felo practically used today? Felo is used for multilingual research, academic summarization, and market intelligence. Users apply Felo to summarize over 245 million academic papers, monitor global market trends, and analyze social platforms such as X, Reddit, and Xiaohongshu. Felo performs media summarization, including thematic analysis of YouTube videos.

What are the strengths and limitations of Felo? Felo offers strong multilingual reach and synthesis capabilities but has functional constraints. Strengths include ad-free search, real-time data retrieval, cross-language research, and automated knowledge management. Limitations include weak image and video search capability, no offline functionality, and dependency on internet speed and translation accuracy.

How is Felo priced and accessed? Felo provides free access with optional paid plans for higher usage and advanced models. Some sources describe Felo as fully free, while others cite Pro plans starting at $14.99 per month. Felo is accessible via web, iOS, Android, and browser extensions, and can be set as a default search engine.

Who is Felo designed for? Felo is designed for global researchers, analysts, and professionals working across languages and regions. Felo best serves users who require multilingual synthesis, academic summaries, and real-time global insights without advertising interference.

8. You.com

You.com is an AI-powered answer engine that replaces traditional link aggregation with synthesized, citation-backed responses, which matters because it redefines search as an evidence-driven reasoning system. You.com functions as an LLM-native search platform designed for complex queries. You.com matters because it prioritizes grounded answers, transparency, and multi-model flexibility over ranked link lists.

What is the main goal and target problem of You.com? You.com aims to solve the inefficiency and cognitive overload caused by keyword-based search results. You.com targets users who need direct, well-cited answers rather than fragmented snippets. You.com focuses on delivering factual synthesis while reducing hallucinations and misinformation.

How does You.com work at a technical level? You.com operates through a Retrieval-Augmented Generation architecture enabled by an orchestration layer of specialized AI modules. The system retrieves relevant documents from a custom web index and feeds long-context snippets into a Large Language Model. You.com uses semantic vectors, transformers, and a classifier that determines whether a query requires web retrieval or pure generation.
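The routing step described above, deciding whether a query needs web retrieval or can be answered by generation alone, can be illustrated with a toy heuristic. You.com's actual classifier is not public in this article and is presumably a trained model; the cue lists and the `route` function below are hypothetical stand-ins that only show where such a decision sits in the pipeline.

```python
# Hypothetical cue lists; a production classifier would be a trained model.
FRESHNESS_CUES = {"latest", "today", "current", "news", "price"}
CREATIVE_CUES = {"write", "poem", "haiku", "story", "brainstorm", "rewrite"}

def route(query):
    """Return 'retrieve' when the query likely needs live web data, else 'generate'."""
    words = {w.strip(".,?!").lower() for w in query.split()}
    if words & FRESHNESS_CUES:
        return "retrieve"
    if words & CREATIVE_CUES:
        return "generate"
    # Default to retrieval so factual answers stay grounded in sources.
    return "retrieve"

for example in (
    "What is the latest news on solar storage?",
    "Write a haiku about autumn",
    "Who discovered penicillin?",
):
    print(f"{example!r} -> {route(example)}")
```

Defaulting to retrieval is a deliberate choice in this sketch: skipping retrieval on a factual question risks an ungrounded answer, while an unnecessary retrieval only costs latency.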

What key features differentiate You.com from other AI search engines? You.com differentiates through modular AI modes and model-agnostic architecture. Users select from 21 AI models, including GPT-4o, Claude 3.5 Sonnet, Llama 3.1 405B, and Gemini 1.5 Flash. You.com offers ARI for deep research, Genius Mode for complex reasoning, Research Mode for multi-source synthesis, YouCode for programming queries, and YouImagine for image generation.

How does You.com handle data sources, reliability, and accuracy? You.com enforces strict citation logic to ensure transparency and verification. Every claim includes live citations linked to original sources. You.com runs vector search and keyword filters in parallel and presents multiple viewpoints when topics involve conflicting information. Research Mode generates a single answer with up to 30 distinct citations.

Where is You.com practically used today? You.com is used for research, coding, data analysis, and professional knowledge work. Developers use YouCode for targeted programming help. Analysts use ARI to scan hundreds of sources for reports. Third-party tools such as DuckDuckGo AI Chat rely on the You.com Web Search API.

What are the strengths and limitations of You.com? You.com delivers advanced reasoning and flexibility but incurs higher computational costs. Strengths include multi-model choice, deep research capabilities, enterprise-grade uptime, and fast indexing of breaking information. Limitations include slower response times than traditional search and occasional factual errors, as shown in comparative testing.

How is You.com priced and accessed? You.com offers free access with paid plans for advanced features and higher usage limits. The platform provides both consumer and enterprise access through its web interface and APIs. Running costs remain high due to the computational intensity of LLM-based search.

Who is You.com designed for? You.com is designed for researchers, developers, analysts, and professionals who require cited, multi-step reasoning. You.com best serves users who value transparency, model choice, and deep synthesis over speed or traditional search simplicity.

9. Phind

Phind is a developer-focused AI search engine that combines live web retrieval with generative reasoning, which matters because it delivers verified, up-to-date technical answers instead of static link lists. Phind positions itself as a hybrid between search and AI reasoning. Phind matters because it prioritizes speed, accuracy, and source-backed responses for technical problem solving.

What is the main goal and target problem of Phind? Phind aims to reduce the time developers spend searching, validating, and stitching together technical information. Phind targets inefficiencies caused by outdated documentation, fragmented forum posts, and unreliable AI hallucinations. Phind focuses on delivering actionable answers grounded in current sources.

How does Phind work at a technical level? Phind operates through live internet querying combined with a multi-layered AI reasoning framework. Phind retrieves real-time data from sources such as official documentation, Stack Overflow, and GitHub. Phind uses distinct Research, Implementation, and Maximum Reasoning layers to discover, interpret, and apply information.

What key features differentiate Phind from other AI search engines? Phind differentiates through speed, developer-centric design, and integrated workflows. Phind uses the proprietary Phind-70B model, which is reported to run up to 5× faster than GPT-4. Phind enables model switching for Pro users, provides conversational refinement through follow-up prompts, and integrates directly into developer environments through a VS Code extension.

How does Phind handle data sources, reliability, and accuracy? Phind enforces transparency through explicit citations and live source linking. Phind grounds answers in real-time web data to reduce hallucinations. Phind enables shareable, cached result links to preserve exact AI-generated solutions for verification and collaboration.

Where is Phind practically used today? Phind is used for software development, debugging, and system architecture research. Developers apply Phind to generate code snippets, troubleshoot errors, and explore complex topics such as micro frontends. Teams using integrated search workflows report 30–40% faster feature delivery and fewer post-release defects.

What are the strengths and limitations of Phind? Phind delivers high-speed, verified technical answers but shows feature and interface constraints. Strengths include real-time accuracy, deep technical focus, long context windows for Pro users, and strong IDE integration. Limitations include missing file upload, output cutoff issues, UI polish gaps, and conflicting reports on comparative context window advantages.

How is Phind priced and accessed? Phind offers tiered pricing based on usage and model access. Plans include Plus at $15 per month, Pro at $30 per month, and Business at $40 per user per month. Phind requires an active internet connection and enforces usage limits typical of compute-intensive AI platforms.

Who is Phind designed for? Phind is designed for software engineers, technical teams, and developers who need fast, source-verified answers. Phind best serves users who prioritize real-time accuracy, code-centric workflows, and integrated development environments over general-purpose AI search.

10. Wolfram Alpha

Wolfram Alpha is a computational knowledge engine that produces exact, deterministic answers through symbolic reasoning, which matters because it computes results from first principles instead of retrieving documents. Wolfram Alpha operates on the Wolfram Language and Mathematica. Wolfram Alpha matters because it provides traceable, verifiable outputs rather than probabilistic text generation.

What is the main goal and target problem of Wolfram Alpha? Wolfram Alpha aims to answer factual, mathematical, and scientific questions with absolute precision. Wolfram Alpha targets problems where probabilistic AI introduces ambiguity or hallucinations. Wolfram Alpha focuses on delivering results that users are able to audit, reproduce, and validate step by step.

How does Wolfram Alpha work at a technical level? Wolfram Alpha works by translating natural language queries into formal symbolic computations. The system uses Natural Language Processing to interpret intent and maps queries into executable code shown in an Input Interpretation box. Wolfram Alpha computes answers in real time using curated data, equations, and logic rules rather than cached web content.
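The idea of turning a natural-language question into a formal expression and then computing an exact answer can be shown at toy scale. The sketch below is not how Wolfram Alpha is built: it handles only integer arithmetic phrased with a few fixed words, and the `interpret`, `evaluate`, and `answer` names are hypothetical. It does show the two stages the paragraph describes, an explicit input interpretation followed by deterministic computation.

```python
import ast
import operator
from fractions import Fraction

# Hypothetical phrase-to-symbol table for the interpretation stage.
WORD_OPS = {" plus ": " + ", " minus ": " - ", " times ": " * ", " divided by ": " / "}
BIN_OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
           ast.Mult: operator.mul, ast.Div: operator.truediv}

def interpret(question):
    """Strip conversational filler and return a formal arithmetic expression."""
    text = question.lower().rstrip("?")
    for prefix in ("what is", "calculate", "compute"):
        if text.startswith(prefix):
            text = text[len(prefix):]
            break
    for word, symbol in WORD_OPS.items():
        text = text.replace(word, symbol)
    return text.strip()

def evaluate(node):
    """Evaluate a parsed expression exactly, using rationals instead of floats."""
    if isinstance(node, ast.Constant) and type(node.value) is int:
        return Fraction(node.value)
    if isinstance(node, ast.BinOp) and type(node.op) in BIN_OPS:
        return BIN_OPS[type(node.op)](evaluate(node.left), evaluate(node.right))
    raise ValueError("unsupported expression")

def answer(question):
    """Return the input interpretation together with the exact result."""
    expression = interpret(question)
    tree = ast.parse(expression, mode="eval")
    return expression, evaluate(tree.body)

interpretation, result = answer("What is 1/3 plus 1/6?")
print("Input interpretation:", interpretation)
print("Result:", result)
```

Because the result is computed rather than generated, the same question always yields the same exact value, and the interpretation string lets a user check what was actually computed.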

What key features differentiate Wolfram Alpha from other AI search engines? Wolfram Alpha differentiates through symbolic computation, explainable outputs, and advanced analytics. The platform provides Show Steps for mathematical derivations, automatic unit normalization, predictive modeling, and dynamic visualizations such as charts, diagrams, and family trees. Wolfram Alpha generates new knowledge through computation instead of summarization.

How does Wolfram Alpha handle data sources, reliability, and accuracy? Wolfram Alpha ensures accuracy through expert-curated datasets and transparent computation. Over 150 specialists manually structure and validate data from verified providers such as the CIA World Factbook and academic sources. Wolfram Alpha acts as a glass-box system where every output links back to formulas or curated datasets.

Where is Wolfram Alpha practically used today? Wolfram Alpha is used for mathematics, science, engineering, education, and data analysis. Users apply Wolfram Alpha for calculus, physics modeling, chemistry equations, statistics, and real-time data synthesis, such as weather forecasting. Wolfram Alpha powers factual computations in Bing, DuckDuckGo, Siri, Alexa, Excel, and ChatGPT integrations.

What are the strengths and limitations of Wolfram Alpha? Wolfram Alpha delivers unmatched precision but limited open-ended reasoning. Strengths include deterministic accuracy, explainability, expert-curated data, and complex computation. Limitations include processor-intensive response times, reliance on structured domains, and documented historical edge-case errors such as calendar inconsistencies.

How is Wolfram Alpha priced and accessed? Wolfram Alpha offers free access with paid Pro tiers for advanced features. Pro plans unlock step-by-step solutions, extended computation time, and higher precision outputs. Wolfram Alpha is available via web, mobile apps, and embedded integrations across platforms.

Who is Wolfram Alpha designed for? Wolfram Alpha is designed for students, researchers, engineers, scientists, and professionals who require exact answers. Wolfram Alpha best serves users who prioritize mathematical rigor, traceability, and deterministic computation over conversational or generative search.

Does AI Search Differ from Traditional Search Engines?

Yes, AI search differs fundamentally from traditional search engines because AI search acts as an answer engine that synthesizes information, while traditional search engines act as index-based retrieval systems. Traditional search relies on crawling, indexing, and keyword matching to return ranked links that users must evaluate manually. AI search uses Large Language Models, semantic vectors, and Retrieval-Augmented Generation to interpret intent, expand queries, and produce direct answers. AI search enables conversational, multi-turn queries and multimodal input, but introduces hallucination and trust risks, which makes traditional search more reliable for source evaluation and verification.

How Do AI Search Engines Actually Work and What Technologies Power Them?

AI search engines work by interpreting user intent, expanding queries, retrieving relevant data in real time, and synthesizing information into direct answers using layered AI architectures. AI search replaces keyword matching with semantic understanding, paragraph-level evaluation, and Retrieval-Augmented Generation to produce grounded, conversational responses at scale. The process relies on tightly integrated models and infrastructure that operate across retrieval, reasoning, and personalization layers.

Core technologies that power AI search engines include the following.

- Natural Language Processing (NLP). NLP enables AI search engines to interpret intent, context, and meaning from natural language queries. NLP converts unstructured text into structured signals by identifying entities, relationships, and goals. NLP allows different phrasings of the same question to return equivalent answers and enables multi-turn conversational search.

- Machine Learning (ML). Machine learning models detect patterns in large, unlabeled datasets to improve ranking, relevance, and intent prediction. ML systems learn from user interactions such as clicks, refinements, and dwell time to continuously adjust result prioritization. ML enables query fan-out, where one prompt expands into multiple sub-queries for broader coverage.

- Large Language Models (LLMs). Large Language Models generate fluent, human-readable answers and synthesize information across sources. LLMs operate on transformer architectures and use vector embeddings to represent meaning numerically. LLMs such as Gemini, GPT-4, Claude, and Grok form the reasoning layer of AI search engines.

- Retrieval-Augmented Generation (RAG). RAG combines search and generation in a two-step process that retrieves relevant documents before generating an answer. RAG grounds AI responses in real data, reduces hallucinations, and enables live citations. RAG architectures often rely on Bing, proprietary crawlers, or hybrid indexes for retrieval.

- Predictive Analytics and Personalization. Predictive systems adjust results based on location, device, time, and behavioral signals. Predictive analytics enable intent forecasting, autocomplete suggestions, and contextual result refinement. These systems operate in real time to adapt answers dynamically.

These technologies form a multi-layer architecture that transforms search from document discovery into real-time answer synthesis.
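The query fan-out and retrieve-then-generate steps described in the list above can be sketched end to end. Everything here is a simplified assumption: the three-document corpus is invented, word overlap stands in for a real semantic index, and the "generation" step simply stitches retrieved passages together with numbered citations where a production system would pass them to an LLM as context.

```python
# Invented mini-corpus standing in for a web or enterprise index.
CORPUS = {
    "doc-a": "BM25 ranks documents by term frequency and document length.",
    "doc-b": "Vector embeddings map text to points so similar meanings sit close together.",
    "doc-c": "Retrieval-augmented generation retrieves passages before generating an answer.",
}

def tokens(text):
    """Lowercased word set with basic punctuation stripped."""
    return {w.strip(".,?").lower() for w in text.split()}

def fan_out(query):
    """Hypothetical fan-out: split a compound question on ' and '."""
    parts = [p.strip() for p in query.rstrip("?").split(" and ")]
    return [p for p in parts if p]

def retrieve(sub_query, k=1):
    """Rank documents by word overlap with the sub-query (stand-in for a real index)."""
    q = tokens(sub_query)
    ranked = sorted(CORPUS, key=lambda d: len(q & tokens(CORPUS[d])), reverse=True)
    return ranked[:k]

def answer(query):
    """Retrieve first, then build a response that cites each passage it uses."""
    cited = []
    for sub_query in fan_out(query):
        for doc_id in retrieve(sub_query):
            if doc_id not in cited:
                cited.append(doc_id)
    # Stub generation: a real system would prompt an LLM with these passages.
    text = " ".join(f"{CORPUS[d]} [{i}]" for i, d in enumerate(cited, start=1))
    return text, cited

response, sources = answer("What is BM25 and how do vector embeddings work?")
print(response)
print("Sources:", sources)
```

Keeping the cited document IDs alongside the generated text is what makes the answer verifiable: each numbered marker can be traced back to a retrieved passage.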

Can AI Search Be Considered Reliable?

No, AI search cannot be considered fully reliable because its outputs frequently contain unsupported claims, misleading citations, and probabilistic errors stated with unwarranted confidence. Large-scale studies show that only about 51.5% of AI-generated sentences are fully supported by citations, while up to 47% of statements from leading models lack source backing.

AI systems hallucinate facts, invent sources, and reflect historical bias embedded in training data. Citations often create a false sense of credibility, reducing user verification. As a result, AI search works best as a starting point that requires independent validation through trusted sources.

Can AI Search Improve the Accuracy of Search Results?

Yes, AI search improves search accuracy in specific situations, but it does not do so consistently across all queries. Techniques such as semantic search, vector embeddings, passage ranking, multimodal retrieval, and retrieval-augmented generation improve relevance, speed, and task completion, which shows up as higher conversions and user satisfaction. However, large-scale studies show generative search still produces incorrect or unsupported answers in more than 60% of cases, with frequent citation errors. As a result, AI search improves efficiency and relevance more than factual reliability, making verification essential.

Which Industries Are Currently Using AI Search Solutions?

AI search is already embedded across multiple sectors where fast access to complex, distributed, or high-volume data directly impacts productivity, risk management, and decision quality.

The 7 industries most actively using AI search today, along with how it is applied, are listed below.

- Enterprise Software and Knowledge Management. Organizations use AI search to unify internal knowledge across tools like Atlassian, Notion, AWS, Oracle, and Credal. These systems enable cross-platform search, internal document retrieval, and agentic assistants grounded in proprietary data with governance and audit controls.

- Legal, Finance, and Compliance. Law firms and financial institutions deploy AI search for document intelligence, legal research, contract analysis, fraud detection, regulatory monitoring, and market sentiment analysis. Platforms like Hebbia and Kensho help surface concepts, risks, and precedents across massive document sets.

- Healthcare and Life Sciences. AI search is used to interpret medical images, extract insights from clinical notes, enable virtual health assistants, and accelerate drug discovery. NLP-driven search improves access to patient data and research, with hundreds of AI-enabled medical tools now authorized by the FDA.

- Retail, E-commerce, and Marketing. Retailers apply AI search for visual product discovery, personalized recommendations, conversational commerce, and social sentiment tracking. These systems help customers find products faster and allow brands to optimize messaging and inventory decisions.

- IT, Software Development, and Cybersecurity. AI search enables debugging, log analysis, network troubleshooting, and threat detection. Developer-focused tools surface relevant code, identify UX issues, and assist with programming tasks, an area where AI performance has improved rapidly.

- Transportation, Logistics, and Manufacturing. Companies use AI search for route optimization, warehouse automation, autonomous vehicle operations, and product feedback analysis. These systems search real-time sensor, traffic, and customer data to improve efficiency and safety.

- Government, National Security, and Education. Public-sector organizations rely on AI search for data consolidation, intelligence analysis, exploitation detection, and personalized education. Tools like Palantir enable large-scale analysis, while AI tutors assist students and educators.

Across industries, AI search adoption is accelerating as organizations move beyond link-based retrieval toward systems that synthesize, interpret, and act on information. While use cases differ, the common driver is the need to extract reliable insights from complex data at scale.

How Do AI Search Engines Enhance Personalization in Search Results?

AI search engines enhance personalization by adapting search results to individual user intent, behavior, and contextual signals rather than applying uniform rankings. AI search engines analyze semantic meaning, break complex queries into sub-queries, and re-rank results in real time using signals such as location, device type, time of day, and interaction patterns.
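The sub-query step mentioned above can be illustrated with a small sketch. It rests on several assumptions: the index contents and the function names `fan_out`, `search`, `fuse`, and `answer_candidates` are invented, the query is split with a simple rule (commas and the word "and") where a production system would more likely use a language model, and the merge step uses reciprocal rank fusion, a common way to combine ranked lists that the text above does not itself name.

```python
import re
from collections import defaultdict

# Toy index: keyword -> ranked document ids. All entries are invented.
INDEX = {
    "laptop": ["d1", "d2", "d3"],
    "battery": ["d2", "d4"],
    "lightweight": ["d3", "d2"],
}

def fan_out(query):
    """Split a compound query into sub-queries on commas and the word 'and'."""
    parts = re.split(r",|\band\b", query.lower())
    return [p.strip() for p in parts if p.strip()]

def search(sub_query):
    """Return ranked ids for the first indexed keyword found in the sub-query."""
    for keyword, ranked in INDEX.items():
        if keyword in sub_query:
            return ranked
    return []

def fuse(rankings, k=60):
    """Reciprocal rank fusion: each list contributes 1 / (k + rank) per document."""
    scores = defaultdict(float)
    for ranked in rankings:
        for rank, doc_id in enumerate(ranked, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

def answer_candidates(query):
    """Fan the query out, search each sub-query, and merge the ranked lists."""
    return fuse([search(sq) for sq in fan_out(query)])

print(fan_out("laptop, battery life and lightweight design"))
print(answer_candidates("laptop, battery life and lightweight design"))
```

Documents that appear in several sub-query result lists accumulate score from each list, so a result that satisfies more parts of the original query rises in the merged ranking.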

AI search engines build session-based and persistent user models to tailor outputs to specific personas, preferences, and goals. In enterprise and consumer environments, AI search engines integrate cross-platform data and predictive heuristics to anticipate needs, increasing relevance, engagement, and measurable business outcomes.
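A simplified view of signal-based re-ranking is sketched below. The `Result` and `Context` types, the field names, and the boost weights are all hypothetical and chosen only to make the idea concrete; real systems learn such weights from interaction data instead of hard-coding them.

```python
from dataclasses import dataclass

@dataclass
class Result:
    title: str
    relevance: float              # base semantic relevance in [0, 1]
    region: str = "any"           # region the result targets
    mobile_friendly: bool = True

@dataclass
class Context:
    region: str
    device: str                   # "mobile" or "desktop"
    clicked_topics: tuple = ()    # topics engaged with earlier in the session

# Hypothetical weights, for illustration only.
REGION_BOOST = 0.15
DEVICE_PENALTY = 0.10
TOPIC_BOOST = 0.05

def personalized_score(result, ctx):
    """Adjust base relevance using location, device, and session behavior."""
    score = result.relevance
    if result.region == ctx.region:
        score += REGION_BOOST
    if ctx.device == "mobile" and not result.mobile_friendly:
        score -= DEVICE_PENALTY
    matches = sum(topic in result.title.lower() for topic in ctx.clicked_topics)
    return score + TOPIC_BOOST * matches

def rerank(results, ctx):
    """Order results by personalized score, highest first."""
    return sorted(results, key=lambda r: personalized_score(r, ctx), reverse=True)

results = [
    Result("Best pizza guide", 0.80),
    Result("Pizza places in Austin", 0.75, region="austin"),
    Result("Pizza history archive", 0.78, mobile_friendly=False),
]
ctx = Context(region="austin", device="mobile", clicked_topics=("pizza",))
for r in rerank(results, ctx):
    print(r.title)
```

In this toy session the local result overtakes a result with higher base relevance, and the page that renders poorly on mobile drops to last, which mirrors how contextual signals replace a uniform ranking.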

Could AI Search Engines Eventually Replace Traditional Search Engines?

No, AI search engines are unlikely to fully replace traditional search engines, but they are fundamentally changing how search works. AI excels at long, complex, multi-parameter queries, synthesis, and assisted purchasing, and user adoption is accelerating rapidly. However, traditional search engines remain dominant for navigational intent, real-time information, local discovery, and trust-based source evaluation.

Structural constraints such as Google’s data advantage, link-based authority signals, and the ad-driven web economy, combined with the dependence of AI on high-quality human-created content, point toward a hybrid future where AI search augments traditional search rather than replacing it.

What Does the Future of AI Search Engines Look Like?

The future of AI search engines points toward conversational, agent-driven answer systems that replace link navigation with synthesized, task-completing responses. AI search engines are shifting from open-web discovery to consolidated answers delivered directly on results pages, reducing clicks and increasing zero-click behavior. Market data shows rapid adoption, with primary AI search users projected to grow from 13 million in 2023 to 90 million by 2027. This shift matters because AI search engines increasingly function as the first and final point of information access, shaping how users research, decide, and act.
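To put the user projection in perspective, the annual growth rate it implies can be worked out directly. The calculation below assumes steady year-over-year compounding between 2023 and 2027, which the projection itself does not state, and the function name `implied_annual_growth` is made up for this example.

```python
def implied_annual_growth(start, end, years):
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1

# Figures from the projection above: 13 million users in 2023, 90 million by 2027.
rate = implied_annual_growth(13_000_000, 90_000_000, 2027 - 2023)
print(f"Implied annual growth: {rate:.1%}")  # roughly 62% per year
```

Under that assumption, the user base would need to grow by a little over 60% every year for four consecutive years to reach the projected figure.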

How will technology and economics reshape AI search behavior and visibility? AI search engines are evolving into agentic systems capable of executing actions such as purchases, reservations, and form completion, while maintaining persistent personalization across sessions. Revenue flows are consolidating, with $750 billion projected to move through AI-powered search by 2028 and traditional search traffic projected to decline by 20% to 50%.

These changes drive the transition from Search Engine Optimization to Generative Engine Optimization, where visibility depends on entity clarity, citation inclusion, and AI comprehension rather than keyword rank. Accuracy limits, data freshness conflicts, and monetization models remain unresolved constraints, but AI-driven summaries, multimodality, and real-time synthesis define the dominant trajectory.