Brand AI visibility is a marketing performance metric that measures how often and how accurately a brand appears in AI-generated answers across platforms (ChatGPT, Gemini, Perplexity, Google AI Overviews). Brand AI visibility treats visibility as inclusion, citation, and sentiment framing inside synthesized responses instead of blue-link rankings. Brand AI visibility matters because 25% of organic search traffic is projected to migrate to AI assistants by 2026, 60% of searches end without a click, and AI-referred sessions increased by 527% in early 2025. AI visibility shifts authority from keyword rank to conversational inclusion because only 2 to 7 domains appear per response.

How to measure brand AI visibility? Measure brand AI visibility through structured prompt testing, AI visibility tools, and composite scoring models that track share of AI voice, AI citation frequency, position in AI overviews, brand sentiment in AI responses, traffic from AI search, and the AI visibility index. Manual prompt testing establishes Inclusion Rate baselines. AI visibility measurement tools (Profound, Otterly.AI, Brand Radar by Ahrefs, Peec AI, Clearscope, AI Visibility Toolkit by Semrush) execute prompt libraries at scale. API-based monitoring applies rolling averages and model consensus validation to control volatility. AI strategic visibility requires benchmarking inclusion against 3 to 5 competitors, targeting 40 to 60% Inclusion Rates for dominance, and flagging values below 20% as invisibility.

What are the core components of AI visibility? The core components of AI visibility are LLM parsability and structure, semantic density and relevance, trust and authority (E-E-A-T), entity consistency, recency, and brand mentions and citations. LLM parsability and structure increase extractability through schema markup, server-side rendering, and answer-first architecture. Semantic density and relevance strengthen contextual authority through topic clusters and entity alignment. Trust and authority reinforce citation eligibility through verified authorship and third-party validation. Entity consistency maintains unified brand identifiers across knowledge graphs. Recency increases citation probability because AI Overviews derive 85% of citations from 2023 to 2025 content. Brand mentions and citations determine the share of voice within compressed answer sets. Align AI strategic visibility with organic KPIs through GA4 referral segmentation, branded query growth tracking, sentiment audits, and AI citation share correlation with pipeline impact.

What Is Brand AI Visibility?

Brand AI Visibility is a marketing performance metric that measures the frequency, positioning, and sentiment of a brand entity within generative artificial intelligence responses across large language models. Brand AI Visibility measures inclusion inside conversational answers generated by platforms (ChatGPT, Google Gemini, Microsoft Copilot, Perplexity). Brand AI Visibility signals authority, relevance, and market recognition through entity presence inside synthesized responses.

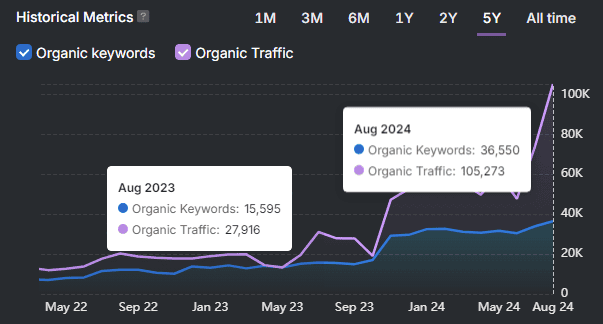

When did Brand AI Visibility emerge, and why does Brand AI Visibility matter? Brand AI Visibility emerged in 2023 after the mass adoption of ChatGPT shifted search behavior toward AI-driven answer engines. ChatGPT reached 100 million users within 2 months of its November 2022 launch. AI-referred sessions increased by 527% by 2025, which required a structured framework to track how AI systems cite and recommend commercial entities. Brand AI Visibility matters because 25% of organic traffic is projected to migrate to AI chatbots by 2026. Brand AI Visibility transforms conversational inclusion into a primary performance indicator.

How does Brand AI Visibility differ from traditional SEO metrics? Brand AI Visibility differs from traditional SEO metrics because Brand AI Visibility measures conversational inclusion instead of blue-link rankings and click-through rates. Brand AI Visibility belongs to SEO performance measurement but focuses on AI-generated summaries rather than the top 10 search results. Brand AI Visibility determines influence in zero-click environments because 60% of Google searches end without a click.

What are the major components of Brand AI Visibility? The major components of Brand AI Visibility are Mentions, Citations, and Entity Recognition. The major components are listed below.

- Mentions (2023) are occurrences of a brand name inside an AI-generated response without a hyperlink. Mentions establish awareness in zero-click environments.

- Citations (2023) are clickable source links inside AI responses. Large language models cite 2 to 7 domains per answer instead of 10 traditional results.

- Entity Recognition (2024) maps a brand to a defined industry or expertise cluster through structured data. 81% of AI-cited pages use schema markup to define organizational identity.
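The Entity Recognition component can be illustrated with a minimal sketch that builds schema.org `Organization` markup as JSON-LD. The brand name, URLs, and the `organization_jsonld` helper are hypothetical placeholders, and the property set shown is only a small subset of what schema.org allows.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization object as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs links the brand to its profiles on other sites,
        # which helps keep entity identifiers consistent.
        "sameAs": list(same_as),
    }


# Hypothetical brand used only for illustration.
markup = organization_jsonld(
    "Example Brand",
    "https://www.example.com",
    ["https://www.linkedin.com/company/example-brand"],
)
print(json.dumps(markup, indent=2))
```

The printed JSON would typically be embedded in a page inside a `<script type="application/ld+json">` element.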

What determines Brand AI Visibility performance? Brand AI Visibility performance depends on technical optimization, authority signals, and accuracy and sentiment metrics. Extractable architecture and E-E-A-T increase inclusion probability. Answer-First formatting improves visibility by 30 to 40%. Monitoring Entity Correctness remains critical because hallucinated errors cause 75% more reputational damage than omission.

How does Brand AI Visibility function within digital strategy? Brand AI Visibility functions as both a dependent and enabling metric inside modern digital strategy. Brand AI Visibility requires structured data, Server-Side Rendering, and authoritative content to ensure crawlability. Brand AI Visibility enables zero-click conversions for the 80% of consumers who rely on instant AI answers. Brand AI Visibility prevents competitor narrative dominance inside AI-generated summaries.

Why Does Measuring Brand AI Visibility Matter?

Measuring Brand AI Visibility matters because consumer brand discovery shifts from keyword-based search to conversational interactions within Large Language Models. Platforms (ChatGPT, Claude) replace blue-link navigation with synthesized answers. This shift changes how visibility functions inside digital ecosystems. The AI visibility market expanded to 35+ specialized platforms that track brand presence in AI-generated conversations.

Why is the growth of the AI visibility market evidence of strategic importance? The growth of the AI visibility market proves strategic importance because Brand AI Visibility functions as a competitive benchmarking requirement. Brand AI Visibility replaces experimental tracking with structured Share of Voice measurement across defined prompt sets. AI visibility benchmarking tools (Profound, Otterly.AI, Peec AI) calculate Inclusion Rate against direct competitors. This shift quantifies presence in AI-generated answers instead of relying on ranking reports.

How do brands optimize visibility within LLM conversational interactions? Brands optimize visibility within LLM conversational interactions through data-driven adjustments that raise the Inclusion Rate from a baseline of 20 to 30% to as high as 80%. A documented case reached measurable inclusion within 2 weeks after adding a specific use case that already drove 30% of competitor recommendations. Model Consensus validates authority when a brand appears in 3 out of 4 models for the same prompt. Model Consensus confirms durable inclusion instead of stochastic variance.
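The Model Consensus rule (a brand appearing in 3 out of 4 models for the same prompt) can be sketched as a simple threshold check. The model labels and the `has_model_consensus` helper are hypothetical, and the appearance flags are assumed to come from an earlier detection step.

```python
def has_model_consensus(appearances, min_models=3):
    """Return True when the brand appears in at least min_models models.

    appearances maps a model label to True/False for a single prompt.
    """
    return sum(1 for appeared in appearances.values() if appeared) >= min_models


# Hypothetical results for one prompt across four models.
results = {"model_a": True, "model_b": True, "model_c": False, "model_d": True}
consensus = has_model_consensus(results)
print(consensus)
```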

What technical factors influence brand visibility in the LLM source layer? Technical factors influence brand visibility in the LLM source layer through landing page prioritization, citation URL tracking, and semantic alignment. Large Language Models prioritize top-level landing page copy over PDFs or buried FAQ sections. Citation URL tracking identifies whether AI engines reference owned pages or third-party reviews. Semantic alignment prevents Language Mismatch when a brand uses “enterprise data protection” while models categorize the sector as “cloud backup solutions.”
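Citation URL tracking, as described above, amounts to sorting cited URLs into owned pages and third-party sources. The following is a minimal sketch under the assumption that citation URLs have already been extracted from responses; `classify_citation` and the example domains are illustrative names only.

```python
from urllib.parse import urlparse


def classify_citation(url, owned_domains):
    """Label a cited URL as 'owned' or 'third_party' by its hostname."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for domain in owned_domains:
        # Match the domain itself or any of its subdomains.
        if host == domain or host.endswith("." + domain):
            return "owned"
    return "third_party"


owned = {"example.com"}  # hypothetical brand domain
labels = [
    classify_citation("https://www.example.com/pricing", owned),
    classify_citation("https://blog.example.com/guide", owned),
    classify_citation("https://www.g2.com/products/example", owned),
]
print(labels)
```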

How does visibility tracking inform PR and earned media strategy? Visibility tracking informs PR and earned media strategy through domain-level influence analysis. Domain analysis identifies which platforms (Reddit, review platforms, editorial outlets) drive brand mentions. Domain influence determines whether to strengthen owned content architecture or expand third-party authority signals. Query-cluster tracking reduces variance and isolates structural authority patterns that affect AI synthesis consistency.

What metrics determine the reliability of AI visibility tools? The reliability of AI visibility tools depends on testing frequency, prompt scale, and repeated statistical validation. Platforms executing thousands of prompts daily produce stable trend signals. Running 50 prompts once per month produces statistically unstable estimates. LLMs conceal real prompt logs and maintain personalization layers. Measurement produces directional performance indicators instead of absolute certainty.

How Does AI Change What Visibility Means?

AI changes what visibility means by shifting the primary metric from ranking position to conversational authority within AI-generated answers. Conversational authority measures a brand’s inclusion in synthesized responses produced by answer engines (ChatGPT, Perplexity, Google Gemini). Visibility no longer depends on blue-link placement. Visibility depends on direct participation in AI-generated summaries where mentions and citations function as authority signals.

How does conversational authority redefine brand presence? Conversational authority redefines brand presence by measuring how frequently and how credibly a brand appears inside AI-generated responses. AI models define brand presence through mentions and citations that act as inclusion thresholds in compressed answer sets. AI systems speak on behalf of brands in summaries. Influence over training data, citations, and narrative framing determines whether a brand becomes a recommended authority or remains absent.

How does conversational authority impact web traffic and consumer behavior? Conversational authority impacts web traffic and consumer behavior by accelerating zero-click consumption and reducing direct site visits. As of December 2024, 80% of consumers use AI summaries at least 40% of the time. 44% prefer AI summaries over visiting brand websites. Organic web traffic declines by 15 to 25% because AI answers satisfy intent without a click. ChatGPT referral traffic converts at lower rates than Google Search traffic, which alters attribution and funnel measurement models.

What are the new determinants of visibility in Generative Engine Optimization (GEO)? The new determinants of visibility in Generative Engine Optimization are earned media, third-party editorial coverage, and branded search volume. Visibility correlates with hyperlinked mentions on editorial domains instead of isolated on-site SEO. Branded search volume functions as authority validation because repeated brand queries signal trust to AI systems. GEO prioritizes editorial authority and structured entity signals instead of single-keyword optimization.

How has the measurement and monitoring of visibility evolved? Measurement and monitoring of visibility evolved from keyword rank tracking to sentiment analysis and persona-based model tracking. Visibility measurement now evaluates whether AI models portray a brand positively, neutrally, or negatively across multiple platforms. Monitoring accounts for personal context and cross-model variance. Sentiment scoring and Inclusion Rate replace keyword position as primary indicators.

How does AI visibility affect paid search and market share? AI visibility affects paid search and market share by compressing ad exposure and concentrating control within dominant platforms. AI Overviews push paid ads downward on search result pages. Search ad spend increases by 10% to $253.2 billion, while Google captures 86% of total investment. Google’s search market share dropped below 90% in October 2024. Non-Google AI engines hold less than 1% of the market.

What are the key behavioral and market metrics that demonstrate this shift? The key behavioral and market metrics quantify AI usage, traffic impact, and advertising concentration. The metrics are listed below.

- Consumer AI Usage. 80% use AI summaries at least 40% of the time.

- User Preference. 44% prefer AI summaries over visiting brand websites.

- Traffic Impact. 15 to 25% projected loss in organic web traffic.

- Ad Market Growth. 10% increase to $253.2 billion in total spend.

- Google Ad Share. 86% of search ad investment captured.

- Google Search Share. Below 90% market share recorded in October 2024.

- Competitor Share. Below 1% market share of non-Google AI engines.

AI visibility redefines visibility as inclusion inside synthesized answers instead of position inside rankings. Brands that fail to measure conversational authority risk exclusion from AI-native discovery environments where compressed answer sets determine market influence.

How to Measure Brand AI Visibility?

Organizations measure Brand AI Visibility by systematically testing brand inclusion, citation frequency, sentiment positioning, and competitive Share of Voice across Large Language Models (LLMs). Measuring Brand AI Visibility requires structured prompt testing, statistical sampling, cross-platform tracking, and longitudinal benchmarking because LLM outputs are probabilistic and non-deterministic. The primary methods used to measure Brand AI Visibility are listed below.

- Manual Prompt Testing

- Using Third-Party Visibility Trackers

- API Integration for Continuous Tracking

- Benchmarking AI Visibility Over Time

Measuring Brand AI Visibility requires combining manual validation, automated tracking, API-based scaling, and longitudinal benchmarking to produce statistically defensible insights. Organizations that implement multi-layered measurement frameworks maintain consistent inclusion within AI-generated answers and reduce the risk of narrative displacement by competitors.

1. Manual Prompt Testing

Manual Prompt Testing is a foundational measurement method where teams manually execute predefined prompts across Large Language Models (LLMs) to observe brand inclusion, citation presence, and narrative framing. Manual prompt testing serves as the recommended starting point for understanding LLM response patterns and establishing a baseline for brand AI visibility improvement. Manual prompt testing identifies brand appearance frequency, positioning context, and alignment with current messaging while exposing risks, outdated pricing, or inaccurate product claims that require correction at the source layer.

What are the key metrics for manual prompt testing of LLMs? The key metrics for manual prompt testing are brand association, accuracy and context, sentiment and bias, and response format. Brand Association measures whether a brand appears as a leader, example, alternative, or footnote within generated answers. Accuracy and Context verify factual correctness of products, pricing, positioning, and use cases. Sentiment and Bias compares how models favor established incumbents versus niche competitors. Response Format evaluates whether the brand appears in list-style recommendations, summaries, or extended explanations.

How do analysts use strategic prompt variation in manual prompt testing? Analysts use strategic prompt variation by running controlled variations of high-intent or brand-specific queries to observe output shifts. Small wording adjustments, such as replacing “small team tools” with “enterprise-grade software,” surface entirely different brand sets due to probabilistic model behavior. This variation maps inclusion triggers and reveals how prompt structure influences brand visibility across models. Strategic prompt variation exposes how list formatting, qualifiers like “best,” and category framing impact inclusion probability.
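Controlled prompt variation can be sketched as filling a fixed template with every combination of a few wording slots. The template, slot names, and `build_prompt_variants` helper below are hypothetical; the two category phrasings are taken from the example in the text.

```python
from itertools import product


def build_prompt_variants(template, slots):
    """Expand a template into one prompt per combination of slot values."""
    names = list(slots)
    variants = []
    for combo in product(*(slots[name] for name in names)):
        variants.append(template.format(**dict(zip(names, combo))))
    return variants


variants = build_prompt_variants(
    "What are the {qualifier} {category} for {audience}?",
    {
        "qualifier": ["best", "most affordable"],
        "category": ["small team tools", "enterprise-grade software"],
        "audience": ["remote teams"],
    },
)
for prompt in variants:
    print(prompt)
```

Keeping the template fixed and changing one slot at a time makes it easier to attribute a shift in the returned brand set to a specific wording change.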

What are the methodological limitations of manual prompt testing? The methodological limitations of manual prompt testing are statistical invalidity, probabilistic variance, scalability constraints, and limited data quality. The limitations are listed below.

- Statistical Invalidity produces results that measure noise rather than stable visibility patterns.

- Probabilistic Variance causes ranking inversions between identical runs due to model temperature and hardware variability.

- Scalability Issues make manual tracking unsustainable as prompt libraries expand.

- Data Quality Constraints generate anecdotal screenshots instead of decision-grade datasets.

How does manual prompt testing compare to distributional approaches? Manual prompt testing provides an initial benchmark, whereas distributional approaches establish statistically defensible baselines. Manual snapshots capture a single state of visibility but fail to account for model inconsistency documented in peer-reviewed research from ACL and NeurIPS. Distributional sampling executes prompts repeatedly and calculates confidence intervals at the topic-cluster level, which separates structural authority signals from stochastic noise.
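To illustrate why repeated sampling matters, the sketch below computes a confidence interval for an inclusion rate. The Wilson score interval is used here as one reasonable choice; the text does not specify which interval method distributional approaches rely on, and the run counts are made up for illustration.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half, center + half


# Same observed 50% inclusion rate at two hypothetical sample sizes.
small = wilson_interval(25, 50)
large = wilson_interval(500, 1000)
print(small, large)
```

The interval from 50 runs is much wider than the one from 1,000 runs, which is the sense in which a small one-off prompt batch yields an unstable estimate.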

How does the transition from manual prompt testing to advanced measurement frameworks look? The transition from manual prompt testing to advanced measurement frameworks looks like a shift from manual snapshots to automated and distributional tracking systems. Manual Prompt Testing captures isolated inclusion rates across limited prompts. Automated measurement executes hundreds or thousands of prompts across multiple Large Language Models. Automation reduces bias introduced by personalization layers and hardware variance.

2. Using Third-Party Visibility Trackers

Third-party visibility trackers measure brand AI visibility by calculating share of voice across structured prompt libraries and separating brand mentions from clickable citations inside generative AI responses. Third-party visibility trackers define success as appearing in 40 to 60% of relevant AI-generated answers. Third-party visibility trackers classify brands with an inclusion rate below 20% as invisible. GPTBot crawls about 1,500 pages for every 1 visitor click it refers, which indicates that inclusion matters more than traffic volume.
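The thresholds above can be sketched as an inclusion-rate calculation followed by a band label. The below-20% and 40%-and-above bands follow the text; the middle "developing" label, the brand names, and the naive substring matching are assumptions made for illustration.

```python
def inclusion_rate(responses, brand):
    """Fraction of responses that mention the brand (case-insensitive)."""
    if not responses:
        raise ValueError("responses must not be empty")
    needle = brand.lower()
    hits = sum(1 for text in responses if needle in text.lower())
    return hits / len(responses)


def classify_inclusion(rate):
    """Map an inclusion rate (0.0 to 1.0) to a visibility band."""
    if rate < 0.20:
        return "invisible"
    if rate < 0.40:
        return "developing"  # assumed label for the 20 to 40% range
    return "strong"


# Hypothetical responses collected for one prompt library.
responses = [
    "Top picks are Acme Backup and Globex.",
    "Consider Globex or Initech.",
    "Acme Backup leads this category.",
    "Initech is a common choice.",
    "Many teams use Globex.",
]
rate = inclusion_rate(responses, "Acme Backup")
print(rate, classify_inclusion(rate))
```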

How do advanced visibility trackers categorize brand positioning? Advanced visibility trackers categorize brand positioning by labeling appearances as leader, alternative, or niche option inside AI-generated recommendations. Tools that categorize AI visibility (Profound) evaluate framing context and mention frequency. Framing context measures narrative authority instead of raw mention counts. Structured categorization converts AI visibility from binary inclusion into a ranked authority hierarchy.

How to implement third-party visibility tracking operationally? Implement third-party visibility tracking through a 3-step operational framework. Firstly, map 15 to 20 conversational queries derived from sales calls, support tickets, and review platforms. Secondly, execute daily or weekly structured prompt runs through an AI visibility tracking tool (Ahrefs, Semrush, Profound). Thirdly, cross-reference the inclusion rate and citation share with traditional search metrics and CRM pipeline data. Source mapping identifies influential domains (G2, Capterra). Accuracy audits detect misinformation that distorts buyer research.

What are the primary ranking factors influencing AI visibility in tracking data? The primary ranking factors influencing AI visibility prioritize stable entity signals and third-party authority over page-level SEO metrics. LLMs prioritize authoritative aggregators (Wikipedia, Crunchbase, G2, GitHub, Reddit, TikTok). Extraction optimization increases inclusion probability because AI systems prioritize structurally consistent and semantically aligned content. Entity stability and citation density determine recurring inclusion.

What are the limitations of current AI visibility tracking tools? The limitations of current AI visibility tracking tools include statistical variance, cross-platform inconsistency, and weak revenue correlation. Inclusion rate varies across platforms even for identical prompts. Different AI visibility tracking tools often report conflicting results due to sampling differences. High AI visibility scores lose strategic value when pipeline growth, demo requests, and qualified lead volume remain unchanged.

3. API Integration For Continuous Tracking

API Integration for continuous tracking enables ongoing measurement of brand AI visibility through programmatic execution of structured prompt libraries across LLM APIs. API Integration replaces manual interface interaction with direct API requests. API Integration connects custom Python scripts to platforms (OpenAI GPT, Google Gemini). API Integration executes thousands of queries across locations and timeframes. API Integration parses text responses and `tool_calls` metadata to detect brand mentions and separate grounded outputs from hallucinations.
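The parsing step can be sketched without committing to a specific provider. In the example below, `ask` is a hypothetical callable that would wrap a real LLM API client, a stub stands in for it so the sketch runs offline, and only plain response text is handled (tool-call metadata is out of scope here).

```python
import re


def detect_brands(text, brands):
    """Count whole-phrase, case-insensitive mentions of each brand."""
    counts = {}
    for brand in brands:
        pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
        counts[brand] = len(re.findall(pattern, text, flags=re.IGNORECASE))
    return counts


def run_prompt_library(prompts, ask, brands):
    """Send each prompt through ask() and record brand mention counts."""
    rows = []
    for prompt in prompts:
        answer = ask(prompt)
        rows.append({"prompt": prompt, "mentions": detect_brands(answer, brands)})
    return rows


def fake_ask(prompt):
    # Stub response; replace with a wrapper around a real API client.
    return "Popular options include Acme Backup and Globex. Acme Backup is often recommended."


rows = run_prompt_library(
    ["best cloud backup tools"], fake_ask, ["Acme Backup", "Globex", "Initech"]
)
print(rows)
```

Passing the client in as a callable keeps the parsing logic testable and lets the same loop run against several models.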

What are the core technical components of AI visibility metrics implementation? The core technical components of AI visibility metrics implementation are scripting, data management, and model control. Scripting replaces fragile UI scraping with direct API calls that prevent CAPTCHA failures. Data management stores parsed outputs inside structured databases (Google BigQuery) for historical trend analysis and Business Intelligence reporting. Model control tests defined LLM variants (“instant”, “reasoning”) instead of default session outputs that vary by context.
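The data management component can be sketched with Python's built-in `sqlite3` module standing in for a warehouse such as Google BigQuery. The table name, column layout, and sample rows are assumptions for illustration, not a prescribed schema.

```python
import sqlite3


def store_results(conn, rows):
    """Persist parsed prompt results for later trend analysis."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS visibility_runs ("
        "run_date TEXT, model TEXT, prompt TEXT, brand TEXT, mentioned INTEGER)"
    )
    conn.executemany("INSERT INTO visibility_runs VALUES (?, ?, ?, ?, ?)", rows)
    conn.commit()


def inclusion_by_date(conn, brand):
    """Return (run_date, inclusion rate) pairs for one brand."""
    cur = conn.execute(
        "SELECT run_date, AVG(mentioned) FROM visibility_runs "
        "WHERE brand = ? GROUP BY run_date ORDER BY run_date",
        (brand,),
    )
    return cur.fetchall()


conn = sqlite3.connect(":memory:")  # in-memory database for the example
store_results(conn, [
    ("2025-01-01", "model_a", "best backup tools", "Acme Backup", 1),
    ("2025-01-01", "model_b", "best backup tools", "Acme Backup", 0),
    ("2025-01-02", "model_a", "best backup tools", "Acme Backup", 1),
    ("2025-01-02", "model_b", "best backup tools", "Acme Backup", 1),
])
trend = inclusion_by_date(conn, "Acme Backup")
print(trend)
```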

How are AI visibility metrics calculated and scored in API-based systems? AI visibility metrics are calculated through a composite weighted scoring model called the AI Visibility Index. AI visibility index applies the formula (SIR × 0.5) + (AI Mention Score × 0.3) + (Entity Frequency Score × 0.2). Entity frequency score measures cross-platform inclusion consistency across engines (ChatGPT, Perplexity). Composite scoring standardizes evaluation across models and reduces interpretive variance.
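The weighted formula above translates directly into code. This sketch assumes all three inputs share one scale (0 to 100 here); the text does not expand the SIR abbreviation or state the scale, so both are treated as given, and the input values are invented.

```python
def ai_visibility_index(sir, mention_score, entity_frequency_score):
    """Composite score: (SIR x 0.5) + (AI Mention Score x 0.3) + (Entity Frequency Score x 0.2)."""
    return sir * 0.5 + mention_score * 0.3 + entity_frequency_score * 0.2


# Hypothetical component scores on an assumed 0 to 100 scale.
index = ai_visibility_index(sir=60, mention_score=40, entity_frequency_score=50)
print(round(index, 2))
```

Because the weights sum to 1.0, the index stays on the same scale as its inputs, which keeps scores comparable across reporting periods.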

What operational advantages does API-based tracking provide for enterprise monitoring? API-based tracking provides scalability, data integrity, and cross-platform uniformity for enterprise monitoring. Scheduled batch execution monitors product lines and competitive landscapes through recurring prompt runs. Active retrieval bypasses static training cutoffs (GPT-4 October 2023 limit) to validate current pricing and brand references. Unified architecture maintains consistent evaluation across engines (ChatGPT, Claude, Gemini, Perplexity).

How does API Integration ensure compliance and risk mitigation? API Integration ensures compliance and risk mitigation through official Terms of Service alignment and elimination of unauthorized scraping. Direct API access prevents IP blocking and account suspension linked to UI automation. Structured logging generates reproducible audit trails for verification and regulatory review under frameworks (Computer Fraud and Abuse Act).

What are the performance differences between API-based tracking and UI scraping methods? API-based tracking differs from UI scraping in stability, accuracy, cost structure, data format, and bias management. The performance differences are listed below.

| Feature | API-Based Integration | UI Scraper Methods |
|---|---|---|
| Architecture Stability | Resistant to UI updates. | Breaks under CAPTCHA changes. |
| Behavioral Accuracy | Executes defined prompt environments. | Retrieves downgraded web segments. |
| Cost-Effectiveness | Scales after upfront development. | Requires recurring manual or vendor costs. |
| Data Format | Returns structured JSON. | Produces unstructured text requiring cleaning. |
| Bias Management | Removes personalization bias. | Introduces personalization variance. |

How do API-based AI visibility metrics improve data reliability and reduce bias? API-based AI visibility metrics improve reliability and reduce bias through standardized response collection across controlled environments. Systems execute prompts across devices, geographies, and configurations to mirror real usage patterns. Structured JSON outputs enable validation, aggregation, and anomaly detection at scale. Built-in validation detects hallucinations and inconsistent entity references, which preserves measurement integrity across competitive datasets.

4. Benchmarking AI Visibility Over Time

Organizations benchmark AI Visibility over time by tracking brand mention frequency, share of voice, citation accuracy, and sentiment trends across a fixed prompt library and defined competitor set. AI Visibility benchmarking measures brand presence inside LLM responses to determine relative authority. Brand mention frequency between 40 and 60% indicates a strong presence. Brand mention frequency below 20% indicates functional invisibility inside AI-generated answers.

What are the core metrics for measuring AI visibility and performance? The core metrics for measuring AI visibility and performance are brand mention frequency, share of voice, citation accuracy, sentiment analysis, and branded search volume. Brand mention frequency tracks appearance frequency across structured prompts. Share of voice compares inclusion against 3 to 5 competitors to define market position. Citation accuracy verifies correctness because 99.3% of LLM citations originate from open-access sources. Inaccurate citations reduce sales velocity because sales teams must correct misinformation. Sentiment analysis classifies portrayal as positive, neutral, or negative. Branded search volume tracks growth in brand-specific queries and correlates with increased AI visibility. High-fit ideal customer profile segments generate 5.1x higher lifetime value than generic segments, which increases benchmarking precision.
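Share of voice against a competitor set can be sketched as each brand's portion of all tracked mentions. The brand names and counts below are invented, and `share_of_voice` is an illustrative helper rather than any vendor's formula.

```python
def share_of_voice(mention_counts):
    """Return each brand's share of total mentions as a fraction."""
    total = sum(mention_counts.values())
    if total == 0:
        # No brand was mentioned at all; avoid division by zero.
        return {brand: 0.0 for brand in mention_counts}
    return {brand: count / total for brand, count in mention_counts.items()}


# Hypothetical mention counts for a brand and three competitors.
counts = {"Acme Backup": 30, "Globex": 50, "Initech": 15, "Umbrella": 5}
sov = share_of_voice(counts)
for brand, share in sorted(sov.items(), key=lambda item: item[1], reverse=True):
    print(f"{brand}: {share:.0%}")
```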

How do organizations establish an AI visibility benchmarking methodology? Organizations establish an AI visibility benchmarking methodology by defining a baseline and executing controlled conversational query sets. Firstly, map 15 to 20 full conversational queries derived from sales calls, support tickets, and Reddit discussions. Secondly, audit brand positioning and citation presence across platforms (ChatGPT, Perplexity) before optimization. Thirdly, apply monthly reporting cycles to isolate directional trends and reduce short-term model variance.
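One simple way to reduce short-term variance when reporting trends is a trailing rolling average over periodic inclusion rates. This is a sketch under assumed weekly data; the window size and sample values are illustrative.

```python
def rolling_average(values, window):
    """Trailing moving average; early points use as many values as exist."""
    if window <= 0:
        raise ValueError("window must be positive")
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


# Hypothetical weekly inclusion rates for one brand.
weekly_rates = [0.20, 0.40, 0.25, 0.45, 0.30, 0.50]
smoothed = rolling_average(weekly_rates, window=4)
print([round(value, 3) for value in smoothed])
```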

What is the relationship between AI search queries and click-through rates? The relationship between AI search queries and click-through rates reflects a zero-click environment where 73% of AI queries produce no external clicks. GPTBot generates 1 click per 1,500 crawled pages, which confirms that traffic volume fails to represent authority. AI-originated users convert at higher rates than traditional organic visitors because entry occurs at later funnel stages. CRM attribution captures AI-assisted conversions and reveals non-linear discovery paths.
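Separating AI-originated sessions from other traffic can be sketched as a referrer hostname check. The host list below is an assumed, non-exhaustive set that should be verified against real analytics data, and the session URLs are invented.

```python
from urllib.parse import urlparse

# Assumed, non-exhaustive list of AI assistant referrer hosts.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
    "claude.ai",
}


def is_ai_referral(referrer_url):
    """Return True when the referrer hostname is a known AI assistant."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return host in AI_REFERRER_HOSTS


def ai_referral_share(referrer_urls):
    """Fraction of sessions whose referrer is an AI assistant."""
    urls = list(referrer_urls)
    if not urls:
        return 0.0
    return sum(is_ai_referral(url) for url in urls) / len(urls)


# Hypothetical session referrers exported from an analytics tool.
sessions = [
    "https://chatgpt.com/",
    "https://www.google.com/search?q=backup",
    "https://www.perplexity.ai/search/abc",
    "https://news.example.org/article",
]
share = ai_referral_share(sessions)
print(share)
```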

What are the critical risks and strategic shifts in AI visibility management? The critical risks in AI visibility management are ranking fallacy, vanity metrics, and pipeline leakage. AI ranking position generates noise because LLM outputs vary by session context. High AI visibility scores lack value when revenue, demo requests, and qualified pipeline remain unchanged. Poor AI visibility causes silent disqualification during research phases when prospects encounter inaccurate or missing brand data. 71% of SEO professionals adapt to AI environments, yet many prioritize legacy rankings instead of conversational authority and citation accuracy.

Tools for Measuring Brand AI Visibility

The primary tools for measuring Brand AI Visibility are specialized AI visibility platforms that track brand mentions, citations, sentiment, and share of voice across generative AI systems. These tools execute structured prompt libraries, calculate AI visibility scores, and benchmark brand inclusion against competitors in platforms (ChatGPT, Gemini, Perplexity, and Claude). The primary tools for measuring Brand AI Visibility are listed below.

- LLM Visibility Tool by Search Atlas

- Profound

- Otterly.AI

- AI Visibility Toolkit by Semrush

- Brand Radar by Ahrefs

- Clearscope

- Peec AI

These tools collectively enable structured AI visibility monitoring, competitive benchmarking, and sentiment analysis across generative AI platforms. Selecting the appropriate tool depends on the required prompt volume, enterprise scalability, competitive depth, and integration with existing analytics infrastructure.

1. LLM Visibility Tool by Search Atlas

The LLM Visibility Tool by Search Atlas measures Brand AI Visibility by tracking brand, product, and domain mentions across LLM platforms (ChatGPT, Claude, and Gemini). The LLM Visibility Tool executes structured prompt libraries based on industry keywords and buying-intent queries to monitor how brands appear inside AI-generated answers. The LLM Visibility Tool quantifies brand presence through proprietary AI Visibility Scores and Share of Model (SoM) percentages, which reflect inclusion within AI training data influence and Retrieval-Augmented Generation (RAG) outputs.

What are the primary quantitative metrics inside the LLM Visibility Tool? The primary quantitative metrics inside the LLM Visibility Tool are AI Visibility Score, Share of Model (SoM), Citation Frequency, and Rank Tracking. These metrics standardize performance comparison across multiple LLM environments and establish competitive baselines. The primary quantitative metrics are listed below.

| Metric | Definition | Measurement Unit | Primary Function |
|---|---|---|---|
| AI Visibility Score | Proprietary brand presence value | Numerical Score | Quantifies brand presence relative to competitors |
| Share of Model (SoM) | Total brand mention volume across tracked prompts | Percentage (%) | Calculates proportional inclusion within industry queries |
| Citation Frequency | Direct source attribution count | Integer Count | Tracks links to brand domains as authoritative sources |
| Rank Tracking | Recommendation list placement | Numerical Position | Monitors whether the brand appears 1st vs. 5th in AI responses |

How does qualitative analysis enhance measurement accuracy in the LLM Visibility Tool? Qualitative analysis enhances measurement accuracy by evaluating sentiment framing and source attribution within AI-generated answers. Sentiment analysis classifies brand mentions as positive, neutral, or negative to assess narrative positioning. Source attribution identifies specific URLs or third-party domains that LLMs reference when generating brand information. Competitor benchmarking compares AI Visibility Scores against rival brands to detect market share gaps in conversational authority.

What is the platform-specific methodology for optimization using the LLM Visibility Tool? The platform-specific optimization methodology within the LLM Visibility Tool involves content gap identification, trend monitoring, and prompt-level performance tracking. Content gap identification isolates prompts where brand visibility equals 0% to prioritize targeted SEO or PR initiatives. Trend monitoring tracks fluctuations in the AI Visibility Score over weekly or monthly intervals to evaluate campaign influence. Prompt tracking determines which brands occupy top recommendation positions, enabling optimization for first-position inclusion within AI-generated lists.
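Content gap identification, as described above, can be approximated outside any specific tool by listing prompts where the brand never appears. This sketch assumes responses have already been collected per prompt; the helper name, prompts, and brands are hypothetical, and untested prompts are skipped rather than counted as gaps.

```python
def find_content_gaps(prompt_responses, brand):
    """Return prompts whose collected responses never mention the brand."""
    needle = brand.lower()
    gaps = []
    for prompt, responses in prompt_responses.items():
        # Skip prompts with no responses: 0% visibility needs at least one run.
        if responses and all(needle not in text.lower() for text in responses):
            gaps.append(prompt)
    return gaps


# Hypothetical collected responses keyed by prompt.
collected = {
    "best cloud backup tools": ["Acme Backup and Globex", "Globex"],
    "backup for law firms": ["Globex", "Initech"],
    "untested prompt": [],
}
gaps = find_content_gaps(collected, "Acme Backup")
print(gaps)
```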

How do organizations implement the LLM Visibility Tool operationally? Organizations implement the LLM Visibility Tool by defining a structured prompt set aligned with high-intent industry queries and tracking performance on a recurring schedule. Teams upload or configure prompt libraries that reflect customer buying language, monitor AI Visibility Score and SoM weekly, and export citation data for source-layer audits. Continuous benchmarking ensures the brand maintains 40 to 60% inclusion rates and identifies declines below 20%, which signal competitive displacement within AI-generated answer environments.

2. Profound

Profound measures Brand AI Visibility by tracking Share of Voice, sentiment framing, citation frequency, and competitive positioning across major Large Language Models (ChatGPT, Perplexity, Google Gemini, Microsoft Copilot, Claude, Meta AI, Grok, DeepSeek, Google AI Overviews). Profound benchmarks brand presence inside conversational search environments. Profound analyzes how frequently and how favorably a brand appears inside AI-generated answers viewed by over 100 million daily AI searchers.

What are the core visibility and sentiment metrics provided by Profound? The core metrics provided by Profound are Share of Voice, Sentiment Analysis, Citation Tracking, and Shopping Insights. Share of Voice measures total brand inclusion across tracked prompts relative to competitors. Sentiment Analysis classifies AI-generated framing as positive, neutral, or negative. Citation Tracking identifies URLs referenced by Large Language Models during answer generation. Shopping Insights evaluates product visibility inside ChatGPT shopping responses to compare retail positioning across categories.

How does Profound use technical and search analytics for LLM coverage? Profound uses multi-model coverage, query fanout analysis, agent analytics, and international segmentation to measure Brand AI Visibility at scale. Multi-model coverage tracks performance across more than 10 AI engines. Query fanout analysis maps related sub-queries generated during response construction. Agent analytics detect crawlability barriers and structured data gaps that limit indexing. International segmentation measures performance across countries and languages. The core analytic categories are listed below.

| Analytic Category | Specific Capabilities | Primary Objective |
|---|---|---|
| LLM Coverage | Tracks 10+ models (Meta AI, Grok, DeepSeek) | Measure visibility across the AI ecosystem. |
| Query Analysis | Prompt tracking and fanout mapping | Identify discovery pathways for inclusion. |
| Agent Analytics | Crawlability and structured data audits | Improve indexing and retrieval accuracy. |
| International Data | Multi-country and multi-language tracking | Measure geographic performance trends. |

What channel-specific tracking features does Profound provide? Profound provides channel-specific tracking for Answer Engine Optimization, demand analytics, and PR influence measurement. Answer Engine Optimization tracking measures inclusion inside zero-click answer sets. Demand analytics separates bot-driven traffic from human-driven engagement. PR influence measurement evaluates how earned media placements affect AI-generated narrative framing.

What platform access options and pricing tiers does Profound offer? Profound offers four pricing tiers that scale measurement capacity from entry-level analysis to enterprise compliance. The available plans are listed below.

| Plan Level | Pricing and Access | Key Features |
|---|---|---|
| AEO Report | Free | AI search visibility analysis and optimization. |
| Starter Plan | $99 per month | ChatGPT-only tracking. |
| Growth Plan | 7-day free trial | Multi-LLM tracking and advanced analytics. |
| Enterprise Plan | Custom pricing | Full reporting with SOC 2 Type II and HIPAA compliance. |

How does Profound’s audience scale influence benchmarking strategy? Profound benchmarks Brand AI Visibility against a global AI search ecosystem serving over 100 million daily users. Benchmark targets align with high-volume conversational environments. Benchmark alignment ensures competitive positioning inside AI-native discovery systems, where inclusion frequency determines authority hierarchy.

3. Otterly.AI

Otterly.AI measures Brand AI Visibility by tracking brand mentions, sentiment framing, and citation presence across generative AI platforms (ChatGPT, Perplexity, Google AI Overviews). Otterly.AI replaces manual workflows and Puppeteer scripts with automated tracking. Otterly.AI evaluates how AI models describe and position a brand inside synthesized answers. Otterly.AI centralizes analytics inside the Brand Report interface, where content gaps appear when competitors gain inclusion, and the primary brand remains absent.

What are the core metrics within the Otterly.AI Brand Visibility Index? The core metrics within the Otterly.AI Brand Visibility Index are Brand Mention Frequency, Brand Position Context, Competitive Positioning, and AI Reputation Index. Brand Mention Frequency measures total appearances across tracked prompts. Brand Position Context identifies ranking placement from 1st to 10th position. Competitive Positioning compares the Inclusion Rate against defined rivals. AI Reputation Index classifies mentions as favorable or general to quantify narrative tone.

How does Otterly.AI track the AI search funnel and success KPIs? Otterly.AI tracks AI visibility performance through four funnel KPIs: Brand Coverage, AI Bot Traffic, Branded and Direct Traffic, and Product Signups. Brand Coverage measures inclusion frequency across prompts. AI Bot Traffic records crawler and referral activity. Branded and Direct Traffic captures recall-driven visits. Product Signups measures conversion outcomes. Share of AI Citations compares brand inclusion against answer competitors (Reddit, Wikipedia, Quora). Funnel analysis aligns AI inclusion with Net Promoter Score metrics.

What technical features does Otterly.AI provide for diagnosing visibility shifts? Otterly.AI provides time-lapse replay and diagnostic causation tools to detect visibility fluctuations across platforms. Time-lapse replay visualizes week-over-week Inclusion Rate movement. Diagnostic panels identify crawlability barriers and backlink loss that reduce authority signals. Diagnostic analysis separates structural authority decline from short-term model variance.

How do organizations implement the Otterly.AI strategic cycle? Organizations implement the Otterly.AI strategic cycle through tracking, diagnosing, and adapting. Firstly, monitor cross-platform movement in Brand Visibility Index scores. Secondly, analyze the technical causation behind inclusion changes inside diagnostic panels. Thirdly, adjust marketing, content, and PR execution to restore or increase the Inclusion Rate. KPI definitions remain documented at help.otterly.ai/brand-report-kpi-definition to maintain methodological consistency.

4. AI Visibility Toolkit by Semrush

The AI Visibility Toolkit by Semrush measures Brand AI Visibility through AI Visibility Score, Share of Voice, Sentiment, and Perception across AI-generated responses. AI Visibility Score compares brand mentions against the median mention volume of top competitors. Share of Voice quantifies proportional inclusion within tracked prompts. Sentiment and Perception classify qualitative tone and narrative framing. These metrics standardize authority evaluation inside conversational AI search environments.

What specialized tools are included in the AI Visibility Toolkit by Semrush? The AI Visibility Toolkit by Semrush includes six specialized tools that diagnose gaps and track inclusion performance. The specialized tools are listed below.

- Visibility Overview provides global or regional benchmarks of overall AI presence.

- Competitor Research identifies prompts where competitors appear, but the target brand does not.

- Prompt Research functions as AI keyword research by measuring topic volume, difficulty, and intent.

- Brand Performance Reports analyze LLM data from ChatGPT and Google AI Mode to generate strategic recommendations.

- Prompt Tracking enables daily monitoring of high-value prompts to detect drops or gains in inclusion.

- AI Search Site Audit uses the AI Search Health widget to identify technical blockers that prevent AI bots from crawling content, with a cap of 100 pages per audit.

What is the data frequency and geographic scope of the AI Visibility Toolkit? The AI Visibility Toolkit refreshes most modules every 24 hours and provides broad international coverage. Visibility Overview, Competitor Research, Prompt Research, and Prompt Tracking update daily. Brand Performance Reports update weekly. Site Audits run on demand. Coverage spans 15 core countries, including the United States, the United Kingdom, Canada, and India. Brand Performance supports 68,000 location and language combinations. Prompt Tracking extends to 220+ countries and territories for global benchmarking.

What are the subscription limits and scalability options of the AI Visibility Toolkit? The AI Visibility Toolkit operates under defined subscription limits with structured scalability options. The standard tier costs $99 per month. The capacity limits are listed below.

| Feature | Standard Limit ($99/mo) | Scalability Options |
|---|---|---|
| Domains Tracked | 1 Domain | $99 per additional domain |
| Prompt Tracking | 25 Prompts | $60 per 50 additional prompts |
| Daily AI Analysis | 300 Queries | Included in standard tier |
| Prompt Research | 1,000 Queries | Included in standard tier |
| Site Audit Pages | 100 Pages | Capped per audit |
| CSV Exports | 10 Daily | 1,000 rows per export |
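
To make the scaling arithmetic concrete, the sketch below estimates a monthly cost from the limits listed above. It rests on two assumptions that the table does not state: additional prompts are sold only in whole blocks of 50, and the 25 included prompts are pooled across all domains. The function name `monthly_cost` is hypothetical.

```python
import math

BASE_PRICE = 99          # USD per month, standard tier
INCLUDED_DOMAINS = 1
INCLUDED_PROMPTS = 25
EXTRA_DOMAIN_PRICE = 99  # USD per additional domain
PROMPT_PACK_SIZE = 50    # additional prompts per pack
PROMPT_PACK_PRICE = 60   # USD per pack


def monthly_cost(domains: int, prompts: int) -> int:
    """Estimate the monthly cost in USD for a given tracking footprint.

    Assumptions: prompt packs are sold whole (partial packs round up),
    and included prompts are shared across domains.
    """
    extra_domains = max(0, domains - INCLUDED_DOMAINS)
    extra_prompts = max(0, prompts - INCLUDED_PROMPTS)
    packs = math.ceil(extra_prompts / PROMPT_PACK_SIZE)
    return BASE_PRICE + extra_domains * EXTRA_DOMAIN_PRICE + packs * PROMPT_PACK_PRICE
```

Under these assumptions, tracking 3 domains and 100 prompts costs 99 + 2 × 99 + 2 × 60 = 417 USD per month.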

What are the technical requirements for operating the AI Visibility Toolkit? Operating the AI Visibility Toolkit requires updated browser software and compatible operating systems for accurate rendering and interface stability. Outdated browsers distort dashboard outputs and restrict reporting access. Technical compatibility enables daily refresh cycles, export functionality, and structured reporting features. Measurement interpretation depends on transparent primary data sources and defined platform parameters.

5. Brand Radar by Ahrefs

Brand Radar by Ahrefs measures Brand AI Visibility by quantifying AI Share of Voice, Mentions, Citations, Impressions, Search Query Count, and Branded Search Interest across AI chat interactions. Brand Radar by Ahrefs tracks inclusion inside AI-generated answers and compares inclusion against defined competitors. Brand Radar by Ahrefs converts conversational presence into structured quantitative metrics for competitive benchmarking and authority evaluation.

What are the core quantitative metrics inside Brand Radar by Ahrefs? The core quantitative metrics inside Brand Radar by Ahrefs are AI Share of Voice, Mentions, Citations, Impressions, Search Query Count, and Branded Search Interest. These metrics standardize performance measurement across AI platforms. The core metrics are listed below.

| Metric | Definition | Purpose |
|---|---|---|
| AI Share of Voice | Percentage of mentions versus competitors. | Competitive benchmarking. |
| Mentions | Count of brand name generations. | Volume tracking. |
| Citations | Frequency of explicit website links. | Source authority measurement. |
| Impressions | Estimated exposure value from response frequency. | Reach estimation. |
| Search Query Count | Diversity of tracked questions. | Topic breadth analysis. |
| Branded Search Interest | Longitudinal search volume growth. | Awareness correlation. |

How does Brand Radar by Ahrefs provide granular filtering and analysis? Brand Radar by Ahrefs provides granular filtering through platform index selection, query structure segmentation, and citation source isolation. Platform segmentation refines datasets across indexes (Google AI Overviews, ChatGPT, Perplexity). Query filters track brand presence in queries that contain, exclude, or mention a brand name. Citation filter “Citation contains {domain}” isolates responses where owned domains function as primary cited sources.

What comparative reporting modes does Brand Radar by Ahrefs offer? Brand Radar by Ahrefs offers three reporting modes (Only Brand, With Others, and Others Only). Only Brand isolates exclusive brand mentions. With Others identifies shared inclusion alongside competitors in recommendation lists. Others Only highlights prompts where competitors appear and the target brand remains absent. These modes clarify the relative Share of Voice inside compressed AI answer sets.
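The three modes amount to a simple set comparison per response. The sketch below is an illustration of that logic only, not Ahrefs' implementation; it assumes each response has already been reduced to the set of brand names it mentions, and `reporting_mode` is a hypothetical helper.

```python
from typing import Optional


def reporting_mode(response_brands: set[str], brand: str) -> Optional[str]:
    """Classify one AI response into a comparative reporting mode.

    Returns None when the response mentions no tracked brand at all.
    """
    others = response_brands - {brand}
    if brand in response_brands:
        # Brand is present: alone, or alongside competitors.
        return "With Others" if others else "Only Brand"
    # Brand is absent: only competitors (if any) were mentioned.
    return "Others Only" if others else None
```

Counting responses per mode across a prompt library shows how often the brand is named alone, shares the answer, or is displaced.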

How does cross-platform tracking expand AI visibility monitoring in Brand Radar by Ahrefs? Brand Radar by Ahrefs expands AI visibility monitoring through web, video, and community signal tracking. Web tracking measures citations from editorial sites and forums. Video tracking analyzes YouTube thumbnails, titles, descriptions, and transcripts. Reddit tracking identifies brand mentions within Reddit threads that rank in Google Search. The Cited Domains Report highlights external platforms (Reddit, Zapier) frequently referenced by AI engines.

What qualitative audit capabilities support Brand AI Visibility assessment in Brand Radar by Ahrefs? Brand Radar by Ahrefs supports qualitative audits that evaluate accuracy, sentiment, differentiation, and authority framing inside AI-generated answers. Audit scope covers Main Brand, Product Brands, Proprietary Features, and Executive Personal Brands. Performance indicators measure accuracy, positive framing, uniqueness, and lead mention status. Monthly audits maintain relevance amid rapid model updates.

What are the technical access requirements for using Brand Radar by Ahrefs? Brand Radar by Ahrefs requires prompt-based data sourcing and defined Ahrefs subscription tiers. Custom prompt packages start at $50 per month and use real prompt databases to reduce personalization bias. Advanced Search Demand and Web Visibility reports require paid Ahrefs plans. Enterprise plans and “All indexes” add-ons enable unlimited project tracking.

How is traffic data integrated with Brand Radar by Ahrefs reports? Traffic data integrates with Brand Radar by Ahrefs through cross-referencing the Cited Pages Report with Ahrefs Web Analytics or Google Analytics 4. Integration measures whether AI citations generate measurable click-through traffic. Correlation analysis between Mentions, Citations, and session-level data quantifies behavioral impact beyond conversational inclusion.
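One way to run the correlation step is a plain Pearson coefficient over paired weekly series, for example citation counts exported from a cited-pages report against AI-referred sessions from an analytics tool. The sketch below assumes both series are already aligned by week; the function name and the data layout are assumptions, and a coefficient alone does not establish that citations cause the traffic.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (spread_x * spread_y)
```

Values near 1 suggest citations and sessions move together; values near 0 suggest that inclusion is not translating into measurable visits.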

6. Clearscope

Clearscope measures Brand AI Visibility by evaluating semantic authority, topical coverage, and content depth that influence inclusion within AI-generated answers. Brand AI Visibility refers to a brand’s integration into the foundational understanding of Large Language Models (ChatGPT, Gemini, and Perplexity). Clearscope supports this integration by optimizing content so that AI systems treat the brand as a trusted topical authority rather than as a single blue link in search results.

How do visibility metrics shift from clicks to authority when using Clearscope? Visibility metrics shift from clicks to authority by prioritizing topical integration and entity relevance over keyword rankings and click-through rates. AI systems evaluate how deeply a brand is embedded within a subject cluster, which determines whether the brand appears in synthesized outputs. Clearscope improves authority signals by expanding semantic coverage so that AI engines reference the brand during summarization rather than treating it as an isolated search input.

What sophisticated user engagement signals influence AI visibility success? Sophisticated user engagement signals that influence AI visibility success include dwell time, bounce rate, click-through rate, and repeat visits. AI systems interpret these engagement indicators as proxies for content relevance and trustworthiness. High dwell time and repeat visitation patterns signal sustained authority, which strengthens the probability that AI models include the brand in topic-level synthesis.

What strategic content frameworks increase Brand AI Visibility with Clearscope? Strategic frameworks that increase Brand AI Visibility with Clearscope rely on topic clusters, semantic density expansion, and content hub architecture. Clearscope encourages replacing single-keyword targeting with comprehensive topic clusters that cover adjacent subtopics and long-tail variations. Expanding coverage across related concepts strengthens entity categorization and signals subject-matter expertise, which increases inclusion probability within AI summaries.

How does the E-E-A-T framework influence AI discovery when optimizing with Clearscope? The E-E-A-T framework influences AI discovery by reinforcing Experience, Expertise, Authoritativeness, and Trustworthiness signals across structured content. AI models evaluate consistency of messaging, authoritative citations, and external validation signals when determining source reliability. Clearscope optimization improves topical clarity and structured authority, which strengthens machine confidence in content extraction and inclusion.

What technical requirements enable AI discovery of Clearscope-optimized content? The technical requirements that enable AI discovery of Clearscope-optimized content include structured data implementation, Answer Engine Optimization formatting, and performance standards. The technical requirements are listed below.

| Requirement | Technical Implementation | Primary Function |
|---|---|---|
| Structured Data | Schema Markup | Makes content machine-readable for products, FAQs, and entities |
| AEO | Conversational Q&A formatting | Structures content to answer explicit user questions directly |
| Formatting | Clear heading hierarchy | Improves digestibility and extraction clarity |
| Core Web Vitals | Speed and stability optimization | Ensures crawl efficiency and usability |
| Mobile-First | Mobile indexing compliance | Aligns with primary indexing standards |

How do summarization techniques and metadata enhance AI synthesis? Summarization techniques and metadata enhance AI synthesis by improving extractability and contextual clarity. Concise summaries provide structured takeaways that AI models lift into generated answers. Descriptive alt text and contextual metadata supply additional entity signals that assist AI in understanding visual and structural components. These enhancements increase the likelihood that AI systems attribute authoritative statements directly to the brand during response generation.

7. Peec AI

Peec AI measures Brand AI Visibility using Visibility Score, which represents the percentage of AI-generated responses that mention a specific brand. Visibility Score is calculated by dividing total brand mentions by total tracked responses and multiplying by 100. Visibility Score functions as the foundational metric for quantifying how frequently a brand appears within AI interfaces relative to a defined prompt set.

What are the core metrics used by Peec AI to measure brand visibility? The core metrics used by Peec AI are Visibility Score, Brand Visibility, and Source Visibility. Visibility Score measures proportional inclusion across prompts. Brand Visibility tracks explicit brand name mentions within AI responses. Source Visibility measures instances where an AI cites a domain or URL as a reference, even if the brand name does not appear directly. Together, these metrics provide a dual-layer view of recognition and authority.

What key performance indicators structure brand visibility analysis in Peec AI? The key performance indicators in Peec AI differentiate between quantitative inclusion and qualitative authority strength. These indicators identify whether a brand functions as a trusted source or merely as a recognized entity. The key performance indicators are listed below.

| Key Performance Indicator | Measurement Type | Definition |
|---|---|---|
| Mention Frequency | Quantitative | Tracks how often the brand name appears in AI outputs |
| Citation Volume | Quantitative | Measures how frequently the domain is used as a foundational source |
| Source-to-Brand Gap | Qualitative | Differentiates trusted references from recognized entities |
| Brand Recognition | Qualitative | Evaluates the AI association of the brand with industry topics |
| Competitive Positioning | Comparative | Indicates relative advantage versus competitors in AI interfaces |

What analytical tools inside Peec AI support brand visibility tracking? Peec AI supports visibility tracking through centralized dashboards, prompt-level analytics, source-level analysis, and thematic filtering. The Dashboard aggregates overall performance metrics across prompts and competitors. The Prompts Page provides granular longitudinal data for each tracked query. The Sources Page analyzes citation behavior at the domain and URL level. Tag-Based Filtering groups prompts into thematic categories to identify topical strengths and weaknesses.

How does gap analysis within Peec AI improve the Brand AI Visibility strategy? Gap analysis within Peec AI improves the Brand AI Visibility strategy by identifying discrepancies between recognition and authority signals. High Source Visibility without Brand Mentions indicates that AI models trust the content but do not associate the brand entity with the topic. High Brand Mentions without Citation Volume indicates name familiarity without authoritative trust. These diagnostic insights allow organizations to close semantic gaps, strengthen entity consistency, and benchmark progress in AI model familiarity over time.
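The gap logic can be sketched from the Visibility Score formula given earlier (mentions divided by tracked responses, multiplied by 100). The `Response` record, the function names, and the `high` and `low` thresholds below are illustrative assumptions; Peec AI does not publish these cut-offs in the text above.

```python
from dataclasses import dataclass


@dataclass
class Response:
    mentions_brand: bool  # brand name appears in the answer text
    cites_domain: bool    # brand's domain appears among cited sources


def visibility_score(hits: int, total_responses: int) -> float:
    """Hits divided by tracked responses, times 100 (0.0 if no responses)."""
    return 100.0 * hits / total_responses if total_responses else 0.0


def gap_diagnosis(responses: list[Response], high: float = 30.0, low: float = 10.0) -> str:
    """Compare brand visibility with source visibility.

    `high` and `low` are assumed thresholds in percentage points.
    """
    total = len(responses)
    brand = visibility_score(sum(r.mentions_brand for r in responses), total)
    source = visibility_score(sum(r.cites_domain for r in responses), total)
    if source >= high and brand <= low:
        return "trusted source, weak brand association"
    if brand >= high and source <= low:
        return "recognized name, weak source authority"
    return "no pronounced gap"
```

The first outcome points toward entity and branding work on already-cited content; the second points toward building citable source material.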

What Metrics Are Important for Measuring Brand AI Visibility?

The metrics that are important for measuring Brand AI Visibility are AI Share of Voice (SOV), AI Citation Frequency, Position in AI Overviews, Brand Sentiment in AI Responses, Traffic from AI Search, and AI Visibility Index. These metrics quantify brand inclusion, authority, positioning, perception, and downstream behavioral impact within AI-generated answers. The key metrics for measuring Brand AI Visibility are listed below.

- AI Share of Voice (SOV)

- AI Citation Frequency

- Position in AI Overviews

- Brand Sentiment in AI Responses

- Traffic from AI Search

- AI Visibility Index

These six metrics collectively measure inclusion, authority, hierarchy, perception, behavioral impact, and composite performance within AI-generated search ecosystems. Tracking them together ensures that Brand AI Visibility reflects both conversational presence and measurable business influence rather than isolated mention counts.

AI Share of Voice (SOV)

AI Share of Voice is important for Brand AI Visibility because AI Share of Voice quantifies proportional inclusion inside AI-generated answers relative to competitors. AI Share of Voice measures how often a brand appears across a defined prompt set against the top 3 to 5 competitors. AI Share of Voice functions as a competitive authority indicator because inclusion inside conversational answer sets determines entry or exclusion from AI-driven consideration lists.

How does Excess Share of Voice predict future market dominance? Excess Share of Voice predicts future market dominance when AI Share of Voice exceeds Share of Market. Growth accelerates if AI Share of Voice remains greater than Share of Market. Decline accelerates if AI Share of Voice falls below Share of Market because competitors dominate AI-native discovery channels. AI Share of Voice operates as a leading indicator while AI adoption increases.
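The relationship can be written as a difference in percentage points. The sketch below computes AI Share of Voice from mention counts over one prompt set and subtracts Share of Market; the function names are hypothetical, and Share of Market is assumed to be supplied from a separate source.

```python
def share_of_voice(mentions: dict[str, int], brand: str) -> float:
    """Brand mentions as a percentage of all tracked brands' mentions."""
    total = sum(mentions.values())
    if total == 0:
        raise ValueError("no mentions recorded for any brand")
    return 100.0 * mentions.get(brand, 0) / total


def excess_share_of_voice(sov: float, share_of_market: float) -> float:
    """Positive values mean AI Share of Voice exceeds Share of Market."""
    return sov - share_of_market
```

With illustrative counts of 100 mentions for the brand and 400 for a single competitor, Share of Voice is 20%; against a 12% Share of Market, the excess is 8 points.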

Why is mental availability critical for appearing in AI recommendation sets? Mental availability is critical because AI systems return only 3 to 5 brand recommendations per query. High AI Share of Voice increases the probability of occupying one of these limited positions. Low AI Share of Voice removes the brand from the AI-generated consideration set during high-intent evaluation prompts.

What role does narrative control play in AI-native discovery environments? Narrative control determines which brands AI systems reference during product comparisons and category definitions. AI systems synthesize answers from reviews, editorial coverage, and product descriptions instead of repeating marketing slogans. Measuring AI Share of Voice ensures presence in high-intent prompts (“best [category] under $25”) rather than competitor-dominated narratives.

How does relative performance benchmarking reveal competitive vulnerabilities? Relative performance benchmarking reveals competitive vulnerabilities by comparing AI Share of Voice across identical prompt datasets. A brand with 100 mentions underperforms if a competitor records 400 mentions within the same prompt library. AI Share of Voice analysis identifies visibility gaps where traditional ranking exists, but AI inclusion does not, which exposes conversational authority weaknesses.

Why is identifying platform variance essential for strategic resource allocation? Identifying platform variance is essential because AI Share of Voice fluctuates across models (ChatGPT, Perplexity). A brand holding 40% AI Share of Voice in ChatGPT and 15% in Perplexity exhibits uneven authority distribution. Platform-specific AI Share of Voice measurement directs allocation toward defense of strong positions and correction of weak positions.
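A short helper makes the variance explicit. This sketch takes per-platform AI Share of Voice values (in percent) and returns the strongest platform, the weakest platform, and the gap between them; `platform_spread` is a hypothetical name.

```python
def platform_spread(sov_by_platform: dict[str, float]) -> tuple[str, str, float]:
    """Return (strongest platform, weakest platform, gap in points)."""
    if not sov_by_platform:
        raise ValueError("at least one platform is required")
    strongest = max(sov_by_platform, key=sov_by_platform.get)
    weakest = min(sov_by_platform, key=sov_by_platform.get)
    gap = sov_by_platform[strongest] - sov_by_platform[weakest]
    return strongest, weakest, gap
```

For the example in the text, `platform_spread({"ChatGPT": 40.0, "Perplexity": 15.0})` reports a 25-point gap, with Perplexity as the platform needing correction.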

How do AI Share of Voice trends function as an early warning system? AI Share of Voice trends function as an early warning system by detecting competitive shifts before revenue metrics change. Month-over-month AI Share of Voice analysis reveals emerging competitors and declining narrative dominance. Benchmarking against Narrative Owners (media outlets, authoritative directories) exposes structural changes in citation sources that influence AI recommendations. Structured AI Share of Voice monitoring preserves authority inside compressed AI answer environments.
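Month-over-month monitoring can be reduced to a simple drop detector. The sketch assumes a chronological list of monthly AI Share of Voice values in percent; the 5-point default threshold is an arbitrary assumption and should be tuned to the volatility of the prompt set.

```python
def sov_alerts(monthly_sov: list[float], drop_threshold: float = 5.0) -> list[int]:
    """Return indices of months whose SOV fell by more than
    `drop_threshold` points compared with the previous month."""
    return [
        i
        for i in range(1, len(monthly_sov))
        if monthly_sov[i - 1] - monthly_sov[i] > drop_threshold
    ]
```

A series of 30, 31, 24, 23 flags only the third month (index 2), where the value fell 7 points.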

AI Citation Frequency

AI Citation Frequency is important for Brand AI Visibility because AI Citation Frequency measures how often a brand’s domain appears as a cited source inside AI-generated answers. AI Citation Frequency quantifies source authority within compressed answer environments. AI Citation Frequency determines whether a brand functions as a foundational reference or remains excluded from AI synthesis layers.

How does the rise of zero-click searches increase the importance of AI Citation Frequency? The rise of zero-click searches increases the importance of AI Citation Frequency because 60% of Google queries end without a click and 80% of consumers rely on AI summaries instead of visiting websites. Loss of citation removes influence at the decision stage, even if the brand ranks in traditional search.

Why is the 527% surge in AI-referred sessions a strategic signal for citation tracking? The 527% surge in AI-referred sessions between January and May 2025 makes AI Citation Frequency a growth-critical metric. AI platforms operate at large scale: ChatGPT records 4.5 billion monthly visits, and Perplexity handles 500 million monthly searches. Tracking AI Citation Frequency captures authority exposure inside high-volume AI discovery channels.

Why does result compression create competitive risk that requires citation monitoring? Result compression creates competitive risk because Large Language Models reduce 10 blue links to 2 to 7 cited domains per answer. Limited citation slots increase exclusion probability. Monitoring AI Citation Frequency alongside AI Share of Voice reveals Share of Authority across defined prompt libraries.

How does structured data influence AI Citation Frequency? Structured data influences AI Citation Frequency because 81% of AI-cited pages implement schema markup. Schema markup provides machine-readable entity clarity that satisfies grounding requirements. Measuring URL Citation Rate evaluates whether structured architecture supports authoritative inclusion.

Why does answer-first content architecture increase AI Citation Frequency? Answer-first content architecture increases AI Citation Frequency by 30 to 40% because answer-first formatting prioritizes extractable facts and direct statements. AI engines prioritize Information Gain expressed through unique statistics and structured explanations. Citation tracking indicates content extractability and informational depth.

How does the virtuous cycle of AI authority reinforce citation dominance? The virtuous cycle of AI authority reinforces citation dominance because frequently cited domains become preferred reference points in future AI responses. Early authoritative inclusion compounds citation frequency over time. Continuous AI Citation Frequency monitoring protects long-term competitive positioning as 25% of organic traffic shifts to AI chatbots by 2026.

Position in AI Overviews

Position in AI Overviews is important for Brand AI Visibility because it determines whether a brand appears prominently inside AI-generated summaries or becomes functionally invisible. Position in AI Overviews measures ranking placement within synthesized answers, not traditional blue-link lists. Position in AI Overviews directly affects narrative authority, engagement probability, and competitive inclusion inside Generative Engine Optimization (GEO) environments.

How does direct brand placement inside AI-generated answers impact authority? Direct brand placement inside AI-generated answers establishes narrative authority that exceeds the value of a simple citation link. In GEO environments, the highest outcome is integration of the brand name into the narrative response itself. Because OpenAI and Anthropic operate at crawl-to-visitor ratios of 1,500:1 and 60,000:1, respectively, compared to Google’s 18:1, direct mentions ensure recognition even when click-through rates remain low.

Why is the 0.664 correlation between off-site mentions and AI Overview visibility significant? The 0.664 correlation coefficient between off-site brand mentions and AI Overview visibility demonstrates that third-party context strongly influences AI positioning. This correlation indicates that editorial coverage, reviews, and external citations contribute to inclusion probability within AI summaries. Monitoring Position in AI Overviews allows brands to determine whether owned content or third-party references drive authority within model training data or retrieval layers.

Why is the fourth or fifth position a critical engagement threshold? The fourth or fifth position in AI Overviews represents a critical engagement drop-off point where user attention declines sharply. Although top placements in AI Overviews perform similarly to Position 6 in traditional search, placement beyond the third slot significantly reduces cognitive prominence. Measuring exact Position in AI Overviews ensures the brand remains inside the active engagement zone of AI-generated responses.

How does the 62% overlap between traditional SEO rankings and AI visibility justify separate measurement? The 62% overlap between high Google rankings and LLM visibility confirms that traditional SEO metrics do not reliably predict AI inclusion. Brands that rank in the top organic positions can still be absent from AI summaries. Conversely, over-optimizing for LLM extraction without balancing traditional SEO signals reduces organic traffic, as demonstrated in cases where traffic declined from 2,000 to 200 monthly visitors after AI-focused restructuring. Position in AI Overviews must be tracked independently of legacy ranking systems.

Why is high-frequency testing required to measure Position in AI Overviews accurately? High-frequency testing is required because Large Language Models are probabilistic and non-deterministic, which causes ranking variability across sessions. Daily testing identifies stable inclusion patterns rather than relying on monthly snapshots that capture noise. Since 99% of organizations rely on context-blind sampling models, structured multi-model tracking across ChatGPT, Gemini, and Perplexity provides a statistically defensible measurement of true brand standing in AI-generated answer hierarchies.
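Daily results can be smoothed with a trailing rolling average so that single-day swings from non-deterministic outputs do not read as trend changes. This is a minimal sketch; the 7-day default window is an assumption, and early values average over fewer days until the window fills.

```python
from collections import deque


def rolling_mean(values: list[float], window: int = 7) -> list[float]:
    """Trailing rolling mean over daily inclusion rates (or positions)."""
    if window < 1:
        raise ValueError("window must be at least 1")
    recent: deque = deque(maxlen=window)  # holds at most `window` latest values
    smoothed = []
    for value in values:
        recent.append(value)
        smoothed.append(sum(recent) / len(recent))
    return smoothed
```

Applying the same smoothing per model (ChatGPT, Gemini, Perplexity) keeps platform-level trends comparable.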

Brand Sentiment in AI Responses

Brand Sentiment in AI Responses is important for Brand AI Visibility because sentiment scoring determines whether a brand is recommended, neutrally cited, or excluded from AI-generated answers. Brand Sentiment in AI Responses measures the qualitative tone assigned to a brand within conversational outputs. Brand Sentiment in AI Responses directly influences recommendation probability, competitive displacement, and narrative authority inside compressed AI decision environments.

How does sentiment scoring influence the transition from citation to recommendation? Sentiment scoring influences the transition from citation to recommendation by evaluating tone and authority framing beyond simple mention frequency. AI engines classify brands as leaders, viable alternatives, or baseline entries based on contextual language patterns. Optimization programs typically establish a sentiment baseline between weeks 5 and 12 to ensure content aligns with model perception and triggers recommendation inclusion rather than passive citation.

Why is favorability critical during the compressed AI-driven decision journey? Favorability is critical because AI engines increasingly deliver direct recommendations instead of broad comparison lists. High positive sentiment increases the probability that a brand replaces a competitor inside the primary response slot. In a compressed journey where AI systems surface only a few options, favorable positioning directly influences market share capture.

How does sentiment prevent early-stage exclusion in B2B procurement cycles? Sentiment prevents early-stage exclusion because AI engines filter out brands perceived as unreliable or negatively framed during research-phase queries. Negative or weak sentiment removes brands from consideration before traditional demand generation begins. Systematic monitoring of Brand Sentiment in AI Responses protects revenue by ensuring the brand remains visible during high-value procurement evaluations.

How do E-E-A-T signals and machine confidence interact with sentiment? E-E-A-T signals and machine confidence interact with sentiment by determining whether AI systems trust a brand as a reliable source. AI engines evaluate Experience, Expertise, Authoritativeness, and Trustworthiness signals when constructing answer sets. If sentiment signals unreliability or structured data produces low parsing confidence, AI systems exclude the brand entirely to avoid propagating misinformation.

Why is accurate entity recognition essential for maintaining positive sentiment visibility? Accurate entity recognition is essential because incorrect clustering distorts sentiment and misclassifies brand authority. Monitoring entity correctness ensures AI engines associate the brand with the correct industry and expertise domain. Clear, structured, and helpful content reinforces positive sentiment signals and prevents placement in irrelevant or low-value search clusters, which protects long-term Brand AI Visibility stability.

Traffic from AI Search

Traffic from AI Search is important for Brand AI Visibility because it quantifies behavioral impact from AI-generated discovery environments where users increasingly bypass traditional websites. Traffic from AI Search measures referral sessions, assisted conversions, and branded query growth originating from platforms (ChatGPT and Perplexity). Traffic from AI Search connects conversational inclusion with measurable business outcomes in an ecosystem where 44% of users prefer AI summaries over visiting brand websites.
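Referral sessions from AI platforms can be separated from other traffic by inspecting the referrer hostname. The sketch below assumes a hand-maintained hostname map; the hostnames listed and the function names are illustrative assumptions and should be checked against the referrers that actually appear in your analytics export.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
}


def ai_source(referrer_url):
    """Return the AI platform name for a referrer URL, or None."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return AI_REFERRER_HOSTS.get(host)


def count_ai_sessions(referrers):
    """Count sessions per AI platform from a list of referrer URLs."""
    counts = {}
    for url in referrers:
        source = ai_source(url)
        if source:
            counts[source] = counts.get(source, 0) + 1
    return counts
```

Sessions that arrive without a referrer are not captured by this approach, so the counts are a lower bound on AI-originated traffic.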

How does shifting user behavior increase the necessity of measuring AI-driven traffic? Shifting user behavior increases the necessity of measuring AI-driven traffic because 80% of consumers use AI summaries at least 40% of the time. This zero-click behavior reduces reliance on traditional click-through metrics and increases dependence on AI-synthesized responses. Measuring Traffic from AI Search allows brands to quantify influence within environments where users no longer rely on organic listings or review platforms.

Why is Traffic from AI Search critical for managing organic traffic loss? Traffic from AI Search is critical because brands face an estimated 15 to 25% decline in organic web traffic due to AI-generated summaries satisfying search intent. Tracking AI referrals enables organizations to move from volume-based metrics toward quality-based performance indicators. Although AI referral sessions are lower in volume, AI-originated users often enter at late funnel stages and convert at higher rates.

How does strategic resource allocation depend on AI traffic measurement? Strategic resource allocation depends on AI traffic measurement because 32% of digital leaders identify Generative Engine Optimization (GEO) as the primary growth challenge by 2026. Organizations allocate an average of 12% of 2025 marketing budgets to GEO initiatives, and high-maturity companies invest 2x more than peers. Measuring Traffic from AI Search enables benchmarking of AI search market share and informs investment prioritization across conversational platforms.

How does measuring AI traffic support operational necessity and narrative control? Measuring AI traffic supports operational necessity and narrative control because 97% of digital leaders report positive impact from GEO initiatives. Furthermore, 93% of leaders are internalizing GEO capabilities to protect narrative accuracy and sentiment framing. API-based tracking replaces legacy scraping approaches to capture structured visibility data from closed LLM environments, which ensures defensible measurement standards.

Why is the correlation between AI traffic and external mentions strategically significant? The correlation between AI traffic and external mentions is significant because AI inclusion aligns strongly with editorial citations, hyperlinked references, and branded search growth. Measuring Traffic from AI Search validates the impact of earned media and third-party authority signals that feed AI training and retrieval systems. Monitoring traffic changes alongside Citation Frequency identifies whether increased conversational authority translates into measurable brand engagement.

How does evolving a paid search strategy require AI traffic tracking? Evolving a paid search strategy requires AI traffic tracking because AI Overviews displace traditional advertisements while search ad spend grows 10% to $253.2 billion. Google captures 86% of ad investment despite its market share dropping below 90% in late 2024. Measuring Traffic from AI Search reveals which high-value queries trigger AI summaries, enabling marketers to adjust bidding strategies before AI-generated content reduces paid ad exposure.

AI Visibility Index

The AI Visibility Index is important for Brand AI Visibility because it consolidates inclusion rate, citation share, sentiment, and authority signals into a single composite benchmark that reflects competitive presence across AI-generated answers. The AI Visibility Index functions as a 0 to 100 scaled metric that standardizes cross-platform performance tracking. The AI Visibility Index enables brands to detect authority shifts in conversational environments where traditional ranking signals no longer provide sufficient insight.
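One way to picture a 0 to 100 composite is a weighted average of normalized component scores. The sketch below is an assumption-laden illustration: the text does not specify how components are weighted, so the weights, component keys, and function name are hypothetical.

```python
# Hypothetical weights; the weighting scheme is an assumption, not a standard.
DEFAULT_WEIGHTS = {
    "inclusion_rate": 40,
    "citation_share": 30,
    "sentiment": 20,
    "authority": 10,
}


def ai_visibility_index(components, weights=DEFAULT_WEIGHTS):
    """Combine component scores (each 0.0 to 1.0) into a 0 to 100 index."""
    for name in weights:
        value = components[name]
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    total_weight = sum(weights.values())
    weighted = sum(weights[name] * components[name] for name in weights)
    return round(100 * weighted / total_weight, 1)
```

Keeping the weights in one dictionary makes the weighting explicit and easy to audit when index values are compared across reporting periods.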

How does traffic migration to AI platforms increase the necessity of an AI Visibility Index? Traffic migration increases the necessity of an AI Visibility Index because 25% of organic search traffic is projected to move to AI chatbots by 2026. AI platforms process 4.5 billion monthly visits, which creates a parallel discovery ecosystem outside traditional analytics frameworks. Without an AI Visibility Index, brands cannot quantify presence during early-stage B2B research where AI responses define “leading manufacturers” and category authorities.

Why does the dominance of zero-click behavior make the AI Visibility Index essential? The dominance of zero-click behavior makes the AI Visibility Index essential because 60% of Google searches conclude without a click. Consumers increasingly rely on AI-generated summaries rather than website visits, which reduces the reliability of click-through rate (CTR) as a performance metric. The AI Visibility Index provides modeled estimates of inclusion and authority in environments where LLM providers do not disclose private interaction logs.

How does the 527% surge in AI-referred sessions justify composite visibility scoring? The 527% surge in AI-referred sessions between January and May 2025 justifies composite visibility scoring because rapid platform growth amplifies competitive displacement risk. Platforms now process 500 million monthly searches, which accelerates authority consolidation. Early optimization measured through an AI Visibility Index produces compounding citation gains, which create long-term entrenchment effects.

How does citation compression change SEO measurement requirements? Citation compression changes SEO measurement requirements because AI engines reduce 10 traditional results to 2 to 7 cited domains per answer. Traditional rankings can remain stable while AI inclusion declines. The AI Visibility Index tracks signal divergence by measuring extractability, citation share, and entity frequency rather than relying on backlink volume or domain authority alone.
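Citation share under compression can be computed directly from the cited domains collected per answer. The following is a minimal sketch; the function name and the input shape (one list of cited domains per AI answer) are assumptions for illustration.

```python
from collections import Counter


def citation_share(answers, brand_domain):
    """Fraction of all citations across answers that point to brand_domain.

    answers: list of lists, each holding the domains cited in one answer.
    """
    counts = Counter(domain for cited in answers for domain in cited)
    total = sum(counts.values())
    return counts[brand_domain] / total if total else 0.0
```

Because each answer cites only a handful of domains, one gained or lost citation shifts this ratio far more than one position change shifts a ten-result ranking.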

Why are structured data and content validation integral to the AI Visibility Index? Structured data and content validation are integral to the AI Visibility Index because 81% of AI-cited pages implement schema markup, and answer-first formatting increases visibility by 30 to 40%. The AI Visibility Index validates whether structured data reaches AI crawlers, whether content architecture supports extraction, and whether crawlability barriers (JavaScript limitations) prevent inclusion. Monitoring these components prevents technical displacement.
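Whether structured data is present in the HTML a crawler receives can be checked by parsing the raw response for JSON-LD blocks without executing JavaScript. The sketch below uses only the Python standard library; the class and function names are illustrative assumptions, and it reports only top-level `@type` values.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects @type values from JSON-LD script blocks in raw HTML."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.types = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                doc = json.loads("".join(self._buffer))
            except json.JSONDecodeError:
                return  # Malformed markup is skipped, not reported.
            items = doc if isinstance(doc, list) else [doc]
            for item in items:
                if isinstance(item, dict) and "@type" in item:
                    self.types.append(item["@type"])


def schema_types(html):
    """Return the schema types found in the raw (unrendered) HTML."""
    parser = JsonLdExtractor()
    parser.feed(html)
    return parser.types
```

An empty result for a page that shows schema markup in the browser suggests the markup is injected client-side and may not reach crawlers that do not render JavaScript.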

What role does the AI Visibility Index play in reputation and sentiment governance? The AI Visibility Index plays a governance role by identifying attribution errors, sentiment shifts, and competitive Share of Voice changes before revenue impact occurs. Factual inaccuracies in AI summaries damage brand credibility more than omission. The AI Visibility Index functions as a directional control metric for Answer Engine Optimization (AEO), allowing brands to monitor whether they are positioned as market leaders or secondary alternatives across defined prompt clusters.

What are the Core Components of Brand AI Visibility?

The core components of Brand AI Visibility are LLM parsability and structure, semantic density and relevance, trust and authority (E-E-A-T), entity consistency, recency, and brand mentions and citations. These components define how Large Language Models (LLMs) extract, interpret, rank, and reference brand information within AI-generated answers. The core components of Brand AI Visibility are listed below.

LLM Parsability & Structure

LLM parsability and structure form a core component of AI Visibility because Large Language Models prioritize extractable, structured, and machine-readable content over high-volume brand mentions. LLM parsability and structure determine whether AI systems reliably interpret, index, and synthesize brand content during real-time response generation. Fewer than 25% of highly mentioned brands achieve strong citation rates without factual structuring, which confirms that structure outweighs mention frequency.

How does the discrepancy between mentions and citations prove the importance of structure? The discrepancy between mentions and citations proves that unstructured popularity does not translate into AI authority. Large Language Models cite structured repositories of verifiable facts rather than general brand discussions. Research shows that less than 25% of brands with high mention frequency are actually cited by AI engines because models prefer content that functions as a semantic map of entities and relationships.

Why is the Zapier case study significant for understanding LLM parsability and structure? The Zapier case study is significant because Zapier ranks #1 in AI citations for digital technology despite ranking #44 in overall brand mentions. Zapier achieves this performance through structured technical documentation and integration guides formatted with Semantic HTML5 and clear H1 to H6 hierarchies. These structural anchors allow LLMs to identify “safe-to-lift” factual statements with minimal processing friction, which increases citation probability.

Why are Server-Side Rendering (SSR) and crawler accessibility mandatory for AI inclusion? Server-Side Rendering (SSR) and crawler accessibility are mandatory because AI bots cannot reliably interpret client-side JavaScript during real-time indexing. Crawlers (GPTBot, CCBot, and Claude-Web) require access to fully rendered HTML content. Content must be explicitly allowed in robots.txt and organized with structured schema types (FAQ, HowTo, and Product) to ensure indexability. Without SSR and structured markup, even authoritative content remains invisible to AI systems.
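Whether robots.txt permits the crawlers named above can be tested offline with the standard library's `urllib.robotparser`. The sketch below is illustrative: the function name is an assumption, the user-agent tokens are taken from the text, and current token names should be confirmed against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens as named in the text; confirm current names with each vendor.
AI_CRAWLERS = ["GPTBot", "CCBot", "Claude-Web"]


def crawler_access(robots_txt, url, agents=AI_CRAWLERS):
    """Map each crawler token to True/False for access to url.

    robots_txt: the robots.txt file content as a string.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}
```

Running the check against the live robots.txt content after each deployment catches accidental blanket disallow rules before they affect inclusion.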

What component-specific actions improve LLM parsability and structure? LLM parsability and structure improve through Server-Side Rendering, structured schema markup, semantic HTML hierarchy, and answer-first formatting. First, implement Server-Side Rendering to expose full HTML content to AI crawlers. Second, embed structured schema markup (Organization, Product) with unique entity identifiers to create machine-readable entity clarity. Third, structure content with a clear H1 to H6 hierarchy to define topic relationships and section priority. Fourth, apply answer-first formatting by placing factual summaries at the top of each section. Finally, confirm that robots.txt permits AI crawlers to access content. These architectural controls convert brand content into machine-readable assets that strengthen sustained AI Visibility.
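The schema markup step can be illustrated with a small generator for an Organization JSON-LD block that is rendered server-side into the page head. This is a sketch under assumptions: the function name and the `#organization` identifier convention are hypothetical, while `@id`, `name`, `url`, and `sameAs` are standard schema.org and JSON-LD terms.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a JSON-LD script tag describing an Organization entity.

    same_as: profile URLs that identify the same entity elsewhere.
    The "#organization" fragment is an illustrative @id convention.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "@id": url.rstrip("/") + "/#organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }
    # Escape "</" so a value cannot close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + body + "</script>"
```

Reusing the same `@id` on every page gives the brand a single stable identifier that other schema blocks (Product, Article) can reference.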

Semantic Density & Relevance

Semantic density and relevance form a core component of AI Visibility because Large Language Models prioritize intent alignment, contextual depth, and entity relationships over isolated keyword presence. Semantic density and relevance ensure that content forms a cohesive semantic layer that AI systems interpret as authoritative. Semantic density and relevance strengthen extraction probability, reduce hallucination risk, and increase model confidence during answer synthesis.