LLM traffic refers to website visits, brand exposure, and conversion influence generated by Large Language Models such as ChatGPT, Gemini, Claude, Perplexity, and Copilot when they surface, cite, or recommend content inside AI-generated responses. LLM traffic represents a shift away from optimizing purely for search engine rankings toward AI-mediated discovery, where models synthesize answers and shape decisions before a click occurs. This traffic is typically lower in volume than organic search but higher in intent, because users arrive after the AI has already summarized options, filtered relevance, and established trust.

LLM traffic differs fundamentally from traditional SEO traffic. Organic search traffic is retrieval-based and driven by rankings and clicks, while AI traffic is synthesis-based and often zero-click. This creates a separation between AI visibility and LLM traffic. AI visibility measures brand mentions, citations, and trust signals inside AI answers, even without a visit. LLM traffic measures the attributable sessions, engagement, and conversions that occur when users click through. Visibility can grow without traffic and still increase branded search, direct visits, and conversion readiness over time.

Tracking LLM traffic requires methods beyond standard analytics. Core approaches include Google Analytics segmentation to isolate AI referrers, UTM parameters to recover attribution lost through copy-paste behavior, and specialized LLM SEO tracker tools that monitor brand mentions and citations directly inside AI outputs. Measurement focuses on sessions, engagement, and conversion efficiency rather than raw volume. Optimizing for AI visibility and traffic requires technical accessibility, structured and semantic content, topical authority, and strong E-E-A-T signals, because success now depends less on ranking higher and more on being the source an AI chooses to explain, recommend, and trust.

What Is LLM Traffic?

LLM traffic refers to website visitors who arrive via links, citations, or brand references generated inside responses from Large Language Models (LLMs) and AI chatbots such as ChatGPT, Perplexity, Gemini, Claude, and Microsoft Copilot.

LLM traffic is a discovery and referral channel created by AI-generated answers that synthesize information, cite sources, and surface links directly within conversational interfaces, shifting visibility away from traditional search engine results pages (SERPs) toward answer-first environments.

What defines the core nature of LLM traffic? LLM traffic is characterized by buyer journey compression, as AI systems bundle research steps, such as feature comparison, reviews, pricing context, and recommendations, into a single response. Buyer journey compression matters because users arrive after the evaluation phase, which increases intent density compared to exploratory organic search traffic.

How is LLM traffic categorized by interaction type? LLM traffic is categorized into 3 components based on how exposure and attribution occur.

- Attributable clicks refer to sessions where users click a visible link or citation inside an AI response and arrive with a trackable referrer.

- Assisted influence refers to indirect conversions where initial brand exposure occurs inside an LLM answer, but the final visit happens later through organic search, direct navigation, or another channel.

- Zero-click exposure refers to brand mentions inside AI-generated answers where no website visit occurs, but brand perception and recall are influenced.

What is the current volume and market composition of LLM traffic? LLM traffic represents a small but rapidly growing share of total web traffic. Average LLM traffic accounts for approximately 0.24% of total sessions, compared to 31.9% for organic search, which shows scale disparity. Despite low volume, growth rates exceed 300% year over year, with some datasets reporting a 527% increase in the first half of 2025. ChatGPT drives approximately 82% of identifiable LLM sessions, followed by Perplexity at 12.1% and Gemini at 4.9%. Nearly 90% of websites receive less than 0.6% of total traffic from LLM referrals, which highlights concentration risk.

What intent and funnel behavior define LLM traffic users? LLM traffic users typically exhibit middle-to-bottom funnel behavior. Users arrive after the AI system has pre-qualified requirements, such as confirming integrations, use cases, or constraints. Data shows that 86% of hand-raisers from LLM sources are classified as high-intent leads. LLM users often land mid-conversation on deep pages such as pricing tables, integration guides, or comparison sections rather than homepage-level entry points. Research patterns show that 44% of users follow a hybrid journey using both AI and traditional search, while only 2% rely exclusively on AI.

How is LLM traffic identified and attributed technically? LLM traffic is frequently misattributed as Direct or generic Referral traffic because many AI platforms strip or inconsistently pass referrer data. Analysts identify LLM traffic using regex-based filters in Google Analytics 4 (GA4) for sources such as chat.openai.com, perplexity.ai, and gemini.google.com. LLMs commonly link to passage-level content such as pricing tables or FAQ blocks rather than top-level URLs. Emerging standards such as llms.txt and ai.txt are used to guide AI crawlers toward preferred content and context.
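The hostname matching described above can be sketched outside GA4 as well. The following is a minimal Python sketch, assuming you have raw referrer URLs (for example from server logs). The three hostnames come from the text; the `LLM_HOST_PATTERNS` mapping and the `classify_referrer` helper are hypothetical names used only for illustration, and the list is not exhaustive.

```python
import re
from urllib.parse import urlparse

# Hypothetical mapping of referrer hostnames to platform labels.
# The hostnames are the ones named in the text; extend as platforms change domains.
LLM_HOST_PATTERNS = {
    "ChatGPT": re.compile(r"(^|\.)chat\.openai\.com$"),
    "Perplexity": re.compile(r"(^|\.)perplexity\.ai$"),
    "Gemini": re.compile(r"(^|\.)gemini\.google\.com$"),
}


def classify_referrer(referrer):
    """Return the LLM platform label for a referrer URL, or None if no pattern matches."""
    if not referrer:
        # A stripped referrer cannot be classified; analytics tools usually report it as Direct.
        return None
    host = (urlparse(referrer).hostname or "").lower()
    for platform, pattern in LLM_HOST_PATTERNS.items():
        if pattern.search(host):
            return platform
    return None


print(classify_referrer("https://www.perplexity.ai/search?q=crm+pricing"))
print(classify_referrer("https://www.google.com/"))
```

Anchoring each pattern at the end of the hostname, and requiring a dot or the start of the string before it, avoids matching lookalike domains that merely contain the platform name.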

Why Track LLM Traffic?

Tracking LLM traffic is necessary to measure AI-mediated discoverability, intent quality, and brand visibility that traditional analytics and SEO tools do not capture. LLM traffic tracking matters because discovery increasingly begins inside AI systems that generate answers rather than display ranked links, which creates visibility without guaranteed clicks.

What market signals justify tracking LLM traffic now? LLM traffic growth signals justify measurement investment. Traffic from ChatGPT and Perplexity increased by over 300% year over year. ChatGPT receives approximately 5.72 billion monthly visits and exceeds 400 million weekly active users. Approximately 25% of Google searches now trigger AI Overviews, and some case studies show 25× session growth from LLM referrals within 3 months. These signals confirm that user discovery behavior increasingly bypasses traditional search engines.

Why does LLM traffic quality require separate analysis? LLM traffic quality differs from organic traffic because users ask focused, highly specific questions. Traffic typically enters at mid-funnel or bottom-of-funnel stages, which increases lead value despite lower volume. Engagement metrics frequently exceed organic benchmarks, which indicates higher relevance and preparedness.

How does tracking LLM traffic improve data integrity? LLM traffic is often misclassified, which leads to underreported reach and undervaluation of high-performing content. Isolating AI traffic prevents skewed conversion rates caused by automated fetches or misattributed sessions. GA4 lacks a native AI channel, which requires manual segmentation to avoid burying AI-driven performance inside Direct or Referral buckets.

What strategic visibility insights does LLM traffic tracking provide? Tracking reveals how a brand appears inside AI-generated answers, not just whether it ranks. Monitoring citations identifies which competitors are surfaced instead of the brand. Experiments show that 40–50% of the same domains appear across Google AI Overviews, ChatGPT, and Perplexity, which exposes authority clustering. Tracking identifies data vacuums where insufficient content forces AI systems to cite third-party sources.

How does LLM traffic tracking support content optimization? Tracking identifies which URLs AI systems cite, which enables teams to improve pages with high visibility but low action. LLMs favor unique statistics, expert quotes, and structured data, which makes tracking essential for validating information gain. Content freshness emerges as a critical factor because LLMs use live search data and frequently surface legacy content that no longer ranks organically.

What behavioral insights are unique to LLM traffic? LLM interactions produce distinctive patterns, such as extremely fast navigation between deep pages. AI agents often execute limited JavaScript, which fragments analytics trails. Sudden spikes in direct traffic to deep informational URLs frequently indicate hidden LLM referrals.

Why is LLM traffic tracking debated? Conflicting perspectives exist. Most datasets report rapid growth, while some analysts argue LLM traffic is unstable and unreliable. Disagreement exists on citation mechanics, crawler capabilities, and attribution accuracy. Despite these conflicts, tracking remains the only way to measure AI visibility and prevent blind spots in modern discoverability.

What Are the Differences Between AI Traffic vs. Organic Search?

AI traffic and organic search traffic differ fundamentally in click behavior, intent structure, visibility mechanics, and performance measurement. AI traffic originates from answer-first systems that synthesize information, while organic search traffic originates from ranked result lists that require user navigation.

How do click-through rates differ between AI traffic and organic search? AI Overviews suppress traditional organic CTR. Position 1 links inside AI Overviews receive less than half the clicks of Position 2. Position 2 achieves approximately 5.76% CTR versus 2.51% for Position 1. Overall, organic CTR declines by 30–50% when AI summaries appear. Users click organic results only 8% of the time with AI Overviews present, compared to 15% without them. B2B software companies report 25–35% organic traffic declines after AI Overview rollout.
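The figures above can be checked against each other with simple arithmetic. This short Python sketch uses only the percentages quoted in the paragraph; the `relative_decline` helper is a hypothetical name.

```python
def relative_decline(before, after):
    """Relative drop from `before` to `after`, expressed as a fraction of `before`."""
    return (before - after) / before


# Organic click rates quoted above: 15% without AI Overviews, 8% with them.
drop = relative_decline(0.15, 0.08)
print(f"Relative CTR decline: {drop:.1%}")  # 46.7%

# Position CTRs quoted above: 2.51% for Position 1, 5.76% for Position 2.
ratio = 2.51 / 5.76
print(f"Position 1 clicks as a share of Position 2: {ratio:.2f}")  # 0.44
```

The drop from 15% to 8% works out to roughly 47% in relative terms, which sits inside the quoted 30–50% range, and a ratio of about 0.44 matches the "less than half" claim.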

How does user intent differ across AI traffic and organic search? Organic search functions primarily as a conversion channel where users demonstrate direct purchase intent. AI traffic functions as a research and discovery channel that dominates top and middle funnel stages. Direct conversions from AI search remain near zero, while organic search continues to drive final transactions. Users typically begin research in AI systems and complete purchases through organic or direct channels.

How do search mechanics and SERP layout change behavior? AI Overviews occupy the top of the SERP and push organic results below the fold, disrupting traditional F-pattern scanning. Generative engines apply query fan-out, producing 20–50 impressions per query compared to 1–5 in traditional search. As of 2025, 13% of Google queries trigger AI Overviews, and 47% of global searches include AI-enhanced elements. AI-driven rankings fluctuate up to 30% weekly, while organic rankings depend on long-term authority.

What role does zero-click behavior play? Zero-click searches increased from 24.4% in 2024 to 27.2% in 2025, with projections up to 69% by year-end. For every 1,000 U.S. Google searches, only 374 clicks reach the open web. AI platforms still account for less than 1% of referral traffic, but they increasingly control attention without clicks.

How do content and optimization strategies differ? Organic search optimization prioritizes technical SEO, keywords, and backlinks. AI traffic optimization prioritizes E-E-A-T, structured data, extractable content formats, and proprietary information. Wikipedia accounts for more than 40% of LLM citations, while PR and media coverage contribute approximately 34%. Reddit and YouTube act as primary human-context sources for AI systems.

How do measurement models between LLM traffic and organic search diverge? Google Search Console does not segment AI Overviews, which hides AI-driven impressions and clicks. Referral masking complicates attribution, which forces reliance on alternative KPIs such as AI share of voice, brand mentions, and incrementality. Some brands report stable or rising impressions despite declining clicks, which confirms that visibility and traffic acquisition no longer move together.

Why do reported outcomes conflict? CTR reduction estimates range from 34.5% to 50%. Zero-click rates vary between 27.2% and 60% depending on methodology. These conflicts reflect the transition from ranking-based discovery to answer-based visibility, where exposure persists even as measurable traffic declines.

What Are the Key Methods to Track LLM Traffic?

The key methods to track LLM traffic are analytics segmentation, technical signal detection, third-party AI visibility tools, infrastructure-level monitoring, and behavioral experimentation. These methods exist because LLM traffic does not follow standard referrer, click, or attribution rules, which makes traditional SEO and analytics setups insufficient for measuring AI-driven discovery and influence.

1. Google Analytics (GA4) Reports

Google Analytics 4 (GA4) reports are a key method to track LLM traffic because GA4 provides the most reliable infrastructure to isolate, classify, and analyze AI-originated sessions that traditional SEO tools cannot measure. Google Analytics 4 matters because reported growth in AI-driven discovery ranges from more than 300% to 527% year over year, yet most of this traffic is misclassified unless it is explicitly filtered and reattributed.

How does GA4 filter traffic sources to identify LLM traffic? Google Analytics 4 filters traffic sources by using the Session source / medium and First user source / medium dimensions. These dimensions allow analysts to separate AI referrers from organic search and paid channels. This separation matters because AI platforms route users through distinct domains that behave differently from search engines.

Why is regex filtering required inside GA4? Regex filtering is required because LLM platforms use inconsistent and frequently changing referral domains. Google Analytics 4 supports regular expressions that capture broad AI identifiers such as GPT, ChatGPT, OpenAI, Gemini, Copilot, Claude, Perplexity, and Mistral. Regex filtering consolidates fragmented AI sessions that would otherwise be split across Direct, Referral, or Unassigned traffic.
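A pattern of this kind can be drafted and tested locally before it is pasted into a GA4 filter. The Python sketch below builds one alternation from the identifiers named above; `AI_KEYWORDS`, `AI_SOURCE_RE`, and `is_ai_source` are hypothetical names, and the sample source strings in the usage lines are illustrative, not real report data.

```python
import re

# Identifiers named in the text, lowercased; matching is case-insensitive.
AI_KEYWORDS = [
    "chatgpt", "openai", "gpt", "gemini",
    "copilot", "claude", "perplexity", "mistral",
]

# Escape each keyword and join them into a single alternation.
AI_SOURCE_RE = re.compile("|".join(re.escape(k) for k in AI_KEYWORDS), re.IGNORECASE)


def is_ai_source(source):
    """True if the source string contains any of the AI identifiers."""
    return bool(AI_SOURCE_RE.search(source or ""))


print(AI_SOURCE_RE.pattern)                    # the alternation to reuse in a filter
print(is_ai_source("chatgpt.com / referral"))  # True
print(is_ai_source("google / organic"))        # False
```

Broad tokens such as `gpt` trade precision for recall: they catch new or renamed domains but can also match unrelated sources, so the matched values are worth reviewing periodically. Whether a filter treats the pattern as a full or partial match depends on the match type selected in the tool.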

How do custom channels improve LLM traffic analysis? Custom Channel Groups improve LLM traffic analysis by grouping all AI sources into a single LLM or AI channel. This configuration enables direct performance comparison with Organic Search, Paid Search, and Social channels using consistent metrics. Custom channels are necessary because Google Analytics 4 does not provide a native AI traffic category.
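The grouping logic behind a custom channel can be sketched in Python over exported rows. This is an assumption-laden illustration, not GA4's implementation: the `AI_MARKERS` list, the channel labels, the medium values, and the sample rows in the usage lines are all hypothetical.

```python
from collections import defaultdict

# Hypothetical substrings that mark a source as an AI platform.
AI_MARKERS = ("chatgpt", "openai", "perplexity", "gemini", "copilot", "claude")


def channel_for(source, medium):
    """Assign one channel per row; the AI rule runs first so it wins over generic rules."""
    source, medium = source.lower(), medium.lower()
    if any(marker in source for marker in AI_MARKERS):
        return "AI / LLM"
    if medium == "organic":
        return "Organic Search"
    if medium in ("cpc", "ppc", "paid"):
        return "Paid Search"
    return "Other"


def summarize(rows):
    """Aggregate (source, medium, sessions, conversions) rows into per-channel totals."""
    totals = defaultdict(lambda: {"sessions": 0, "conversions": 0})
    for source, medium, sessions, conversions in rows:
        bucket = totals[channel_for(source, medium)]
        bucket["sessions"] += sessions
        bucket["conversions"] += conversions
    return {
        channel: {
            **t,
            "conversion_rate": t["conversions"] / t["sessions"] if t["sessions"] else 0.0,
        }
        for channel, t in totals.items()
    }


# Illustrative rows only; not real data.
rows = [
    ("chatgpt.com", "referral", 40, 4),
    ("perplexity.ai", "referral", 10, 1),
    ("google", "organic", 1000, 20),
]
print(summarize(rows))
```

Placing the AI rule ahead of the generic rules matters because an AI referrer would otherwise fall into a broader bucket such as Referral or Other.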

Why are Looker Studio and GA4 Library reports used? Looker Studio dashboards and GA4 Library reports provide persistent access to LLM traffic insights beyond the sixty-day limitation of Explorations. These reports allow stakeholders to monitor AI-driven sessions, engagement, and conversions over time. Google Analytics 4 functions as the central repository for UTM parameters, which enables cross-validation of AI attribution.

What strategic insights does GA4 provide for LLM traffic? Google Analytics 4 shows that AI-referred users stay approximately 2.3 times longer than traditional search visitors and are 40% more likely to engage with downloadable resources. GA4 identifies which landing pages receive AI traffic, which validates which content structures are extractable and citation-ready for Generative Engine Optimization. Consistent LLM traffic in GA4 reports acts as a proxy signal of AI-recognized brand authority.

How does GA4 address misclassification and dark AI traffic? Google Analytics 4 supports reclassification of dark AI traffic by analyzing landing page depth, source patterns, and parameter signals that indicate referrer stripping. Although GA4 cannot measure zero-click exposure or AI Overview impressions, it remains the only scalable method to recover attributable AI sessions.
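One way to approximate this reclassification is a simple heuristic over exported session rows. The Python sketch below is an assumption, not a GA4 feature: the session fields (`channel`, `landing_page`, `is_new_user`), the `min_depth` cutoff, and the idea of restricting the flag to new users are all illustrative choices, and a flagged session is only a candidate, not confirmed AI traffic.

```python
from urllib.parse import urlparse


def path_depth(url):
    """Number of non-empty path segments, e.g. /pricing/enterprise/ has depth 2."""
    return len([segment for segment in urlparse(url).path.split("/") if segment])


def is_dark_ai_candidate(session, min_depth=2):
    """Flag Direct sessions from new users that land on deep pages.

    Heuristic only: bookmarks, emails, and app links produce the same signature.
    """
    return (
        session["channel"] == "Direct"
        and session.get("is_new_user", False)
        and path_depth(session["landing_page"]) >= min_depth
    )


# Illustrative session; field names are hypothetical.
session = {
    "channel": "Direct",
    "landing_page": "https://example.com/integrations/salesforce/setup",
    "is_new_user": True,
}
print(is_dark_ai_candidate(session))
```

The reasoning is that first-time visitors rarely type a deep URL by hand, so a rising count of such sessions is worth comparing against known AI referral trends.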

2. Use UTM Parameters

UTM parameters are a key method to track LLM traffic because they provide persistent and self-identifying attribution when referrer data is stripped or never passed by AI platforms. UTM parameters matter because baseline GA4 accuracy for LLM traffic is only 60% to 70% without them, which creates a 30% to 40% attribution gap.

How do UTM parameters mitigate dark LLM traffic? UTM parameters prevent misclassification by explicitly labeling traffic with source identifiers such as chatgpt or perplexity. This labeling ensures AI-driven visits are not bucketed as Direct, Unassigned, or (not set). UTMs persist even when users copy and paste URLs, which occurs in approximately 77.97% of ChatGPT interactions.

Why are UTMs more reliable than referrer headers? UTM parameters persist across secure and non-secure transitions, mobile applications, and privacy-restricted browsers. In Google Analytics, UTMs override referrer data, which gives analysts deterministic attribution control. This persistence makes UTMs the most stable signal when AI platforms enforce strict origin policies.
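The precedence described above, where a UTM value wins over the referrer, can be sketched in Python. This is a simplified model for illustration, not the attribution logic of any analytics product; `resolve_source` and the `(direct)` fallback label are hypothetical.

```python
from urllib.parse import parse_qs, urlparse


def resolve_source(landing_url, referrer=None):
    """Pick a traffic source: utm_source first, then referrer hostname, else direct."""
    params = parse_qs(urlparse(landing_url).query)
    utm_source = params.get("utm_source", [""])[0].strip().lower()
    if utm_source:
        return utm_source  # survives copy-paste because it travels inside the URL
    if referrer:
        host = urlparse(referrer).hostname
        if host:
            return host.lower()
    return "(direct)"


# The UTM value wins even though a different referrer is present.
print(resolve_source("https://example.com/pricing?utm_source=chatgpt", "https://www.google.com/"))
# No UTM and no referrer: indistinguishable from direct traffic.
print(resolve_source("https://example.com/pricing"))
```

Because the parameter is part of the URL itself, it remains available in cases where the browser or app sends no referrer header at all.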

How do UTMs identify specific LLM platforms? UTM parameters expose platform-specific signatures such as utm_source=chatgpt or utm_source=perplexity. When present, these parameters allow analysts to distinguish citation-driven clicks from conversational traffic.

How do UTMs support reporting and audience segmentation? UTM data enables the creation of dedicated LLM channels, AI-specific audiences, and multi-session attribution paths inside Google Analytics and Customer Journey Analytics. This segmentation supports analysis of high-intent AI users whose engagement levels are 68% higher on average and whose conversion rates can exceed organic traffic by more than four times.

What limitations apply to UTM tracking? UTMs are not consistently applied to inline AI links and are sometimes removed in sensitive topic contexts such as healthcare or finance. Google AI Overviews do not pass UTM parameters by default. Despite these constraints, UTMs remain the most effective method to close attribution gaps created by referrer stripping.
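For links a team controls and chooses to tag itself, a small helper keeps the tagging consistent. The Python sketch below is illustrative: `add_utm` is a hypothetical function, and the default `referral` medium and the example URL are assumptions rather than a convention stated in the text.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def add_utm(url, source, medium="referral", campaign=None):
    """Return `url` with UTM parameters set, replacing any existing utm_* values."""
    parts = urlparse(url)
    # Keep non-UTM parameters in their original order; drop stale utm_* values.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_")
    ]
    query.append(("utm_source", source))
    query.append(("utm_medium", medium))
    if campaign:
        query.append(("utm_campaign", campaign))
    return urlunparse(parts._replace(query=urlencode(query)))


print(add_utm("https://example.com/pricing?plan=pro#faq", "chatgpt"))
```

Existing query parameters and the fragment are preserved, so deep links to pricing tables or FAQ anchors keep working after tagging.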

3. Use Specialized LLM Tracking Features

Specialized LLM tracking features are a key method to track LLM traffic because they measure AI visibility, citations, and brand influence that never generate website sessions. These features matter because modern discovery increasingly occurs inside AI-generated answers, where users consume information without clicking.

Why do traditional analytics fail to capture LLM visibility? Traditional analytics platforms lack a dedicated AI referral classification and rely on referrer-based sessions that LLMs frequently suppress. Google Analytics captures only 60% to 70% of attributable AI traffic and cannot observe zero-click exposure, AI Overview impressions, or conversational brand mentions.

What metrics do specialized LLM tracking features measure? Specialized tracking features measure AI Share of Voice, citation frequency, brand mention rate, sentiment, and competitive displacement across AI-generated responses. These metrics reflect selection and trust rather than click behavior.
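Two of these metrics can be sketched over a set of captured AI responses. The Python example below uses one plausible definition of each (vendors define them differently): share of voice as a brand's portion of all tracked brand mentions, and mention rate as the fraction of responses naming the brand. The brand names and response texts are invented for illustration.

```python
import re
from collections import Counter


def mention_counts(responses, brands):
    """Count, per brand, how many responses mention it at least once (whole-word match)."""
    counts = Counter({brand: 0 for brand in brands})
    for text in responses:
        for brand in brands:
            if re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE):
                counts[brand] += 1
    return counts


def share_of_voice(responses, brands):
    """Each brand's share of all brand mentions across the captured responses."""
    counts = mention_counts(responses, brands)
    total = sum(counts.values())
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


def mention_rate(responses, brand):
    """Fraction of responses that mention the brand."""
    if not responses:
        return 0.0
    return mention_counts(responses, [brand])[brand] / len(responses)


# Invented responses and brands, for illustration only.
responses = [
    "Acme and Globex are both popular choices.",
    "Many teams pick Acme for its integrations.",
    "There is no clear winner here.",
]
print(share_of_voice(responses, ["Acme", "Globex"]))
print(mention_rate(responses, "Acme"))
```

Plain string matching does not capture sentiment or position within the answer, which is where dedicated tools add value.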

How do specialized features analyze prompts and intent? Specialized tracking systems analyze natural language prompts that trigger brand mentions. This analysis distinguishes informational, comparative, and decision-stage intent. Prompt-level data shows that queries containing terms such as "best" produce brand mentions at significantly higher rates.

How do specialized features validate AI output and accessibility? Specialized tracking validates AI output using response capture, headless browser monitoring, and accessibility checks. These methods detect robots.txt blocks, CDN restrictions, and rendering failures that prevent AI citation eligibility.
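The robots-level part of such a check can be sketched with Python's standard library. The example below parses robots.txt content already held in memory and reports which crawler user agents are disallowed for a URL. The `AI_CRAWLERS` list is an illustrative, incomplete assumption, and `blocked_crawlers` is a hypothetical helper; CDN restrictions and rendering failures need separate checks.

```python
from urllib.robotparser import RobotFileParser

# Illustrative, incomplete list of AI crawler user-agent tokens.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the user agents that the given robots.txt content disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Example robots.txt content: one AI crawler blocked site-wide, /admin/ blocked for everyone.
robots_txt = """
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_crawlers(robots_txt, "https://example.com/pricing"))
```

Running the check per URL rather than per site helps, because a rule that looks harmless can still exclude the specific deep pages that AI systems tend to cite.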

What business outcomes justify specialized LLM tracking? Organizations using specialized AI visibility tracking report improved narrative control, earlier detection of misinformation, and measurable revenue impact. Enterprises implementing Answer Engine Optimization strategies report average revenue increases of approximately 11% within six months, even as click-through rates decline.

Why is specialized tracking now mandatory? Since impressions have increased while clicks declined by approximately 30%, visibility has replaced traffic as the primary KPI. Specialized LLM tracking is mandatory because it is the only method that measures exposure, authority, and competitive position inside AI-generated answers.

What Are LLM Visibility Tools?

LLM visibility tools are analytics and monitoring systems that track how brands, products, and services appear inside generative AI answers and conversational responses across Large Language Models (LLMs). LLM visibility tools matter because users increasingly consume answers inside AI interfaces, which creates zero-click exposure where brand influence happens before any website visit.

The four most important tools are listed below.

1. LLM Visibility by Search Atlas

LLM Visibility by Search Atlas is an enterprise-ready analytics platform that tracks brand performance, discovery, and sentiment inside generative AI environments across multiple Large Language Models. LLM Visibility by Search Atlas matters because it centralizes answer-level measurement for models such as ChatGPT, Gemini, Perplexity, Claude, SearchGPT, Microsoft Copilot, and Grok using daily live data via API calls.

What does LLM Visibility by Search Atlas measure for AI visibility performance? LLM Visibility by Search Atlas measures Share of Voice or Share of Model, a Visibility Score, ranking, and placement inside responses, sentiment analysis, citation frequency, and topic clustering. These measurements matter because they reveal whether a brand appears first, appears last, or is missing entirely from AI-generated answers.

How should teams use the dashboard modules for platform-specific tracking? Use the Summary Dashboard to review total citations, average sentiment, and visibility trend lines by model. Use the Visibility Dashboard to identify underperforming queries within date ranges. Use the Sentiment Dashboard to isolate sentiment by topic and source. Use the Topics and Queries Dashboard to map prompts that trigger mentions and to prioritize prompts with low visibility scores.

How does LLM Visibility by Search Atlas support execution beyond monitoring? LLM Visibility by Search Atlas integrates with automation systems, including OTTO Automation, Domain Knowledge Network, a universal CMS connector, and a chat-based interface inside Content Genius. This integration matters because visibility insights can trigger content optimizations and internal linking deployments when competitive gaps exceed defined thresholds.

What pricing and data constraints shape implementation decisions? LLM Visibility by Search Atlas is included in Search Atlas plans at $99 per month, $199 per month, and $399 per month. The platform provides approximately 2 to 3 years of historical data, and reported sources describe database size differences compared with larger enterprise systems.

2. AI Visibility Toolkit by Semrush

AI Visibility Toolkit by Semrush is a specialized add-on designed for Generative Engine Optimization that monitors brand presence, sentiment, and market share inside AI-driven search platforms and Large Language Models.

AI Visibility Toolkit by Semrush matters because it translates AI-generated perceptions into reporting and competitive insights for teams that need visibility measurement beyond blue link SEO.

What does the AI Visibility Toolkit by Semrush measure and report? AI Visibility Toolkit by Semrush measures an AI Visibility Score from 0 to 100, mentions versus citations, sentiment visualization, AI volume estimates, and Share of Voice. These outputs matter because they separate brand naming from source linking, which clarifies whether AI systems recommend a brand or cite owned content.

How should teams use prompt research and gap analysis in the toolkit? Use Prompt Research to analyze AI topic volume, topic difficulty, and intent mix across a prompt database described as 100 million to 130 million or more prompts, depending on the source. Use Gap Analysis to identify missing prompts where competitors appear, but the brand does not appear. This workflow matters because it reveals visibility gaps that keyword rank tracking does not detect.

How should teams apply the technical monitoring features for AI readiness? Use the AI Search Health audit to detect missing llms.txt files, robots rules that block AI crawlers, and pages that lack last-modified headers. Use Prompt Tracking to monitor daily visibility and average position inside supported AI interfaces. This approach matters because technical blockers prevent retrieval and citation even when content quality is high.

What cost and coverage constraints affect adoption? AI Visibility Toolkit by Semrush costs $99 per month per domain, with bundled plans starting at $199 per month. Sources report regional and language limits, and they conflict on which LLMs are supported and which are coming soon.

3. Profound

Profound refers to multiple entities, including a linguistic term, a medical technology product, and an enterprise AI visibility platform focused on brand citation and ranking inside AI-generated responses. Profound matters in LLM visibility discussions because the Profound enterprise AI platform positions itself as a monitoring system for AI visibility and answer presence.

What is the Profound enterprise AI platform in an AI visibility context? The Profound enterprise AI platform is an enterprise-level platform focused on AI visibility that aims to ensure brands are cited and ranked inside AI-generated answers and search engines. The platform matters because it supports enterprise requirements such as SOC 2 Type II compliance and Single Sign-On using SAML or OIDC.

How should teams interpret scope when the Profound name appears in research? Interpret Profound references by confirming whether the context describes the AI visibility platform, Profound RF medical technology, or the word’s meaning itself. This check matters because the Profound entity name is used across unrelated domains, which can create tracking and reporting ambiguity.

What operational constraints appear in the reported platform details? Reported platform details include automated daily backups with a one-week retention period and access through customized enterprise pricing with a review process. Sources report conflicting funding and scalability statements, which indicate variance across published descriptions.

4. Brand Radar by Ahrefs

Brand Radar by Ahrefs is an AI-driven monitoring system that tracks brand visibility across AI Overviews, chatbots, and Large Language Models while connecting visibility signals to broader web demand. Brand Radar by Ahrefs matters because it measures the discovery layer where brands are recommended and discussed across conversational AI and close attention platforms.

What does Brand Radar by Ahrefs track across AI and web surfaces? Brand Radar by Ahrefs tracks mentions and citations across multiple AI indexes, including ChatGPT, Perplexity, Google AI Overviews, Gemini, and Microsoft Copilot, and it scans visibility signals from platforms such as YouTube, TikTok, and Reddit. This coverage matters because AI answers often rely on multi-platform source ecosystems rather than web pages alone.

How does Brand Radar by Ahrefs calculate visibility metrics? Brand Radar by Ahrefs uses mentions, citations, impressions weighted by Google search volume, AI Share of Voice, search demand, and a web visibility metric that excludes AI Overview data. These metrics matter because they quantify exposure even when users do not click through to a site.

How should teams use filtering and narrative protection features? Use logic filters such as contains and does not contain to isolate branded gaps where a query contains the brand, but citations do not include owned content. Use the AI responses view to detect inaccurate or outdated messaging and negative sentiment. This method matters because AI outputs vary and can drift away from current positioning.
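The "contains" and "does not contain" logic can be illustrated over exported rows. This Python sketch does not use any Ahrefs API; the row shape (`query`, `citations`), the `branded_gaps` helper, and the sample data are hypothetical.

```python
def branded_gaps(rows, brand, owned_domain):
    """Rows where the query contains the brand but no citation points to the owned domain."""
    brand = brand.lower()
    return [
        row
        for row in rows
        if brand in row["query"].lower()  # "contains" filter on the query
        and not any(owned_domain in url for url in row["citations"])  # "does not contain" filter
    ]


# Invented rows for illustration.
rows = [
    {"query": "acme pricing", "citations": ["https://reviews.example.org/acme"]},
    {"query": "acme integrations", "citations": ["https://acme.example.com/integrations"]},
    {"query": "best crm tools", "citations": ["https://reviews.example.org/crm"]},
]
for row in branded_gaps(rows, "Acme", "acme.example.com"):
    print(row["query"])
```

Each returned row is a branded question that AI systems answer using someone else's page, which makes it a candidate for new or improved owned content.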

What data and availability constraints affect interpretation? Brand Radar by Ahrefs updates data monthly and is positioned for trend analysis rather than real-time monitoring. Sources report conflicting database size figures and describe sampling constraints during beta, and sources describe pricing as included in paid subscriptions with an intent to become a separate add-on later.

What Are the Key Metrics to Monitor LLM-Driven Traffic?

The key metrics to monitor LLM-driven traffic are sessions, engagement rate, engagement time, and conversion rate because these metrics reveal whether AI-mediated discovery produces measurable business impact beyond zero-click exposure. These metrics matter because LLM-driven traffic behaves differently from traditional organic search traffic, requiring evaluation based on intent density, depth of interaction, and downstream outcomes rather than rankings or impressions alone.

Why are Sessions a Key Metric for LLM Traffic?

Sessions are a key metric for LLM traffic because sessions represent the only reliable confirmation that AI visibility translated into an actual website visit. Sessions matter because 88% of AI citations originate from pages ranking outside the top 10 organic results, which makes traditional ranking reports insufficient for validating AI-driven discoverability.

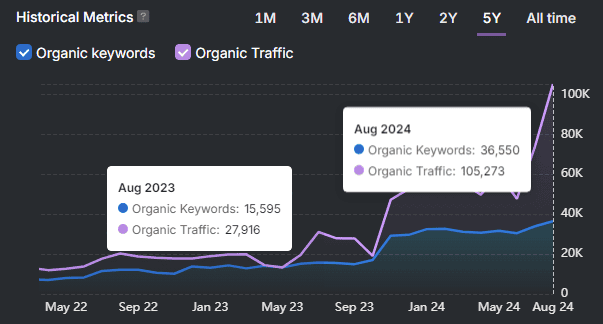

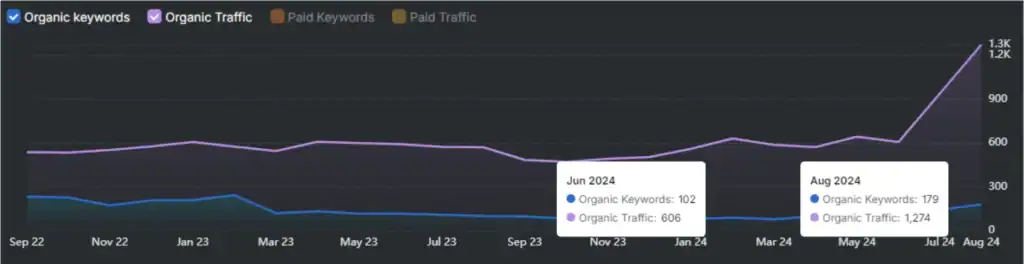

How do sessions support growth benchmarking for LLM traffic? Session tracking enables direct comparison between LLM-driven sessions and Google organic sessions. AI-driven traffic increased by 527% in the first half of 2025, and ChatGPT reached nearly 700 million weekly active users by August 2025. Tracking sessions allows teams to determine whether AI platforms are beginning to rival traditional search engines, which are projected to be overtaken by AI search by 2028.

Why do sessions indicate intent and funnel position? LLM-driven sessions typically represent high-intent, mid-funnel behavior. Users arrive after the LLM has already synthesized definitions, comparisons, and reviews. Average session duration for LLM traffic reaches 7 minutes and 35 seconds compared to 4 minutes and 41 seconds for organic search, which is consistent with buyer journey compression. One documented case showed a 2,000% increase in qualified leads driven by ChatGPT citations.

How should sessions guide prioritization and resource allocation? Session share functions as a prioritization threshold. Teams should prioritize LLM traffic when it reaches 2% or more of total organic sessions, monitor it when it falls between 0.5% and 2%, and deprioritize it when it is below 0.5%. If session volume grows without conversion growth, optimization should focus on calls to action or form friction rather than additional content production.
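The thresholds above translate into a small decision rule. In this Python sketch the lower cutoff is assumed to be 0.5%, matching the bottom of the monitoring band; `llm_priority` is a hypothetical helper and the session counts in the usage lines are invented.

```python
def llm_priority(llm_sessions, organic_sessions):
    """Map LLM sessions as a share of organic sessions to a priority label."""
    if organic_sessions <= 0:
        raise ValueError("organic_sessions must be positive")
    share = llm_sessions / organic_sessions
    if share >= 0.02:      # 2% or more of organic sessions
        return "prioritize"
    if share >= 0.005:     # assumed lower bound of the monitoring band (0.5%)
        return "monitor"
    return "deprioritize"


print(llm_priority(250, 10_000))  # share of 2.5%
print(llm_priority(100, 10_000))  # share of 1.0%
print(llm_priority(20, 10_000))   # share of 0.2%
```

Evaluating the share over a consistent window, such as a calendar month, keeps short-lived spikes from flipping the label back and forth.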

How do sessions support attribution and content validation? Sessions are the functional unit used in GA4 to identify AI origins through source and regex analysis. Session-level analysis reveals distinctive behavioral patterns such as extremely fast navigation, which helps separate human users from automated fetches. Session data identifies which landing pages LLMs surface, enabling targeted optimization of high-visibility content.

What limitations affect session-based measurement? Some analysts argue that sessions undervalue AI influence in zero-click environments where brand authority forms without visits. Despite this limitation, sessions remain the only measurable proof that AI visibility produced direct site interaction.

Why are Engagement Rate and Engagement Time the Key Metrics for LLM Traffic?

Engagement rate and engagement time are key metrics for LLM traffic because they validate traffic quality and confirm whether AI-referred users found the information they expected. These metrics matter because referrer stripping frequently hides AI sessions inside Direct traffic, which requires behavioral validation rather than source labels alone.

How do engagement metrics validate user intent? LLM-driven traffic shows an average bounce rate of 27% compared to 42% for Direct traffic. Higher engagement confirms that AI users arrive pre-qualified after completing research inside the LLM interface. Engagement functions as a quality filter that distinguishes human sessions from non-human fetches.

How does engagement measure task complexity and depth? Engagement metrics reveal differences across AI platforms. ChatGPT accounts for over 80% of LLM sessions but shows lower average engagement, which indicates broad discovery behavior. Claude users demonstrate deeper engagement with average session durations exceeding 6 minutes. Microsoft Copilot maintains strong engagement due to integration with productivity workflows, while Perplexity shows high traffic efficiency relative to its market share.

Why are engagement metrics critical when attribution fails? LLM-driven clicks are frequently undercounted because referrer data is stripped by mobile apps and privacy controls. Engagement metrics confirm the value of hidden AI referrals by showing time spent, scroll depth, and progression to high-intent pages such as pricing or demos. Average engagement time is a more reliable indicator than time on page because it filters out tab switching and inactivity.

How does engagement diagnose content and experience alignment? Low engagement or high bounce rates indicate a mismatch between the AI summary and the landing page content. If engagement is significantly lower than that of other cohorts, the AI response may have already satisfied the user intent. Monitoring engagement trends helps teams detect misalignment and refine content clarity.

What strategic thresholds define success for engagement metrics? A downstream engagement lift of 10 to 20% over 28 days is commonly used as the benchmark for validating LLM channel prioritization. Engagement growth functions as a leading indicator of future conversion gains.

Why is Conversion Rate a Key Metric for LLM Traffic?

Conversion rate is a key metric for LLM traffic because it determines whether AI-driven sessions produce measurable revenue or qualified outcomes rather than superficial engagement. It matters because LLM traffic volume remains low while intent density remains high.

How does LLM traffic compare in terms of conversion performance? AI-referred visitors convert at an average rate of 13.8% compared to 9.3% for organic search. AI-driven conversions grew by more than 9700% year over year, while organic conversions grew by 121%. Platform-specific performance shows Copilot converting at many times the rate of direct traffic for subscriptions, with Perplexity and Gemini outperforming traditional channels in specific contexts.

Why does conversion rate reflect funnel compression? LLMs handle early research and comparison stages before a user clicks. By the time a user visits a website, they are typically near the final decision stage. Conversion rate reflects this filtering effect because LLM interfaces create friction that screens out low-intent users.

How should the conversion rate guide strategic decisions? Teams should prioritize LLM traffic when downstream conversion lift exceeds 15% over a 28-day window. Conversion rate serves as the primary filter to determine whether LLM traffic is meaningful or noise. Many organizations set success targets at a 10 to 20% increase in conversion completion within 60 days of LLM optimization.
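A lift check against the 15% threshold could look like the sketch below. It assumes "lift" means relative change between a baseline window and the current 28-day window, which the benchmark does not specify. The function names are hypothetical.

```python
def relative_lift_pct(baseline_rate: float, current_rate: float) -> float:
    """Relative change from baseline to current, in percent."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (current_rate - baseline_rate) / baseline_rate * 100


def meets_lift_threshold(baseline_rate: float, current_rate: float,
                         threshold_pct: float = 15.0) -> bool:
    """True when the relative lift exceeds the chosen threshold."""
    return relative_lift_pct(baseline_rate, current_rate) > threshold_pct


# Example: conversion rate moves from 10% to 12% across the two windows.
print(round(relative_lift_pct(0.10, 0.12), 1))
print(meets_lift_threshold(0.10, 0.12))
```

The same helper works for the engagement benchmark by passing engagement rates and a different threshold.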

What sector-specific patterns appear in conversion data? LLM conversion rates outperform organic search in sectors such as careers, health, publishing, and real estate. SaaS shows near parity between LLM and organic conversion rates, which confirms LLM’s effectiveness for complex products. Some sectors, such as B2B e-commerce, show lower direct conversion rates, which indicates that AI traffic may assist rather than close transactions.

Why is conversion rate a leading indicator of future value? Although AI referrals account for less than 1% of total sessions, their high conversion efficiency signals long-term channel value. Conversion rate validates whether AI-driven discoverability contributes to revenue even as click volume remains limited.

What data quality risks affect conversion measurement? Reported conversion rates vary widely due to attribution challenges and GA4 configuration errors. Some datasets show outliers exceeding 100% conversion, which highlights the importance of clean event definitions and consistent tracking when evaluating this metric.
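A basic sanity pass over exported rows can surface these outliers before they reach a report. This sketch assumes each row is a (source, sessions, conversions) tuple and that a conversion is counted at most once per session. If your setup counts every conversion event, rates above 100% can be legitimate and this check does not apply.

```python
def suspect_conversion_rows(rows):
    """Return (source, reason) pairs for rows whose counts look untrustworthy."""
    flagged = []
    for source, sessions, conversions in rows:
        if sessions <= 0:
            flagged.append((source, "no sessions recorded"))
        elif conversions > sessions:
            flagged.append((source, "conversion rate above 100%"))
    return flagged


rows = [
    ("chatgpt.com", 40, 6),
    ("perplexity.ai", 5, 9),   # more conversions than sessions
    ("claude.ai", 0, 0),       # nothing to divide by
]
for source, reason in suspect_conversion_rows(rows):
    print(source, "->", reason)
```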

What are the Challenges and Limitations in AI Traffic Tracking?

The challenges and limitations in AI traffic tracking include attribution loss, missing standards, low measurable volume, zero-click behavior, and inconsistent platform signals that prevent analytics systems from measuring AI-driven discovery with the same precision as traditional search traffic. These limitations matter because AI systems increasingly influence brand perception and decision-making inside AI interfaces without producing a measurable website session.

What technical attribution and measurement barriers limit AI traffic tracking? AI traffic tracking breaks at the attribution layer because most AI tools do not send referrer headers when users click links. There is no universal identifier, such as utm_source=chatgpt, applied automatically to AI-generated links. Some AI systems fetch and cache content on their own servers, which causes analytics platforms to record crawler requests such as GPTBot or ClaudeBot instead of the actual end-user interaction. AI referrals currently represent less than 1% of total referral traffic, which makes ROI difficult to justify using traditional volume-based metrics.

How does zero-click behavior obscure AI traffic measurement? Zero-click behavior obscures AI traffic measurement because users trust and consume AI-generated summaries without visiting the source website. Data shows that 42% of consumers rely on AI summaries without clicking a link, which removes sessions from analytics while still creating brand exposure. Consistent link tagging remains rare and is typically limited to publishers in formal partnerships with AI vendors.

What data accuracy and behavioral challenges distort AI traffic tracking? Direct traffic obfuscation occurs when users copy and paste URLs from AI interfaces, which causes traffic to appear as direct or unknown in analytics systems. AI-generated traffic can mimic human behavior patterns, which makes it difficult to distinguish genuine users from automated systems. Output volatility further complicates tracking because a brand mention visible at 9 AM may disappear or change by 3 PM. Cross-platform discrepancies reduce reliability because a brand may appear in Google AI Overview but remain absent in Perplexity or ChatGPT for the same topic.
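When a landing URL does carry a UTM tag, it can recover attribution even if the referrer is missing. The sketch below reads utm_source from the landing URL. The set of accepted source values is a hypothetical naming convention, not something AI platforms are guaranteed to apply.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Hypothetical utm_source values a team might standardize on.
AI_UTM_SOURCES = {"chatgpt", "perplexity", "gemini", "claude", "copilot"}


def ai_source_from_landing_url(landing_url: str) -> Optional[str]:
    """Return the AI source named in utm_source, or None if absent or unknown."""
    params = parse_qs(urlparse(landing_url).query)
    source = params.get("utm_source", [""])[0].lower()
    return source if source in AI_UTM_SOURCES else None


print(ai_source_from_landing_url("https://example.com/pricing?utm_source=ChatGPT"))
print(ai_source_from_landing_url("https://example.com/pricing"))
```

Untagged copy-paste visits still land in Direct, so this complements behavioral validation rather than replacing it.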

Why do rapid adoption pressures amplify these limitations? Adoption pressures increase urgency because AI summaries already appear in approximately 50% of U.S. Google searches, with projections indicating this figure may reach 75% by 2028. This shift expands zero-click exposure while shrinking the proportion of discovery that results in trackable website sessions.

What operational and environmental constraints affect AI traffic systems in physical environments? AI traffic systems operating in physical environments face stochastic performance because AI and machine learning outputs include inherent failure probability that is harder to quantify than traditional software errors. Out-of-distribution risks occur when AI systems encounter scenarios not present in training data, such as unrecognized roadwork or uncommon vehicle designs. Environmental complexity reduces tracking accuracy in dense urban areas where road use is unpredictable. Recognition failures can include missed pedestrians, incorrect lane identification, or localization errors that lead to stop-of-service events or accidents. Connectivity dependencies add additional risk because many systems require stable internet access and fail in low-connectivity environments.

What financial and infrastructure limitations restrict AI traffic tracking? AI traffic system implementation often requires high upfront costs ranging from $20,000 to more than $200,000 per project. Infrastructure gaps limit deployment because older systems cannot support advanced AI sensors without phased rollouts and additional hardware or cloud investment. Data quality standards act as a strict constraint because AI effectiveness depends on clean, labeled, and standardized data. Poor data quality leads to inaccurate routing decisions and unreliable outcomes.

How do regulatory, privacy, and ethical factors limit AI traffic tracking? Regulatory frameworks such as GDPR and CCPA impose strict data collection requirements, creating a privacy versus tracking trade-off for businesses. Regulatory fragmentation in the United States forces organizations to navigate state-level rules without a unified federal standard. Algorithmic bias introduces fairness risks when AI systems underperform in low-income or rural areas. The black-box nature of complex AI models reduces explainability, which complicates liability assessment and increases public mistrust after failures.

What human and labor risks affect AI traffic system reliability? Human-AI interaction challenges emerge when trust calibration fails. Excessive trust leads to operator complacency, while insufficient trust leads to underutilization of AI systems. Workload transition risk arises because human operators struggle to shift from low-intensity monitoring to high-stress intervention when AI systems fail. Job displacement concerns further complicate adoption, as projections suggest AI may affect nearly 40% of jobs worldwide, increasing resistance and the need for reskilling.

What performance data discrepancies create uncertainty in AI traffic tracking claims? Performance data varies across sources, particularly in environmental accuracy claims. One analysis reports AI accuracy levels of 95-98% in challenging conditions and darkness, while other findings state that video-based components require adequate lighting to maintain precision. This discrepancy indicates that performance may degrade in low-light conditions without supplemental sensors such as radar, which complicates validation and trust in reported metrics.

What are the Differences Between AI Visibility vs. LLM Traffic?

AI visibility refers to a brand’s presence, brand mentions, citations, and trust signals inside AI-generated answers, while LLM traffic refers to measurable referral visits and clicks that arrive on a website from LLM interfaces such as ChatGPT or Gemini. AI visibility matters as an authority and brand recognition metric inside zero-click environments, while LLM traffic matters as a direct traffic and conversion metric recorded in analytics.

What is the goal difference between AI visibility and LLM traffic? AI visibility aims to increase brand recognition and authority inside LLM responses, even when users do not click a link. LLM traffic aims to move users from the AI interface to a website through direct click-through visits. This goal difference matters because AI systems can satisfy intent without a website visit, which means visibility can grow while traffic remains flat.

What is the measurement difference between AI visibility and LLM traffic? AI visibility is measured using AI-specific query sets, brand mentions, citation frequency, and sentiment analysis inside AI responses. LLM traffic is measured in analytics systems as referral sessions from AI domains and referrer patterns associated with LLM interfaces. This measurement difference matters because analytics platforms capture attributable clicks but do not capture zero-click brand exposure.

What performance metrics define AI visibility compared to LLM traffic? AI visibility performance uses metrics such as inclusion in AI answers, AI Overview presence, brand mention rate, and citation frequency. LLM traffic performance uses metrics such as click-through rates, sessions, engagement, and conversion rates. This performance difference matters because AI visibility indicates recommendation and trust, while LLM traffic indicates measurable movement and action.
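Brand mention rate and citation frequency can be estimated from a sample of saved AI responses. The sketch below assumes each sampled response is a dict with a "text" field and a "cited_urls" list. That structure, the brand name, and the domain are placeholders for illustration.

```python
from urllib.parse import urlparse


def visibility_metrics(responses, brand: str, domain: str) -> dict:
    """Share of sampled AI responses that mention the brand or cite the domain."""
    if not responses:
        raise ValueError("at least one sampled response is required")

    def cites_domain(urls) -> bool:
        for url in urls:
            host = (urlparse(url).hostname or "").lower()
            if host == domain or host.endswith("." + domain):
                return True
        return False

    total = len(responses)
    mentions = sum(brand.lower() in r["text"].lower() for r in responses)
    citations = sum(cites_domain(r["cited_urls"]) for r in responses)
    return {"mention_rate": mentions / total, "citation_rate": citations / total}


samples = [
    {"text": "Acme and Globex are popular options.", "cited_urls": ["https://acme.example/guide"]},
    {"text": "Consider Globex.", "cited_urls": []},
    {"text": "acme offers a free tier.", "cited_urls": ["https://reviews.example/acme-vs-globex"]},
    {"text": "No clear leader.", "cited_urls": []},
]
print(visibility_metrics(samples, brand="Acme", domain="acme.example"))
```

Because AI output is volatile, the rates are only meaningful when the same query set is re-sampled on a regular schedule.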

What functional difference separates being selected from being clicked? AI visibility functions as being found and recommended inside the AI answer layer. LLM traffic functions as transferring the user from the AI answer layer to the website. This functional difference matters because AI systems often resolve the user question inside the interface, which reduces click incentive.

How does the relationship between visibility and traffic work in practice? AI visibility functions as a precursor signal because frequent mentions as a trusted source increase authority signals even when clicks do not occur. This relationship matters because high AI visibility does not guarantee high LLM traffic when the AI answer fully satisfies the intent. AI visibility can still influence outcomes indirectly through increased branded search, direct navigation, and long-term trust formation.

How should the optimization strategy differ for visibility versus traffic? Optimizing for AI visibility requires entity-based content that is easy to extract, summarize, and reuse inside AI answers. Optimizing for LLM traffic requires citation-ready placement and page-level relevance that encourages click-through when the AI provides sources. This strategy difference matters because visibility prioritizes selection and trust, while traffic prioritizes compelling pathways that convert exposure into a visit.

How to Optimize Content for AI Visibility and Traffic?

You can optimize content for AI visibility and traffic by making content crawlable, extractable, and trustworthy for AI systems that retrieve passages and synthesize answers. AI visibility optimization matters because AI Overviews reduce click-through rates by 30% to 50% and can satisfy intent inside the interface, which shifts success from rankings to citations, mentions, and high-intent referral sessions.

What are the 7 core methods to optimize content for AI visibility and traffic? The 7 methods to optimize content for AI visibility and traffic are listed below.

- Ensure technical accessibility

- Build topical authority

- Write structured content

- Prioritize E-E-A-T

- Leverage semantic search and intent

- Maintain content freshness

- Use multi-modal content

1. Ensure Technical Accessibility

Ensuring technical accessibility optimizes content for AI visibility and traffic by making pages reliably crawlable, readable, and indexable for AI crawlers and retrieval systems. Technical accessibility matters because many AI crawlers do not execute JavaScript, and AI assistants direct users to 404 pages nearly 3 times more often than traditional Google Search, which makes technical errors a direct cause of lost AI traffic.

How should teams implement technical accessibility for AI visibility? Implement technical accessibility using the steps below.

- Allow crawler access in robots.txt for AI crawlers such as GPTBot and ClaudeBot, and keep pages unblocked for Googlebot.

- Serve content in indexable HTML and use server-side rendering (SSR) when the site depends on client-side rendering.

- Return HTTP 200 status codes for indexable pages and fix 404 errors and redirect chains to reduce AI referral loss.

- Maintain high page speed and mobile readiness, because slow pages reduce crawl success and signal low quality.

- Implement schema markup in JSON-LD for products, reviews, FAQs, and events, because structured data improves entity identification and citation eligibility.

- Use clear heading hierarchy and machine-readable lists and tables, because AI systems extract content in reusable segments.

- Avoid hiding important content in tabs or accordions, because AI systems may not render hidden text.
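The robots.txt step above can be checked with Python's standard urllib.robotparser module. This is a minimal sketch: the robots.txt content and URL are made up for illustration, and the user-agent tokens should be confirmed against each vendor's current documentation.

```python
from urllib.robotparser import RobotFileParser


def crawler_access(robots_txt: str, url: str, agents) -> dict:
    """Map each user-agent token to whether robots.txt lets it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Illustrative robots.txt that blocks one AI crawler and allows everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

access = crawler_access(ROBOTS_TXT, "https://example.com/guide", ["GPTBot", "ClaudeBot", "Googlebot"])
for agent, allowed in access.items():
    print(agent, "allowed" if allowed else "blocked")
```

This check covers only robots.txt rules. Blocks applied at the CDN or firewall level need to be tested separately.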

2. Build Topical Authority

Building topical authority optimizes content for AI visibility and traffic by increasing repeated selection in AI answers through deep topic coverage and consistent internal context. Topical authority matters because AI systems evaluate content depth and citation patterns, and established topical authority is associated with a 68% increase in AI answer appearances.

How should teams build topical authority for AI visibility?

Build topical authority using the steps below.

- Define 3 to 5 core subtopics that represent real depth within the primary topic.

- Create a topic cluster where every cluster page links to the pillar page and each cluster page links to 2 to 3 other cluster pages.

- Use descriptive H2 and H3 headings, because hierarchical organization signals deep topic understanding to AI systems.

- Increase proof density using data tables, measurable claims, and original research, because AI systems prioritize verifiable evidence.

- Identify knowledge gaps where AI answers remain generic or outdated, then publish content that resolves those gaps with specific facts.

- Validate visibility by testing 20 to 30 baseline queries across ChatGPT, Claude, and Perplexity, then track whether citations and mentions increase over time.

- Refresh top-performing cluster content every 6 to 12 months to maintain authority signals.
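The internal linking rule from the steps above can be audited with a simple link map. The sketch assumes the map comes from a site crawl and takes the form of a dict from each page to the set of pages it links to. The paths are placeholders.

```python
def cluster_link_issues(pillar: str, links: dict) -> dict:
    """Report cluster pages that miss the pillar link or have under 2 sibling links."""
    issues = {}
    for page, targets in links.items():
        if page == pillar:
            continue
        problems = []
        if pillar not in targets:
            problems.append("missing link to pillar page")
        # Count links that point to other pages inside the same cluster.
        siblings = [t for t in targets if t in links and t not in (pillar, page)]
        if len(siblings) < 2:
            problems.append("fewer than 2 links to other cluster pages")
        if problems:
            issues[page] = problems
    return issues


links = {
    "/llm-traffic": {"/tracking", "/metrics", "/optimization"},
    "/tracking": {"/llm-traffic", "/metrics", "/optimization"},
    "/metrics": {"/llm-traffic", "/tracking"},
    "/optimization": {"/tracking", "/metrics"},
}
print(cluster_link_issues("/llm-traffic", links))
```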

3. Write Structured Content

Writing structured content optimizes content for AI visibility and traffic by improving passage-level extraction, summarization accuracy, and citation selection inside AI answers. Structured content matters because studies associate AI citations with structural factors such as clarity and summarization at 32.83%, E-E-A-T signals at 30.64%, Q and A formatting at 25.45%, headings at 22.91%, and lists or tables at 21.60%.

How should teams structure content for AI extraction and reuse?

Structure content using the steps below.

- Write modular content chunks of 200 to 400 words that answer one query completely.

- Place the direct answer in the first 1 to 2 lines of each section, because AI crawlers prioritize early answer placement for extraction.

- Format headings as questions and keep a consistent H1 to H4 hierarchy, because headings act as chapter titles for machine parsing.

- Use short paragraphs and scannable layouts with numbered steps and bullet lists, because long walls of text reduce segment reuse.

- Use HTML tables for comparisons and specifications, because AI extracts data from tables more reliably than images.

- Add 8 to 10 substantial FAQ questions and answers in visible page content, and avoid hiding FAQs in accordions.

- Use simple punctuation and avoid decorative symbols, because complex characters can break machine parsing.
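Two of the steps above, chunk length and question-style headings, are easy to check automatically. The sketch below assumes the page has already been split into a dict of heading to body text. How that split happens depends on your CMS and is not shown.

```python
def audit_sections(sections: dict, min_words: int = 200, max_words: int = 400) -> dict:
    """Flag sections outside the target word range or without a question heading."""
    report = {}
    for heading, body in sections.items():
        issues = []
        word_count = len(body.split())
        if not min_words <= word_count <= max_words:
            issues.append(f"{word_count} words (target {min_words}-{max_words})")
        if not heading.strip().endswith("?"):
            issues.append("heading is not phrased as a question")
        if issues:
            report[heading] = issues
    return report


sections = {
    "What Is LLM Traffic?": "word " * 250,  # stand-in for real body text
    "Overview": "word " * 50,
}
print(audit_sections(sections))
```

Word counts are a rough proxy. A section can sit inside the range and still fail to answer its query completely.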

4. Prioritize E-E-A-T

Prioritizing E-E-A-T optimizes content for AI visibility and traffic by increasing trust signals that AI systems use during passage ranking, verification, and citation selection. E-E-A-T matters because AI Overviews appear in 57% of search engine results pages, and 52% of cited sources come from the top 10 organic results, which ties trust signals to both ranking and AI citation eligibility.

How should teams prioritize E-E-A-T for AI visibility?

Prioritize E-E-A-T using the steps below.

- Make authorship transparent using visible bylines, credentials, and organizational attribution, because AI systems evaluate accountability.

- Support claims with primary sources and verifiable evidence, because 84% of US users fear hallucinations, and engines prefer high-confidence data.

- Include experience signals such as original photos, first-hand trials, or field anecdotes, because AI lacks real-world interaction and prioritizes direct evidence.

- Maintain HTTPS and keep Time to First Byte under 200 ms, because performance and security support trust and crawler persistence.

- Use schema to connect author and organization entities, because nested identity signals reinforce authority.

- Permit AI crawlers through robots.txt when business policy allows, because blocked access prevents selection regardless of quality.

- Track citation inclusion and brand mentions as the primary KPI, because zero-click exposure can reach 75% for AI overview keywords, and only 8% of users click through in some contexts.
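The schema step above, connecting author and organization entities, can be sketched as JSON-LD generated from Python. The types and properties used here (Article, Person, Organization, worksFor, publisher) are standard schema.org vocabulary. All names, titles, and URLs are placeholders.

```python
import json


def article_jsonld(headline, author_name, author_title, org_name, org_url, date_modified):
    """Build Article markup whose author is nested under the publishing organization."""
    organization = {"@type": "Organization", "name": org_name, "url": org_url}
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": date_modified,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
            "worksFor": organization,
        },
        "publisher": organization,
    }


markup = article_jsonld(
    headline="What Is LLM Traffic?",
    author_name="Jane Doe",            # placeholder author
    author_title="Head of SEO",        # placeholder credential
    org_name="Example Co",             # placeholder organization
    org_url="https://example.com",
    date_modified="2025-01-15",
)
print(json.dumps(markup, indent=2))
```

The output belongs in a script tag of type application/ld+json on the page, and should match the visible byline.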

5. Leverage Semantic Search and Intent

Leveraging semantic search and intent optimizes content for AI visibility and traffic by aligning content with entity relationships and intent categories that AI systems use for retrieval and synthesis. Semantic intent alignment matters because AI systems prioritize entities and context over exact-match keywords, and long natural language queries are growing faster than short searches.

How should teams implement semantic and intent optimization?

Implement semantic and intent optimization using the steps below.

- Define entities and attributes explicitly using consistent terminology, because entity-based search depends on stable identity resolution.

- Map content to intent categories such as informational, navigational, commercial investigation, and transactional, then match page structure to the intent type.

- Write headings that mirror real prompts and include direct answers that reduce synthesis work for AI systems.

- Use schema markup and knowledge graph connections in JSON-LD to define relationships and reduce ambiguity.

- Add contextual anchors, such as use-case constraints and environment qualifiers, because specific context improves classification accuracy.

- Maintain clean code and structured layouts, because AI bots can deprioritize pages with high processing costs.

6. Maintain Content Freshness

Maintaining content freshness optimizes content for AI visibility and traffic by matching the recency bias used in AI citation selection and real-time retrieval workflows. Freshness matters because 53% of content cited in ChatGPT was updated within the previous 6 months, and AI-cited content is 25.7% fresher on average than traditional organic search results.

How should teams maintain freshness for AI visibility and traffic outcomes?

Maintain content freshness using the steps below.

- Update high-impact pages monthly when topics change frequently, including pricing, regulations, and fast-moving technology.

- Update evergreen pages every 6 to 12 months to maintain citation eligibility and reduce interpretation drift.

- Replace outdated statistics with current research, because meaningful updates outperform date-only refreshing and preserve trust.

- Keep visible publication dates and update timestamps, because temporal clarity supports relevance scoring.

- Request re-indexing when major updates occur, because performance changes often appear within 2 to 6 weeks after re-index requests.

- Prioritize pages ranking in positions 11 to 20 for refresh cycles, because these pages typically require less effort to move into higher visibility zones.

- Monitor AI mentions and citations to detect when older external sources define the narrative, then publish corrective updates quickly.
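The refresh cadences above can be turned into a due list. This sketch assumes each page is tagged as "fast_moving" or "evergreen" and uses 30 and 365 days as the intervals, taking the upper end of the 6 to 12 month range. Both the tags and the exact day counts are assumptions to adjust.

```python
from datetime import date, timedelta

# Assumed intervals: monthly for fast-moving topics, yearly for evergreen pages.
REFRESH_INTERVAL_DAYS = {"fast_moving": 30, "evergreen": 365}


def pages_due_for_refresh(pages: dict, today: date) -> list:
    """Return URLs whose last update is older than their refresh interval."""
    due = []
    for url, (kind, last_updated) in pages.items():
        interval = timedelta(days=REFRESH_INTERVAL_DAYS[kind])
        if today - last_updated > interval:
            due.append(url)
    return sorted(due)


pages = {
    "/pricing": ("fast_moving", date(2025, 4, 1)),
    "/guide": ("evergreen", date(2025, 1, 15)),
    "/glossary": ("evergreen", date(2024, 1, 1)),
}
print(pages_due_for_refresh(pages, today=date(2025, 6, 1)))
```

A due date is only a trigger. The update itself still needs substantive changes such as replaced statistics, not just a new timestamp.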

7. Use Multi-Modal Content

Using multi-modal content optimizes content for AI visibility and traffic by increasing citation eligibility across systems that prioritize images, video, and structured visuals as grounding evidence. Multi-modal optimization matters because AI search engines send 96% less referral traffic than traditional search, which increases the value of being cited and recognized in rich formats, and video increases dwell time by 88%.

How should teams execute multi-modal optimization for AI systems?

Execute multi-modal optimization using the steps below.

- Publish video content with accurate time-synced transcripts, because videos without transcripts are effectively invisible to AI extraction.

- Implement the VideoObject schema, including duration and key moment markup, because AI systems need segment-level access for direct answers.

- Implement the ImageObject schema and use modern image formats such as WebP or AVIF, because AI systems favor efficient and readable assets.

- Write descriptive alt text focused on intent and context, because AI extraction from images is less reliable without text grounding.

- Use charts and process visuals that present factual proof, because structured visuals reduce hallucination risk and increase citation confidence.

- Embed videos alongside related articles inside content clusters, because mixed formats increase citation likelihood compared to single-format publishing.

- Distribute multi-modal assets on high-trust platforms such as YouTube, because inclusion can occur within hours or minutes in AI results.
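The VideoObject step above can be sketched as generated JSON-LD with an ISO 8601 duration and key moments expressed as Clip parts. VideoObject, Clip, hasPart, startOffset, and endOffset are schema.org vocabulary. The video details are placeholders, and individual search engines may require additional fields, such as a URL per clip.

```python
import json


def iso8601_duration(total_seconds: int) -> str:
    """Convert seconds to an ISO 8601 duration such as PT7M35S."""
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    text = "PT"
    if hours:
        text += f"{hours}H"
    if minutes:
        text += f"{minutes}M"
    if seconds or text == "PT":
        text += f"{seconds}S"
    return text


def video_jsonld(name, description, content_url, upload_date,
                 duration_seconds, transcript, key_moments):
    """Build VideoObject markup; key_moments is a list of (label, start, end) in seconds."""
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "contentUrl": content_url,
        "uploadDate": upload_date,
        "duration": iso8601_duration(duration_seconds),
        "transcript": transcript,
        "hasPart": [
            {"@type": "Clip", "name": label, "startOffset": start, "endOffset": end}
            for label, start, end in key_moments
        ],
    }


video = video_jsonld(
    name="Tracking LLM Traffic in GA4",                  # placeholder title
    description="Walkthrough of isolating AI referrers.",
    content_url="https://example.com/videos/llm-traffic.mp4",
    upload_date="2025-01-15",
    duration_seconds=455,
    transcript="Full time-synced transcript text goes here.",
    key_moments=[("Set up the segment", 0, 120), ("Read the report", 120, 455)],
)
print(json.dumps(video, indent=2))
```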

What Does the Future of LLM-driven Traffic Look Like?

The future of LLM-driven traffic points toward rapid growth in influence, higher conversion efficiency, and a structural shift from ranking-based discovery to AI-mediated selection, even as direct referral volume remains constrained. This future matters because LLMs increasingly act as the primary information guide, shaping decisions inside AI interfaces before any website visit occurs.

How will market share and usage patterns evolve for LLM-driven traffic? LLM-driven traffic continues to expand across platforms and contexts. ChatGPT currently controls approximately 84.2% of AI referrals and reached a peak of over 200,000 sessions in October 2025, representing a 4.29x increase from its November 2024 baseline. At the same time, embedded AI systems such as Microsoft Copilot and Claude show faster relative growth, signaling a shift toward AI integrated into the workplace and productivity tools. Industry projections suggest that LLM search traffic could surpass traditional organic search by 2028, with some models forecasting LLMs reaching 75% market share by that point.

Why will zero-click behavior define the future of LLM-driven traffic? Zero-click behavior will dominate because AI systems are designed to retain users by delivering complete answers inside the interface. Traditional search usage is projected to decline by 25% by 2026 as AI assistants replace exploratory browsing. When an AI Overview appears, only about 8% of users click through to source material, and overall zero-click rates already exceed 60% in many contexts. This creates a decoupling between brand impressions and measurable traffic, where influence grows faster than sessions.

How will economic value change despite lower click volume? LLM-driven traffic is small in volume but high in intent. Although AI referrals represent roughly 0.13% of total sessions, they convert on average 4.4 times better than traditional search traffic, with some reports showing conversion efficiency as high as 23 times in specific contexts. By late 2027, AI-driven discovery is expected to deliver economic value equal to or greater than traditional search, even if raw traffic volume remains lower. A known limitation is the signup versus purchase gap, where signups may increase sharply while paid conversions lag, indicating a need for better mid-to-bottom funnel alignment.

Which industries will adopt LLM-driven discovery fastest? Adoption accelerates most rapidly in high-trust and high-stakes categories. Legal, finance, and health show the fastest growth rates, while e-commerce experiences strong seasonal spikes. Landing page behavior already reflects this shift, with ecommerce users landing on product pages, finance users on educational guides, and health users on credibility-focused pages such as About sections. These patterns indicate that AI systems route users based on intent verification rather than generic discovery.

How will content and SEO practices evolve? Success will shift from ranking higher to being selected and cited. Generative Engine Optimization focuses on content that is easy to parse, summarize, and trust, using clear headings, factual bullet points, and structured metadata. User-generated content and discussion platforms play a growing role, as AI systems heavily reference human discussion sources. Technical standards such as llms.txt and structured documentation formats are emerging, although adoption and effectiveness remain uneven.

What user behavior trends shape the long-term outlook? Younger demographics lead adoption. Nearly half of Gen Z users report discovering new products through AI tools, and hybrid usage dominates, with most AI users continuing to use Google alongside LLMs. AI chat is now frequently cited as the primary discovery source in customer surveys, reinforcing its role as a top-of-funnel and mid-funnel influence channel.

Is LLM Traffic the Same as SEO Traffic?

No, LLM traffic is not the same as SEO traffic because LLM-driven discovery relies on AI synthesis, entity selection, and recommendations inside answers, while SEO traffic relies on ranked retrieval and user clicks from search results. In SEO, influence begins after the click, when a user lands on a page. In LLM systems, influence begins before any click, through explanations, summaries, and brand mentions that may never generate a visit. As a result, organic SEO still drives most measurable volume at roughly 15.6% of site sessions, while LLM traffic remains small at around 0.13% but delivers disproportionately high intent and conversion efficiency. Measurement also differs: SEO success is tracked through rankings, sessions, and click-through rates, while LLM success is tracked through brand mentions, citations, sentiment, and inclusion rates, often inferred indirectly through branded search lift and downstream behavior rather than direct referrals.

Is Prioritizing AI Search Optimization Worth It?

Yes, prioritizing AI search optimization is worth it because AI-driven discovery is growing quickly, delivers higher-intent users, and increasingly determines which brands are recommended before a website visit occurs. AI interfaces are becoming a primary decision layer, with AI Overviews appearing in a rising share of searches, while most organizations still do not actively optimize for AI visibility. This creates a clear first-mover advantage, especially as AI-referred users arrive pre-informed and convert at higher rates, particularly on decision-stage content such as comparisons and buying guides.

AI search optimization also future-proofs visibility by strengthening entity authority rather than relying on fixed keyword rankings. As AI systems replace static positions with contextual answers, success depends on appearing consistently across many variable responses, including zero-click environments where exposure matters more than traffic. While risks exist, such as reduced direct clicks and less deterministic ROI measurement, sectors like legal, healthcare, finance, SaaS, and education already show strong returns. Strategically, AI search optimization extends SEO rather than replacing it, and delaying adoption risks losing recommendation moments that influence decisions long before a click happens.