An AI audit checklist is a structured governance framework that defines how an AI audit evaluates artificial intelligence systems for accuracy, fairness, security, transparency, and compliance across the full lifecycle. An artificial intelligence audit assesses data integrity, model performance, bias controls, explainability standards, deployment safeguards, and post-production monitoring controls. The AI audit checklist converts these evaluation domains into measurable stages, documented controls, and enforceable accountability thresholds. This structure transforms AI governance from informal review into standardized risk validation aligned with regulatory and enterprise requirements.

The AI audit checklist matters because it establishes defensible oversight, regulatory alignment, and lifecycle accountability for artificial intelligence systems. AI auditing verifies that governance frameworks, risk classifications, bias mitigation protocols, and compliance documentation operate within defined thresholds. Artificial intelligence audit procedures prevent regulatory exposure, reduce operational blind spots, and reinforce public trust in AI-driven decisions. Unlike traditional software audits that test deterministic logic, an AI audit examines probabilistic models, adaptive behavior, drift exposure, and ethical impact. This distinction positions AI auditing as a specialized governance discipline required for responsible AI deployment.

The AI audit checklist organizes evaluation into six core domains and five structured stages that guide systematic execution. Core domains include Governance and Compliance, Data Quality and Integrity, Fairness and Bias Mitigation, Security and Robustness, Explainability and Transparency, and Post-Deployment Monitoring. The five-stage AI audit checklist consists of Preparation and Scope Definition, Technical Evaluation of Models and Data, Risk and Compliance Assessment, Operational Review and Validation, and Reporting and Remediation. These domains and stages create traceable checkpoints that align artificial intelligence audit practices with measurable validation standards and structured remediation workflows.

The AI audit checklist is conducted through multidisciplinary governance, technical validation, risk-based prioritization, continuous monitoring, and structured reporting supported by specialized AI audit platforms and compliance tools. Best practices include early governance integration, documented version control, fairness testing, adversarial validation, and regulatory framework mapping (EU AI Act, NIST AI Risk Management Framework, ISO/IEC 42001). Effective AI auditing leverages AI audit platforms, compliance automation tools, drift detection systems, and governance frameworks to maintain traceability and audit readiness. Common challenges include skill shortages, regulatory uncertainty, data privacy risk, integration complexity, cost barriers, and resistance to change. Structured AI auditing mitigates these challenges by embedding accountability, continuous oversight, and enforceable controls throughout the artificial intelligence lifecycle.

What Is an AI Audit?

An AI audit is a structured, evidence-based evaluation that assesses how an AI system is designed, trained, validated, and deployed against defined governance, risk, and compliance standards. An AI audit examines the full lifecycle of an artificial intelligence system and verifies alignment with legal, ethical, and operational requirements. An AI audit establishes documented proof that artificial intelligence systems operate accurately, fairly, securely, and transparently.

How does an AI audit function as a governance mechanism? An AI audit functions as a governance mechanism that connects artificial intelligence systems to accountability frameworks and regulatory controls. An AI audit evaluates algorithmic behavior, data bias, explainability standards, and lifecycle oversight instead of only testing functional software performance. This governance role positions the AI audit within risk management and compliance structures for advanced technology systems.

What core properties define an AI audit? An AI audit is defined by three core properties: scope, evidence, and validation. Scope covers the entire lifecycle from data collection to post-deployment monitoring. Evidence includes documented metrics, testing logs, model documentation, and governance records. Validation applies measurable testing to confirm accuracy thresholds, bias limits, robustness levels, and regulatory alignment.

What components does an AI audit evaluate within an AI system? An AI audit evaluates three interconnected components. The components are data, model, and deployment. Data evaluation reviews dataset accuracy, labeling integrity, representativeness, and privacy safeguards. Model evaluation tests performance metrics, demographic parity, robustness under stress conditions, and reproducibility controls. Deployment evaluation reviews governance workflows, human oversight mechanisms, monitoring systems, and compliance documentation.

Why has the AI audit become a regulatory requirement? An AI audit has become a regulatory requirement because governments and standards bodies have formalized accountability standards for artificial intelligence systems. Regulatory and policy frameworks (OECD AI Principles 2019, GAO AI Accountability Framework 2021, EU AI Act 2024) introduced documentation, transparency, and testing expectations. This regulatory evolution establishes the AI audit as a formal control layer in high-risk artificial intelligence environments.

What primary functions does an AI audit perform? An AI audit performs three primary functions. Firstly, risk identification detects bias, performance degradation, and security vulnerabilities. Secondly, compliance validation confirms alignment with governance frameworks and regulatory mandates. Thirdly, accountability documentation records model decisions, training lineage, and monitoring controls for stakeholder review.

How does an AI audit operate over time? An AI audit operates as a continuous governance process rather than a one-time inspection. Continuous monitoring verifies drift detection, fairness stability, and performance thresholds across production environments. This lifecycle continuity defines the AI audit as a structured accountability system for auditing artificial intelligence at scale. It also connects directly with Artificial Intelligence Optimization (AIO), which structures AI systems and data signals so models remain interpretable, reliable, and compliant within AI-driven environments.

What Does an AI Audit Evaluate?

An AI audit evaluates data integrity, model performance, fairness controls, security safeguards, transparency standards, and governance compliance across the full lifecycle of an AI system. An AI audit verifies whether artificial intelligence systems operate within defined legal, ethical, and organizational boundaries. An AI audit measures documented evidence instead of relying on internal claims or informal reviews.

What does an AI audit evaluate in relation to data quality and bias? An AI audit evaluates data sources, data preprocessing methods, dataset accuracy, representativeness, and bias exposure before and after deployment. An AI audit inspects data lineage, labeling consistency, retention controls, and privacy protections. An AI audit applies fairness metrics to detect disparities across demographic groups and documents bias thresholds against defined risk standards.

What does an AI audit evaluate in relation to model transparency and explainability? An AI audit evaluates whether model logic, feature importance, and decision pathways are documented, interpretable, and reproducible. An AI audit reviews explainability reports, validation metrics, and technical documentation that describe how outputs are generated. An AI audit confirms that stakeholders receive traceable explanations for automated decisions, especially in high-risk environments.

What does an AI audit evaluate in relation to regulatory compliance? An AI audit evaluates adherence to applicable regulations and governance frameworks that regulate artificial intelligence systems. An AI audit maps system controls to regulatory requirements (GDPR, EU AI Act, HIPAA, ISO 27001, ISO/IEC 42001) and documents compliance status. An AI audit identifies control gaps and records remediation actions within formal audit reports.
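
The mapping of system controls to regulatory requirements described above can be sketched as a small gap analysis. This is a minimal illustration, not a prescribed audit method: the `Control` record, the `find_gaps` helper, and the control IDs and evidence file names are all hypothetical, and a control is assumed to count toward a framework only when it has at least one piece of documented evidence.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    """Hypothetical record of one system control (illustrative only)."""
    control_id: str
    description: str
    frameworks: set[str]                                # frameworks the control maps to
    evidence: list[str] = field(default_factory=list)   # references to audit evidence


def find_gaps(controls, required_frameworks):
    """Return (frameworks with no evidenced control, control IDs lacking evidence)."""
    covered = set()
    missing_evidence = []
    for control in controls:
        if control.evidence:
            covered |= control.frameworks
        else:
            # Assumption: an undocumented control does not count as coverage.
            missing_evidence.append(control.control_id)
    uncovered = sorted(set(required_frameworks) - covered)
    return uncovered, missing_evidence


controls = [
    Control("C-01", "Data subject access workflow", {"GDPR"}, ["dsar-procedure.pdf"]),
    Control("C-02", "High-risk system registration", {"EU AI Act"}),
]
uncovered, missing = find_gaps(controls, ["GDPR", "EU AI Act", "HIPAA"])
print("Frameworks without evidenced controls:", uncovered)
print("Controls missing evidence:", missing)
```

The two returned lists correspond to the control gaps and remediation items that the formal audit report would record.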

What does an AI audit evaluate in relation to security and robustness? An AI audit evaluates exposure to adversarial attacks, data poisoning risks, model extraction vulnerabilities, and system misuse scenarios. An AI audit reviews access controls, encryption practices, monitoring logs, and anomaly detection systems. An AI audit confirms that performance remains stable under stress conditions and that defensive controls prevent unauthorized manipulation.

What does an AI audit evaluate in relation to organizational governance? An AI audit evaluates oversight structures, accountability roles, policy documentation, and escalation workflows that govern AI system deployment. An AI audit reviews documented responsibilities for model approval, monitoring, and exception handling. An AI audit verifies that governance processes align with defined risk appetite and enterprise compliance standards.

What defines the scope of what an AI audit evaluates? An AI audit evaluates the entire AI lifecycle from data acquisition and model training to deployment and post-deployment monitoring. An AI audit applies comprehensive lifecycle coverage to prevent fragmented risk assessment. An AI audit integrates technical testing, compliance validation, and governance verification into a single structured accountability framework.

Why Are AI Audits Important?

AI audits are important because they establish trust, enforce regulatory compliance, reduce algorithmic bias, strengthen security controls, improve audit quality, and preserve human accountability in artificial intelligence systems. AI audits create documented evidence that artificial intelligence systems operate within defined ethical and legal boundaries. AI audits reduce operational, legal, and reputational risk through structured lifecycle evaluation.

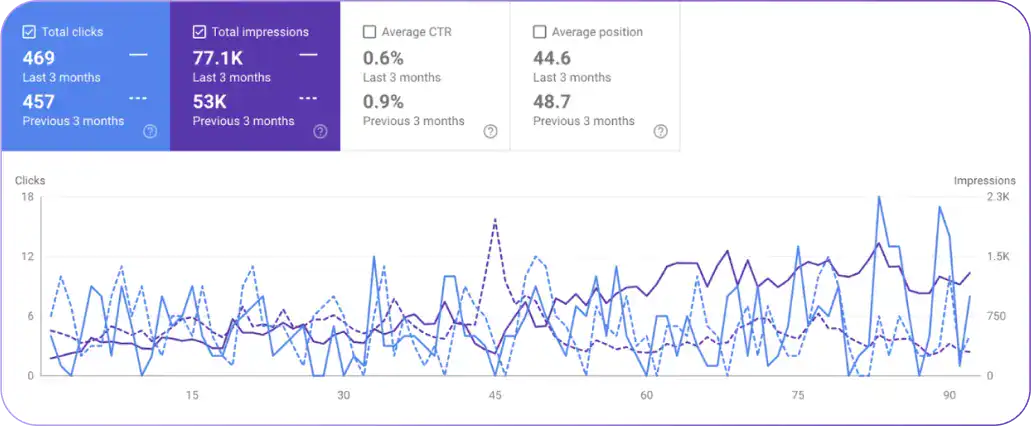

How do AI audits uphold trust and confidence in artificial intelligence systems? AI audits uphold trust and confidence by providing independent verification that AI systems operate securely, accurately, and transparently. Internal audit functions act as a control layer that validates risk management practices and governance alignment. A 2024 IBM Institute for Business Value survey reported that 82% of C-suite executives consider secure and trustworthy AI essential for business success. Transparent audit reporting reinforces accountability in high-impact domains (finance, healthcare, and public administration).

Why is regulatory compliance a key driver for AI audits? Regulatory compliance is a key driver for AI audits because emerging AI regulations require documented oversight, transparency, and risk controls. Frameworks (EU Artificial Intelligence Act 2024, NIST AI Risk Management Framework, GAO AI Accountability Framework 2021) define governance expectations and auditing standards. AI audits verify conformity with ISO 27001, ISO/IEC 42001, SOC 3, and sector-specific compliance obligations. Formal verification prevents regulatory penalties and strengthens defensibility during external review.

What role do AI audits play in mitigating algorithmic bias? AI audits mitigate algorithmic bias by detecting disparities in training data, model outputs, and demographic performance metrics. AI audits apply fairness evaluations, subgroup error-rate comparisons, and dataset representativeness analysis. Bias detection prevents discriminatory outcomes in hiring systems, credit scoring models, healthcare triage tools, and law enforcement applications. Structured bias controls reduce unequal treatment and protect organizational credibility.

How do AI audits strengthen security and data integrity? AI audits strengthen security and data integrity by evaluating access controls, encryption standards, adversarial resilience, and monitoring systems. AI audits test vulnerability exposure to adversarial attacks, data poisoning, and model extraction attempts. AI audits review governance controls that restrict unauthorized access to training and production environments. Structured security validation reduces breach probability and protects sensitive operational data.

In what ways do AI audits enhance audit quality and efficiency? AI audits enhance audit quality and efficiency by combining automated analysis with comprehensive dataset evaluation. AI-powered review systems analyze full datasets instead of limited samples, which increases anomaly detection accuracy. Automated reconciliation and transaction monitoring reduce manual error rates. Expanded dataset coverage improves risk detection precision and strengthens overall audit reliability.

Why is human oversight and accountability critical in AI auditing? Human oversight and accountability are critical in AI auditing because automated systems require expert interpretation and ethical judgment. Governance frameworks require documented human review for high-risk decisions and exception handling. Auditors define evaluation standards, interpret model findings, and validate remediation plans. Human control ensures that artificial intelligence decisions remain aligned with legal, ethical, and organizational accountability requirements.

How Do AI Audits Differ From Traditional Software Audits?

AI audits differ from traditional software audits because AI audits evaluate probabilistic models, dynamic learning behavior, bias risk, and lifecycle governance, while traditional software audits evaluate deterministic code, static controls, and rule-based system compliance. An AI audit examines training data, model drift, fairness metrics, and explainability standards. A traditional software audit examines configuration accuracy, access controls, change logs, and system integrity within predictable logic structures.

What methodological differences separate AI audits from traditional software audits? AI audits rely on full-population model evaluation and lifecycle validation, while traditional software audits rely on sampling, manual procedures, and control testing. An AI audit can evaluate the full population of logged model outputs through automated analytics and behavioral testing, rather than a sample. A traditional software audit reviews selected transactions and predefined checkpoints. This methodological shift expands coverage and increases anomaly detection precision in AI systems.

How do monitoring and frequency differ between AI audits and traditional software audits? AI audits operate through continuous monitoring and drift detection, while traditional software audits operate through periodic review cycles. An AI audit tracks model performance in real time and flags deviations from accuracy or fairness thresholds. A traditional software audit reviews controls quarterly or annually and evaluates historical performance. Continuous oversight reduces delayed risk detection in AI environments.
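
As a rough sketch of how a monitoring job might flag deviations from accuracy or fairness thresholds, the helper below compares a snapshot of metrics against configured bounds. The function name `check_thresholds`, the metric names, and the bound values are assumptions chosen for illustration; real thresholds would come from the organization's documented risk appetite.

```python
def check_thresholds(metrics, thresholds):
    """Compare current metrics to (minimum, maximum) bounds.

    `thresholds` maps a metric name to a (low, high) tuple; use None for
    an unbounded side. Returns a list of (metric, reason) breaches.
    """
    breaches = []
    for name, (low, high) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            # A metric that stopped reporting is itself an audit finding.
            breaches.append((name, "missing"))
        elif low is not None and value < low:
            breaches.append((name, "below minimum"))
        elif high is not None and value > high:
            breaches.append((name, "above maximum"))
    return breaches


# Hypothetical bounds: accuracy must stay at or above 0.90,
# and the gap in selection rates between groups at or below 0.10.
thresholds = {"accuracy": (0.90, None), "parity_gap": (None, 0.10)}
current = {"accuracy": 0.87, "parity_gap": 0.04}

for metric, reason in check_thresholds(current, thresholds):
    print(f"ALERT: {metric} is {reason}")
```

Running a check like this on every scoring batch, rather than quarterly, is what distinguishes continuous oversight from a periodic review cycle.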

How does data coverage differ between AI audits and traditional software audits? AI audits analyze entire datasets and training pipelines, while traditional software audits analyze limited data samples within transactional systems. An AI audit processes large-scale datasets through automated pattern recognition. A traditional software audit applies risk-based sampling due to manual resource constraints. Full dataset analysis increases visibility into hidden bias, model drift, and anomalous behavior.

How do risk detection capabilities differ between AI audits and traditional software audits? AI audits apply predictive analytics and behavioral testing, while traditional software audits focus on retrospective compliance verification. An AI audit identifies bias patterns, adversarial vulnerabilities, hallucination risk, and performance degradation trends. A traditional software audit identifies misstatements, access control failures, and procedural deviations. Predictive detection strengthens proactive mitigation in AI systems.

How do compliance and regulatory drivers differ between AI audits and traditional software audits? AI audits align with emerging AI governance regulations, while traditional software audits align with established financial and IT control standards. AI audits address regulatory frameworks (EU AI Act, NIST AI Risk Management Framework, ISO/IEC 42001). Traditional software audits align with GAAP, IFRS, SOX, COBIT, and ISO 27001. AI-specific oversight addresses explainability, fairness, and lifecycle accountability beyond conventional compliance checks.

How does the nature of audited systems differ between AI audits and traditional software audits? AI audits evaluate adaptive machine learning systems, while traditional software audits evaluate rule-based deterministic systems. AI systems evolve through retraining and environmental interaction, which introduces drift and probabilistic outcomes. Traditional systems execute fixed logic and generate predictable outputs. Dynamic learning behavior creates audit complexity unique to artificial intelligence.

How do human involvement and skill requirements differ between AI audits and traditional software audits? AI audits require interdisciplinary expertise across data science, ethics, cybersecurity, and regulatory governance, while traditional software audits require domain expertise in accounting, IT controls, and compliance. AI audit teams interpret model outputs, fairness metrics, and adversarial testing results. Traditional audit teams interpret control effectiveness and transaction accuracy. Expanded technical requirements reflect the analytical depth of auditing artificial intelligence systems.

How do cost structures differ between AI audits and traditional software audits? AI audits involve higher initial infrastructure investment but lower marginal analysis cost over time, while traditional software audits remain labor-intensive with recurring manual costs. AI automation reduces repetitive verification effort and increases analysis speed. Traditional audits depend on sustained human resource allocation for sampling and reporting cycles.

How does the regulatory landscape differ between AI audits and traditional software audits? AI audits operate within emerging AI governance ecosystems, while traditional software audits operate within established auditing standards. AI governance continues to evolve and lacks universal auditing standards. Traditional software auditing follows mature global frameworks and established certification models. Regulatory fluidity increases the strategic importance of structured AI audit programs.

What Are the Core Domains of an AI Audit?

There are 6 core domains of an AI audit. These 6 domains define lifecycle coverage and align with AI audit best practices. Each domain evaluates a distinct risk category within artificial intelligence systems.

The 6 core domains of an AI audit are listed below.

- Governance and Compliance.

- Data Quality and Integrity.

- Fairness and Bias Mitigation.

- Security and Robustness.

- Explainability and Transparency.

- Post-Deployment Monitoring.

1. Governance and Compliance

Governance and Compliance AI audit is a structured evaluation that assesses whether an organization’s AI systems operate within defined legal, ethical, and risk management frameworks. Governance and Compliance AI audit verifies documented accountability structures, regulatory mapping, and policy enforcement across the AI lifecycle. Governance and Compliance AI audit provides independent assurance that artificial intelligence systems align with enterprise governance standards and external regulations.

What does Governance and Compliance AI audit evaluate? Governance and Compliance AI audit evaluates oversight structures, risk classification models, regulatory controls, and documented approval workflows for AI systems. Governance and Compliance AI audit reviews model inventories, deployment authorization records, escalation procedures, and board-level accountability documentation. Governance and Compliance AI audit confirms that high-risk AI applications receive proportionate scrutiny and formal review before production release.

How does Governance and Compliance AI audit differ from general IT audits? Governance and Compliance AI audit differs from general IT audits because it evaluates AI-specific risks, bias exposure, explainability requirements, and algorithmic accountability. General IT audits focus on infrastructure integrity, access control enforcement, and system configuration accuracy. Governance and Compliance AI audit focuses on decision transparency, lifecycle traceability, and risk categorization of adaptive models. This distinction positions Governance and Compliance AI audit within AI audit best practices for regulated AI environments.

What evidence supports Governance and Compliance AI audit findings? Governance and Compliance AI audit findings rely on documented bias mitigation controls, regulatory compliance records, data governance policies, and traceable model approval logs. Governance and Compliance AI audit reviews documentation that demonstrates GDPR alignment, risk-based model classification, and defined retraining criteria. Governance and Compliance AI audit validates that roles, responsibilities, and decision authorities remain clearly assigned and consistently enforced.

Why is Governance and Compliance AI audit critical in modern AI oversight? Governance and Compliance AI audit is critical because emerging regulations require documented accountability, lifecycle transparency, and risk-based control enforcement. Governance and Compliance AI audit addresses AI model risk, regulatory exposure, and ethical oversight gaps that arise in high-impact deployments. Governance and Compliance AI audit strengthens defensibility during regulatory inspection and reinforces structured AI governance at enterprise scale.

2. Data Quality and Integrity

Data Quality and Integrity AI audit is a structured evaluation that assesses whether data used in artificial intelligence systems is accurate, complete, consistent, timely, and logically coherent across the full AI lifecycle. Data Quality and Integrity AI audit verifies that training, validation, and inference datasets meet defined quality thresholds. Data Quality and Integrity AI audit ensures that unreliable data does not degrade model performance or introduce hidden risk.

What dimensions does the Data Quality and Integrity AI audit evaluate? Data Quality and Integrity AI audit evaluates five core dimensions. The dimensions are accuracy, completeness, consistency, timeliness, and integrity. Accuracy testing verifies correct labeling and value precision within datasets. Completeness testing verifies that required attributes are present and populated. Consistency testing verifies standardized formats and eliminates duplicate or conflicting records. Timeliness testing verifies that data freshness aligns with operational requirements. Integrity testing verifies logical relationships between data elements across systems.
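
Three of these dimensions lend themselves to simple automated checks. The sketch below covers completeness, consistency (approximated here as duplicate detection on a key field), and timeliness. Accuracy and integrity are left out because they typically need ground-truth labels or cross-system comparisons. The function names, field names, and the seven-day freshness window are illustrative assumptions.

```python
from datetime import datetime, timedelta


def completeness(records, required_fields):
    """Fraction of records in which every required field is present and non-empty."""
    if not records:
        return 0.0
    complete = sum(
        all(r.get(f) not in (None, "") for f in required_fields) for r in records
    )
    return complete / len(records)


def duplicate_count(records, key_field):
    """Number of records whose key was already seen (a basic consistency check)."""
    seen, duplicates = set(), 0
    for r in records:
        key = r.get(key_field)
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
    return duplicates


def timeliness(records, timestamp_field, now, max_age):
    """Fraction of records updated within `max_age` of `now`."""
    if not records:
        return 0.0
    fresh = sum(1 for r in records if now - r[timestamp_field] <= max_age)
    return fresh / len(records)


now = datetime(2024, 1, 10)
records = [
    {"id": 1, "label": "approved", "updated": datetime(2024, 1, 9)},
    {"id": 2, "label": "", "updated": datetime(2024, 1, 1)},
    {"id": 2, "label": "denied", "updated": datetime(2024, 1, 8)},
    {"id": 3, "label": "approved", "updated": datetime(2023, 12, 1)},
]
print("completeness:", completeness(records, ["id", "label"]))
print("duplicates:", duplicate_count(records, "id"))
print("timeliness:", timeliness(records, "updated", now, timedelta(days=7)))
```

Each score can then be compared with a documented quality threshold so that the result is recorded as audit evidence rather than an informal observation.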

How does Data Quality and Integrity AI audit differ from general data quality programs? Data Quality and Integrity AI audit differs from general data quality programs because it evaluates the impact of data quality on model training, feature engineering, inference stability, and fairness metrics. General data programs assess data fitness for reporting or operational use. Data Quality and Integrity AI audit evaluates how data quality directly influences probabilistic model behavior and output reliability. This lifecycle-specific focus aligns with AI audit best practices for artificial intelligence governance.

How does Data Quality and Integrity AI audit operate across the AI lifecycle? Data Quality and Integrity AI audit evaluates data at ingestion, feature engineering, model training, validation, and production inference stages. Ingestion review verifies source traceability and preprocessing logic. Feature engineering review verifies transformation integrity and variable construction accuracy. Training and validation review verifies representativeness and bias exposure. Inference review verifies that real-time data streams maintain defined quality thresholds.

Why is continuous monitoring essential in Data Quality and Integrity AI audit? Continuous monitoring is essential in Data Quality and Integrity AI audit because data drift, schema changes, and anomaly patterns directly affect model reliability. Continuous monitoring systems detect missing fields, statistical shifts, and unexpected value distributions. Automated alerts trigger an investigation before degraded data propagates through model outputs. Lifecycle monitoring preserves consistency and strengthens defensibility during audit review.
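
One common way to quantify a statistical shift between a training-time sample and a production sample is the Population Stability Index (PSI). The text above does not name a specific drift metric, so PSI is used here only as one possible choice. The sketch assumes a single numeric feature, equal-width bins derived from the baseline sample, and non-empty inputs; the bin count and the small `eps` floor are illustrative parameters.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a current sample.

    Bins are equal-width over the range of `expected`; values in `actual`
    that fall outside that range are clipped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [float(v) for v in range(100)]
shifted = [v + 50 for v in baseline]

print("PSI, identical data:", psi(baseline, baseline))
print("PSI, shifted data:  ", psi(baseline, shifted))
```

A PSI near zero indicates a stable distribution, and larger values indicate a stronger shift. The alerting cutoff is a policy decision: values such as 0.25 are sometimes quoted as a rule of thumb, but the audit team should document its own threshold.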

What governance relationships support Data Quality and Integrity AI audit? Data Quality and Integrity AI audit depends on documented data ownership, metadata lineage systems, access control policies, and formal data governance frameworks. Defined accountability ensures traceable responsibility for dataset updates and corrections. Metadata lineage verifies origin, transformation logic, and storage controls. Structured governance integration ensures that data integrity remains measurable, enforceable, and aligned with enterprise risk standards.

3. Fairness and Bias Mitigation

Fairness and Bias Mitigation AI audit is a structured evaluation that assesses whether an artificial intelligence system operates without unlawful discrimination and within defined ethical fairness standards. Fairness and Bias Mitigation AI audit examines both model outputs and development processes to verify equitable treatment across demographic and protected groups. Fairness and Bias Mitigation AI audit provides documented assurance that bias risk remains identified, measured, and controlled.

What does the Fairness and Bias Mitigation AI audit evaluate? Fairness and Bias Mitigation AI audit evaluates dataset representativeness, subgroup performance disparities, fairness metric thresholds, and remediation controls. Fairness and Bias Mitigation AI audit reviews whether training, validation, and testing datasets reflect demographic diversity and avoid historical imbalance. Fairness and Bias Mitigation AI audit measures statistical parity, error-rate distribution, and outcome consistency across defined population segments.

How does Fairness and Bias Mitigation AI audit address bias detection? Fairness and Bias Mitigation AI audit applies quantitative bias detection techniques to identify unequal treatment patterns. Fairness and Bias Mitigation AI audit performs disaggregated testing across subpopulations and compares false positives, false negatives, and accuracy differentials. Fairness and Bias Mitigation AI audit documents measurable disparities and records whether disparities exceed predefined tolerance levels.
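
Disaggregated testing of this kind can be sketched in a few lines for a binary classifier. The example below computes per-group selection rate, false positive rate, false negative rate, and accuracy, then derives a statistical parity difference between two groups. The function names, the toy labels, and the group names "A" and "B" are assumptions for illustration; production audits usually rely on a dedicated fairness library and much larger samples.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group confusion-matrix rates for binary labels (1 = positive)."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        key = ("t" if truth == pred else "f") + ("p" if pred == 1 else "n")
        counts[group][key] += 1

    report = {}
    for group, c in counts.items():
        n = sum(c.values())
        negatives = c["fp"] + c["tn"]
        positives = c["tp"] + c["fn"]
        report[group] = {
            "selection_rate": (c["tp"] + c["fp"]) / n,
            "fpr": c["fp"] / negatives if negatives else None,
            "fnr": c["fn"] / positives if positives else None,
            "accuracy": (c["tp"] + c["tn"]) / n,
        }
    return report


def statistical_parity_difference(report, group_a, group_b):
    """Difference in selection rates between two groups (0 means parity)."""
    return report[group_a]["selection_rate"] - report[group_b]["selection_rate"]


y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

report = group_rates(y_true, y_pred, groups)
for group, rates in report.items():
    print(group, rates)
print("statistical parity difference:", statistical_parity_difference(report, "A", "B"))
```

In this toy data both groups have the same accuracy (0.75), yet group A is selected at a rate of 0.75 against 0.25 for group B, and the errors fall on different sides: false positives for A, false negatives for B. This is why an audit compares error types per group instead of relying on overall accuracy, and then checks each gap against the predefined tolerance level.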

How does Fairness and Bias Mitigation AI audit enforce bias mitigation controls? Fairness and Bias Mitigation AI audit evaluates pre-processing, in-processing, and post-processing mitigation strategies designed to reduce discriminatory outcomes. Pre-processing review verifies dataset cleansing and rebalancing controls. In-processing review verifies fairness-aware model training constraints. Post-processing review verifies output calibration and threshold adjustment mechanisms. Mitigation validation ensures corrective action does not introduce secondary risk.

How does regulation influence Fairness and Bias Mitigation in an AI audit? Fairness and Bias Mitigation AI audit aligns with regulatory mandates that require active bias mitigation in high-risk AI systems. Regulatory frameworks mandate representative data usage, fairness testing, and documented oversight for sensitive decision domains. Fairness and Bias Mitigation AI audit verifies compliance with these fairness obligations through traceable documentation and measurable performance controls.

Why is continuous monitoring necessary in Fairness and Bias Mitigation AI audit? Continuous monitoring is necessary in Fairness and Bias Mitigation AI audit because fairness performance shifts after deployment due to data drift and contextual change. Fairness and Bias Mitigation AI audit reviews post-deployment performance metrics and emergent disparity indicators. Continuous oversight ensures corrective retraining or recalibration occurs before discriminatory patterns persist.

What governance structures support Fairness and Bias Mitigation AI audit? Fairness and Bias Mitigation AI audit depends on documented AI ethics policies, defined fairness KPIs, accountability roles, and independent review mechanisms. Governance structures assign responsibility for fairness testing, remediation approval, and compliance reporting. Structured oversight reinforces AI audit best practices by embedding fairness evaluation into formal enterprise risk management.

4. Security and Robustness

Security and Robustness AI audit is a structured, evidence-based evaluation that assesses whether an artificial intelligence system resists adversarial threats, protects sensitive data, and maintains stable performance across its lifecycle. Security and Robustness AI audit verifies that AI systems operate within defined security controls, risk thresholds, and resilience standards. Security and Robustness AI audit ensures that artificial intelligence deployments remain protected from manipulation, degradation, and unauthorized access.

What does the Security and Robustness AI audit evaluate? Security and Robustness AI audit evaluates data protection controls, model resilience mechanisms, deployment safeguards, and access management policies. Security and Robustness AI audit reviews encryption standards, authentication enforcement, role-based access control, and anomaly detection systems. Security and Robustness AI audit tests model stability under adversarial inputs and verifies incident response readiness within production environments.

How does Security and Robustness AI audit address adversarial threats? Security and Robustness AI audit identifies vulnerabilities to prompt injection, data poisoning, model inversion, and model extraction attacks. Security and Robustness AI audit conducts stress testing, red teaming simulations, and error-rate analysis across controlled attack scenarios. Security and Robustness AI audit documents exposure levels and verifies corrective controls before system deployment.
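
A very simple form of stress testing is to measure how often predictions change when inputs are perturbed slightly. The sketch below uses uniform random noise, which is a much weaker probe than targeted adversarial attacks or red teaming and should be read only as a baseline stability check. The `prediction_stability` helper, the toy `predict` function, and the noise level are all hypothetical.

```python
import random


def prediction_stability(predict, inputs, noise=0.05, trials=50, seed=0):
    """Fraction of randomly perturbed inputs whose prediction matches the original.

    `predict` takes a list of floats and returns a label. Each input is
    perturbed `trials` times with uniform noise in [-noise, +noise].
    """
    if not inputs:
        raise ValueError("inputs must not be empty")
    rng = random.Random(seed)  # fixed seed keeps the audit run reproducible
    stable = 0
    total = 0
    for x in inputs:
        base = predict(x)
        for _ in range(trials):
            perturbed = [v + rng.uniform(-noise, noise) for v in x]
            stable += predict(perturbed) == base
            total += 1
    return stable / total


def predict(x):
    """Toy stand-in for a deployed model: a fixed linear decision rule."""
    return int(sum(x) > 1.0)


far_from_boundary = [[0.0, 0.0], [2.0, 2.0]]
near_boundary = [[0.5, 0.5]]

print("stability, far from boundary:", prediction_stability(predict, far_from_boundary))
print("stability, near boundary:    ", prediction_stability(predict, near_boundary))
```

Inputs far from the decision boundary should keep their predictions under small noise, while inputs close to it flip more often. Recording the stability score alongside the noise level and seed gives the audit a repeatable exposure measurement.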

How does Security and Robustness AI audit ensure lifecycle protection? Security and Robustness AI audit evaluates controls at data ingestion, model training, validation, deployment, and post-deployment monitoring stages. Ingestion review verifies secure data acquisition and retention controls. Training review verifies model integrity and robustness thresholds. Deployment review verifies monitoring dashboards, incident logging systems, and conformity assessments aligned with regulatory frameworks. Lifecycle coverage strengthens AI audit best practices through continuous risk validation.

How does regulation influence Security and Robustness AI audit? Security and Robustness AI audit aligns with global risk management frameworks that mandate structured AI oversight. Frameworks (NIST AI Risk Management Framework 2023, EU Artificial Intelligence Act 2024, NAIC Model Bulletin adoption across jurisdictions) establish formal expectations for AI risk evaluation. Security and Robustness AI audit verifies compliance through documented safeguards, measurable security thresholds, and traceable governance records.

Why is continuous monitoring essential in the Security and Robustness AI audit? Continuous monitoring is essential in Security and Robustness AI audit because AI systems evolve and interact with dynamic operational environments. Continuous monitoring detects model drift, abnormal behavior patterns, and emerging threat vectors. Continuous monitoring reduces exposure to undetected exploitation and preserves operational resilience throughout production use.

What governance dependencies support the Security and Robustness AI audit? Security and Robustness AI audit depends on formal risk frameworks, skilled technical oversight, and structured control documentation. Governance alignment integrates cybersecurity standards (COBIT, COSO ERM, NIST CSF 2.0, OWASP Top 10 for LLM Applications) into AI lifecycle controls. Structured dependency mapping ensures that AI security controls remain measurable, enforceable, and aligned with enterprise risk management objectives.

5. Explainability and Transparency

Explainability and Transparency AI audit is a structured evaluation that assesses whether artificial intelligence decisions are understandable, traceable, and accountable across the AI lifecycle. Explainability and Transparency AI audit verifies that model logic, feature influence, and decision pathways are documented and reviewable. Explainability and Transparency AI audit ensures that AI outputs can be interpreted by stakeholders and defended under regulatory scrutiny.

What does an Explainability and Transparency AI audit evaluate? Explainability and Transparency AI audit evaluates model interpretability methods, decision justification records, data traceability controls, and governance documentation. Explainability and Transparency AI audit reviews feature attribution techniques (LIME, SHAP) and confirms that influence weights and decision factors remain measurable. Explainability and Transparency AI audit verifies that system outputs can be traced back to input variables and documented development processes.
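As a rough illustration of what "measurable feature influence" can look like, the sketch below uses permutation importance, a simple model-agnostic technique that stands in here for LIME or SHAP (it is not either of them). The `toy_model`, the two-feature rows, and the function name are hypothetical and exist only for this example.

```python
import random

def permutation_importance(predict, rows, labels, n_features, seed=0):
    """Return the accuracy drop per feature when that feature's column is shuffled."""
    rng = random.Random(seed)

    def accuracy(data):
        return sum(predict(r) == y for r, y in zip(data, labels)) / len(labels)

    baseline = accuracy(rows)
    drops = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        drops.append(baseline - accuracy(shuffled))
    return drops

# Hypothetical toy model: the decision depends only on feature 0
def toy_model(row):
    return int(row[0] > 0.5)

rows = [(i / 10, (i * 7) % 10 / 10) for i in range(10)]
labels = [toy_model(r) for r in rows]
importances = permutation_importance(toy_model, rows, labels, n_features=2)
# Feature 1 is ignored by the model, so shuffling it cannot change accuracy.
```

An auditor could record such per-feature scores alongside the model version so that influence weights stay comparable between releases.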

How does Explainability and Transparency AI audit address black-box risk? Explainability and Transparency AI audit reduces black-box risk by requiring documented explanation techniques that clarify how predictions are generated. Explainability and Transparency AI audit inspects local and global explanation reports, feature importance metrics, and model documentation artifacts. Explainability and Transparency AI audit ensures that complex neural network outputs remain interpretable within defined reporting standards.

How does Explainability and Transparency AI audit verify decision justification? Explainability and Transparency AI audit verifies that each automated decision includes traceable reasoning aligned with defined logic and input data. Explainability and Transparency AI audit confirms that outputs contain documented causal drivers rather than unexplained probabilistic results. Decision justification analysis strengthens accountability in finance, healthcare, hiring, and public administration systems.

How does regulation influence Explainability and Transparency in AI audit? Explainability and Transparency AI audit aligns with regulatory frameworks that mandate disclosure, traceability, and human oversight for high-risk AI systems. Frameworks (EU AI Act 2024, NIST AI Risk Management Framework 2023, ISO/IEC 42001:2023) require documented explainability and accountable governance. Explainability and Transparency AI audit validates that documentation meets these compliance expectations through measurable evidence.

Why is stakeholder comprehension central to Explainability and Transparency AI audit? Stakeholder comprehension is central to Explainability and Transparency AI audit because AI decisions must be understandable to technical teams, regulators, and affected individuals. Explainability and Transparency AI audit evaluates whether explanations are formatted in human-readable language without obscuring technical integrity. Structured explanation strengthens trust and reinforces AI audit best practices within enterprise governance.

What governance structures support Explainability and Transparency AI audit? Explainability and Transparency AI audit depends on documented model development records, transparency reports, defined accountability roles, and structured review committees. Governance integration ensures traceable approval, version control, and ongoing monitoring of explanation artifacts. Formal oversight embeds transparency evaluation into enterprise AI risk management processes.

6. Post-Deployment Monitoring

Post-Deployment Monitoring AI audit is a continuous evaluation process that assesses whether an artificial intelligence system maintains accuracy, fairness, security, and compliance after production release. Post-Deployment Monitoring AI audit verifies real-time performance against predefined thresholds and governance controls. Post-Deployment Monitoring AI audit ensures that deployed AI systems remain stable under changing data conditions and operational environments.

What does the Post-Deployment Monitoring AI audit evaluate? Post-Deployment Monitoring AI audit evaluates performance metrics, drift indicators, anomaly signals, compliance status, and incident response readiness in live environments. Post-Deployment Monitoring AI audit tracks key indicators (accuracy, precision, recall, F1 score, latency, fairness metrics) across production data streams. Post-Deployment Monitoring AI audit verifies that alerts trigger structured investigation and corrective action workflows.
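The indicators named above can be computed directly from labeled production samples. The following is a minimal sketch for a binary classifier; the `thresholds` values are assumptions chosen for illustration, not recommended limits.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

def threshold_breaches(metrics, thresholds):
    """Return the names of metrics that fall below their minimum threshold."""
    return [name for name, minimum in thresholds.items() if metrics[name] < minimum]

y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)

# Hypothetical minimums; a real audit would take these from the governance policy
thresholds = {"accuracy": 0.8, "recall": 0.7}
breaches = threshold_breaches(metrics, thresholds)
```

A non-empty `breaches` list is the kind of signal that would open an investigation workflow.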

How does Post-Deployment Monitoring AI audit detect drift and anomalies? Post-Deployment Monitoring AI audit detects data drift, concept drift, and abnormal output patterns through statistical monitoring and threshold-based alerts. Post-Deployment Monitoring AI audit compares live input distributions against training baselines to identify deviation. Post-Deployment Monitoring AI audit flags unexpected categorical variables, out-of-range numerical values, and prediction instability before degradation propagates.
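One common way to compare live inputs against a training baseline is the Population Stability Index (PSI). The sketch below is an assumption about how such a check might be implemented, not a method prescribed by any framework named in this article; the bin edges, sample values, and the 0.2 alert level (a frequently cited rule of thumb) are illustrative.

```python
import math

def population_stability_index(baseline, live, bin_edges, eps=1e-6):
    """PSI between two numeric samples using shared bin edges."""
    def proportions(values):
        counts = [0] * (len(bin_edges) + 1)
        for v in values:
            counts[sum(v >= edge for edge in bin_edges)] += 1
        # Floor empty bins at eps so the logarithm stays defined
        return [max(c / len(values), eps) for c in counts]

    base_p, live_p = proportions(baseline), proportions(live)
    return sum((lp - bp) * math.log(lp / bp) for bp, lp in zip(base_p, live_p))

def unexpected_categories(baseline_values, live_values):
    """Categories seen in production that never appeared in training data."""
    return sorted(set(live_values) - set(baseline_values))

def out_of_range(values, low, high):
    """Numeric values outside the range observed during training."""
    return [v for v in values if v < low or v > high]

training = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
production = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 0.9]
psi = population_stability_index(training, production, bin_edges=[0.25, 0.5, 0.75])
drift_alert = psi > 0.2  # illustrative alert level, not a mandated threshold
new_channels = unexpected_categories(["web", "store"], ["web", "app", "store"])
```

Identical samples give a PSI of zero, so the score grows only as the live distribution moves away from the baseline.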

How does Post-Deployment Monitoring AI audit differ from pre-deployment validation? Post-Deployment Monitoring AI audit differs from pre-deployment validation because it operates continuously instead of performing a one-time release check. Pre-deployment validation tests model performance in controlled environments. Post-Deployment Monitoring AI audit evaluates model behavior under real-world usage, variable inputs, and operational stress conditions. Continuous oversight strengthens AI audit best practices by extending lifecycle accountability.

What regulatory and governance frameworks influence the Post-Deployment Monitoring AI audit? Post-Deployment Monitoring AI audit aligns with AI governance frameworks that call for ongoing oversight and lifecycle risk management. Framework guidance (NIST AI Risk Management Framework, EU Artificial Intelligence Act requirements) directs organizations to maintain traceable logs, compliance controls, and documented monitoring procedures. Post-Deployment Monitoring AI audit validates that deployed systems operate within defined legal and ethical constraints.

Why is continuous monitoring critical in Post-Deployment Monitoring AI audit? Continuous monitoring is critical in Post-Deployment Monitoring AI audit because AI systems evolve and interact with dynamic environments that introduce new risk factors. Continuous monitoring reduces financial loss, regulatory exposure, and reputational damage linked to unnoticed performance failure. Structured monitoring preserves operational reliability and ensures sustained governance compliance across the AI lifecycle.

What governance dependencies support Post-Deployment Monitoring AI audit? Post-Deployment Monitoring AI audit depends on defined escalation procedures, structured logging systems, anomaly detection infrastructure, and documented review cycles. Governance integration assigns accountability for incident review and remediation approval. Structured oversight ensures that monitoring outputs translate into measurable corrective action and enforceable compliance controls.

What Is the 5-Stage AI Audit Checklist?

The 5-Stage AI audit checklist is a structured framework that divides an AI audit into five sequential stages, from scope definition through remediation. The AI audit checklist defines sequential control gates that standardize lifecycle oversight. The AI audit checklist ensures consistent documentation, measurable validation, and enforceable corrective action across artificial intelligence systems.

The 5 stages of the AI audit checklist are listed below.

- Preparation and Scope Definition.

- Technical Evaluation of Models and Data.

- Risk and Compliance Assessment.

- Operational Review and Validation.

- Reporting and Remediation.

1. Preparation and Scope Definition

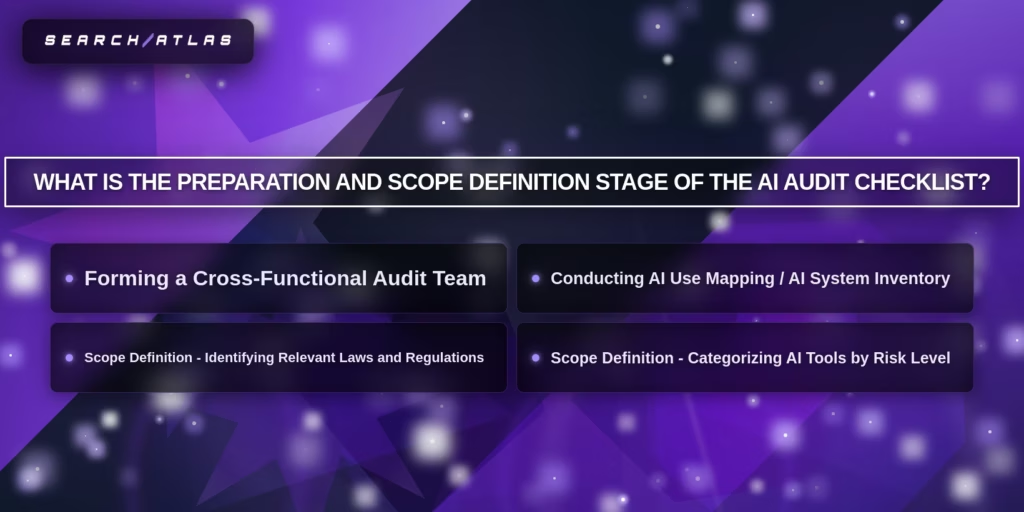

The Preparation and Scope Definition stage of the AI audit checklist is the foundational phase that defines audit boundaries, objectives, risk categories, and accountability structures before technical evaluation begins. Preparation and Scope Definition establishes which AI systems fall within audit scope and determines applicable regulatory exposure. Preparation and Scope Definition aligns audit criteria with organizational strategy, governance controls, and legal obligations.

What does Preparation and Scope Definition establish at the beginning of an AI audit? Preparation and Scope Definition establishes system inventory, stakeholder roles, regulatory mapping, and risk classification thresholds. Preparation and Scope Definition requires a documented inventory of AI models, automated decision tools, machine learning pipelines, and generative systems in production. Preparation and Scope Definition assigns oversight responsibility across compliance, legal, IT, and data science functions to ensure structured governance.

How does Preparation and Scope Definition structure risk prioritization? Preparation and Scope Definition categorizes AI systems into defined risk tiers based on impact level, regulatory exposure, and data sensitivity. High-risk systems receive immediate evaluation priority due to potential legal and societal impact. Medium-risk and low-risk systems follow proportional review intensity. Risk-based categorization increases audit efficiency and aligns with the AI audit checklist methodology.
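A system inventory with risk tiers can be kept as simple structured records. The sketch below assumes a hypothetical 1 to 3 scoring scale for each factor and assigns the tier from the worst score; real tiering rules would come from the organization's own policy and applicable regulation.

```python
from dataclasses import dataclass

@dataclass
class AISystem:
    name: str
    impact: int               # 1 (low) to 3 (high), hypothetical scale
    regulatory_exposure: int  # 1 (low) to 3 (high), hypothetical scale
    data_sensitivity: int     # 1 (low) to 3 (high), hypothetical scale

def risk_tier(system):
    """Assign the tier from the highest of the three factor scores."""
    worst = max(system.impact, system.regulatory_exposure, system.data_sensitivity)
    return {3: "high", 2: "medium"}.get(worst, "low")

def audit_order(systems):
    """Order the inventory so high-risk systems are evaluated first."""
    rank = {"high": 0, "medium": 1, "low": 2}
    return sorted(systems, key=lambda s: rank[risk_tier(s)])

# Hypothetical inventory entries
inventory = [
    AISystem("faq-chatbot", impact=1, regulatory_exposure=1, data_sensitivity=1),
    AISystem("resume-screener", impact=3, regulatory_exposure=3, data_sensitivity=2),
    AISystem("demand-forecast", impact=2, regulatory_exposure=1, data_sensitivity=1),
]
ordered_names = [s.name for s in audit_order(inventory)]
```

Taking the maximum score is a deliberately conservative choice: one high-scoring factor is enough to place a system in the high tier.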

How does Preparation and Scope Definition address regulatory alignment? Preparation and Scope Definition identifies the applicable laws, standards, and regulatory frameworks governing AI system operation. Regulatory mapping includes jurisdictional AI regulations, sector-specific compliance requirements, and international standards (ISO/IEC 42001, EU AI Act, local bias audit mandates). Documented regulatory alignment ensures subsequent audit stages apply measurable compliance criteria.

Why is cross-functional collaboration required during Preparation and Scope Definition? Preparation and Scope Definition requires cross-functional collaboration to integrate legal, technical, operational, and compliance perspectives. A structured audit team includes representatives from compliance, legal, IT security, HR, and data science. Cross-functional participation reduces oversight gaps and strengthens governance validation before technical testing begins.

How does Preparation and Scope Definition improve audit efficiency? Preparation and Scope Definition improves audit efficiency by defining clear objectives, measurable criteria, and prioritized system coverage before execution. Clear scope boundaries prevent redundant testing and fragmented documentation. Structured planning reduces execution delays and strengthens alignment with AI audit checklist best practices across the full audit lifecycle.

2. Technical Evaluation of Models and Data

The Technical Evaluation of Models and Data stage of the AI audit checklist is the structured phase that rigorously tests dataset integrity, model architecture, validation logic, and measurable performance thresholds before or during production deployment. The Technical Evaluation of Models and Data stage verifies that models perform within defined accuracy, robustness, fairness, and reproducibility limits. The Technical Evaluation of Models and Data stage serves as the analytical core of the AI audit checklist, applying quantifiable technical controls.

What does the Technical Evaluation of Models and Data stage evaluate at the data level? The Technical Evaluation of Models and Data stage evaluates dataset accuracy, completeness, representativeness, lineage, and preprocessing controls. Data examination verifies source traceability, labeling consistency, distribution balance, and absence of systematic distortion. Data validation confirms that training, validation, and test datasets reflect production conditions and defined population segments. Structured documentation ensures traceable data governance aligned with the AI audit checklist methodology.

What does the Technical Evaluation of Models and Data stage evaluate at the model level? The Technical Evaluation of Models and Data stage evaluates model architecture, feature engineering logic, optimization procedures, and validation design. Model review verifies algorithm selection, hyperparameter configuration, retraining frequency, and version control discipline. Model validation confirms performance testing on held-out datasets that mirror operational conditions. Documentation review ensures reproducibility and lifecycle traceability.

How does the Technical Evaluation of Models and Data stage measure model performance? The Technical Evaluation of Models and Data stage measures performance using defined quantitative metrics and stress-testing protocols. Performance evaluation applies metrics (accuracy, precision, recall, F1 score, and latency thresholds) and compares results against predefined benchmarks. Robustness testing evaluates system stability under adversarial inputs, edge cases, and distribution shifts. Continuous metric monitoring detects degradation before customer impact occurs.

How does the Technical Evaluation of Models and Data stage identify bias and failure patterns? The Technical Evaluation of Models and Data stage identifies bias and failure patterns through subgroup testing and structured error analysis. Bias analysis defines protected groups and measures disparity across false positive, false negative, and outcome distributions. Failure analysis documents edge-case errors and systematic deviations from expected behavior. Bias mitigation steps and retraining criteria remain formally recorded to reinforce AI audit checklist standards.
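Subgroup testing of this kind can be sketched as a per-group tally of false positives and false negatives. The record layout `(group, y_true, y_pred)` and the group labels below are assumptions made for the example.

```python
from collections import defaultdict

def subgroup_error_rates(records):
    """Return false positive and false negative rates per group.

    records: iterable of (group, y_true, y_pred) with binary labels.
    """
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "neg": 0, "pos": 0})
    for group, y_true, y_pred in records:
        c = counts[group]
        if y_true == 1:
            c["pos"] += 1
            c["fn"] += int(y_pred == 0)
        else:
            c["neg"] += 1
            c["fp"] += int(y_pred == 1)
    return {
        g: {
            "fpr": c["fp"] / c["neg"] if c["neg"] else 0.0,
            "fnr": c["fn"] / c["pos"] if c["pos"] else 0.0,
        }
        for g, c in counts.items()
    }

# Hypothetical evaluation records for two groups
records = [
    ("A", 1, 1), ("A", 1, 0), ("A", 0, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 1), ("B", 0, 1), ("B", 0, 0),
]
rates = subgroup_error_rates(records)
fpr_gap = abs(rates["A"]["fpr"] - rates["B"]["fpr"])
```

In this toy data, group A absorbs the false negatives and group B the false positives, a pattern that a single aggregate accuracy figure would hide.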

Why is documentation critical in the Technical Evaluation of Models and Data stage? Documentation is critical in the Technical Evaluation of Models and Data stage because measurable validation requires traceable evidence of testing procedures and threshold compliance. The stage depends on comprehensive model documentation, data governance records, and performance benchmarks. Structured documentation transforms technical evaluation into defensible audit evidence and supports regulatory review readiness.

3. Risk and Compliance Assessment

The Risk and Compliance Assessment stage of the AI audit checklist is the structured evaluation phase that identifies, classifies, and documents legal, ethical, operational, and security risks associated with an AI system. The Risk and Compliance Assessment stage verifies alignment with applicable regulatory frameworks and internal governance controls. The Risk and Compliance Assessment stage produces defensible documentation that confirms risk awareness and compliance enforcement.

What does the Risk and Compliance Assessment stage evaluate? The Risk and Compliance Assessment stage evaluates safety exposure, fairness risk, data protection compliance, operational impact, and regulatory adherence. The Risk and Compliance Assessment stage reviews model documentation, system architecture, data flows, and deployment context. The Risk and Compliance Assessment stage ensures that risk categories are formally defined and mapped to measurable control requirements within the AI audit checklist.

How does the Risk and Compliance Assessment stage structure documentation? The Risk and Compliance Assessment stage requires formal documentation artifacts that describe the system’s purpose, design logic, dataset origin, and intended operational use. Model Cards document training methodology, validation design, bias considerations, and redress mechanisms. System Maps document relationships between model, application environment, and human decision processes. Structured documentation strengthens traceability and reinforces AI audit checklist accountability standards.

How does the Risk and Compliance Assessment stage identify bias and ethical exposure? The Risk and Compliance Assessment stage identifies moments and sources of bias across preprocessing, model training, and output generation stages. Bias analysis evaluates demographic parity, disparate impact, and subgroup performance variance. The Risk and Compliance Assessment stage documents mitigation actions and defines acceptable fairness thresholds. Ethical evaluation extends beyond technical testing to assess real-world societal impact.
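Demographic parity and disparate impact both derive from per-group selection rates. The sketch below assumes binary decisions where 1 is the favorable outcome; the group names and the 0.8 cutoff (the "four-fifths" rule of thumb sometimes used in employment contexts) are illustrative, and the acceptable threshold remains a policy decision.

```python
def selection_rates(outcomes):
    """outcomes: dict mapping group name to a list of binary decisions (1 = favorable)."""
    return {group: sum(d) / len(d) for group, d in outcomes.items()}

def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())

def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Hypothetical decisions for two groups
outcomes = {
    "group_a": [1, 1, 1, 0, 1],
    "group_b": [1, 0, 0, 1, 0],
}
rates = selection_rates(outcomes)
parity_gap = demographic_parity_difference(rates)
impact_ratio = disparate_impact_ratio(rates)
below_four_fifths = impact_ratio < 0.8  # illustrative cutoff, not a legal determination
```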

How does the Risk and Compliance Assessment stage align with regulatory frameworks? The Risk and Compliance Assessment stage aligns AI systems with regulatory frameworks that mandate documented oversight and lifecycle accountability. Regulatory mapping covers requirements defined in frameworks (EU AI Act, NIST AI Risk Management Framework, ISO/IEC 42001, GDPR). The Risk and Compliance Assessment stage verifies that risk controls, audit trails, and governance records meet formal compliance standards.

Why is the Risk and Compliance Assessment stage iterative? The Risk and Compliance Assessment stage operates as an iterative process because AI risk exposure evolves with deployment context, regulatory updates, and system modifications. Iterative review cycles update risk classification, bias evaluation results, and compliance status. Structured iteration strengthens AI audit checklist resilience and prevents outdated risk assumptions from persisting in production systems.

What governance dependencies support the Risk and Compliance Assessment stage? The Risk and Compliance Assessment stage depends on cross-functional coordination, documented data traceability, and clearly defined accountability roles. Security teams, legal counsel, compliance officers, and data scientists contribute domain-specific evaluation inputs. Governance integration ensures that risk findings translate into enforceable corrective actions and sustained compliance verification.

4. Operational Review and Validation

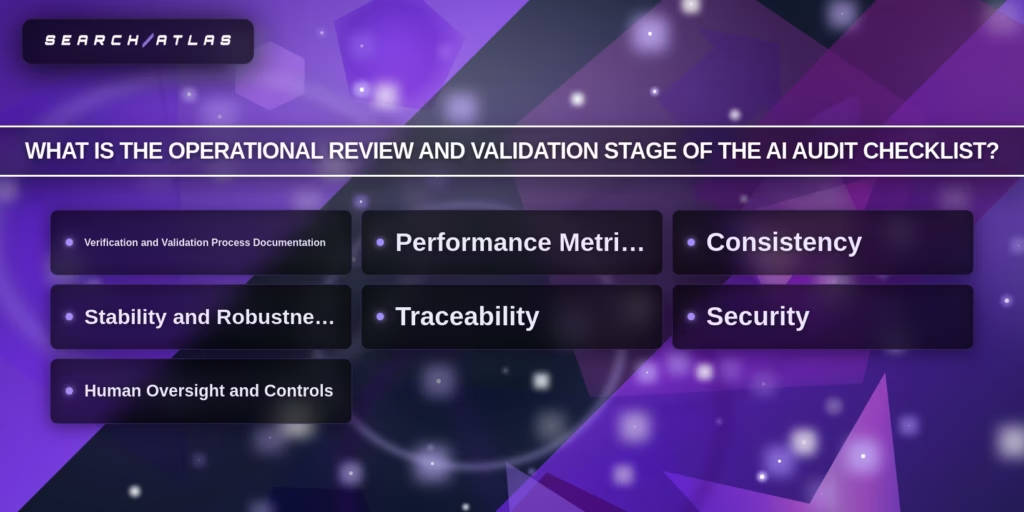

The Operational Review and Validation stage of the AI audit checklist is the structured post-deployment phase that verifies sustained performance, compliance, stability, and control effectiveness in live environments. The Operational Review and Validation stage evaluates how AI systems behave under real-world usage rather than controlled testing conditions. The Operational Review and Validation stage ensures that deployed models remain aligned with predefined risk thresholds and governance standards.

What does the Operational Review and Validation stage evaluate in production systems? The Operational Review and Validation stage evaluates real-time performance metrics, drift indicators, validation documentation, security controls, and human oversight mechanisms. Performance monitoring tracks defined metrics (accuracy, precision, recall, sensitivity, false positive rate, false negative rate). Drift monitoring verifies statistical stability across incoming data streams. Validation documentation confirms traceable testing protocols and measurable performance benchmarks within the AI audit checklist framework.

How does the Operational Review and Validation stage verify consistency and stability? The Operational Review and Validation stage verifies consistency by comparing current outputs against expected thresholds and historical baselines. Deviation thresholds define acceptable variance limits. Stability analysis identifies abnormal behavior under changing data distributions and environmental conditions. Robustness verification documents whether retraining, recalibration, or escalation procedures activate when thresholds are exceeded.
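A deviation check against a historical baseline might look like the sketch below. The `warn` and `critical` limits and the action names are hypothetical placeholders for whatever the audit's deviation thresholds and escalation procedures define.

```python
from statistics import mean

def stability_action(history, current, warn=0.02, critical=0.05):
    """Compare a current metric value with the mean of its historical values.

    warn and critical are hypothetical absolute deviation limits.
    """
    deviation = abs(current - mean(history))
    if deviation > critical:
        return "escalate"
    if deviation > warn:
        return "recalibrate"
    return "within_limits"

# Hypothetical weekly accuracy readings for one model
accuracy_history = [0.91, 0.90, 0.92, 0.91]
actions = [stability_action(accuracy_history, value) for value in (0.905, 0.88, 0.80)]
```

Logging the returned action next to the metric reading gives auditors evidence that the threshold actually triggered a response.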

How does the Operational Review and Validation stage address traceability and version control? The Operational Review and Validation stage requires traceable version control for datasets, model code, configuration files, and deployment artifacts. Log files record system behavior, decision outputs, and monitoring alerts. Version history documents retraining cycles, parameter updates, and architectural changes. Structured traceability strengthens defensibility and reinforces AI audit checklist accountability standards.
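One lightweight way to make artifact versions verifiable is to store a content hash for each file alongside the model version. The sketch below uses SHA-256 from the Python standard library; the artifact names, contents, and record layout are hypothetical.

```python
import hashlib
import json

def artifact_fingerprint(content: bytes) -> str:
    """Return the SHA-256 hex digest of an artifact's bytes."""
    return hashlib.sha256(content).hexdigest()

def version_record(model_version: str, artifacts: dict) -> str:
    """Serialize a deterministic JSON record linking a version to artifact hashes."""
    record = {
        "model_version": model_version,
        "artifacts": {
            name: artifact_fingerprint(data) for name, data in artifacts.items()
        },
    }
    return json.dumps(record, sort_keys=True)

# Hypothetical artifacts: only the configuration changes between versions
record_v1 = version_record(
    "1.0.0", {"config.yaml": b"threshold: 0.5", "train.csv": b"a,b\n1,2\n"}
)
record_v2 = version_record(
    "1.0.1", {"config.yaml": b"threshold: 0.6", "train.csv": b"a,b\n1,2\n"}
)
```

Comparing the two records shows which artifact changed between versions without storing the artifacts themselves in the audit log.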

How does the Operational Review and Validation stage incorporate security and compliance controls? The Operational Review and Validation stage integrates security verification and regulatory alignment into ongoing production monitoring. Security evaluation reviews encryption enforcement, access restrictions, and anomaly detection alerts. Compliance monitoring verifies adherence to governance frameworks and sector-specific regulations in live operational contexts.

Why is human oversight critical in the Operational Review and Validation stage? Human oversight is critical in the Operational Review and Validation stage because automated systems require controlled intervention thresholds and escalation protocols. Human-in-the-loop checkpoints review high-impact decisions and flagged anomalies. Defined intervention criteria prevent uncontrolled automation drift and maintain governance compliance.
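Intervention criteria can be expressed as an explicit routing rule. The sketch below is one possible shape for such a rule; the `min_confidence` value and the impact labels are assumptions rather than standard settings.

```python
def needs_human_review(confidence, impact, anomaly_flagged, min_confidence=0.9):
    """Route a decision to a human reviewer when any intervention criterion is met."""
    return impact == "high" or anomaly_flagged or confidence < min_confidence

# Hypothetical decisions: (model confidence, impact level, anomaly flag)
decisions = [
    (0.97, "low", False),   # confident, low impact, no anomaly
    (0.97, "high", False),  # high-impact decisions always get a reviewer
    (0.62, "low", False),   # low confidence triggers review
    (0.99, "low", True),    # flagged anomaly triggers review
]
routing = [needs_human_review(c, i, a) for c, i, a in decisions]
```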

How does the Operational Review and Validation stage differ from pre-deployment validation? The Operational Review and Validation stage differs from pre-deployment validation because it operates continuously and evaluates adaptive system behavior under live conditions. Pre-deployment validation confirms readiness before release. Operational Review and Validation verifies resilience, fairness, stability, and regulatory alignment during active system operation. Continuous oversight reduces production failure risk and reinforces AI audit checklist lifecycle control.

5. Reporting and Remediation

The Reporting and Remediation stage of the AI audit checklist is the structured phase that documents audit findings, prioritizes identified risks, and implements corrective actions to restore compliance, fairness, security, and performance. The Reporting and Remediation stage transforms technical audit results into formal governance records and enforceable action plans. The Reporting and Remediation stage closes the AI audit checklist cycle by ensuring that validated issues receive measurable resolution.

What does the Reporting and Remediation stage document? The Reporting and Remediation stage documents compliance gaps, performance deviations, bias findings, security vulnerabilities, and operational weaknesses identified during the audit. Structured reports include executive summaries, risk severity ratings, metric deviations, and documented root causes. Documentation records version history, dataset changes, governance updates, and regulatory alignment status to create defensible audit evidence.

How does the Reporting and Remediation stage prioritize corrective actions? The Reporting and Remediation stage assigns severity levels, remediation deadlines, responsible owners, and measurable success criteria for each identified issue. High-risk compliance failures receive immediate escalation. Medium-risk operational issues follow structured remediation timelines. Defined ownership ensures accountability and reinforces AI audit checklist governance discipline.
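The assignment of severity, deadline, and owner can be captured in a small remediation plan structure. In the sketch below, the `SLA_DAYS` deadlines, the finding titles, and the owner names are hypothetical; actual timelines would be set by the organization's governance policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical remediation deadlines per severity level, in days
SLA_DAYS = {"high": 7, "medium": 30, "low": 90}
SEVERITY_RANK = {"high": 0, "medium": 1, "low": 2}

@dataclass
class Finding:
    title: str
    severity: str
    owner: str

def remediation_plan(findings, opened):
    """Sort findings by severity and attach a due date to each."""
    ordered = sorted(findings, key=lambda f: SEVERITY_RANK[f.severity])
    return [
        {
            "title": f.title,
            "severity": f.severity,
            "owner": f.owner,
            "due": opened + timedelta(days=SLA_DAYS[f.severity]),
        }
        for f in ordered
    ]

# Hypothetical audit findings
findings = [
    Finding("Missing model card", "medium", "data-science"),
    Finding("Unencrypted training data", "high", "security"),
]
plan = remediation_plan(findings, opened=date(2025, 1, 1))
```

Each entry carries an owner and a due date, so follow-up validation can check resolution against a concrete commitment.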

How does the Reporting and Remediation stage implement mitigation controls? The Reporting and Remediation stage implements mitigation controls that address bias exposure, model instability, security weaknesses, and documentation gaps. Mitigation actions include dataset correction, retraining protocols, threshold recalibration, access control strengthening, and policy updates. Auditors verify corrective implementation through follow-up validation and measurable outcome comparison.

How does documentation strengthen the Reporting and Remediation stage? Comprehensive documentation strengthens the Reporting and Remediation stage by preserving traceability, audit defensibility, and regulatory readiness. Version control systems track model updates, dataset revisions, and configuration adjustments. Centralized repositories store test results, compliance evidence, and remediation records. Structured recordkeeping aligns with governance standards (GDPR, ISO/IEC 42001, EU AI Act).

Why is cross-functional coordination required during Reporting and Remediation? Cross-functional coordination is required during Reporting and Remediation because corrective action spans technical, legal, compliance, and operational domains. Data science teams implement model corrections. Security teams enforce control updates. Legal and compliance teams verify regulatory alignment. Coordinated governance ensures that remediation actions produce measurable compliance restoration and sustained lifecycle control.

What Are the Best Practices for Conducting AI Audits?

Businesses conduct effective AI audits by aligning governance controls, data validation, and system evaluation with how AI models operate, make decisions, and evolve. AI audits assess reliability, compliance, and risk exposure across the full lifecycle. Strong audit practices increase trust, strengthen accountability, and ensure AI systems operate within defined regulatory and operational standards.

The 15 best practices for conducting AI audits are listed below.

- Integrate audit controls early in system design.

- Adopt a multidisciplinary and cross-functional approach.

- Ensure explainability and transparent decision logic.

- Maintain data traceability and quality validation.

- Implement automated testing and continuous monitoring.

- Standardize documentation and version control processes.

- Align audits with recognized regulatory frameworks.

- Conduct post-deployment reviews and continuous updates.

- Apply bias detection and ethical evaluation controls.

- Enforce privacy and security safeguards.

- Prioritize audits based on system risk levels.

- Define problem-first evaluation frameworks.

- Maintain human accountability for critical decisions.

- Execute adversarial testing and stress scenarios.

- Produce structured audit reports with clear remediation plans.

1. Integrate Audit Controls Early in System Design

Integrating audit controls early ensures that governance exists before deployment instead of reacting to failures later. Early-stage controls reduce unmanaged risk and create consistency across development and production environments. AI systems evolve quickly, which makes retroactive auditing ineffective without an initial structure. Organizations apply this by embedding validation checkpoints, compliance rules, and monitoring criteria during model design. A practical takeaway involves defining audit requirements before training begins to prevent gaps in accountability and traceability.

2. Adopt a Multidisciplinary and Cross-Functional Approach

Adopting a multidisciplinary approach ensures that audits reflect legal, technical, operational, and compliance perspectives. AI systems impact multiple domains, which creates blind spots without cross-functional oversight. Collaboration strengthens evaluation accuracy and improves decision traceability. Organizations apply this by involving legal teams, data scientists, security experts, and operations leaders in audit workflows. A practical takeaway involves structuring audit reviews as collaborative checkpoints that validate system behavior from multiple angles.

3. Ensure Explainability and Transparent Decision Logic

Ensuring explainability requires documenting how AI models generate outputs and decisions. Transparent systems increase trust because stakeholders understand how the results are formed. Lack of explainability reduces regulatory alignment and limits accountability. Organizations apply this by recording input features, model logic, and output reasoning pathways. A practical takeaway involves linking every output to a traceable decision process that auditors and stakeholders can review.

4. Maintain Data Traceability and Quality Validation

Maintaining data traceability ensures that every dataset has a clear origin, transformation history, and validation record. Data quality directly impacts model reliability, which makes traceability essential for accurate audits. Poor data introduces bias and performance issues that propagate through systems. Organizations apply this by tracking data lineage and validating preprocessing steps. A practical takeaway involves documenting every dataset source and transformation to ensure audit readiness.

5. Implement Automated Testing and Continuous Monitoring

Implementing automated testing ensures that AI systems meet defined performance thresholds consistently. Continuous monitoring detects drift, bias, and performance degradation over time. AI systems change after deployment, which requires ongoing evaluation. Organizations apply this by setting measurable benchmarks and automated alerts for deviations. A practical takeaway involves monitoring accuracy, fairness, and drift continuously to prevent unnoticed failures.

6. Standardize Documentation and Version Control Processes

Standardizing documentation ensures that every system change is recorded and traceable. Version control preserves system history, which strengthens audit defensibility and compliance readiness. AI systems evolve rapidly, which requires structured tracking. Organizations apply this by logging model updates, dataset changes, and configuration adjustments. A practical takeaway involves maintaining centralized records for all system versions and updates.

7. Align Audits With Recognized Regulatory Frameworks

Aligning audits with recognized frameworks ensures compliance with established standards and reduces regulatory risk. Frameworks provide structured guidance for evaluating AI systems consistently. Organizations apply this by mapping audit processes to recognized standards and guidelines (EU AI Act, NIST AI RMF, ISO/IEC 42001). A practical takeaway involves structuring audits around recognized frameworks to ensure consistency and defensibility.

8. Conduct Post-Deployment Reviews and Continuous Updates

Conducting post-deployment reviews ensures that AI systems perform as expected in real-world conditions. Continuous updates maintain compliance as systems evolve or regulations change. Static audits fail to capture ongoing risks. Organizations apply this by scheduling recurring evaluations and updating controls after retraining. A practical takeaway involves treating audits as continuous processes instead of one-time checks.

9. Apply Bias Detection and Ethical Evaluation Controls

Applying bias detection ensures that AI systems produce fair and equitable outcomes across different groups. Ethical evaluation strengthens trust and reduces discriminatory risk. Bias impacts system credibility and compliance. Organizations apply this by testing outputs against fairness metrics and demographic benchmarks. A practical takeaway involves measuring bias regularly and documenting mitigation strategies.

10. Enforce Privacy and Security Safeguards

Enforcing privacy and security safeguards protects sensitive data and prevents unauthorized access. AI systems process large datasets, which increases exposure risk. Strong safeguards reduce vulnerabilities and strengthen compliance. Organizations apply this by implementing encryption, access controls, and anomaly detection systems. A practical takeaway involves securing both training data and live system outputs.

11. Prioritize Audits Based on System Risk Levels

Prioritizing audits ensures that high-impact systems receive deeper evaluation and stricter controls. Not all AI systems carry the same risk, which requires structured prioritization. Organizations apply this by classifying systems based on impact severity and regulatory exposure. A practical takeaway involves allocating audit resources according to risk level for efficiency and effectiveness.

12. Define Problem-First Evaluation Frameworks

Defining problem-first evaluation ensures that audits measure what matters instead of generic performance metrics. Clear objectives guide meaningful evaluation. AI systems require context-specific validation. Organizations apply this by defining success criteria and testing scenarios before evaluation begins. A practical takeaway involves aligning audit metrics with real-world use cases.
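
Defining success criteria before evaluation begins can be sketched as a small declarative check. The metric names and thresholds here are hypothetical examples for an imagined loan-approval model; the point is that the criteria are fixed first and measured values are compared against them afterward.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    metric: str
    threshold: float
    higher_is_better: bool = True

    def passes(self, value):
        """Compare a measured value with the pre-agreed threshold."""
        if self.higher_is_better:
            return value >= self.threshold
        return value <= self.threshold


def evaluate(criteria, measured):
    """Return pass/fail per criterion; a missing measurement counts as a fail."""
    results = {}
    for criterion in criteria:
        value = measured.get(criterion.metric)
        results[criterion.metric] = value is not None and criterion.passes(value)
    return results


# Criteria agreed with stakeholders before testing (hypothetical values).
criteria = [
    Criterion("recall", 0.90),
    Criterion("false_positive_rate", 0.05, higher_is_better=False),
    Criterion("p95_latency_ms", 300, higher_is_better=False),
]

# Values observed during the audit; latency was never measured.
measured = {"recall": 0.93, "false_positive_rate": 0.07}
results = evaluate(criteria, measured)
print(results)
```

Treating a missing measurement as a failure is a deliberate design choice in this sketch: it keeps an unmeasured criterion from silently passing the audit.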

13. Maintain Human Accountability for Critical Decisions

Maintaining human accountability ensures that high-impact outputs have clear ownership and oversight. Automated systems require human judgment for ethical and strategic decisions. Organizations apply this by assigning responsibility for validation and approval processes. A practical takeaway involves defining decision ownership for every critical output.

14. Execute Adversarial Testing and Stress Scenarios

Executing adversarial testing exposes vulnerabilities by simulating hostile inputs and edge cases. Stress testing reveals weaknesses that standard evaluations miss. Organizations apply this by testing systems against manipulation attempts and unusual scenarios. A practical takeaway involves validating system resilience under extreme conditions.
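
A minimal sketch of this kind of test is shown below. The classifier is a deliberately naive stand-in (a hypothetical keyword filter), and the three perturbations are simple examples of manipulation attempts; a real audit would call the production model and use a much richer attack set.

```python
def toy_model(text):
    """Stand-in classifier (hypothetical): flags text that mentions 'refund'."""
    return "flag" if "refund" in text.lower() else "pass"


def perturbations(text):
    """Yield simple hostile variants of an input."""
    yield text.upper()             # case change
    yield text.replace("e", "3")   # character substitution
    yield " ".join(text)           # a space inserted between every character


def robustness_report(model, inputs):
    """Count how often a perturbed input changes the model's decision."""
    failures = []
    total = 0
    for text in inputs:
        expected = model(text)
        for variant in perturbations(text):
            total += 1
            if model(variant) != expected:
                failures.append((text, variant))
    flip_rate = len(failures) / total if total else 0.0
    return {"total": total, "failures": failures, "flip_rate": flip_rate}


report = robustness_report(
    toy_model, ["please refund my order", "where is my parcel"]
)
print(report["total"], len(report["failures"]), report["flip_rate"])
```

Here the case change is handled correctly, but the substitution and spacing variants both evade the filter, which is the kind of weakness a standard accuracy test would not reveal.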

15. Produce Structured Audit Reports With Clear Remediation Plans

Producing structured audit reports ensures that findings translate into actionable improvements. Clear documentation strengthens accountability and governance. Organizations apply this by compiling results, severity levels, and remediation steps into standardized reports. A practical takeaway involves closing every audit with documented actions and timelines for resolution.
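
A standardized report of this kind can be sketched as structured data. The field names, severity labels, owners, and dates below are assumptions made for illustration; the structure simply mirrors the elements named above (results, severity levels, remediation steps, and timelines).

```python
import json
from dataclasses import asdict, dataclass
from datetime import date

# Assumed severity scale, most urgent first.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    title: str
    severity: str
    remediation: str
    owner: str
    due: date


def build_report(system, findings):
    """Sort findings by severity, then due date, and return a serializable dict."""
    ordered = sorted(findings, key=lambda f: (SEVERITY_ORDER[f.severity], f.due))
    return {
        "system": system,
        "open_findings": len(ordered),
        "findings": [{**asdict(f), "due": f.due.isoformat()} for f in ordered],
    }


# Hypothetical findings for a hypothetical system.
findings = [
    Finding("Approval-rate gap between groups", "high",
            "Re-test fairness metrics after rebalancing training data",
            "model-risk-team", date(2025, 3, 31)),
    Finding("Training data lineage undocumented", "medium",
            "Record dataset sources and versions", "data-governance",
            date(2025, 4, 30)),
    Finding("Inference endpoint lacks access logging", "critical",
            "Enable request logging and review access roles", "platform-security",
            date(2025, 2, 28)),
]

report = build_report("credit-scoring-v2", findings)
print(json.dumps(report, indent=2))
```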

How Often Should Organizations Conduct AI Audits?

Organizations need to conduct comprehensive AI audits at least annually, with quarterly audits for high-risk or rapidly evolving AI systems, and continuous monitoring in production environments. Annual audits provide a structured lifecycle reassessment. Quarterly audits strengthen oversight in dynamic environments. Continuous monitoring detects drift, bias, and performance degradation in real time.

What factors determine AI audit frequency? AI audit frequency depends on system risk level, regulatory exposure, data sensitivity, operational complexity, and deployment velocity. High-risk AI systems that influence employment, credit decisions, healthcare outcomes, or public services require quarterly or continuous auditing. Lower-risk systems operating in stable environments can follow annual reviews. Risk-based scheduling makes the AI audit checklist more efficient and directs audit resources where they matter most.
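
The scheduling logic above can be sketched as a small rule. The domain labels, the 90-day approximation of a quarter, and the function names are assumptions for illustration; the rule only encodes the cadence described in this section (quarterly for high-risk or rapidly evolving systems, annual otherwise).

```python
from datetime import date, timedelta

# Domains the section treats as high-risk (labels are assumed identifiers).
HIGH_RISK_DOMAINS = {"employment", "credit", "healthcare", "public_services"}


def audit_interval_days(domain, rapidly_evolving):
    """Quarterly (about 90 days) for high-risk or fast-changing systems, else annual."""
    if domain in HIGH_RISK_DOMAINS or rapidly_evolving:
        return 90
    return 365


def next_audit_due(domain, rapidly_evolving, last_audit):
    """Return the date by which the next scheduled audit should occur."""
    return last_audit + timedelta(days=audit_interval_days(domain, rapidly_evolving))


last = date(2025, 1, 15)
print(next_audit_due("credit", False, last))           # 2025-04-15
print(next_audit_due("internal_search", False, last))  # 2026-01-15
```

Continuous monitoring in production runs alongside this schedule and is not replaced by it.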

What events trigger targeted AI audits outside scheduled reviews? Targeted AI audits are triggered by model updates, regulatory changes, drift detection alerts, compliance incidents, security breaches, and new AI deployments. Model retraining, architecture modification, or parameter adjustment requires immediate validation. Detected bias patterns or anomalous performance deviations require corrective audit review. Regulatory amendments mandate compliance reassessment.

Why is continuous AI auditing necessary in production systems? Continuous AI auditing is necessary because AI models evolve through retraining, environmental change, and data distribution shifts. Real-time monitoring tracks accuracy, fairness metrics, and operational stability. Continuous oversight prevents blind spots between formal audits and reduces exposure to unnoticed compliance failures.
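
Tracking data distribution shift can be sketched with a population stability index (PSI), one common way to compare a live feature distribution against its training baseline. The function name, bin count, and 0.25 alert threshold are illustrative assumptions; this section does not prescribe a specific drift metric.

```python
import math


def psi(expected, actual, bins=10):
    """Population stability index between a baseline sample and a live sample.

    Bins are equal-width over the baseline range; live values outside that
    range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: the live data has shifted toward higher values.
baseline = [i / 100 for i in range(100)]
live = [0.5 + i / 200 for i in range(100)]

ALERT_THRESHOLD = 0.25  # assumed; a commonly cited rule of thumb
score = psi(baseline, live)
print(round(score, 3), "drift alert" if score > ALERT_THRESHOLD else "stable")
```

A check like this would typically run on a schedule for each monitored feature and for the model's output scores, with alerts feeding the targeted audit process.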

What risks arise from infrequent AI audits? Infrequent AI audits increase the probability of undetected bias, performance degradation, regulatory non-compliance, security vulnerabilities, and financial loss. Delayed detection of control failures weakens governance accountability. Infrequent review cycles create operational blind spots and increase remediation costs. A structured audit cadence keeps risk from accumulating between reviews.

Do regulatory frameworks mandate specific AI audit intervals? Most regulatory frameworks do not mandate fixed AI audit intervals but require ongoing oversight and documented risk management. Frameworks emphasize continuous compliance monitoring instead of static scheduling. Organizations define cadence through risk-based assessment models aligned with AI audit checklist governance standards.

What Tools Support Effective AI Auditing?

Effective AI auditing is supported by AI audit platforms, AI compliance tools, embedded workflow accelerators, general AI analysis tools, and formal governance frameworks. These tools operationalize the AI audit checklist across planning, testing, monitoring, reporting, and remediation stages. Effective AI auditing requires traceability, explainability, regulatory mapping, and measurable risk detection.

What AI audit platforms support structured AI auditing? AI audit platforms support structured evaluation through automated analytics, risk segmentation, documentation generation, and lifecycle monitoring.

The primary AI audit platforms are listed below.

- Thomson Reuters CoCounsel Audit. A tool for research unification, document review, workpaper generation, and citation-linked audit documentation. CoCounsel Audit integrates regulatory references (FASB, GASB) and exports structured audit outputs.

- Thomson Reuters Audit Intelligence. A tool for intelligent risk segmentation and automated documentation generation. Audit Intelligence ingests ledger data and reduces sampling exposure through analytics-driven segmentation.

- DataSnipper AI. A tool for Excel-based AI audit testing and traceable document linkage. DataSnipper links outputs directly to source documents and applies extraction automation.

- Caseware (AiDA, Extractly, Validate). A tool for embedded AI planning, data extraction, validation checks, and audit trail preservation. Caseware maintains governance boundaries and structured traceability.

- CCH Axcess Audit. A tool for cloud-based audit workflow management and AI-powered document analysis. CCH Axcess integrates research databases with structured audit pipelines.

- Inflo. A tool for full-population transaction analysis and risk-based methodology execution. Inflo applies explainable AI within working paper environments.

- V7 Go. A tool for autonomous multi-step audit processes using multimodal AI reasoning. V7 Go processes structured and unstructured financial artifacts with citation-backed outputs.

- AuditBoard AI. A tool for automated risk documentation, control mapping, and enterprise-level audit summaries.

- Workiva AI. A tool for regulatory reporting, compliance documentation, and integrated system connectors (SAP, Oracle, NetSuite, Workday).

- Diligent HighBond (Diligent One Platform). A tool for board-level GRC integration, automated anomaly detection, and third-party risk monitoring.

- MindBridge AI. A tool for 100% transaction analysis using ensemble AI models with explainable risk scoring.

- XBert. A tool for continuous SME-focused transaction scanning through machine learning anomaly detection.

What AI compliance tools support AI auditing? AI compliance tools support AI auditing by mapping regulatory obligations to internal controls and automating compliance evidence generation. The primary AI compliance tools are listed below.

- Centraleyes. A tool for AI-powered risk register mapping and audit-ready report generation.

- Compliance.ai (Archer). A tool for automated regulatory monitoring and policy mapping.

- Kount (Equifax). A tool for fraud detection and watchlist screening.

- SAS Compliance Solutions (SAS Viya). A tool for cloud-based compliance analytics and risk modeling.

- S&P Global Essential Intelligence. A tool for AI-driven regulatory analytics and machine-readable compliance monitoring.

- IBM watsonx. A tool for generative compliance documentation and risk assessment automation.

- AuditOne EU AI Compliance Checker. A tool for structured self-assessment against the EU AI Act.

- Certa. A tool for third-party risk assessment and vendor compliance automation.

- Darktrace. A tool for AI-based anomaly detection and cybersecurity risk identification.

- Credo AI. A tool for centralized AI governance and policy alignment automation.

- Holistic AI. A tool for independent safety, fairness, and regulatory evaluation.

- Microsoft Purview. A tool for AI governance visibility and data lineage analysis.

- Theta Lake. A tool for voice, video, and chat compliance monitoring.

What embedded workflow accelerators enhance AI auditing? Embedded workflow accelerators enhance AI auditing by integrating AI directly into daily audit tools and documentation systems. The primary workflow accelerators are listed below.

- DataSnipper Advanced Extraction Suite. A tool for document extraction and Excel-native validation.

- DocuMine. A tool for large language model extraction from structured and unstructured content.

- TeamMate+ AI Editor. A tool for documentation clarity, summarization, and secure generative writing assistance.

What general AI tools support audit analysis and reporting? General AI tools support audit analysis by generating structured drafts, summarizing documentation, and analyzing internal datasets. The primary supporting technologies are listed below.

- ChatGPT (enterprise configuration). A tool for audit planning drafts, report summaries, and scope definition.

- Copilot (enterprise configuration). A tool for internal data analysis and documentation refinement.

- Private LLM instances. Tools for secure generative drafting with isolated training boundaries.

- Retrieval Augmented Generation (RAG). A technique for report generation linked to internal document repositories.

- Agentic AI workflows. An approach for automating multi-step audit sequences.

- SAP Signavio. A tool for continuous control monitoring and conformance checking.

- Automated monitoring systems. Tools for drift detection and anomaly alerting in production AI environments.

What governance frameworks guide AI auditing tool usage? Governance frameworks guide AI auditing tool usage by defining structured evaluation standards and risk management controls. The primary frameworks are listed below.

- COBIT. Framework for IT governance extended to AI oversight.

- COSO ERM. Framework for enterprise risk management integration.

- U.S. GAO AI Accountability Framework. Framework for fairness, data, performance, and monitoring evaluation.

- IIA Artificial Intelligence Auditing Framework. Framework for internal audit integration and lifecycle oversight.

- Singapore PDPC Model AI Governance Framework. Framework for responsible AI implementation.

- NIST AI Risk Management Framework. Framework for structured AI risk evaluation.

- ISO/IEC 42001. Framework for AI management system standardization.

- End-to-End Socio-Technical Algorithmic Audit (E2EST/AA). Methodology for real-world implementation evaluation.

Why do effective AI auditing tools require traceability and documentation controls? Effective AI auditing tools require traceability and documentation controls to ensure reproducibility, accountability, and regulatory defensibility. Structured logs, version control records, model documentation artifacts, and centralized repositories preserve audit integrity. Traceable documentation aligns tool usage with AI audit checklist governance standards and regulatory expectations.
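
One way to make structured logs tamper-evident is to chain each entry to the previous one with a hash. The sketch below is a minimal illustration under that assumption; the entry fields and function names are hypothetical, and a production system would also need secure storage, timestamps, and access control.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(event, prev_hash):
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append a JSON-serializable event, linking it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)})


def verify(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical audit trail for one model.
log = []
append_entry(log, {"action": "model_registered", "version": "1.0"})
append_entry(log, {"action": "fairness_test", "result": "pass"})
append_entry(log, {"action": "deployed", "environment": "production"})
print(verify(log))
```

Because each hash depends on the one before it, changing any earlier record invalidates every later entry, which supports the reproducibility and defensibility goals described above.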

What Are Common Challenges in AI Auditing?

Common challenges in AI auditing include unclear audit scope definition, a shortage of qualified AI auditors, data privacy and security risks, regulatory uncertainty, technical complexity, integration barriers, organizational cost constraints, client trust concerns, and resistance to change. These challenges affect governance consistency, technical validation reliability, and regulatory defensibility. AI auditing requires structured mitigation strategies aligned with the AI audit checklist framework.

What challenges arise in defining AI audit scope and assessment thresholds? AI auditing faces difficulty in defining what constitutes the primary audit object and determining measurable “good enough” thresholds. Audit objects vary between models, datasets, development processes, and governance systems. Threshold ambiguity complicates fairness, accuracy, and compliance pass criteria. Lack of standardized evaluation benchmarks increases inconsistency across audit programs.

What talent and credentialing challenges affect AI auditing? AI auditing faces a shortage of qualified professionals with expertise in machine learning, regulatory governance, cybersecurity, and ethics. Limited formal AI training across audit firms restricts technical evaluation capacity. Skill gaps increase audit duration and raise operational costs. Credential inconsistency weakens standardization and audit defensibility.

What data privacy and security challenges complicate AI auditing? AI auditing encounters heightened data privacy and cybersecurity risks due to large-scale sensitive data usage in AI systems. Generative AI systems process confidential financial, medical, and operational records. Exposure to data leakage, unauthorized access, and adversarial attacks increases audit complexity. Security vulnerability management remains a high-priority concern across firms.

What regulatory and ethical challenges impact AI auditing? AI auditing operates within evolving regulatory environments that lack fully harmonized standards. Regulatory ambiguity complicates the interpretation of compliance boundaries. Ethical obligations require bias detection, fairness documentation, and explainability controls. Continuous regulatory change increases audit adaptation demands.

What technological and integration challenges affect AI auditing? AI auditing requires integration of complex machine learning systems that differ from deterministic software architectures. Non-deterministic outputs complicate validation logic. Third-party foundation models reduce transparency and limit internal control visibility. Rapid technological evolution increases tool obsolescence risk.