Proximity vs relevance modeling explains how local SEO calculates ranking visibility by combining geographic distance, semantic intent alignment, and authority weighting inside localized search results. Local SEO is a ranking framework that prioritizes businesses within a defined geographic scope instead of global keyword markets. Local SEO differs from traditional SEO because traditional SEO ranks pages across national or global queries, while local SEO integrates location-based filtering directly into ranking logic.

What are the core elements that define how local ranking works? Local ranking works through three elements. The elements are proximity, relevance, and prominence. Proximity measures physical distance between the searcher and the business location. Relevance evaluates how accurately the business profile and website content match search intent. Prominence measures authority signals derived from reviews, backlinks, citations, and keyword prominence strength. Google determines local ranking relevance, distance, and prominence by combining these elements into a composite ranking score.

What is proximity modeling, and why does it matter in local ranking? Proximity modeling is the geographic filtering layer that calculates spatial eligibility before semantic ordering begins. Proximity modeling works by using latitude and longitude coordinates to measure the distance between the searcher and indexed businesses. Proximity modeling matters because closer entities receive higher eligibility weight, especially in Local Pack rankings and “near me” searches. Proximity modeling recalculates dynamically as user location changes, which makes proximity a continuously active ranking signal in mobile environments.

What is relevance modeling, and how does it influence local ranking? Relevance modeling is the probabilistic ranking layer that orders geographically eligible businesses according to semantic alignment with user intent. Relevance modeling transforms queries and documents into embeddings and calculates similarity scores between them. Relevance modeling influences ranking because intent alignment determines final ordering once proximity filtering completes. Proximity vs relevance modeling functions sequentially, where proximity filters candidates, and relevance ranks candidates. Prominence then adjusts ranking weight through authority scoring, which integrates reviews, citations, and backlink signals.

What is local SEO?

Local SEO is a specialized search engine optimization strategy that increases business visibility in unpaid, location-based search results within a defined geographic area. The definition of local SEO focuses on optimizing proximity signals, relevance signals, and prominence signals so search engines rank businesses for geographically modified queries.

What are the core components of local SEO? There are 3 core components of local SEO. These components are listed below.

- Google Business Profile (GBP). Google Business Profile is a verified business listing that connects a company to Google Maps and Google Search results. GBP verification affects Local Pack eligibility and map visibility.

- Local Pack. The Local Pack is a SERP feature that displays 3-4 businesses based on relevance, distance, and prominence. The Local Pack reflects how Google determines local ranking: relevance, distance, and prominence.

- Local Organic Results. Local Organic Results are traditional search listings that contain geographic intent signals and directory references (Yelp, Yellow Pages).

What are the defining attributes of local SEO? Local SEO is defined by 3 measurable attributes. These attributes are listed below.

- High local search intent. 46% of all search queries contain local intent according to Google data reported by BrightLocal. Local intent increases the probability of business discovery within a defined proximity range.

- Direct sales impact. 28% of nearby searches result in a sale, according to Think with Google. Purchase probability increases when geographic distance decreases.

- Consumer review dependency. 98% of consumers researched local businesses online in 2022, and 81% evaluated Google reviews before purchase decisions in 2024, according to BrightLocal. Review quantity, review quality, and review recency influence local ranking stability.

What affects local SEO ranking performance? local SEO ranking performance depends on proximity, relevance, prominence, and NAP consistency. Proximity measures the geographic distance between the searcher and the business location. Relevance evaluates how well a business listing matches search intent. Prominence measures authority signals derived from reviews, citations, and brand references. NAP consistency strengthens entity validation across directories and platforms.

What are the benefits of local SEO? There are 4 main benefits of local SEO. These benefits are listed below.

- Increases geographic visibility. Map placement and Local Pack inclusion expand exposure within a defined service radius.

- Drives foot traffic and calls. Location-based searches convert into in-store visits and direct contact actions.

- Generates high-intent leads. Proximity-based queries reflect immediate purchase intent within a 1-10 mile range.

- Reduces paid advertising dependency. Organic map visibility decreases reliance on paid local ads for demand acquisition.

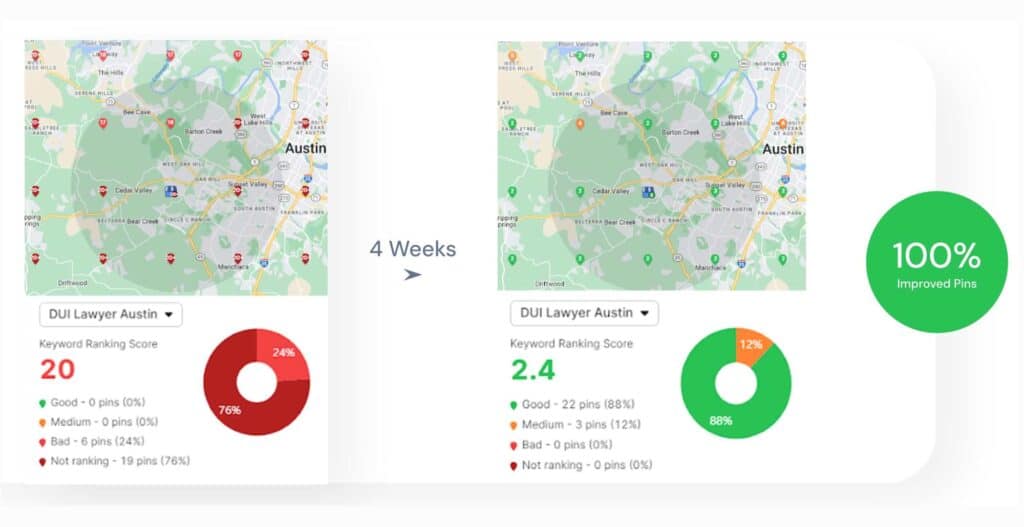

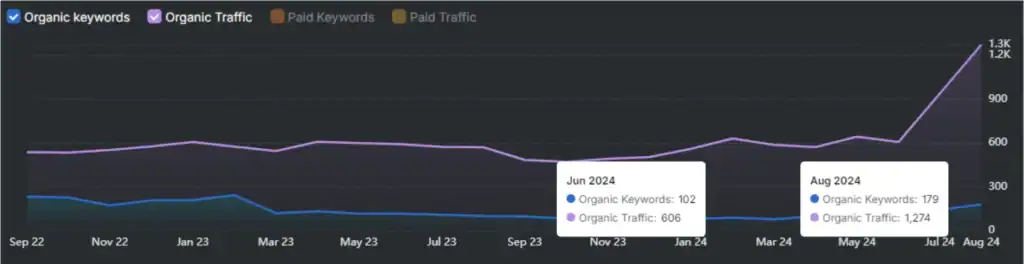

How long does local SEO take to produce measurable results? local SEO produces early visibility signals within 2 to 4 weeks in low-competition industries and requires 3 to 6 months in competitive sectors. Ranking stability strengthens through consistent review acquisition, GBP optimization, and proximity alignment across service areas.

How Local SEO Differs From Traditional SEO?

Local SEO differs from Traditional SEO in audience targeting, ranking signals, optimization focus, and conversion intent. The definition of local SEO centers on optimizing visibility for geographically restricted searches, while Traditional SEO focuses on ranking for non-location-specific queries across national or global markets.

What is the difference in audience targeting between local SEO and Traditional SEO? Local SEO targets hyper-local audiences within a defined geographic radius, while Traditional SEO targets broad audiences without geographic restriction. local SEO optimizes for queries containing explicit or implicit location modifiers (city names, “near me”), whereas Traditional SEO optimizes for general keyword phrases without proximity dependence.

What is the difference in ranking mechanisms between local SEO and Traditional SEO? Local SEO ranking depends on relevance, distance, and prominence, while Traditional SEO ranking depends on keyword relevance, authority, and technical optimization. local SEO evaluates geographic proximity and GBP signals. Traditional SEO evaluates backlinks, content authority, and domain-level trust signals.

What is the difference in optimization focus between local SEO and Traditional SEO? Local SEO prioritizes GBP optimization, citation consistency, and review management, while Traditional SEO prioritizes website content, technical structure, and link building. local SEO allocates significant ranking weight to GBP optimization and NAP consistency. Traditional SEO allocates ranking weight to content depth, keyword targeting, schema implementation, and backlink acquisition.

What are the key task differences between local SEO and Traditional SEO? There are 4 primary task differences between local SEO and Traditional SEO. These differences are listed below.

- Platform focus. Local SEO focuses on GBP and map visibility. Traditional SEO focuses on website-level optimization.

- Citation management. Local SEO requires directory consistency and local listing management. Traditional SEO does not depend on citation uniformity.

- Review signals. local SEO evaluates review quantity, review quality, and review recency. Traditional SEO does not use review signals as primary ranking determinants.

- Geographic landing pages. Local SEO requires location-specific landing pages. Traditional SEO prioritizes topic-based landing pages without geographic segmentation.

How do search intent patterns differ between local SEO and Traditional SEO? Local SEO captures immediate purchase intent, while Traditional SEO captures informational and comparative intent. Local queries often originate from mobile devices and reflect action-oriented behavior within a 1-10 mile range. Traditional queries frequently involve research, comparison, and broader category exploration without immediate geographic constraints.

What are the benefits of local SEO compared to Traditional SEO for physical businesses? The benefits of local SEO include higher conversion probability, faster visibility gains, and stronger in-person traffic generation. local SEO produces measurable results within 2-4 weeks in low-competition markets, whereas Traditional SEO frequently requires 6-12 months for stable ranking growth. Local proximity reduces decision friction, which increases conversion efficiency for service-based and storefront businesses.

What is the interdependence between local SEO and Traditional SEO? Local SEO builds upon Traditional SEO foundations, while Traditional SEO provides the structural framework for local SEO expansion. Website crawlability, content quality, and technical stability influence both strategies. local SEO extends these foundations by integrating geographic signals, entity consistency, and map-based ranking factors.

What Are the Key Elements of Local Ranking?

There are 3 key elements of local ranking. These 3 elements form the 3 pillars of SEO in local search. The 3 pillars of SEO for local SEO are Proximity Modeling, Prominence Modeling, and Relevance Modeling. These elements determine how search engines evaluate GBP ranking factors for local SEO. The key elements of local ranking are listed below.

1. Proximity Modeling. Proximity Modeling measures the physical distance between the searcher and the business location. Distance acts as a filtering signal in local SEO because search engines prioritize businesses within a defined geographic radius. Proximity increases ranking probability when a business is located closer to the user search origin.

2. Prominence Modeling. Prominence Modeling evaluates business authority and reputation signals across the web. Authority signals include review volume, review ratings, citation consistency, brand mentions, and backlink references. Higher prominence increases trust and ranking stability in local SEO because search engines interpret external validation as reliability.

3. Relevance Modeling.

Relevance Modeling evaluates how accurately a business listing matches the search query intent. Relevance depends on GBP category selection, service descriptions, keyword alignment, and on-page content structure. Strong relevance improves ranking because search engines prioritize listings that directly match user intent.

These 3 pillars of SEO operate together within local SEO ranking systems. Proximity filters results by distance. Relevance determines query alignment. Prominence strengthens authority signals. GBP ranking factors for local SEO distribute weighting across these three modeling layers to calculate final local ranking positions.

What Is Proximity Modeling?

Proximity modeling is a geospatial analytical method that evaluates distance relationships between geographic entities to determine accessibility, influence radius, and spatial priority in local SEO systems. Proximity modeling transforms raw location data into measurable ranking signals that influence how search engines filter results based on the physical distance between the user and the business location.

What category does proximity modeling belong to? Proximity modeling belongs to the broader class of spatial analysis and geospatial ranking operations. Proximity modeling quantifies how near or far two geographic points exist relative to one another. Proximity modeling differs from simple distance calculations because proximity modeling incorporates routing constraints, service boundaries, and travel paths instead of relying only on straight-line geometry.

What distance measures define proximity modeling? Proximity modeling uses 4 primary distance measurement types. These distance types are listed below.

- Euclidean distance. Euclidean distance measures the straight-line geometric distance between two points. Euclidean distance represents the simplest calculation method and appears in 90% of initial spatial analyses.

- Geodesic distance. Geodesic distance measures the shortest path on the Earth’s curved surface. Geodesic distance produces 0.5% higher accuracy than Euclidean distance for long-range calculations.

- Network distance. Network distance measures travel along constrained linear systems (roads, pathways). Network distance appears in 75% of routing-based spatial applications.

- Cost distance. Cost distance replaces physical distance with accumulated variables (travel time, emissions, construction cost). Travel time represents the most common cost factor in 60% of logistics modeling.

What properties define proximity modeling? Proximity modeling contains 3 defining properties. These properties are listed below.

- Analytical depth. Proximity modeling evaluates topology, routing, and accessibility constraints beyond raw geometry. Spatial modeling improves infrastructure planning accuracy by 20-30% according to industry reports.

- Versatility of distance metrics. Proximity modeling adapts distance measurement methods depending on the application context. Network distance improves travel time estimation accuracy by up to 40% compared to straight-line calculation.

- Actionable decision output. Proximity modeling converts spatial relationships into operational insights. Businesses report 15% operational efficiency gains when using proximity-based spatial intelligence systems.

What role does proximity modeling play in local SEO? Proximity modeling acts as the geographic filtering layer in local SEO ranking systems. Search engines calculate the distance between the searcher’s location and the business location to prioritize nearer results. Proximity modeling reduces ranking probability as geographic distance increases, which directly influences map-based and Local Pack visibility. Proximity modeling functions as the spatial evaluation mechanism that governs distance weighting inside localized search ranking environments.

Why Is Proximity Modeling Important?

Proximity modeling is important because it determines how distance influences interaction, ranking priority, spatial analysis, and system behavior across digital and physical environments. Proximity modeling quantifies geographic closeness and converts spatial distance into measurable influence signals, which directly affect visibility, accessibility, and operational outcomes.

How does proximity modeling facilitate innovation and collaboration? Proximity modeling increases innovation diffusion probability by reducing spatial barriers between entities. Knowledge spillovers concentrate within a 0 to 20 meter radius, and influence declines sharply beyond that range. Working within 20 meters of another startup increases technology adoption probability by 3 percentage points, while doubling the distance beyond 20 meters reduces adoption probability by 1.7%. Geographic closeness increases face-to-face knowledge exchange and accelerates complex information transfer.

Why is proximity modeling a strategic tool for innovation intermediaries? Proximity modeling influences the structural design of incubators, research hubs, and technology transfer centers. Innovation intermediaries optimize geographic, organizational, and cognitive proximity to increase value creation efficiency. Strategic placement decisions alter collaboration density, recruitment scope, and partnership formation probability. Integrating proximity variables into business model design strengthens relational alignment and improves coordination efficiency.

Why is proximity modeling crucial for understanding cancer invasiveness mechanisms? Proximity modeling reveals additive and synergistic cell-cell interaction effects that increase invasive force output. Well-spaced invasive cancer cells indent gels to approximately 10 µm depth, while closely adjacent non-contacting cells indent up to 18 µm depth. Cells positioned within a 0.5-50 µm range generate additive force amplification. Two to three cells applying 220 nN increase indentation depth by 3%, and unequal force application at 10 µm separation increases indentation by 7.8%. Distance reduction amplifies mechanical interaction intensity.

How does proximity modeling function as a design principle? Proximity modeling improves information clarity by grouping related elements based on spatial closeness. Visual grouping increases readability and reinforces structural hierarchy. Placing related components within close range directs attention flow and increases comprehension efficiency. Spatial grouping reduces cognitive load and strengthens user navigation patterns.

Why is proximity modeling important for spatial analysis and geographic inquiry? Proximity modeling serves as the foundational mechanism for distance-based geographic algorithms. Spatial analysis tools rely on buffer zones, cost-distance analysis, and Voronoi partitioning to quantify influence areas. Euclidean, Manhattan, and Geodesic distance metrics calculate spatial relationships. Distance decay principles, including the friction of distance, explain why interaction probability decreases as spatial separation increases.

What impact does proximity modeling have on device characteristics and circuit reliability? Proximity modeling affects semiconductor device behavior through the Well Proximity Effect and the Shallow Trench Isolation stress. Well Proximity Effect alters doping concentration up to 1 µm from well edges and shifts threshold voltage by tens of millivolts in 90 nm technology. Shallow Trench Isolation stress modifies semiconductor band structure and mobility characteristics. Mobility varies inversely with trench distance, and threshold voltage variation increases as channel length decreases. Distance sensitivity directly influences circuit stability and reliability.

How Does Proximity Modeling Work?

Proximity modeling works by calculating the distance between a source entity and a target entity, converting that distance into influence values, and applying distance-based modifications to the source structure. Proximity modeling transforms spatial separation into quantifiable control signals that modify geometry, ranking weight, or system behavior.

What are the required components for proximity modeling to function? There are 5 required components for proximity modeling. These components are listed below.

- Source Geometry. Source geometry represents the object or entity that receives modification. Source geometry provides the structural base for proximity response.

- Target Geometry. Target geometry represents the influencing object that defines spatial interaction boundaries.

- Distance Measurement Algorithm. Distance measurement algorithms calculate the separation between source points and target points using Euclidean or Manhattan distance logic.

- Proximity Tool or Node. Proximity tools interpret calculated distances and apply conditional logic to the source structure.

- Modification Parameters. Modification parameters define influence radius, scaling magnitude, displacement intensity, and response thresholds.

What is the step-by-step operational process of proximity modeling? Proximity modeling operates through 5 sequential steps. These steps are listed below.

- Define source and target entities. Source geometry receives modification, and target geometry provides spatial reference.

- Calculate spatial distance. The system computes the distance from each source vertex to the nearest target point. A mesh containing 10,000 vertices produces 10,000 individual distance calculations.

- Normalize distance into influence values. Distance values are mapped to a 0-1 scale. Points within a 0-2 meter radius receive higher influence scores than distant points.

- 4. Apply geometric or behavioral modification. Vertices within 0.5 meters scale to 150%, while vertices at 1 meter scale to 110%. Influence magnitude decreases as distance increases.

- Render or export modified output. The system produces an adapted structure reflecting spatial response dynamics.

What core mechanisms control proximity modeling behavior? Proximity modeling operates through distance-based influence, parametric control, and real-time recalculation. Distance-based influence increases modification strength as separation decreases. Parametric control allows adjustment of falloff curves, influence radius, and scaling thresholds. Real-time recalculation updates modifications dynamically as target position changes, improving workflow efficiency by up to 30%.

What are common failure conditions in proximity modeling? Proximity modeling fails under 3 primary edge conditions. These conditions are listed below.

- Complete overlap. Zero-distance values across large areas generate uniform modification instead of localized response.

- High computational load. Meshes containing 5 million vertices require 10-20 seconds per calculation cycle on standard workstations, reducing real-time performance.

- Incorrect distance mapping. Improper normalization or inverted scaling parameters produce unintended shrinkage or expansion effects.

Proximity modeling functions as a structured spatial evaluation system that converts measurable distance into deterministic modification behavior across geometric, analytical, and ranking-based environments.

What Are the Key Signals Used in Proximity Modeling?

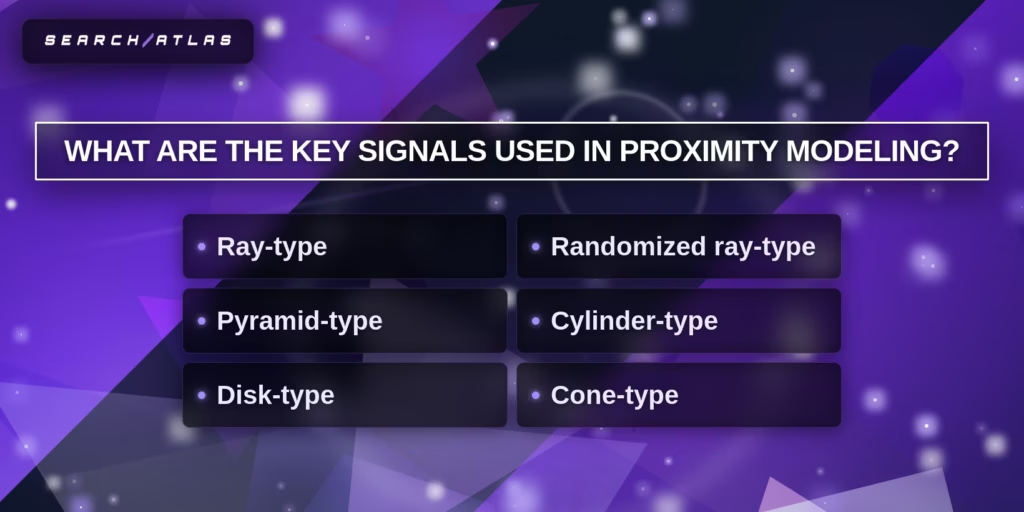

There are 6 key signals used in proximity modeling. These signals define how distance detection and spatial influence operate inside geometric and spatial systems. Proximity modeling uses sensor-based detection structures to calculate directional influence, area coverage, and spatial falloff strength.

The 6 key signals used in proximity modeling are listed below.

- Ray-type sensor. Ray-type sensors project a linear directional beam from the source toward a target. Ray-type detection measures direct line-of-sight distance and calculates the closest intersection point. Ray-type signals apply precise influence along a single axis and operate efficiently in straight-line proximity evaluation.

- Randomized ray-type sensor. Randomized ray-type sensors emit multiple stochastic rays within a defined angular range. Randomized ray-type detection samples spatial interaction probability rather than single-direction contact. Randomized ray-type signals improve detection robustness in complex environments where occlusion or irregular geometry exists.

- Pyramid-type sensor. Pyramid-type sensors emit detection rays within a four-sided angular volume. Pyramid-type detection captures forward spatial influence within a defined frustum range. Pyramid-type signals evaluate broader directional fields than ray-type sensors while maintaining structured angular boundaries.

- Cylinder-type sensor. Cylinder-type sensors detect proximity within a uniform radial column. Cylinder-type detection measures distance across a circular cross-section with a consistent radius height. Cylinder-type signals apply uniform influence across vertical spatial stacks, which improves spatial consistency in volumetric modeling.

- Disk-type sensor. Disk-type sensors detect influence within a flat circular radius. Disk-type detection evaluates horizontal spatial relationships based on radial distance from a central point. Disk-type signals function efficiently in ground-plane proximity analysis and area-based influence mapping.

- Cone-type sensor. Cone-type sensors emit influence within a conical angular spread. Cone-type detection calculates falloff intensity as distance increases from the apex origin. Cone-type signals model directional influence gradients and allow controlled angular attenuation.

Proximity modeling interprets these 6 sensor signals as geometric detection volumes that define influence zones. Sensor geometry determines the spatial coverage pattern. Distance calculation within each sensor structure produces influence weights. Influence weights control modification strength or ranking weight inside spatial systems. Proximity modeling depends on structured detection geometry to translate spatial distance into measurable interaction intensity.

What Are the Use Cases of Proximity Modeling?

There are 3 primary use cases of proximity modeling in local SEO systems. These use cases determine how geographic distance influences ranking, filtering, and visibility. Proximity modeling applies distance-based logic to prioritize businesses located closer to the search origin.

The 3 use cases of proximity modeling are listed below.

- Local Pack Rankings.

- “Near Me” Searches.

- Mobile and Real-Time Location Queries.

Proximity modeling operates as the geographic filtering mechanism that controls which businesses appear first when physical distance influences search intent.

1. Local Pack Rankings

What Are Local Pack Rankings in Proximity Modeling? Local Pack Rankings are the ranking system that determines which GBP listings appear in the map-based result block at the top of Google Search based on proximity, relevance, and prominence. Local Pack Rankings function inside local SEO and prioritize businesses closest to the searcher while evaluating authority and query alignment.

How do Local Pack Rankings operate inside local SEO? Local Pack Rankings operate through proximity modeling, relevance evaluation, and prominence scoring. Proximity filters list based on physical distance between the searcher’s location and the verified business address. Relevance evaluates category accuracy, service descriptions, and keyword alignment inside the Google Business Profile. Prominence measures authority signals derived from review count, review recency, average rating, backlinks, and branded search volume.

What makes proximity dominant in Local Pack Rankings? Proximity acts as the primary geographic filter in Local Pack Rankings. The distance between the searcher device and the business address directly influences listing eligibility. Proximity accounts for approximately 19% of ranking influence and overrides other factors on mobile devices in up to 40% of localized comparisons.

How do Local Pack Rankings differ from traditional organic results? Local Pack Rankings prioritize geographic distance and GBP data, while traditional organic results prioritize domain authority and page-level content signals. Local Pack Rankings extract information from verified business profiles. Traditional organic rankings evaluate website authority and backlink strength across broader keyword markets.

What performance characteristics define Local Pack Rankings? Local Pack Rankings generate higher click-through rates and stronger conversion behavior than non-pack listings. Top 3 Local Pack positions produce 93% more user actions and 126% more traffic compared to listings outside the pack. Mobile devices display the 3-pack in approximately 93% of local searches, which increases exposure frequency.

How dynamic are Local Pack Rankings? Local Pack Rankings shift based on real-time geographic coordinates and device movement. Results vary by approximately 47% when tested from locations 1 mile apart. GPS recalculation updates ranking composition as user position changes, which makes proximity modeling continuously active.

What data dependencies affect Local Pack Rankings? Local Pack Rankings depend on accurate GBP data, NAP consistency, category precision, and review signals. Complete and verified profiles increase trust signals. Review volume, review velocity, and rating distribution influence prominence scoring. Local Pack Rankings represent the proximity-weighted display layer of local SEO, where geographic filtering determines eligibility and relevance plus prominence refines final ordering.

2. “Near Me” Searches

What are “Near Me” searches in Proximity Modeling? “Near me” searches in proximity modeling are location-intent queries that trigger distance-based ranking logic using device coordinates, semantic cues, and contextual inference to prioritize geographically closest entities. “Near me” searches activate proximity modeling inside local SEO systems and shift ranking weight toward minimal physical distance between the searcher and the business location.

How do “Near Me” searches operate inside proximity modeling? “Near me” searches operate by combining real-time location data with semantic interpretation of proximity language. Search engines retrieve device GPS coordinates and calculate geographic separation between the search origin and indexed business addresses. Distance calculations apply spatial filtering before relevance and prominence scoring refine ordering.

How do language models interpret “Near Me” intent without explicit coordinates? Language models interpret “near me” intent through embedding space proximity and semantic co-occurrence patterns. Tokens that frequently appear together in a local context cluster within a high-dimensional vector space. Phrases, “next to the train station” or “five minutes from campus,” encode proximity signals without numeric coordinates.

What mechanisms define semantic proximity in “Near Me” modeling? Semantic proximity in “near me” modeling depends on 3 core mechanisms. These mechanisms are listed below.

- Embedding space proximity. Tokens that share contextual frequency patterns compress within vector similarity neighborhoods.

- Non-map local logic. Descriptive cues (reviews, landmarks, neighborhood names) substitute direct coordinate measurement.

- Trajectory-based similarity. Continuation probability distributions align sentences that produce similar next-token outputs.

What accuracy characteristics define “Near Me” searches? “Near Me” searches provide approximate spatial filtering rather than meter-level geometric precision. Semantic inference captures human-level “close enough” judgments but does not calculate exact walking distance or obstacle-aware routing. Dedicated geospatial engines perform precise routing tasks, while proximity modeling provides contextual distance weighting. “Near me” searches represent the linguistic activation layer of proximity modeling, where semantic inference and coordinate-based filtering converge to determine geographically constrained visibility.

3. Mobile and Real-Time Location Queries

What are Mobile and Real-Time Location Queries in Proximity Modeling? Mobile and real-time location queries in proximity modeling are dynamic spatial queries that calculate the live distance between a mobile device and nearby entities using GPS, Wi-Fi, or cellular signals to deliver context-aware results. Mobile and real-time location queries activate proximity modeling continuously and adjust output based on the current geographic position of the user.

How do mobile and real-time location queries operate inside proximity modeling? Mobile and real-time location queries operate by retrieving device coordinates, computing geographic separation, and returning results within a defined radius threshold. Systems use formulas, the Haversine calculation to determine spherical distance between two latitude-longitude points. Queries often filter results within predefined boundaries, a 600-meter radius, which enables precise spatial inclusion decisions.

What technical systems enable mobile and real-time location queries? Mobile and real-time location queries rely on 3 core infrastructure layers. These layers are listed below.

- Device sensors. GPS, Wi-Fi triangulation, and cellular tower signals supply live coordinate data.

- Processing services. Backend APIs calculate spatial relationships using algorithms and queue-based processing systems.

- Spatial databases. Databases, PostgreSQL, with PostGIS execute indexed spatial queries and improve lookup speed from 43.7 ms to 2.3 ms through GiST indexing, which represents a 19-24x performance gain.

What performance characteristics define mobile and real-time location queries? Mobile and real-time location queries prioritize accuracy, response time, and scalability. Systems deliver responses in approximately 131 ms in optimized implementations. PostGIS processes queries across 1,000,000 locations in 80-150 ms with spatial indexing. Geographic data types account for Earth’s curvature and increase precision compared to planar calculations.

How do mobile and real-time location queries differ from static geofencing? Mobile and real-time location queries compute distance dynamically, while static geofencing defines fixed virtual boundaries. Real-time queries adjust results as the user moves. Geofencing triggers predefined actions when a device enters or exits a boundary, but does not continuously recalculate distance relationships.

What Are the Limitations of Proximity Modeling?

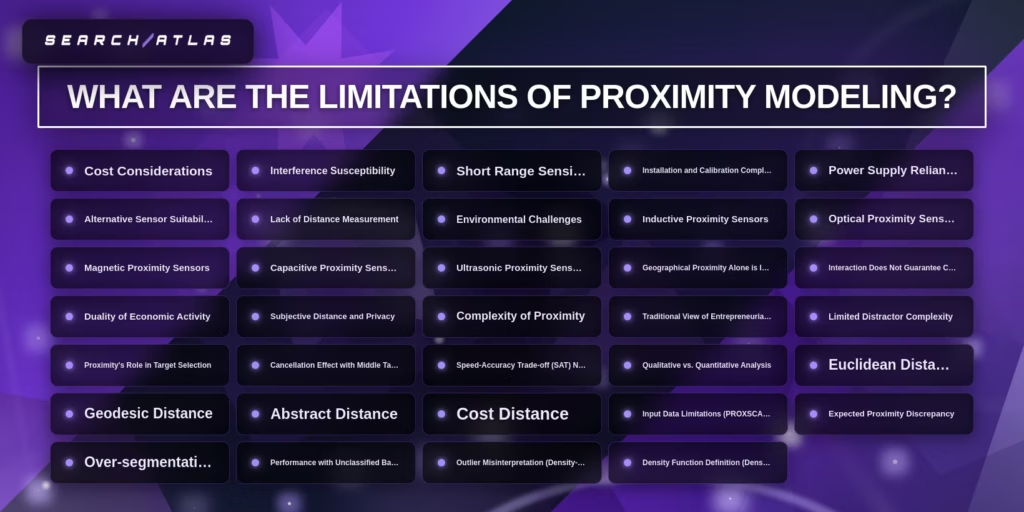

Proximity modeling has technical, conceptual, and computational limitations because distance alone does not capture intent, coordination quality, or contextual relevance modeling signals. Proximity modeling prioritizes spatial closeness, yet spatial closeness does not guarantee optimal interaction, ranking accuracy, or decision precision.

What are the technical limitations of proximity modeling? There are 8 primary technical limitations of proximity modeling. These limitations are listed below.

- Cost considerations. Sensor hardware, spatial databases, and real-time processing infrastructure increase operational expenses.

- Interference susceptibility. Radio, optical, and ultrasonic sensors experience signal disruption in dense or obstructed environments.

- Short-range sensing. Many proximity sensors operate within limited distance thresholds and cannot detect long-range spatial relationships.

- Installation and calibration complexity. Calibration errors distort measurement precision and reduce modeling reliability.

- Power supply reliance. Continuous sensing and computation increase energy consumption requirements.

- Environmental challenges. Temperature variation, surface reflection, and physical obstacles alter signal stability.

- Lack of precise distance measurement in basic sensors. Some proximity sensors detect presence without measuring the exact separation magnitude.

- Alternative sensor suitability constraints. Inductive, optical, magnetic, capacitive, and ultrasonic sensors each operate within narrow material or surface compatibility ranges.

What are the conceptual limitations of proximity modeling? There are 6 conceptual limitations of proximity modeling. These limitations are listed below.

- Geographical proximity alone is insufficient. Spatial closeness does not guarantee relevance, modeling alignment, or functional compatibility.

- Interaction does not guarantee coordination. Entities located near one another do not automatically produce synchronized outcomes.

- Duality of economic activity. Digital interaction reduces geographic constraints, weakening purely spatial proximity assumptions.

- Subjective distance perception. Human interpretation of “near” varies by context, culture, and expectation.

- Complexity of proximity dimensions. Cognitive, social, and organizational proximity operate independently from physical proximity.

- Geographic ecosystem limitations. Entrepreneurial ecosystems extend beyond physical boundaries, challenging strict spatial modeling assumptions.

What are the computational limitations of proximity modeling? There are 10 computational limitations of proximity modeling. These limitations are listed below.

- Limited distractor complexity modeling. Systems struggle to differentiate multiple overlapping spatial candidates.

- Target selection bias. Nearest entity selection ignores contextual suitability.

- Cancellation effects. Middle-positioned targets distort influence calculations between competing entities.

- Speed-accuracy trade-off. High-frequency distance recalculation reduces processing latency but increases approximation error.

- Qualitative vs quantitative mismatch. Distance metrics capture numeric separation but not semantic compatibility.

- Euclidean distance distortion. Straight-line measurement ignores road networks and physical barriers.

- Geodesic calculation overhead. Spherical computation increases processing cost for large-scale systems.

- Abstract distance misalignment. Embedding-based semantic proximity diverges from physical coordinates.

- Cost distance variability. Travel-time models depend on dynamic traffic data and introduce volatility.

- Input data limitations. Incomplete or inaccurate coordinate datasets distort output precision.

Proximity modeling cannot operate as a standalone ranking or analytical system. Proximity modeling requires integration with relevance modeling and prominence evaluation to balance geographic filtering with contextual accuracy and authority weighting.

What Is Relevance Modeling?

Relevance modeling is a probabilistic ranking framework that estimates the likelihood that a document, entity, or data point satisfies a user query based on semantic alignment and information need. Relevance modeling calculates the probability that content belongs to the relevant set rather than the non-relevant set and ranks results according to that probability.

Who developed the foundational relevance modeling framework? The probabilistic relevance framework was developed by Stephen E. Robertson and Karen Spärck Jones and later advanced through language modeling approaches. Early probabilistic models explicitly separated relevant and non-relevant document classes. Later developments shifted toward modeling how queries are generated from document language distributions.

How does relevance modeling differ from keyword matching? Relevance modeling differs from keyword matching because relevance modeling evaluates semantic relationships and probabilistic alignment rather than raw term frequency. Keyword matching counts token overlap. Relevance modeling estimates contextual meaning and subjective information fit, which aligns ranking output with human judgment of relevance.

What are the main methodologies used in relevance modeling? There are 4 primary methodologies used in relevance modeling. These methodologies are listed below.

- Relevance Models (Lavrenko and Croft, 2001). These models estimate term probabilities in relevant documents without requiring explicit relevance training labels.

- Cross-Media Relevance Model (CMRM). CMRM learns joint probability distributions for multimodal tasks (image annotation).

- Deep Relevance Matching Model (DRMM). DRMM applies neural ranking logic to evaluate fine-grained query-document interaction signals.

- ProRBP (Progressive Retrieved Behavior-augmented Prompting). ProRBP integrates behavioral retrieval signals and prompting logic to refine LLM-based relevance estimation.

What performance improvements does relevance modeling provide? Relevance modeling improves retrieval precision compared to baseline language models and term-frequency scoring systems. On TREC Ad-hoc datasets, average precision increased by 29.50% for queries 101-150 and by 10.55% for queries 151-200 relative to baseline models. R-Precision improved by 15.27% and 9.56% across the same query ranges.

Why does relevance modeling depend on human judgment? Relevance modeling depends on human judgment because relevance is inherently subjective and context-dependent. The Relevance Learning Hypothesis states that ranking systems need to learn patterns consistent with human reasoning to predict meaningful alignment. Large Language Models (LLMs) enhance semantic scoring accuracy by approximating contextual reasoning patterns.

What are the limitations of relevance modeling? Relevance modeling faces challenges related to training data scarcity, semantic ambiguity, and probability estimation complexity. Classical models require reliable estimation of term probability distributions in relevant sets. Limited labeled training data reduces model robustness. Pure semantic congruence fails in scenarios where contextual signals or user intent signals are incomplete.

Relevance modeling functions as the probabilistic interpretation layer in ranking systems, where semantic alignment determines which content satisfies user intent beyond simple proximity or term frequency scoring.

Why Is Relevance Modeling Important?

Relevance modeling is important because it increases ranking accuracy, aligns results with user intent, and improves measurable performance outcomes across search and AI systems. Relevance modeling estimates the probability that content satisfies a query and orders results according to that probability.

How does relevance modeling enhance AI application performance? Relevance modeling improves retrieval precision and ranking stability compared to baseline language models. On TREC Ad-hoc datasets, average precision increased by 29.50% for queries 101-150 from 0.2021 to 0.2617. R-Precision increased by 15.27% across the same query set. Higher precision reduces irrelevant result exposure and increases system reliability.

Why does relevance modeling improve user experience? Relevance modeling improves user experience by delivering results that match query intent with higher semantic accuracy. Information retrieval systems maximize utility when returned items align with user expectations. High semantic alignment reduces bounce rate and increases engagement duration. Irrelevant results cause immediate session termination regardless of system speed.

What measurable business results does relevance modeling produce? Relevance modeling drives measurable improvements in retention, conversion rate, and satisfaction metrics. Accurate intent alignment reduces time-to-product discovery and increases click-through probability. Lower bounce rates increase session depth and strengthen conversion efficiency. Search relevance transforms retrieval systems into revenue-generating infrastructure.

How does relevance modeling connect user intent to behavioral outcomes? Relevance modeling translates semantic intent into ranked outputs that increase click probability and conversion likelihood. Query-document alignment improves behavioral signals (clicks, dwell time, and continued engagement). Semantic scoring expresses ranking in terms meaningful to both users and business objectives.

Why is relevance modeling considered a breakthrough in artificial intelligence? Relevance modeling represents a shift from click prediction toward human-like semantic judgment. Earlier systems prioritized click probability estimation. LLMs generate contextual similarity judgments that approximate human reasoning about relationships in text and images. Combining behavioral prediction with semantic reasoning increases ranking intelligence depth.

How does relevance modeling operate without labeled training data? Relevance modeling estimates term probability distributions from the query itself when labeled data is unavailable. Classical probabilistic models require estimation of word probability in relevant sets. Query-driven estimation constructs relevance distributions directly from query terms, which addresses training data scarcity.

Relevance modeling functions as the semantic prioritization layer in ranking systems where probabilistic estimation aligns content selection with contextual intent rather than surface keyword overlap.

How Does Relevance Modeling Work?

Relevance modeling works by transforming queries and documents into comparable representations, estimating relevance probability, integrating semantic and behavioral signals, and ranking results according to combined relevance scores. Relevance modeling calculates how strongly a document satisfies a query rather than counting raw keyword overlap.

What components enable relevance modeling to function? Relevance modeling requires 7 core components. These components are listed below.

- Relevance Model. A statistical, semantic, or LLM-based ranking function that computes relevance probability.

- Query Representation. User input is transformed into a structured or vectorized form.

- Document Corpus. Indexed dataset containing searchable documents.

- Vector Space Representation. Numerical embeddings that allow mathematical similarity comparison.

- Axioms. Foundational scoring rules (Term Frequency, Document Length Penalty, Term Uniqueness).

- Behavioral Signals. Dwell time, abandonment rate, and click engagement.

- Structured Data. Entity relationships and Knowledge Graph signals.

What is the step-by-step operational process of relevance modeling? Relevance modeling operates through 6 sequential steps. These steps are listed below.

- Transform query and documents into vector representations. Queries and documents convert into multidimensional embeddings. A smaller vector distance indicates stronger semantic alignment.

- Retrieve and score an initial candidate set. Statistical language models estimate the probability of observing word w in the relevant class P(w|R). Systems retrieve approximately 30 high-ranked documents to approximate relevance distribution.

- Apply axiomatic evaluation. Term Frequency increases the score when query terms repeat in a document. Document Length Penalty reduces the weight for overly long documents. Term Uniqueness increases weight for distinctive vocabulary.

- Integrate semantic and behavioral signals. Word2Vec-style embeddings evaluate contextual meaning beyond lexical overlap. Dwell time and abandonment rate refine scoring according to engagement quality.

- Evaluate structured and authority signals. EEAT signals operate at the author, site, and entity level using embedding comparison. Knowledge Graph data strengthens entity disambiguation and contextual grounding.

- Combine signals into the final ranking output. Statistical, semantic, axiomatic, and behavioral weights aggregate into a final relevance score. Highest scores appear at the top of ranked output lists.

What mechanisms improve relevance modeling accuracy? Relevance modeling accuracy improves through axiomatic analysis, statistical language modeling, and multi-stage neural attention processing. Axiomatic decomposition reduces error rates by up to 33% in topic similarity measurement. Statistical language modeling approximates P(w|RQ) using co-occurrence probability within retrieved sets. Multi-layer neural attention blocks refine relevance judgments progressively across early, middle, and final inference stages.

What are common failure modes in relevance modeling? Relevance modeling fails under 3 primary conditions. These conditions are listed below.

- Ambiguous queries. Broad or unclear queries produce multiple competing interpretations.

- Absence of labeled feedback. Lack of explicit relevance labels reduces model calibration accuracy.

- Over-reliance on statistical signals. Pure TF-IDF weighting ranks lexically similar but semantically irrelevant documents.

Relevance modeling functions as a probabilistic ranking system that aligns semantic interpretation, statistical estimation, and behavioral feedback to determine which content satisfies a given query with the highest contextual accuracy.

What Are the Use Cases of Relevance Modeling?

There are 3 primary use cases of relevance modeling in search and ranking systems. These use cases determine how semantic alignment improves precision and ranking stability. Relevance modeling prioritizes documents, entities, and signals based on contextual intent rather than surface-level keyword overlap.

The 3 use cases of relevance modeling are listed below.

- Query Intent Matching.

- Category and Service Alignment.

- Content and Keyword Optimization.

Relevance modeling functions as the semantic alignment engine that connects user intent, entity classification, and content structure into a unified ranking decision framework.

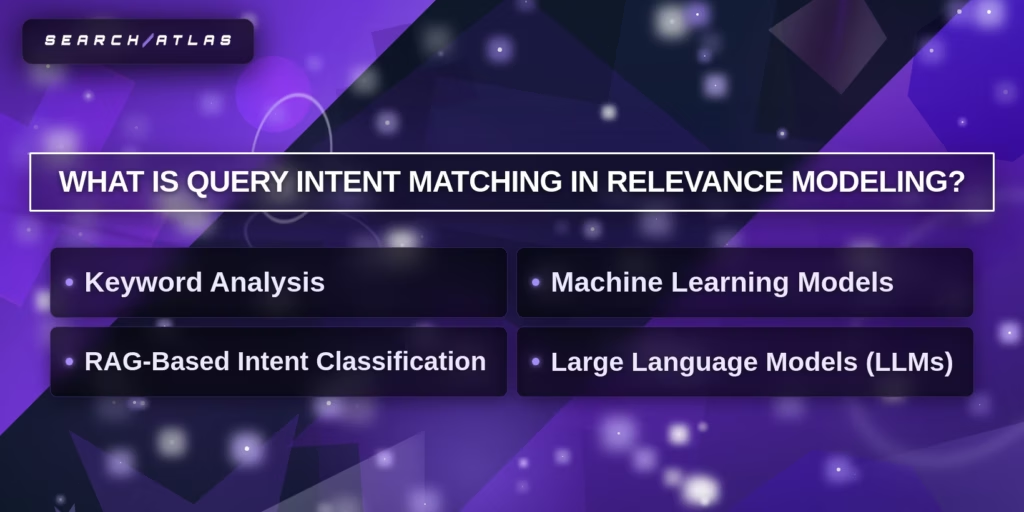

1. Query Intent Matching

What Is Query Intent Matching in Relevance Modeling? Query intent matching in relevance modeling is the process of identifying the underlying purpose of a user query and aligning ranked results with that purpose through semantic classification and probabilistic scoring. Query intent matching evaluates what the user wants to accomplish rather than matching literal keywords.

Why does Query Intent Matching operate as a core component inside relevance modeling? Query intent matching operates as a core component inside relevance modeling because relevance modeling estimates the probability that content satisfies a specific intent class. Query intent matching transforms a raw query into structured intent categories: informational, transactional, or navigational. This transformation governs downstream retrieval, ranking, and filtering logic.

Query intent matching relies on 4 core mechanisms. These mechanisms are listed below.

- Keyword Analysis. Keyword analysis detects intent indicators embedded in query terms. Words, “buy,” “review,” or “fix”, signal transactional or troubleshooting intent.

- Machine Learning Classification. Machine learning models classify queries into predefined intent categories using historical interaction data and probabilistic inference.

- Embedding Similarity Matching. Embedding vectors convert queries into numerical representations and compare cosine similarity across labeled intent clusters.

- Large Language Model Interpretation. LLMs interpret contextual nuance and resolve mixed-intent queries by modeling semantic relationships.

How does Query intent matching increase retrieval accuracy? Query intent matching increases retrieval accuracy by reducing irrelevant exposure and aligning ranking output with user expectations. Improved intent classification reduces irrelevant search results by approximately 35% and increases satisfaction metrics by up to 50%. Query intent matching functions as the intent-detection layer within relevance modeling, where semantic understanding directs ranking behavior according to user goal rather than lexical coincidence.

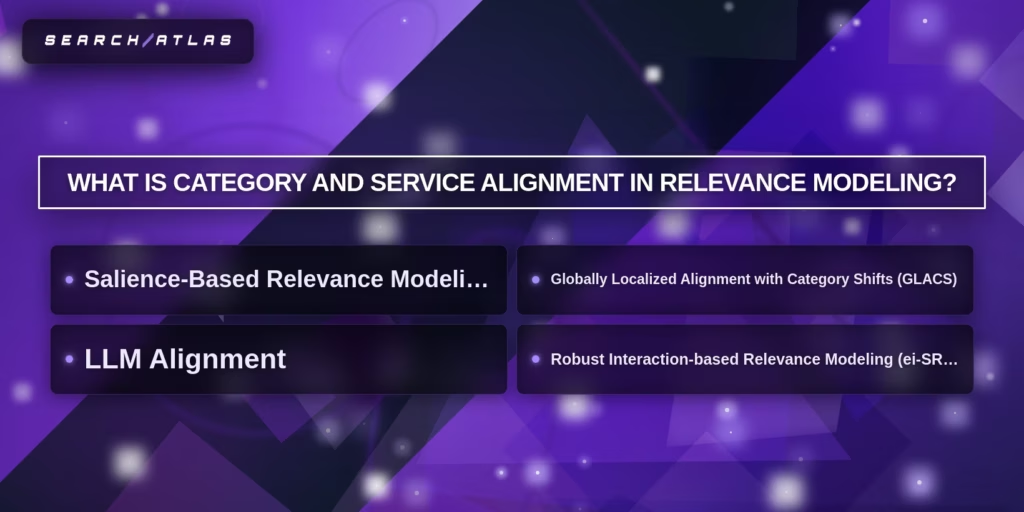

2. Category and Service Alignment

Category and service alignment in relevance modeling is the structured process of matching business categories, service definitions, and content structures to user query intent through semantic scoring and probabilistic evaluation. Category and service alignment ensures that entities classified under a defined category satisfy the conceptual requirements of that category within relevance modeling systems.

What Is Category and Service Alignment in Relevance Modeling? Category and service alignment operates by evaluating semantic similarity between query embeddings and categorized entity embeddings. Relevance modeling converts query intent and category descriptors into high-dimensional vectors. Dot product and cosine similarity calculations measure conceptual closeness. Scores above defined thresholds indicate strong alignment between category label and user intent.

What Is Category and Service Alignment in Relevance Modeling? Category and service alignment differ from keyword matching because keyword density alone does not validate conceptual correctness. Relevance modeling evaluates fine-grained content blocks, service attributes, and category definitions at granular levels. Block-level salience scoring assigns alignment weights to specific content segments instead of entire pages.

What Is Category and Service Alignment in Relevance Modeling? Category and service alignment incorporates 3 technical mechanisms. These mechanisms are listed below.

- Salience-based relevance modeling. Embedding models calculate alignment scores between service descriptions and query vectors. Scores above 0.87 indicate high semantic alignment.

- Domain and category shift adaptation. Cross-domain alignment frameworks adjust for classification drift across industries and datasets.

- Robust interaction modeling. Query-term interaction models isolate core service descriptors from long-form content to improve classification accuracy and ranking stability.

What Is Category and Service Alignment in Relevance Modeling? Category and service alignment increases ranking precision in structured systems, e-commerce platforms, and local search environments. Deployment in production environments improved click-through rate by 1.54% and conversion rate by 1.02% in applied e-commerce relevance modeling.

What Is Category and Service Alignment in Relevance Modeling? Category and service alignment function as the classification integrity layer in relevance modeling, where semantic coherence between entity category and user intent determines retrieval eligibility and ranking order.

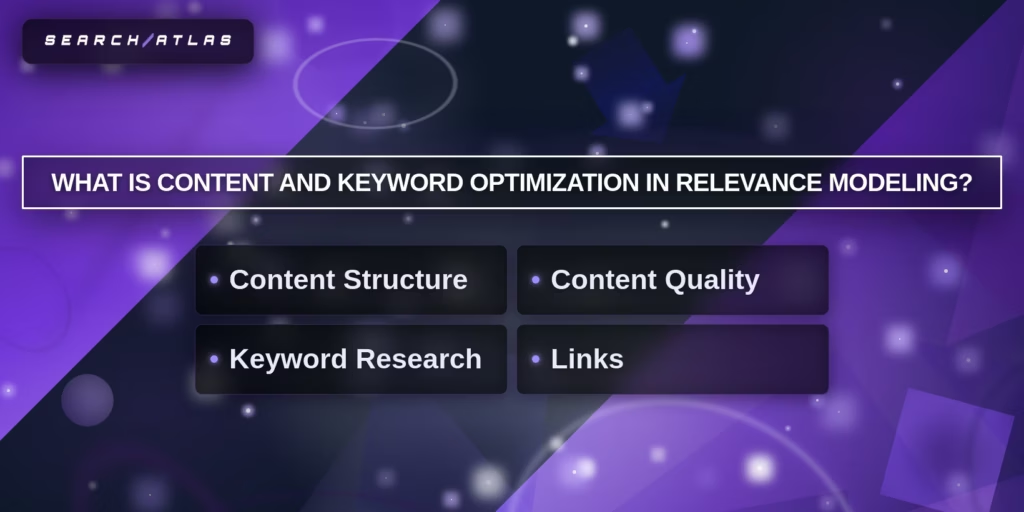

3. Content and Keyword Optimization

What Is Content and Keyword Optimization in Relevance Modeling? Content and keyword optimization in relevance modeling is the structured alignment of document content, keyword distribution, and entity coverage with user query intent to increase probabilistic relevance scoring accuracy. Content and keyword optimization strengthen how relevance modeling evaluates semantic similarity between query vectors and document vectors.

What Is Content and Keyword Optimization in Relevance Modeling? Content and keyword optimization work by transforming textual content into structured, machine-readable segments that improve semantic alignment. Relevance modeling converts both queries and documents into embeddings. Embedding proximity increases when keyword placement, entity definition, and topical coverage match the predicted intent category.

What Is Content and Keyword Optimization in Relevance Modeling? Content and keyword optimization include 3 core components. These components are listed below.

- Content Structure Alignment. Structured headings and segmented blocks increase semantic clarity and improve ranking precision.

- Intent-Based Keyword Integration. Primary and secondary keywords align with informational, transactional, and navigational intent categories.

- Entity and Topic Coverage Expansion. Explicit entity definitions and related concept mapping strengthen contextual completeness and increase relevance probability.

What Is Content and Keyword Optimization in Relevance Modeling? Content and keyword optimization differ from keyword stuffing because relevance modeling evaluates contextual coherence instead of raw term frequency. Higher semantic coherence increases ranking stability and reduces mismatch between query interpretation and document meaning.

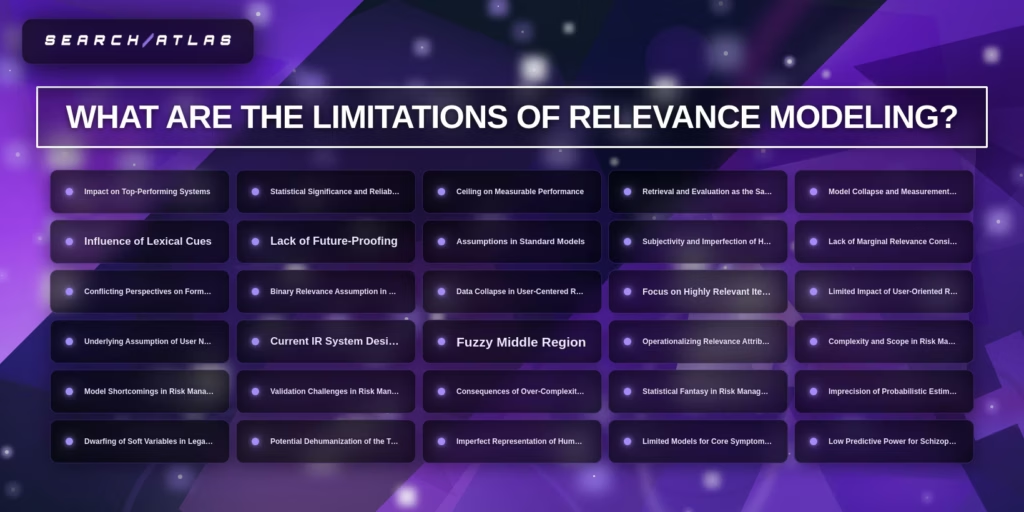

What Are the Limitations of Relevance Modeling?

Relevance modeling has evaluation, methodological, conceptual, and application limitations because probabilistic estimation of relevance depends on imperfect assumptions, incomplete data, and subjective human judgment. Relevance modeling estimates alignment probability, yet alignment probability does not guarantee objective truth or universal agreement.

What Are the Evaluation Limitations of Relevance Modeling? Relevance modeling faces 5 evaluation limitations. These limitations are listed below.

- Impact on Top-Performing Systems. Marginal gains become difficult to measure once systems approach high baseline precision.

- Statistical Significance Issues. Small metric improvements do not indicate meaningful ranking improvement.

- Performance Ceiling Effects. Evaluation datasets limit measurable improvement once near-maximum scores are reached.

- Model Collapse and Measurement Error. Overfitting to benchmark datasets reduces generalization reliability.

- Lack of Future-Proofing. Static evaluation benchmarks fail to reflect evolving user intent patterns.

What Are the Methodological Limitations of Relevance Modeling? Relevance modeling contains 6 methodological limitations. These limitations are listed below.

- Binary Relevance Assumption. Traditional systems classify documents as relevant or non-relevant without modeling graded relevance.

- Lack of Marginal Relevance Modeling. Systems fail to penalize redundancy across ranked results.

- Assumptions in Standard Models. Probabilistic independence assumptions distort term correlation effects.

- Underlying User Need Assumption. Systems assume stable intent even when queries contain ambiguity.

- Data Collapse in User-Centered Research. Aggregating user judgments reduces granularity and masks contextual variance.

- Operationalization Challenges. Translating abstract relevance attributes into measurable variables introduces simplification bias.

What Are the Conceptual Limitations of Relevance Modeling? Relevance modeling includes 5 conceptual limitations. These limitations are listed below.

- Retrieval and Evaluation Conflation. Evaluation metrics treat retrieval as identical to measured performance.

- Conflicting Formal Measures. Different relevance metrics produce inconsistent ranking interpretations.

- Fuzzy Middle Region. Documents frequently fall into partially relevant states rather than binary extremes.

- Statistical Fantasy Risk. Excessive reliance on probability metrics creates an illusion of precision.

- Subjective Assessment Dependence. Human assessors introduce inconsistency and contextual bias.

What Are the Application Limitations of Relevance Modeling? Relevance modeling faces 8 application limitations across legal, medical, and risk management domains. These limitations are listed below.

- Risk Management Model Shortcomings. Complex risk models amplify structural uncertainty.

- Validation Challenges. Limited labeled data reduces external validation reliability.

- Over-Complexity Consequences. High-dimensional models reduce interpretability.

- Imprecision in Legal Contexts. Probabilistic estimates cannot represent legal certainty requirements.

- Dwarfing of Soft Variables. Quantitative scoring suppresses qualitative contextual factors.

- Potential Dehumanization Effects. Automated scoring reduces human interpretive oversight.

- Imperfect Biological Representation. Animal models fail to replicate full human symptom complexity.

- Low Predictive Power in Diagnostic Contexts. Certain diagnostic models demonstrate limited forward prediction reliability.

What Are the System Design Limitations of Relevance Modeling? Relevance modeling struggles when system design prioritizes ranking efficiency over contextual depth. Current IR system design emphasizes scalability and latency constraints. Speed-optimized ranking pipelines reduce computational complexity but limit interpretive richness. Relevance modeling functions as a probabilistic estimation framework, yet probabilistic estimation remains constrained by subjective labeling, structural assumptions, evaluation ceiling effects, and contextual variability across domains.

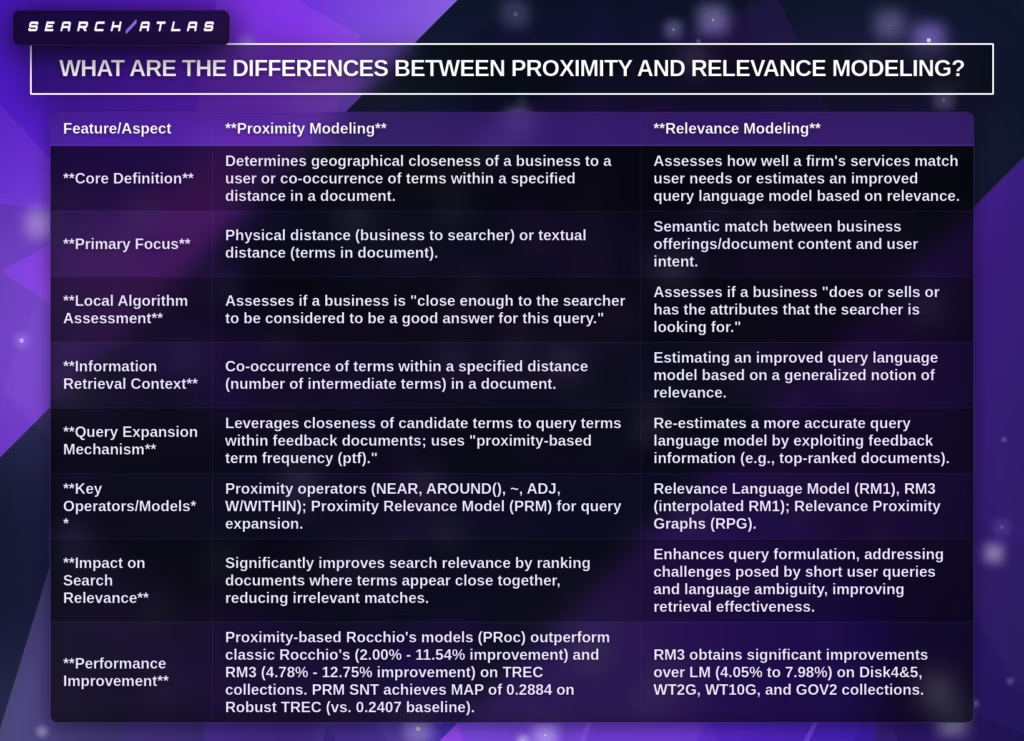

What Are the Differences Between Proximity and Relevance Modeling?

Proximity vs relevance modeling differs in signal logic, representation structure, ranking mechanics, computational design, and cost behavior inside local SEO ranking systems. Proximity vs relevance modeling operates on different primary signals where proximity evaluates physical distance, and relevance evaluates semantic intent alignment. local SEO ranking factors combine both modeling layers to determine the final ranking output.

What Are the Structural Differences in Signal Type and Representation? Proximity modeling uses distance-based signals derived from geographic coordinates, while relevance modeling uses intent-based signals derived from semantic entities and content vectors. Proximity modeling represents entities through latitude-longitude pairs and spatial indexes. Relevance modeling represents entities through inverted indexes and high-dimensional embeddings that encode contextual similarity.

What Are the Operational Differences in Matching and Index Structure? Proximity modeling applies geographic filtering over spatial indexes, while relevance modeling applies semantic matching over inverted indexes and embedding spaces. Proximity modeling filters candidates within a defined radius before scoring. Relevance modeling ranks candidates by computing similarity scores between query vectors and document vectors.

What Are the Differences in Computational Requirements and Latency? Proximity modeling requires lower semantic computation but higher coordinate recalculation frequency, while relevance modeling requires higher vector computation but stable coordinate independence. Proximity queries execute in approximately 2-20 ms using spatial indexes. Relevance scoring requires 15-80 ms, depending on embedding complexity and ranking pipeline depth.

What Are the Differences in Data Dependencies and Adaptability? Proximity modeling depends on user location data and static business coordinates, while relevance modeling depends on content signals, entity attributes, and behavioral feedback. Proximity signals update dynamically when user coordinates change. Relevance signals update dynamically when content structure, intent patterns, or entity relationships evolve.

What Are the Cost and Infrastructure Differences Between Proximity and Relevance Modeling?

Proximity modeling has a lower vector processing cost but a higher spatial query overhead, while relevance modeling has a higher model inference cost but scalable batch processing capability. The estimated cost per 1M proximity queries ranges from $5-$20 depending on spatial index efficiency. The estimated cost per 1M relevance modeling queries ranges from $20-$120 depending on embedding model size and hardware acceleration.

What Does the Comparative Structure of Proximity vs Relevance Modeling Look Like?

The comparison between proximity vs relevance modeling is structured below.

| Dimension | Proximity Modeling | Relevance Modeling |

|---|---|---|

| Signal Type | Distance | Intent |

| Representation Method | Location Data | Semantic Entities |

| Matching Approach | Geographic Filtering | Semantic Matching |

| Index Structure | Spatial Index | Inverted Index / Embeddings |

| Computational Requirements | Coordinate Calculation | Vector Similarity Computation |

| Latency | 2-20 ms | 15-80 ms |

| Data Dependencies | User Location | Content & Entity Signals |

| Adaptability | Dynamic to Movement | Dynamic to Intent & Content |

| Cost per 1M Queries | $5-$20 | $20-$120 |

Proximity vs relevance modeling defines two complementary ranking layers in local SEO ranking factors, where proximity filters candidates by distance and relevance orders candidates by semantic alignment.

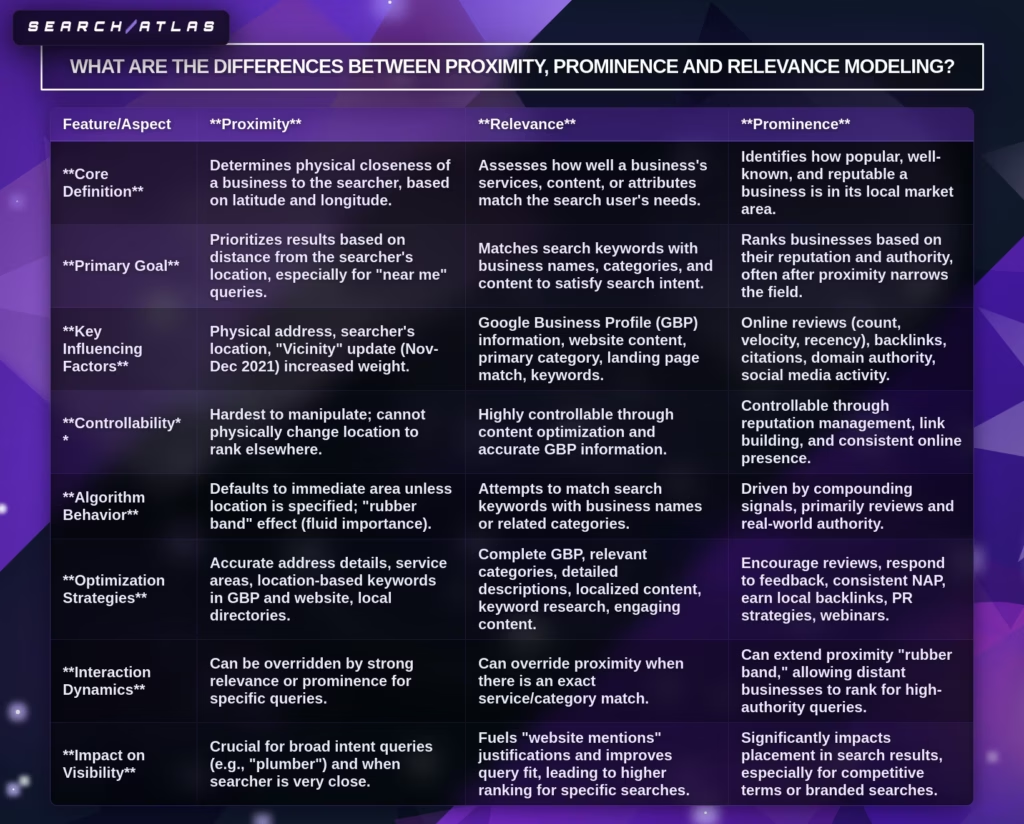

What Are the Differences Between Proximity, Prominence, and Relevance Modeling?

Proximity, prominence, and relevance modeling differ in signal type, data structure, ranking influence, computational design, and cost within local SEO ranking systems. Proximity modeling evaluates distance. Prominence modeling evaluates authority. Relevance modeling evaluates intent alignment. local SEO ranking systems combine all three layers to determine final ordering.

What Are the Differences Between Proximity, Prominence, and Relevance Modeling in Signal Type? Proximity modeling uses distance signals, prominence modeling uses authority signals, and relevance modeling uses intent signals. Distance determines geographic eligibility. Authority determines trust weight. Intent determines contextual match strength.

What are the differences between proximity, prominence, and relevance Modeling in the representation method? Proximity modeling represents entities through location data, prominence modeling represents entities through authority graphs, and relevance modeling represents entities through semantic embeddings. Location data stores latitude-longitude coordinates. Authority graphs store backlink, review, and mention relationships. Semantic embeddings store contextual similarity vectors.

What are the differences between proximity, prominence, and Relevance Modeling in the matching approach? Proximity modeling applies geographic filtering, prominence modeling applies authority scoring, and relevance modeling applies semantic matching. Geographic filtering removes distant entities. Authority scoring boosts trusted entities. Semantic matching ranks intent-aligned entities.

What Are the Differences Between Proximity, Prominence, and Relevance Modeling in Index Structure? Proximity modeling uses spatial indexes, prominence modeling uses link and entity graphs, and relevance modeling uses inverted indexes and embedding indexes. Spatial indexes accelerate coordinate lookup. Graph structures compute authority propagation. Inverted and embedding indexes compute textual similarity.

What Are the Differences Between Proximity, Prominence, and Relevance Modeling in Core Signals? Proximity modeling relies on GPS and proximity radius, prominence modeling relies on reviews, backlinks, mentions, and keyword prominence, and relevance modeling relies on content, keywords, and entity alignment. Keyword prominence inside content increases prominence weight when authority signals confirm topical strength.

What Are the Differences Between Proximity, Prominence, and Relevance Modeling in Computational Requirements and Latency? Proximity modeling requires coordinate distance calculation, prominence modeling requires graph scoring computation, and relevance modeling requires vector similarity computation. Proximity latency ranges from 2-20 ms per query. Prominence scoring latency ranges from 10 to 40 ms, depending on graph depth. Relevance modeling latency ranges from 15 to 80 ms, depending on embedding complexity.

What Are the Differences Between Proximity, Prominence, and Relevance Modeling in Data Dependencies and Adaptability? Proximity modeling depends on user location data, prominence modeling depends on external authority signals, and relevance modeling depends on content and contextual signals. Proximity signals update dynamically with device movement. Prominence signals update semi-dynamically as reviews and backlinks accumulate. Relevance signals update dynamically with content revision and intent shifts.

What Are the Differences Between Proximity, Prominence, and Relevance Modeling in Ranking Influence and Cost? Proximity modeling acts as a filtering layer, prominence modeling acts as a boosting layer, and relevance modeling acts as a primary ranking layer. Proximity determines eligibility within radius boundaries. Prominence increases trust-weighted ranking strength. Relevance determines final semantic ordering. The estimated cost per 1M queries ranges from $5-$20 for proximity, $10-$60 for prominence graph scoring, and $20-$120 for relevance vector scoring.

What Does the Structured Comparison Table of Proximity, Prominence, and Relevance Modeling Look Like?

The structured comparison is shown below.

| Dimension | Proximity Modeling | Prominence Modeling | Relevance Modeling |

|---|---|---|---|

| Signal Type | Distance | Authority | Intent |

| Representation Method | Location Data | Link / Entity Graph | Semantic Entities |

| Matching Approach | Geographic Filtering | Authority Scoring | Semantic Matching |

| Index Structure | Spatial Index | Link / Entity Graph | Inverted Index / Embeddings |

| Core Signals | GPS, Proximity Radius | Reviews, Backlinks, Mentions, Keyword Prominence | Content, Keywords, Entities |

| Computational Requirement | Coordinate Calculation | Graph Propagation Scoring | Vector Similarity Computation |

| Latency | 2-20 ms | 10-40 ms | 15-80 ms |

| Data Dependency | User Location | External Authority Signals | Content & Context |

| Adaptability | Dynamic | Semi-Dynamic | Dynamic |

| Influence on Ranking | Filtering | Boosting | Primary Ranking |

| Cost per 1M Queries | $5-$20 | $10-$60 | $20-$120 |

Proximity, prominence, and relevance modeling operate as layered ranking systems inside local SEO where distance controls eligibility, authority adjusts trust weight, and intent alignment determines semantic order.

How do These Three Factors Work Together?

Proximity, prominence, and relevance work together in a layered ranking sequence where proximity filters candidates, relevance orders candidates by intent alignment, and prominence boosts authority strength among qualified results. local SEO ranking systems combine these three modeling layers to determine the final visibility position.

How do these three factors work together in Ranking Flow? Proximity operates first as a geographic eligibility filter. Proximity removes entities outside the defined radius using coordinate-based evaluation. Eligible entities proceed to semantic evaluation. Relevance then ranks eligible entities according to intent alignment using vector similarity and keyword distribution logic. Prominence then adjusts ranking weight based on authority signals, including reviews, backlinks, and keyword prominence strength.

How do these three factors work together in Weight Distribution? Each factor contributes a different functional weight inside the ranking pipeline. Proximity contributes to spatial filtering weight. Relevance contributes primary ordering weight. Prominence contributes authority amplification weight. local SEO ranking factors distribute influence dynamically based on query type and device context.

How do these three factors work together in Conflict Scenarios? Proximity overrides prominence when the distance difference is extreme. A business located within 0.5 miles often outranks a highly prominent business located 8 miles away. Relevance overrides proximity when query specificity increases. A highly intent-aligned listing outranks a closer but loosely related listing.

How do these three factors work together in Mobile Environments? Mobile search environments amplify proximity weighting while preserving relevance and prominence evaluation. GPS-based recalculation continuously updates the proximity score. Relevance maintains semantic filtering stability. Prominence maintains trust calibration across repeated searches.

How do these three factors work together in the Final Ranking Calculation? Final ranking calculation multiplies proximity eligibility, relevance probability, and prominence authority into a composite score. Composite scoring determines Local Pack ordering. Filtering, ordering, and boosting functions operate sequentially to produce the final ranked output.

How Google Determines Local Ranking Relevance, Distance, and Prominence?

Google determines local ranking relevance, distance, and prominence by combining semantic query alignment, geographic proximity calculation, and authority signal evaluation into a composite ranking score. Google confirms that local results are primarily based on relevance, distance, and prominence. These three factors form the foundation of local SEO ranking systems.

How Google Determines Local Ranking Relevance? Google determines local ranking relevance by evaluating how well a GBP and associated website content match the user’s search query. Relevance evaluation includes primary and secondary business categories, business descriptions, service listings, website titles, and on-page keyword alignment. Complete and accurate profile information increases relevance strength because semantic clarity improves query matching probability.

How does Google determine local ranking distance? Google determines local ranking distance by calculating the physical separation between the searcher’s location and the business address using latitude and longitude coordinates. Google uses device location data or the geographic term specified in the query to compute proximity. Distance acts as a geographic filter where closer businesses receive higher eligibility weighting, particularly for “near me” searches.

How Google Determines Local Ranking Prominence? Google determines local ranking prominence by measuring how well-known and authoritative a business is across online and offline sources. Prominence evaluation includes review volume, review quality, backlinks, citations, brand mentions, and overall organic visibility. Strong authority signals increase ranking boost strength within the eligible candidate set.

How Google Determines Local Ranking (Relevance, Distance, Prominence, and Support) in Practice? Google applies relevance as semantic alignment, distance as geographic filtering, and prominence as authority boosting within a unified ranking pipeline. Distance filters candidates first. Relevance orders candidates according to intent alignment. Prominence adjusts final ordering through authority weighting.

How Google Determines Local Ranking Through Core Signals? The operational structure is summarized below.

| Factor | Primary Signal | Core Data Source | Functional Role |

|---|---|---|---|

| Relevance | Intent Alignment | Google Business Profile categories, descriptions, and website keywords | Primary ranking order |

| Distance | Geographic Proximity | GPS coordinates, query location | Eligibility filtering |

| Prominence | Authority Signals | Reviews, backlinks, citations, brand mentions | Ranking boost adjustment |

How Google Determines Local Ranking for Mobile Users? Google increases proximity weighting for mobile searches while maintaining relevance and prominence evaluation. 76% of mobile local searches result in contact within 24 hours, and 28% result in purchase. Mobile search behavior increases the impact of geographic proximity on ranking visibility.

How does Google determine local ranking in Optimization Strategy? Businesses influence relevance through complete GBP data, influence prominence through review acquisition and backlink growth, and cannot directly control distance. NAP consistency across 50+ directories strengthens legitimacy verification. Spam tactics lead to listing suspension and ranking loss.

How to Optimize for Proximity and Relevance in Local SEO?

Businesses optimize for proximity and relevance in local SEO by aligning location accuracy, business data, and content structure with how local search systems evaluate distance, intent, and authority. Local search systems rank results based on geographic proximity, semantic relevance, and prominence signals. Effective optimization increases visibility inside the Local Pack, map results, and location-based queries instead of relying only on traditional organic rankings.

The 8 methods for businesses to optimize for proximity and relevance in local SEO are listed below.

- Ensure NAP consistency across all directories and listings.

- Verify and fully optimize Google Business Profile configuration.

- Create unique landing pages for each business location.

- Align content with geo-specific and intent-driven keywords.

- Implement structured data for local entities and services.

- Encourage and manage customer reviews with geographic signals.

- Build local backlinks and citation authority.

- Monitor ranking radius, engagement, and visibility metrics.

1. Ensure NAP Consistency Across All Directories and Listings

Ensuring NAP consistency across all directories and listings strengthens proximity signals by maintaining accurate geographic identity. Local search systems compare business name, address, and phone number across sources to validate legitimacy and location accuracy. Consistent data increases trust and prevents ranking suppression caused by conflicting information. Businesses apply this method by auditing listings across directories, correcting inconsistencies, and standardizing formatting. A practical takeaway involves maintaining identical NAP data across at least 50 directories to reinforce location accuracy signals.

2. Verify and Fully Optimize Google Business Profile Configuration

Verifying and optimizing Google Business Profile configuration strengthens both proximity and relevance by defining how a business appears in local results. GBP acts as the primary data source for location, category, and service information. Complete profiles increase visibility because search systems rely on structured GBP data to match queries with businesses. Businesses apply this method by selecting accurate categories, defining service areas, and completing all profile fields. A practical takeaway involves adding services, attributes, and descriptions that match real user queries to increase relevance alignment.

3. Create Unique Landing Pages for Each Business Location

Creating unique landing pages for each business location strengthens proximity by reinforcing geographic specificity at the page level. Local search systems evaluate location pages to determine which business location matches a query. Duplicate pages weaken signals because they create ambiguity about which location is most relevant. Businesses apply this method by building one page per address with unique content, NAP data, and local context. A practical takeaway involves including maps, directions, and localized content that reflect the specific area served.

4. Align Content With Geo-Specific and Intent-Driven Keywords

Aligning content with geo-specific and intent-driven keywords strengthens relevance by matching search queries with clear service and location signals. Local search systems interpret queries based on intent, which requires content to reflect both service type and geographic context. Content that includes city names, neighborhoods, and service terms increases matching accuracy. Businesses apply this method by integrating geo-modifiers into headings, titles, and body content without repetition bias. A practical takeaway involves creating pages that target one service and one location per page.

5. Implement Structured Data for Local Entities and Services